Official statement

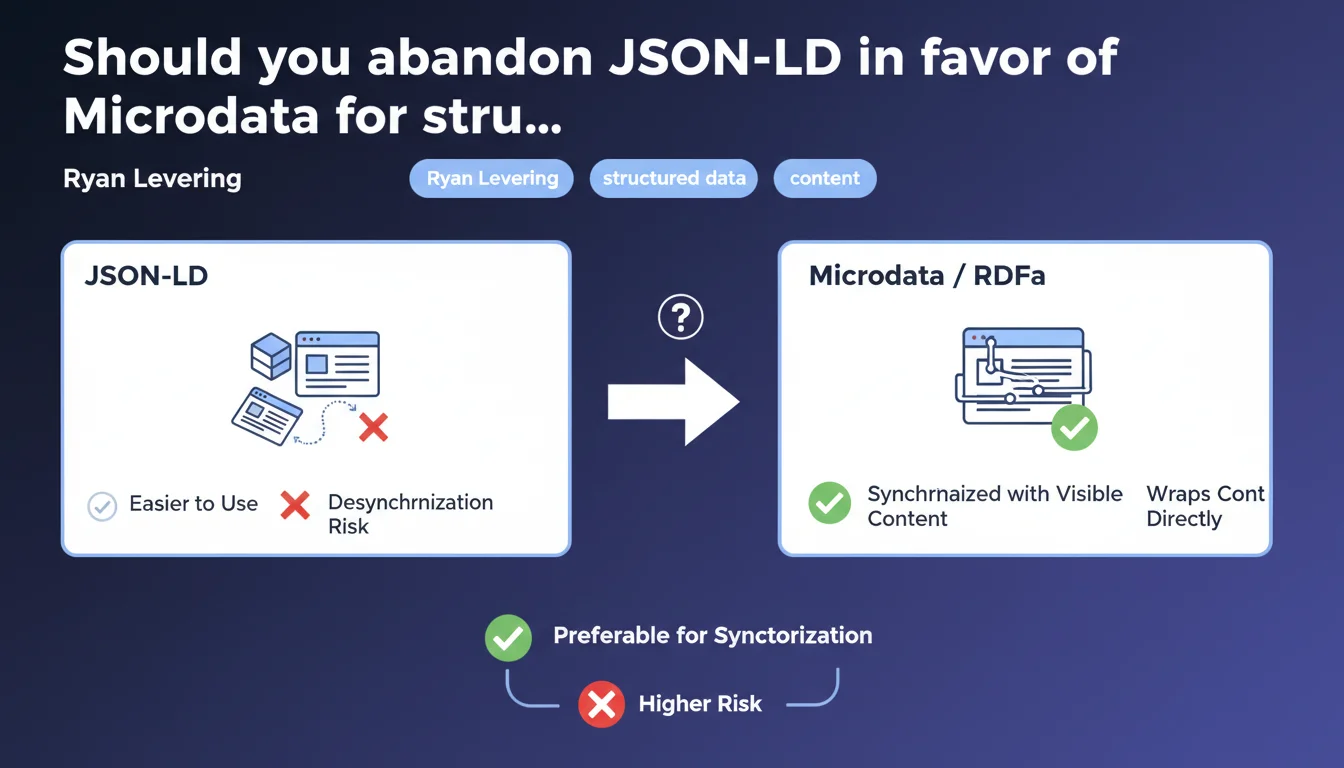

Google acknowledges that Microdata and RDFa stay better synchronized with visible content than JSON-LD. JSON-LD is simpler to implement, but it carries a higher risk that the structured data drifts away from the content actually displayed on the page. The admission puts years of official recommendations favoring JSON-LD into perspective.

What you need to understand

Why is Google bringing up this synchronization issue?

Desynchronization between structured data and visible content creates a reliability problem for search engines. When information encoded in JSON-LD no longer matches the displayed content, Google may index outdated or incorrect data.

Microdata and RDFa wrap the visible HTML elements directly. When the structured value is the element's own text, changing a product price on the page changes the marked-up value too, so coherence is mechanical (a value placed in a hidden content attribute loses that guarantee). JSON-LD, on the other hand, lives in a separate <script type="application/ld+json"> block. Nothing forces the developer to update both at the same time.
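The difference can be illustrated with a minimal Python sketch using only the standard library. The two page fragments, the class="price" attribute and the extract_prices helper are assumptions made up for this example, not markup taken from Google's documentation.

```python
import json
from html.parser import HTMLParser

# Microdata: the itemprop attribute sits on the element whose text visitors read.
MICRODATA_PAGE = """
<div itemscope itemtype="https://schema.org/Offer">
  <span itemprop="price">149.00</span>
  <meta itemprop="priceCurrency" content="EUR">
</div>
"""

# JSON-LD: the visible price and the structured price are two separate strings,
# and here they have already drifted apart.
JSONLD_PAGE = """
<span class="price">149.00</span>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Offer", "price": "99.00", "priceCurrency": "EUR"}
</script>
"""


class PriceParser(HTMLParser):
    """Collects the price as seen by visitors, by Microdata and by JSON-LD."""

    def __init__(self):
        super().__init__()
        self.found = {}        # source name -> price string
        self._mode = None      # which kind of element is being captured
        self._end_tag = None
        self._buf = []

    def handle_starttag(self, tag, attrs):
        if self._mode is not None:
            return
        a = dict(attrs)
        if tag == "script" and a.get("type") == "application/ld+json":
            self._mode = "jsonld"
        elif a.get("itemprop") == "price":
            self._mode = "microdata"
        elif a.get("class") == "price":  # hypothetical class name for the visible price
            self._mode = "visible"
        if self._mode:
            self._end_tag = tag
            self._buf = []

    def handle_data(self, data):
        if self._mode:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if self._mode is None or tag != self._end_tag:
            return
        text = "".join(self._buf).strip()
        if self._mode == "jsonld":
            self.found["jsonld"] = json.loads(text)["price"]
        elif self._mode == "microdata":
            # One text node serves as both the visible and the structured value.
            self.found["visible"] = text
            self.found["microdata"] = text
        else:
            self.found["visible"] = text
        self._mode = None


def extract_prices(html: str) -> dict:
    parser = PriceParser()
    parser.feed(html)
    parser.close()
    return parser.found


print(extract_prices(MICRODATA_PAGE))
print(extract_prices(JSONLD_PAGE))
```

In the Microdata fragment there is only one string to edit, so the two values cannot disagree. In the JSON-LD fragment the same edit has to be made twice.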

Does this statement contradict official recommendations?

Not exactly. Google continues to recommend JSON-LD for its ease of implementation. Official documentation has positioned it as the preferred format since 2016.

What Ryan Levering reveals is that this ease comes at a cost: a structural risk of desynchronization. Google accepts this tradeoff but rarely articulates it as clearly. For sites with frequently updated dynamic content, this flaw can generate critical inconsistencies.

Concretely, what types of desynchronization are observed?

The most common cases concern e-commerce sites: prices modified in the database but not in the JSON-LD, outdated product availability, stale customer ratings. The result is rich snippets that display incorrect information.

News sites and blogs encounter the same issue with publication dates, authors, or content modified after publishing. JSON-LD remains frozen on the initial version if no one remembers to regenerate it.

- Microdata/RDFa: mechanical synchronization because the markup is integrated into the visible HTML

- JSON-LD: simpler to implement but requires rigorous maintenance

- Risk increases with content update frequency

- Google still favors JSON-LD despite this acknowledged weakness

- Desynchronization can lead to incorrect rich snippets

SEO Expert opinion

Is this statement consistent with field observations?

Absolutely. In audits, we regularly detect gaps between JSON-LD and visible content, and rarely with Microdata. CMSs and frameworks often generate JSON-LD at build time or serve it from a cache, without regenerating it each time the content changes.

What stands out is that Google finally articulates a known issue that was never officially documented. Guidelines emphasize structured data coherence without ever pointing out this architectural fragility of JSON-LD. [To verify]: Does Google have internal metrics measuring this desynchronization rate by format?

Why does Google continue recommending JSON-LD despite this flaw?

Because adoption rate trumps technical perfection. JSON-LD democratized structured data for developers unfamiliar with SEO. A copy-pasted <script> block remains more accessible than a complete HTML refactoring in Microdata.

Google is trading off markup volume (favored by JSON-LD) against markup reliability (favored by Microdata). In this trade-off, volume wins. Let's be honest: the majority of sites lack the resources to properly implement Microdata across thousands of pages.

In which cases does this recommendation change the game?

For sites with ultra-dynamic content (marketplaces, price aggregators, job listing sites), desynchronization becomes critical. A price shown as €99 in the snippet but €149 on the detail page destroys trust and hurts conversion rates.

If your editorial workflow clearly separates content creation from technical maintenance, your JSON-LD will quickly go stale. Microdata forces simultaneous updates: it is restrictive but safe.

Practical impact and recommendations

What should you concretely do on an existing JSON-LD site?

Don't panic: there is no need to rewrite everything in Microdata tomorrow. Start by auditing coherence between your JSON-LD and visible content. Focus on strategic pages: product pages, featured articles, service pages.

Automate regeneration. Each time a price, stock level, author or date is modified, the JSON-LD must be regenerated from the same source of truth as the visible content. If your CMS doesn't handle this natively, create a server-side hook or trigger.
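One way to make regeneration automatic is to render the visible HTML and the JSON-LD from the same record inside a single save hook. The sketch below assumes a hypothetical Product record, a plain dict standing in for the page cache, and an on_product_saved hook name; none of these come from a specific CMS.

```python
import json
from dataclasses import dataclass
from html import escape


@dataclass
class Product:
    """Hypothetical content record; a real CMS model will differ."""
    name: str
    price: str
    currency: str
    in_stock: bool


def build_jsonld(product: Product) -> dict:
    """Build the structured data from the record, never from a stored copy."""
    availability = "InStock" if product.in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": product.name,
        "offers": {
            "@type": "Offer",
            "price": product.price,
            "priceCurrency": product.currency,
            "availability": f"https://schema.org/{availability}",
        },
    }


def render_product_page(product: Product) -> str:
    """Render the visible markup and the JSON-LD in one pass."""
    # Escape "</" so the JSON cannot close the <script> element early.
    payload = json.dumps(build_jsonld(product)).replace("</", "<\\/")
    return (
        f"<h1>{escape(product.name)}</h1>\n"
        f'<span class="price">{escape(product.price)} {escape(product.currency)}</span>\n'
        f'<script type="application/ld+json">{payload}</script>'
    )


def on_product_saved(product: Product, page_cache: dict, url: str) -> None:
    """Hypothetical save hook: overwrite the cached page on every change."""
    page_cache[url] = render_product_page(product)


cache = {}
lamp = Product(name="Desk lamp", price="149.00", currency="EUR", in_stock=True)
on_product_saved(lamp, cache, "/desk-lamp")

lamp.price = "99.00"
on_product_saved(lamp, cache, "/desk-lamp")  # both copies of the price change together
```

Because both outputs come from one function call, there is no code path that updates the visible price while leaving the old value in the script block.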

What mistakes should you absolutely avoid?

Never maintain JSON-LD manually in source code. Once volume exceeds a few dozen pages, it's unmanageable. Desynchronization becomes inevitable.

Also avoid duplicating structured data by mixing JSON-LD and Microdata on the same elements. If the two copies disagree, you cannot predict which one Google will use. Choose one format per content type and stick with it.
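Duplicate markup can be spotted with a small audit script. The following sketch, written against Python's standard html.parser, lists the schema.org types that a page declares in both formats. It is deliberately simplified: it only reads top-level JSON-LD nodes (no @graph or nested objects) and treats each itemtype as a single URL. The types_in_both_formats name is made up for this example.

```python
import json
from html.parser import HTMLParser


class TypeCollector(HTMLParser):
    """Collects schema.org type names declared via Microdata and via JSON-LD."""

    def __init__(self):
        super().__init__()
        self.microdata_types = set()
        self.jsonld_types = set()
        self._in_jsonld = False
        self._buf = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "script" and a.get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buf = []
        elif a.get("itemtype"):
            # "https://schema.org/Product" -> "Product"
            self.microdata_types.add(a["itemtype"].rstrip("/").rsplit("/", 1)[-1])

    def handle_data(self, data):
        if self._in_jsonld:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            doc = json.loads("".join(self._buf))
            # Simplification: only top-level nodes are inspected.
            for node in doc if isinstance(doc, list) else [doc]:
                declared = node.get("@type", [])
                if isinstance(declared, str):
                    declared = [declared]
                self.jsonld_types.update(declared)


def types_in_both_formats(html: str) -> set:
    """Return the type names that appear in Microdata and in JSON-LD."""
    collector = TypeCollector()
    collector.feed(html)
    collector.close()
    return collector.microdata_types & collector.jsonld_types


DUPLICATED = """
<div itemscope itemtype="https://schema.org/Product"><span itemprop="name">Desk lamp</span></div>
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Product", "name": "Desk lamp"}</script>
"""
print(types_in_both_formats(DUPLICATED))
```

A non-empty result does not prove a conflict, only that the same type is described twice and deserves a manual look.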

How do you verify that your implementation stays synchronized?

Use the rich result reports in Google Search Console to catch the markup problems Google itself reports. Complement them with regular checks in the Rich Results Test on a sample of recently updated pages.

For monitoring, run an automated script that compares the values extracted from the JSON-LD with those in the visible DOM. Any divergence that persists beyond 24 to 48 hours signals a problem in your generation pipeline.
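The time-window part of that monitoring rule can be sketched as follows, assuming the two prices have already been extracted from the page by some other step. The 48-hour grace period, the dot as decimal separator and the record_check name are assumptions chosen for this example.

```python
import re
from datetime import datetime, timedelta
from decimal import Decimal

# Assumption: alert at the upper bound of the 24 to 48 hour window.
GRACE_PERIOD = timedelta(hours=48)


def to_decimal(raw: str) -> Decimal:
    """Keep digits and the decimal point so that "€149.00" equals "149"."""
    return Decimal(re.sub(r"[^\d.]", "", raw))


def record_check(first_seen: dict, url: str, jsonld_price: str,
                 visible_price: str, now: datetime) -> bool:
    """Update mismatch tracking for one page; return True when an alert is due."""
    if to_decimal(jsonld_price) == to_decimal(visible_price):
        first_seen.pop(url, None)  # back in sync: forget any earlier mismatch
        return False
    started = first_seen.setdefault(url, now)  # remember when the gap first appeared
    return now - started > GRACE_PERIOD


first_seen = {}
t0 = datetime(2024, 1, 1, 9, 0)

# First detection: the gap is noted, but it is too early to alert.
print(record_check(first_seen, "/desk-lamp", "99.00", "€149.00", t0))
# Still diverging 49 hours later: the pipeline is probably broken.
print(record_check(first_seen, "/desk-lamp", "99.00", "€149.00", t0 + timedelta(hours=49)))
```

Tracking the first detection time rather than alerting immediately leaves room for normal cache expiry, which is why a short-lived mismatch is tolerated.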

- Audit JSON-LD vs visible content coherence on strategic pages

- Automate JSON-LD regeneration on each content modification

- Never maintain structured data manually

- Avoid duplicate JSON-LD + Microdata on the same data

- Monitor inconsistencies via Search Console and Rich Results Test

- For ultra-dynamic content, consider Microdata over JSON-LD

- Implement an automated validation script comparing JSON-LD and DOM

❓ Frequently Asked Questions

Does Google penalize sites whose JSON-LD is out of sync?

Can you mix JSON-LD and Microdata on the same site?

Is Microdata more complex to implement than JSON-LD?

How do you automate JSON-LD updates in WordPress?

Does RDFa offer the same advantages as Microdata?

🎥 From the same video 13

Other SEO insights extracted from this same Google Search Central video · published on 07/04/2022
