Official statement

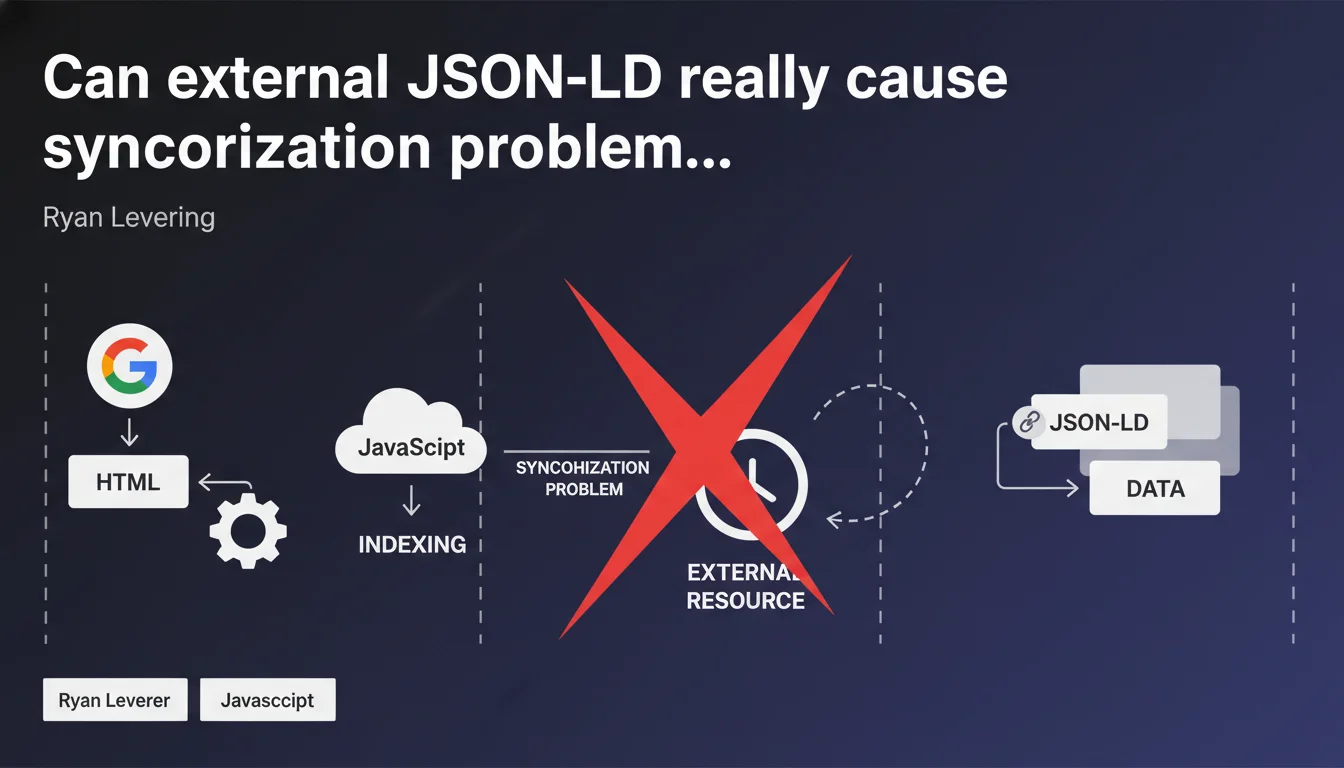

Ryan Levering reveals that JSON-LD loaded from an external resource can create timing delays during Google's crawl. If the JavaScript carrying the JSON-LD isn't downloaded simultaneously with the HTML, the bot risks indexing a page without its structured data — or worse, with outdated data.

What you need to understand

Why is Google suddenly talking about a "synchronization problem"?

The classic JSON-LD implementation involves inserting the script directly into the page's HTML. The crawler receives everything at once: markup, content, structured data. No friction.

But some sites — often via tag management systems or JS frameworks — load the JSON-LD from an external file or through dynamic injection. In this case, Google must first parse the HTML, discover that an external script exists, then make a second request to retrieve it. Between the two, a timing delay appears. And that delay is what Levering calls a "synchronization problem".

What is the concrete risk for indexing?

If the bot crawls the page before the JavaScript that injects or updates the JSON-LD has been downloaded and executed, three outcomes are possible:

- The JSON-LD is absent from the version analyzed by Google

- The JSON-LD is present but contains outdated data (case of SPAs that update the schema on the client side)

- The final rendering differs between what the user sees and what Google indexes, creating a semantic understanding gap

Google prioritizes speed. If the external resource takes too long to load, the crawler may simply move on without waiting.

How does this differ from classic inline JSON-LD?

With inline JSON-LD, everything is served all at once. The crawler retrieves the complete HTML, parses the <script type="application/ld+json"> tags, and indexes. Zero additional requests, zero latency.

With external or JS-injected JSON-LD, the bot must handle asynchronicity. And even though Google is now capable of executing JavaScript, nothing guarantees that it will wait indefinitely for a third-party script to load. That's the whole nuance of this statement.
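To make the difference concrete, here is a minimal sketch of what is available in the first HTML response when no script is executed. The function name `extractInlineJsonLd`, both sample pages, and the `/js/schema-loader.js` path are hypothetical, and the regex is a simplification of real HTML parsing. It does not describe how Googlebot actually works.

```javascript
// Collects the JSON-LD blocks present in a raw HTML string,
// i.e. without executing any JavaScript.
function extractInlineJsonLd(html) {
  const pattern =
    /<script\b[^>]*type=["']application\/ld\+json["'][^>]*>([\s\S]*?)<\/script>/gi;
  const blocks = [];
  for (const match of html.matchAll(pattern)) {
    try {
      blocks.push(JSON.parse(match[1]));
    } catch {
      // Ignore blocks whose content is not valid JSON.
    }
  }
  return blocks;
}

// Hypothetical page with inline JSON-LD in the initial HTML.
const inlinePage = `<html><head>
<script type="application/ld+json">{"@context":"https://schema.org","@type":"Article","headline":"Example"}</script>
</head><body></body></html>`;

// Hypothetical page whose JSON-LD is injected later by an external script.
const externalPage = `<html><head>
<script src="/js/schema-loader.js"></script>
</head><body></body></html>`;

console.log(extractInlineJsonLd(inlinePage).length);   // 1
console.log(extractInlineJsonLd(externalPage).length); // 0
```

In the second case the structured data only exists once the external script has been fetched and run, which is exactly the step that may or may not happen in time.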

SEO Expert opinion

Is this statement consistent with field observations?

Yes, and it's even quite transparent on Google's part. On sites we have tested where the JSON-LD is loaded via Google Tag Manager (GTM) or external CDNs, we regularly find that Search Console reports no structured data even though it is present in the client-side DOM.

Some practitioners have also noticed rich results (FAQ, Breadcrumb, Product) that disappear and reappear in the SERP, a typical sign of incomplete or poorly synchronized crawling. Let's be honest: Google crawls fast, and if your JSON-LD arrives 500 ms too late, it risks missing the window.

In which cases doesn't this rule apply?

If your JSON-LD is served inline in the first HTML returned by the server, this issue doesn't concern you. You're in the nominal case that Google handles without friction.

On the other hand, if you use a JS framework (React, Vue, or Next.js in client-side rendering mode without proper SSR) or a tag manager to inject the schema after the first paint, you're potentially exposed. [To verify]: Google says its crawler waits "a certain time" before rendering the page, but no official duration has been communicated. It's vague, and intentionally so.

Should you completely abandon external JSON-LD?

No. But you must understand the trade-off. If your architecture requires JS to generate the schema (e.g. product data pulled from a client-side API), make sure at minimum that:

- The script loads with high priority (for example via a preload hint, and without being deferred behind non-essential scripts)

- SSR rendering or pre-rendering generates the JSON-LD before sending to the client

- You regularly validate with the Rich Results Test and Search Console

The risk isn't systematic, but it exists. And Levering says it plainly: synchronization can fail. It's up to you to ensure it doesn't.

Practical impact and recommendations

What exactly should you do to avoid this problem?

The most robust solution remains server-side injection. Generate your JSON-LD during the build or SSR render, and insert it directly into the HTML before it's sent to the client. No asynchronous request, no delay.
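As a rough sketch of that approach, the following serializes a schema object on the server and places it in the HTML before the response is sent. The names `renderJsonLdTag` and `injectIntoHead`, the product data, and the template are all hypothetical. A real SSR framework will have its own mechanism for adding tags to the head.

```javascript
// Serializes a schema object into an inline JSON-LD <script> tag.
// "<" is escaped so a value containing "</script>" cannot close the tag early;
// JSON parsers decode \u003c back to "<".
function renderJsonLdTag(schema) {
  const json = JSON.stringify(schema).replace(/</g, "\\u003c");
  return `<script type="application/ld+json">${json}</script>`;
}

// Inserts the tag just before </head> in the server-rendered HTML.
function injectIntoHead(html, tag) {
  if (!html.includes("</head>")) {
    throw new Error("No </head> found in template");
  }
  // A function replacement avoids special "$" patterns in the tag content.
  return html.replace("</head>", () => `${tag}</head>`);
}

// Hypothetical product data and page template.
const product = {
  "@context": "https://schema.org",
  "@type": "Product",
  name: "Example <b>shoe</b>",
};
const template = "<html><head><title>Shoe</title></head><body></body></html>";

const page = injectIntoHead(template, renderJsonLdTag(product));
console.log(page);
```

Because the tag is part of the first response, the crawler needs no second request and no script execution to see it.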

If you use a classic CMS (WordPress, Shopify, PrestaShop), verify that your SEO plugins (on WordPress, for example, Yoast, Rank Math, or SEOPress) generate the schema inline in the page source. This is normally the default, but some themes or extensions may move it.

What mistakes should you absolutely avoid?

- Don't load JSON-LD from an external script hosted on a third-party CDN without latency control

- Don't rely solely on Google Tag Manager to inject critical structured data (Product, Article, FAQ)

- Don't assume that Google will wait "long enough" — it won't always

- Don't forget to test the crawled version via the URL Inspection Tool in Search Console, not just in your browser

And above all: don't make the mistake of believing that JSON-LD that "displays well in client source code" will necessarily be seen by Google. Just because your DevTools shows it doesn't mean the bot retrieved it at the right time.
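One way to spot this gap locally is to compare the JSON-LD found in the raw server response with the JSON-LD found in the rendered DOM. The sketch below assumes you have already collected and parsed both sets of blocks. The function names and sample data are hypothetical, and the result only flags schema types that depend on JavaScript, not what Google actually indexed.

```javascript
// Returns the set of @type values found in a list of parsed JSON-LD objects.
// Handles top-level arrays, @graph containers, and array-valued @type.
function collectTypes(blocks) {
  const types = new Set();
  const visit = (node) => {
    if (Array.isArray(node)) {
      node.forEach(visit);
      return;
    }
    if (node === null || typeof node !== "object") return;
    const type = node["@type"];
    if (typeof type === "string") types.add(type);
    else if (Array.isArray(type)) type.forEach((t) => types.add(t));
    if (Array.isArray(node["@graph"])) visit(node["@graph"]);
  };
  visit(blocks);
  return types;
}

// Types present after rendering but absent from the initial HTML.
// These depend on JavaScript and are the ones exposed to timing issues.
function typesOnlyAfterRender(rawBlocks, renderedBlocks) {
  const raw = collectTypes(rawBlocks);
  return [...collectTypes(renderedBlocks)].filter((t) => !raw.has(t)).sort();
}

// Hypothetical data: what the server returned vs. what DevTools shows.
const rawBlocks = [{ "@type": "BreadcrumbList" }];
const renderedBlocks = [
  { "@type": "BreadcrumbList" },
  { "@graph": [{ "@type": "Product" }, { "@type": "FAQPage" }] },
];

console.log(typesOnlyAfterRender(rawBlocks, renderedBlocks));
// [ 'FAQPage', 'Product' ]
```

An empty result means every schema type is already in the initial HTML, which is the nominal case described earlier.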

How do you verify that your site is compliant?

Use the Rich Results Test with a live URL rather than a pasted code snippet. This makes Google fetch and render your page, and shows you what it sees.

Then compare with what Search Console reports in the "Enhancements" section. If pages that are valid in testing aren't detected in production, you likely have a timing problem.

The best practice: inline JSON-LD, generated server-side, in the initial HTML. If your current architecture doesn't easily allow this — particularly on complex JS stacks or e-commerce environments with heavy personalization — this type of optimization can quickly become technical. In that case, calling on an SEO-specialized agency allows you to thoroughly audit your rendering architecture and implement a structured data integration strategy tailored to your stack, without risk of regression.

❓ Frequently Asked Questions

Is JSON-LD loaded via Google Tag Manager affected by this problem?

How long does Google wait before rendering a page with JavaScript?

Does this problem also affect Microdata and RDFa?

Can this problem be detected in Search Console?

Should you completely abandon JS injection of JSON-LD?

🎥 From the same video 13

Other SEO insights extracted from this same Google Search Central video · published on 07/04/2022
