Official statement

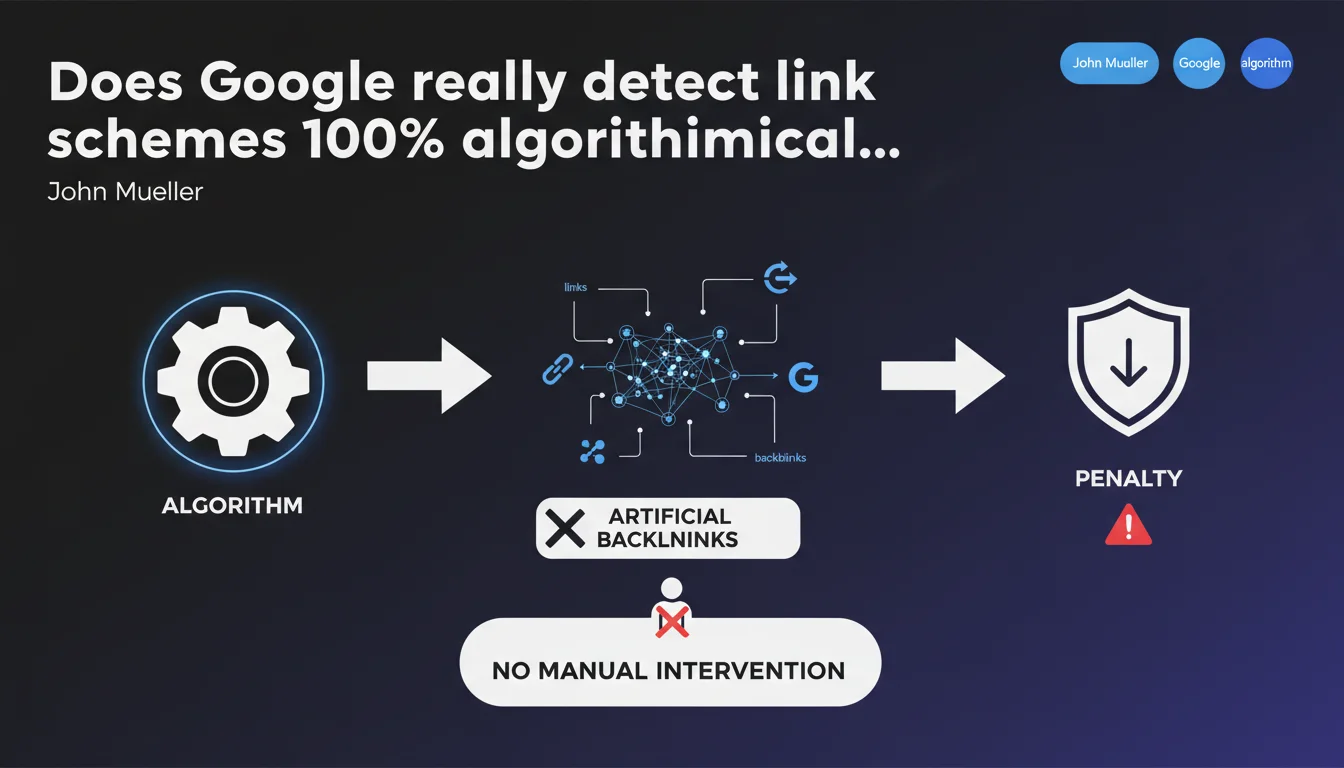

Google claims that the detection and penalization of artificial backlinks are now handled by algorithms, with no manual intervention. In concrete terms, this means link schemes are supposed to be identified automatically, without a quality rater manually analyzing your link profile. However, this statement raises questions about the real precision of these algorithms and the persistent existence of manual actions in certain cases.

What you need to understand

What does algorithmic detection of link schemes really mean?

When Google talks about algorithmic detection, it claims that its automated systems constantly analyze link profiles to identify suspicious patterns. These algorithms scrutinize acquisition velocity, anchor diversity, quality of referring domains, and a multitude of other signals.

Unlike a manual action, where a human reviewer from the Search Quality Team examines your site and a notification appears in Search Console, the algorithmic approach means that penalties or devaluations occur without explicit warning. Your site loses rankings, but no message explains why.

Does this mean manual actions have disappeared?

Not exactly. Google's John Mueller clarifies that detection is algorithmic, which doesn't mean manual actions have disappeared altogether. They persist for flagrant cases, but Google claims that the majority of link schemes are now handled automatically.

In practice, we do observe a drastic decrease in manual action notifications for "artificial links" in recent years. Penguin, for example, has been part of Google's core algorithm and has run in real time since its 4.0 release in 2016, which has made human interventions less frequent.

What signals allow Google to detect a link scheme?

Google never reveals the exhaustive list, but certain patterns are obvious. The algorithms scrutinize semantic consistency between linking and linked sites, abnormal geographic distribution of links, over-optimized anchors, or the presence of links on sites listed as link farms.

Let's be honest: if you bought 200 backlinks on Fiverr with exact "auto insurance paris" anchors, Google's algorithms won't take three years to figure out something's wrong.

- Abnormal velocity: massive acquisition of links in a short time without editorial justification

- Over-optimized anchors: excessive repetition of exact commercial anchors

- Low-quality referring sites: links from known farms, hacked sites, or poorly masked PBN networks

- Identical link patterns: multiple sites using the same sources and acquisition schemes

- Lack of semantic context: links from content unrelated to your topic
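Two of the signals listed above, abnormal velocity and over-optimized anchors, are easy to approximate on your own data. The following is a minimal sketch of an audit heuristic, not a reproduction of anything Google runs: the `BACKLINKS` sample, the function names, and the threshold of three times the median month are all assumptions chosen for illustration.

```python
from collections import Counter
from datetime import date
from statistics import median

# Hypothetical backlink export: (first_seen, referring_domain, anchor_text).
BACKLINKS = (
    [
        (date(2022, 1, 10), "blog-a.example", "Acme Insurance"),
        (date(2022, 1, 22), "news-b.example", "acme.example"),
        (date(2022, 2, 5), "forum-c.example", "this comparison"),
        (date(2022, 3, 3), "blog-d.example", "Acme"),
        (date(2022, 3, 19), "mag-e.example", "car insurance guide"),
    ]
    # Ten links in a single month, all with the same exact commercial anchor.
    + [(date(2022, 4, day), f"farm-{day}.example", "auto insurance paris")
       for day in range(1, 11)]
)


def monthly_new_links(backlinks):
    """Count newly seen links per (year, month); months with no links are absent."""
    counts = Counter((seen.year, seen.month) for seen, _, _ in backlinks)
    return dict(sorted(counts.items()))


def velocity_spikes(monthly_counts, factor=3.0):
    """Return the months whose count exceeds `factor` times the median month.

    The factor is an arbitrary illustrative threshold, not a known Google value.
    """
    if not monthly_counts:
        return []
    baseline = median(monthly_counts.values())
    return [month for month, n in monthly_counts.items() if n > factor * baseline]


def anchor_share(backlinks, anchor):
    """Share of links whose anchor equals `anchor`, ignoring case and outer spaces."""
    if not backlinks:
        return 0.0
    target = anchor.strip().lower()
    hits = sum(1 for _, _, text in backlinks if text.strip().lower() == target)
    return hits / len(backlinks)


if __name__ == "__main__":
    print("Spike months:", velocity_spikes(monthly_new_links(BACKLINKS)))
    print("Exact-anchor share:", round(anchor_share(BACKLINKS, "auto insurance paris"), 2))
```

On this sample, April stands out on both counts: it holds ten of the fifteen links, and all ten share one commercial anchor. A real audit would read the tuples from your backlink tool's export instead of a hard-coded list.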

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Yes and no. On one hand, we do see that manual action notifications for artificial links have dropped. Algorithms detect and neutralize a good portion of link schemes without visible human intervention.

But, and this is a significant "but", that doesn't mean algorithms catch everything. Well-constructed link schemes, with contextualized links on medium-quality sites that don't look obviously suspicious, still regularly fly under the radar. [To verify]: Google's statement implies near-complete detection, yet in the field aggressive netlinking strategies continue to generate gains, at least in the short term.

What nuances should we add to this claim?

First point: when Google says "algorithmic," it doesn't mean "infallible." Algorithms have blind spots, particularly with well-disguised site networks that imitate genuine editorial recommendations.

Second point: "penalization" isn't always binary. Often, Google simply devalues suspicious links by stripping them of all weight, without necessarily penalizing the destination site. Result: you gain nothing, but you don't necessarily lose either. That's not what we call a true negative penalty.

In what cases doesn't this rule apply fully?

Large e-commerce sites or media outlets with millions of backlinks sometimes seem to escape granular analysis. Google appears to treat these profiles differently, with higher tolerance for certain parasitic signals, plausibly because sheer volume makes link-by-link analysis harder.

Conversely, on small sites with few backlinks, each link weighs more. A single questionable link can be enough to trigger devaluation if the overall profile lacks credibility. In other words, the algorithms don't treat every site equally.

Practical impact and recommendations

What should you do concretely to avoid problems?

First, audit your existing link profile. Use standard tools (Ahrefs, Majestic, SEMrush, Search Console) to identify suspicious backlinks. Focus on over-optimized commercial anchors, low-quality sites, and recent massive acquisitions.

Next, if you find clearly artificial or toxic links, disavow them by uploading a disavow file through Google's disavow links tool. Google says it handles such links algorithmically, but explicitly flagging the links you don't endorse costs little and puts the odds in your favor.
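A disavow file is a plain-text list with one entry per line: either a full URL or a whole domain prefixed with `domain:`, with `#` starting a comment line. As a hedged sketch, the helper below builds that text from the results of an audit. The function names and the sample domains are hypothetical, and the file should be checked by hand before uploading, since disavowing good links can hurt.

```python
from urllib.parse import urlparse


def normalize_domain(value):
    """Accept 'Example.com' or 'https://example.com/page' and return the bare host."""
    host = urlparse(value).netloc or urlparse("//" + value).netloc
    return host.lower()


def build_disavow(urls=(), domains=(), note=""):
    """Return disavow-file text: an optional comment, then domains, then URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Deduplicate and sort so the output is stable between audits.
    for domain in sorted({normalize_domain(d) for d in domains}):
        lines.append(f"domain:{domain}")
    for url in sorted(set(urls)):
        lines.append(url)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    text = build_disavow(
        urls=["https://bad.example/page.html"],
        domains=["Spam-Farm.example", "https://spam-farm.example/x"],
        note="Paid links found in the quarterly audit",
    )
    print(text, end="")
```

Save the result as a UTF-8 `.txt` file before uploading it. Using a `domain:` entry rather than individual URLs is usually the safer choice when an entire site is a link farm, because new pages on that site are then covered as well.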

What mistakes should you avoid at all costs?

Don't launch aggressive netlinking campaigns with exact anchors and low-cost platforms. Even if some links still work, algorithms constantly improve. What works today could become a time bomb tomorrow.

Also, don't assume that a well-oiled PBN strategy is "invisible." Google has decades of data on technical fingerprints (hosting, CMS, linking patterns, WHOIS). Networks are almost always detected eventually; it's just a matter of time.

How can you verify your site is compliant and protected?

Monitor your ranking fluctuations after each major algorithm update. A sudden drop without apparent reason could signal devaluation of your links. Cross-check this analysis with an audit of your backlink profile to identify suspicious sources.
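One simple way to make this monitoring repeatable is to compare your average position in the days before and after an update. The sketch below assumes a rank tracker export of daily average positions. The `POSITIONS` data, the `UPDATE_DAY` placeholder date, and the seven-day window are all illustrative assumptions. A shift alone does not prove that links are the cause, which is why the backlink audit remains necessary.

```python
from datetime import date, timedelta
from statistics import mean

# Placeholder date standing in for an announced update; replace with the real one.
UPDATE_DAY = date(2022, 6, 15)

# Hypothetical daily average positions: 5.0 before the update, 9.0 from it onward.
POSITIONS = {
    UPDATE_DAY + timedelta(days=offset): (5.0 if offset < 0 else 9.0)
    for offset in range(-7, 8)
}


def position_shift(daily_positions, update_day, window=7):
    """Mean position after the update minus mean position before it.

    A positive value means rankings got worse (a higher position number).
    The update day itself is excluded from both windows.
    Returns None when either window has no data.
    """
    start = update_day - timedelta(days=window)
    end = update_day + timedelta(days=window)
    before = [p for day, p in daily_positions.items() if start <= day < update_day]
    after = [p for day, p in daily_positions.items() if update_day < day <= end]
    if not before or not after:
        return None
    return mean(after) - mean(before)


if __name__ == "__main__":
    shift = position_shift(POSITIONS, UPDATE_DAY)
    print(f"Average position moved by {shift:+.1f} places")
```

Running the same comparison per keyword group, rather than site-wide, helps separate a link-related drop on commercial queries from a general fluctuation.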

Also ensure that your new acquisitions meet Google's editorial criteria: contextualized links, thematically coherent sites, varied natural anchors (branded, bare URLs, generic). If you can't justify each link through genuine editorial logic, that's a bad sign.

- Conduct a full audit of your backlink profile every quarter

- Disavow toxic links identified (spam, hacked sites, known farms)

- Abandon netlinking strategies based on massive low-cost link purchases

- Favor editorial links obtained through quality content (linkbaiting, studies, infographics)

- Vary your link anchors: majority branded and generic, minority commercial anchors

- Monitor your rankings after each Core Update or spam update

- Document each netlinking campaign to justify the origin of your links
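The anchor guidance in the checklist above (mostly branded and generic anchors, few commercial ones) can be checked with a rough classifier. This is a minimal sketch under stated assumptions: the `GENERIC` phrase list, the category names, and the brand term "acme" are invented for illustration, and the rules are far cruder than whatever Google uses.

```python
import re
from collections import Counter

# Illustrative list of generic anchors; extend it for your own language and niche.
GENERIC = {"click here", "here", "read more", "learn more", "this site", "website", "source"}


def classify_anchor(anchor, brand_terms):
    """Label an anchor as bare_url, branded, generic, or commercial_or_other."""
    text = anchor.strip().lower()
    if re.match(r"^(https?://|www\.)", text):
        return "bare_url"
    if any(term.lower() in text for term in brand_terms):
        return "branded"
    if text in GENERIC:
        return "generic"
    # Everything else needs a human look: it may be a commercial exact-match anchor.
    return "commercial_or_other"


def anchor_mix(anchors, brand_terms):
    """Return the share of each anchor category, as fractions that sum to 1."""
    counts = Counter(classify_anchor(a, brand_terms) for a in anchors)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()} if total else {}


if __name__ == "__main__":
    sample = ["Acme", "https://acme.example", "click here", "auto insurance paris"]
    for label, share in anchor_mix(sample, ["acme"]).items():
        print(f"{label}: {share:.0%}")
```

If the `commercial_or_other` share dominates the mix, that is a prompt to review those anchors manually, not a verdict in itself.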

❓ Frequently Asked Questions

Does Google still send manual action notifications for artificial links?

If my rankings drop without a notified manual action, is it necessarily related to backlinks?

Should you still use the disavow file if Google handles everything algorithmically?

Are well-built PBNs still effective despite algorithmic detection?

How can you tell whether Google considers a link artificial?

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 03/11/2022
