Official statement

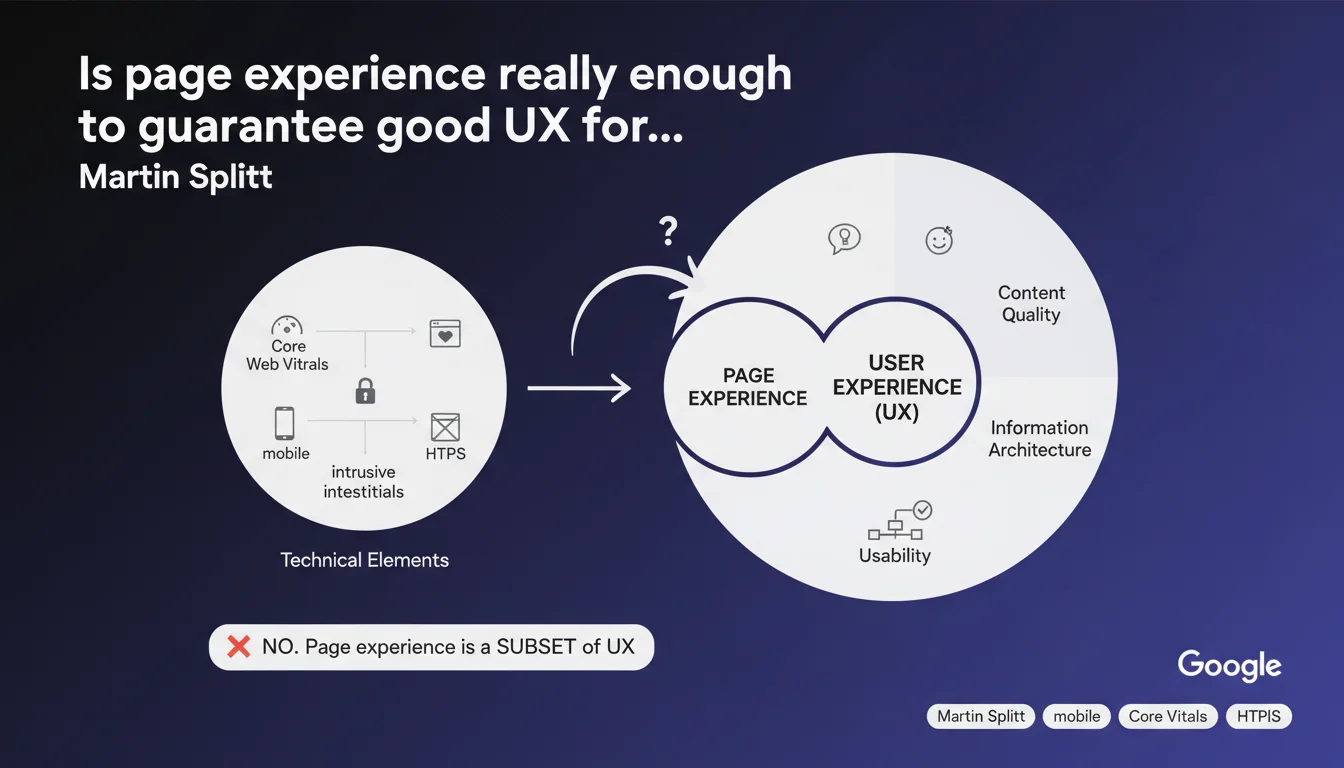

Google clearly distinguishes between page experience and overall user experience. Core Web Vitals, HTTPS, and mobile compatibility represent only a technical fraction of what Google considers good UX. In other words: optimizing your technical signals doesn't guarantee a site that's relevant to your visitors.

What you need to understand

Why does Google separate page experience and UX?

The statement by Martin Splitt sets an important conceptual framework: page experience is only a measurable subset of user experience.

Concretely, Google can quantify Core Web Vitals, verify HTTPS presence, detect intrusive interstitials, or test mobile compatibility. These elements are technical in nature and can be audited automatically.
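As an illustration of how mechanical this part is, here is a minimal sketch that rates field measurements against the thresholds Google publishes for LCP, INP, and CLS. The function names and the sample values are hypothetical and serve only this example.

```python
# Published Core Web Vitals thresholds, applied to the 75th percentile
# of field data. Each entry is (upper bound of "good", upper bound of
# "needs improvement"); anything above the second value is "poor".
THRESHOLDS = {
    "lcp_ms": (2500, 4000),  # Largest Contentful Paint, milliseconds
    "inp_ms": (200, 500),    # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),      # Cumulative Layout Shift, unitless
}


def rate_metric(name: str, value: float) -> str:
    """Return 'good', 'needs improvement' or 'poor' for one metric."""
    good_max, needs_improvement_max = THRESHOLDS[name]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


def rate_page(metrics: dict[str, float]) -> dict[str, str]:
    """Rate every metric of a page (hypothetical helper)."""
    return {name: rate_metric(name, value) for name, value in metrics.items()}


# Made-up measurements for a single page.
sample = {"lcp_ms": 2300, "inp_ms": 350, "cls": 0.31}
ratings = rate_page(sample)
print(ratings)
```

A few lines of code are enough to produce a verdict here, which is exactly why these signals are easy to audit and why they say nothing about whether the page is useful.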

But UX goes far beyond that: information architecture, editorial quality, content relevance, intuitive design, smooth user journey. These are criteria that Google attempts to evaluate through behavioral signals and semantic algorithms, but cannot reduce to a single numerical score.

What aspects of UX escape page experience metrics?

Everything that falls under subjective user perception. A technically perfect site can display outdated design, incomprehensible navigation, or hollow content — and achieve poor results despite green Core Web Vitals scores.

Conversely, some sites with average technical scores perform well thanks to a solid information architecture, engaging writing, and precise alignment with search intent.

Google is generally assumed to evaluate these dimensions through indirect signals such as bounce rate, time on site, clicks on results, and returns to the SERP, although it does not confirm which signals it uses. None of this appears in PageSpeed Insights.

Should Core Web Vitals be ignored then?

No. They remain an official ranking signal and an essential technical foundation. But they don't compensate for mediocre content or failing UX.

The logic: Core Web Vitals are a necessary but not sufficient condition. They fall under technical hygiene, not content strategy.

- Page experience: measurable technical signals (CWV, HTTPS, mobile-friendly, interstitials)

- Overall UX: architecture, editorial quality, relevance, design, user journey

- Technical metrics don't replace genuine UX thinking

- Google uses behavioral signals to evaluate what it cannot measure directly

SEO Expert opinion

Is this distinction consistent with field observations?

Perfectly. We regularly observe sites with excellent Core Web Vitals that stagnate in search results because their content doesn't match search intent or their architecture buries key information.

Conversely, some heavy sites — particularly in e-commerce and media — maintain their positions thanks to strong editorial authority, intelligent internal linking, and precise answers to queries.

Let's be honest: Google cannot quantify the clarity of a conversion funnel or the relevance of a FAQ. Instead, it relies on behavioral proxies — and this is where real UX makes the difference.

What nuances should be added to this statement?

Martin Splitt doesn't specify what relative weight Google assigns to each dimension. Is it 20% for Core Web Vitals and 80% for overall UX? Google has published no figures on this.

Furthermore, the concept of "user experience" remains vague in Google's communications. We don't know exactly which behavioral signals are used, or how they're weighted against technical criteria.

What we know: Google keeps testing new metrics, such as Interaction to Next Paint (INP). Session duration and scroll depth are often cited as candidates as well, but no official documentation details their impact on rankings.

In what contexts does this rule have the most impact?

Sites with high page volume (e-commerce, media, directories) are most affected. Poor UX architecture causes massive damage there, even with correct Core Web Vitals.

Sites facing little competition can afford average technical scores if their content perfectly matches intent. But as competition increases, the combination of technical performance and UX becomes decisive.

Practical impact and recommendations

What should you do concretely to improve UX beyond Core Web Vitals?

First, audit the user journey: map main entry pages, analyze bounce rate by query type, identify friction points (confusing navigation, invisible CTAs, dense content).

Next, test content relevance against search intent. Google Analytics and Search Console let you cross-reference queries, landing pages, and behavior. If a page generates traffic but has a high bounce rate, the problem is often a mismatch between what the title promises and what the content delivers.

Finally, don't neglect information architecture: coherent internal linking, a clear H1-H3 hierarchy, and visible breadcrumbs. These elements make navigation easier and help Google understand what each page is about.
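The heading hierarchy is one part of this audit that can be checked automatically. The sketch below uses Python's standard `html.parser` module to list heading levels in document order and flag skipped levels. The rule of exactly one H1 per page is an assumption of this example (a common convention, not a stated Google requirement), and `heading_issues` is a hypothetical helper name.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect the level of every h1..h6 tag in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable problems found in the heading outline."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels

    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:  # assumption: one H1 per page
        issues.append(f"expected exactly one h1, found {h1_count}")
    # Going deeper by more than one level at a time skips a level.
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} is followed by h{current} (skipped level)")
    return issues


page = "<h1>Guide</h1><h2>Setup</h2><h4>Details</h4><h2>Usage</h2>"
issues = heading_issues(page)
print(issues)
```

A clean report only shows that the outline is well formed. Whether the headings actually help a visitor find information still has to be judged by a person.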

What mistakes must be avoided?

Believing that a good PageSpeed Insights score is enough to guarantee good rankings. Core Web Vitals are a technical prerequisite, not a complete SEO strategy.

Another trap: optimizing for metrics without thinking about the real user. Classic example — reducing image weight until they're illegible, or removing useful features to gain a few milliseconds.

Most common: ignoring the behavioral signals visible in Search Console and GA4. If your pages generate traffic but no engagement, that's a failing UX signal that Google detects sooner or later.

How do you verify your site offers solid UX?

Start with real user testing: watch uninitiated people navigate your key pages. Note hesitations, missed clicks, backtracking. This is the best indicator of poor UX.

Then cross-reference Search Console data (impressions, CTR, average position) with Google Analytics data (bounce rate, session duration, pages per session). A low CTR despite a good position signals a title or meta description issue. A high bounce rate on a high-volume query indicates a mismatch between the promise and the content.
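The two diagnostics above can be sketched as a small script over data exported from both tools. Everything here is illustrative: the `PageStats` fields, the cut-off values, and the sample rows are assumptions to adapt to your own site, and impressions stand in for query volume.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    impressions: int     # from Search Console
    ctr: float           # from Search Console, between 0 and 1
    avg_position: float  # from Search Console
    bounce_rate: float   # from Google Analytics, between 0 and 1


# Hypothetical cut-offs; tune them to your own traffic profile.
GOOD_POSITION = 5.0
LOW_CTR = 0.02
HIGH_BOUNCE = 0.70
HIGH_VOLUME = 1000


def diagnose(page: PageStats) -> list[str]:
    """Apply the two rules of thumb described in the text."""
    findings = []
    if page.avg_position <= GOOD_POSITION and page.ctr < LOW_CTR:
        findings.append("low CTR despite good position: review title and meta description")
    if page.impressions >= HIGH_VOLUME and page.bounce_rate > HIGH_BOUNCE:
        findings.append("high bounce rate on high volume: check promise against content")
    return findings


# Made-up rows standing in for merged exports.
pages = [
    PageStats("/pricing", impressions=5000, ctr=0.01, avg_position=3.2, bounce_rate=0.40),
    PageStats("/guide", impressions=8000, ctr=0.06, avg_position=4.0, bounce_rate=0.85),
    PageStats("/about", impressions=200, ctr=0.05, avg_position=12.0, bounce_rate=0.50),
]

report = {page.url: diagnose(page) for page in pages}
for url, findings in report.items():
    print(url, findings or "no flag")
```

The flags only point to pages worth a manual look; they do not explain why visitors leave.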

Finally, audit your internal linking: do strategic pages receive enough links from the rest of the site? Are anchor texts consistent with the target page topic?
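The first question, whether strategic pages receive enough links, can be approximated from a crawl. The sketch below assumes a hypothetical link graph that maps each page to the internal URLs it links to; the minimum of two inbound links is an arbitrary value chosen for the example.

```python
from collections import Counter


def inbound_counts(link_graph: dict[str, list[str]]) -> Counter:
    """Count how many distinct pages link to each URL."""
    counts = Counter({url: 0 for url in link_graph})
    for source, targets in link_graph.items():
        for target in set(targets):  # count each linking page once
            if target != source:     # ignore self-links
                counts[target] += 1
    return counts


def under_linked(link_graph: dict[str, list[str]],
                 strategic: list[str],
                 minimum: int = 2) -> list[str]:
    """Return strategic URLs with fewer than `minimum` inbound links."""
    counts = inbound_counts(link_graph)
    return sorted(url for url in strategic if counts[url] < minimum)


# Made-up crawl result: page -> internal links found on that page.
site = {
    "/": ["/pricing", "/blog"],
    "/blog": ["/blog/post-1", "/pricing"],
    "/blog/post-1": ["/blog"],
    "/pricing": ["/"],
    "/contact": [],
}

weak = under_linked(site, ["/pricing", "/contact", "/blog/post-1"], minimum=2)
print(weak)
```

Anchor text consistency is harder to script reliably and is usually reviewed by hand against the topic of each target page.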

- Audit user journey: bounce rate, entry pages, friction points

- Verify content relevance against search intent

- Optimize information architecture: internal linking, Hn hierarchy, breadcrumb

- Cross-reference Search Console and GA4 to identify weak behavioral signals

- Test UX with real users, not just automated tools

- Don't sacrifice usefulness for pure speed

❓ Frequently Asked Questions

Are Core Web Vitals still a ranking factor?

Does Google measure UX directly, or only technical signals?

Can a site with mediocre Core Web Vitals rank well?

Which tools should you use to evaluate UX beyond technical metrics?

Does UX optimization have a quick impact on rankings?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 21/06/2022
