Official statement

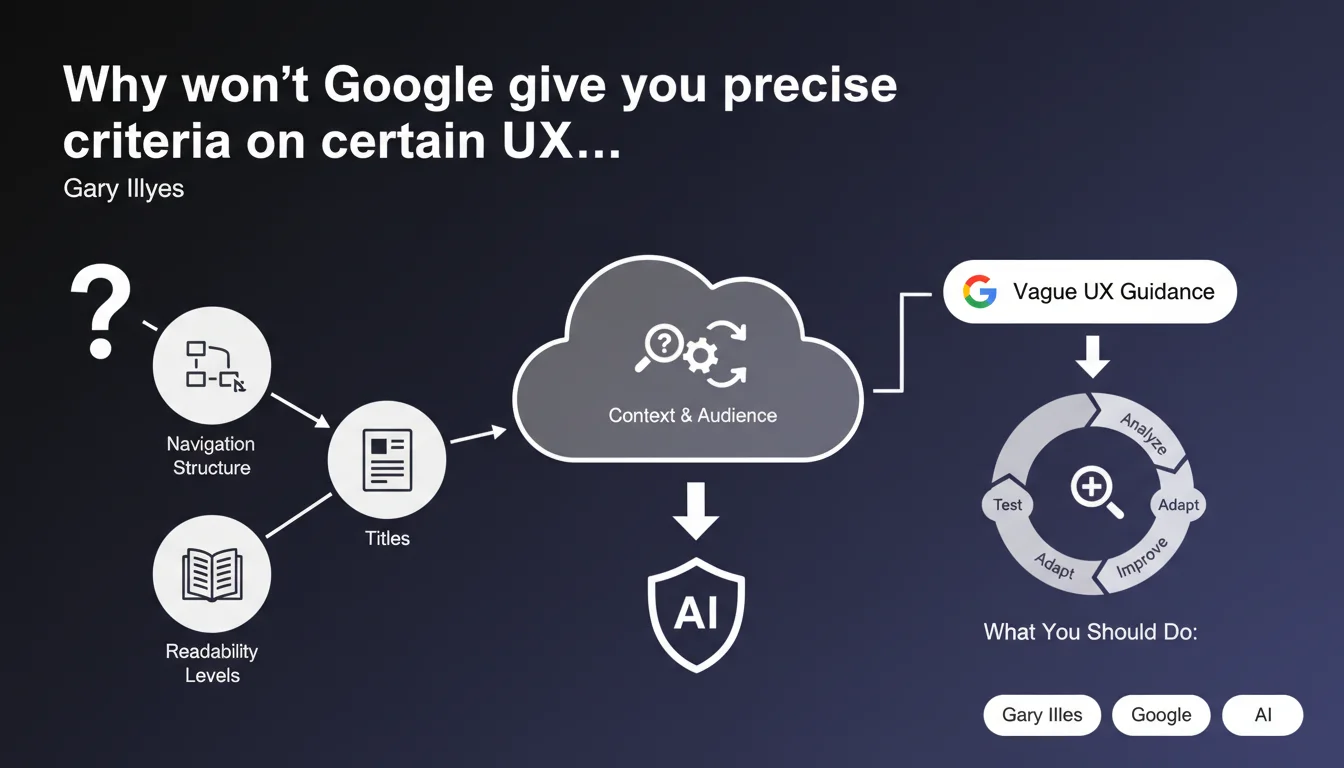

Google deliberately stays vague on UX criteria such as navigation, titles, and readability level. Its argument: these aspects depend too heavily on context and audience to be standardized. Once again, that position leaves SEOs working in gray zones.

What you need to understand

What exactly does Google mean by "difficult to define"?

Gary Illyes justifies the absence of precise recommendations on certain user experience elements by invoking contextual variability. An optimal navigation structure for an e-commerce site has nothing in common with that of a technical blog. A readability level suited to a scientific audience would be incomprehensible to the general public.

The problem is that this logic applies to almost everything in SEO. So why does Google provide precise thresholds for Core Web Vitals but remain silent on what constitutes a "good" title hierarchy? The boundary between "too complex to define" and "we prefer to keep control" remains blurry.

Which UX aspects are affected by this abstraction?

Illyes cites three examples: navigation structure, titles (likely Hn tags and title tags), and readability level. In practice, Google will never say "your menu should have a maximum of 7 entries" or "aim for a Flesch-Kincaid reading level of 8".

This position is consistent with Google's historical approach: avoid overly rigid prescriptions that could be exploited as automated recipes. But it also forces SEOs to work without instruments on criteria that Google itself classifies as "important".

Is this something new in Google's communication?

No, it's an explicit confirmation of an established practice. Google has always alternated between quantified recommendations (loading time, CLS, etc.) and deliberate gray zones (content quality, expertise, user satisfaction).

The nuance here is that Illyes openly owns this abstraction strategy. He doesn't claim that data is missing; he says that defining a standard would be counterproductive. It's a philosophical position, not a technical one.

- Google acknowledges that it cannot standardize certain UX criteria without creating unintended consequences

- The cited examples (navigation, titles, readability) are nonetheless signals mentioned in quality guidelines

- This approach forces SEOs to prioritize contextual analysis over the application of universal rules

- The dividing line between "measurable criteria" and "subjective criteria" remains at Google's discretion

SEO Expert opinion

Is this statement consistent with what we observe in practice?

Yes and no. In principle, the argument holds: a government website doesn't have the same navigation constraints as a viral media site. But in practice, Google does penalize sites for structural or readability problems—without ever explaining precisely which threshold was crossed.

We regularly observe cases where a navigation redesign dramatically improves organic performance, or conversely where an inappropriate readability level tanks satisfaction rates (measured indirectly through user behavior). Google uses these signals, but refuses to provide the rules of the game. It's frustrating but strategically logical for them.

What deliberate gray zones does this position maintain?

The real issue is that Google can invoke these "contextual" criteria to explain any algorithmic adjustment without ever having to account for it. A site loses traffic? Perhaps its navigation became "unsuitable". But how can you prove it if no standard exists?

[Worth checking]: It would be interesting to compare this statement with Google patents on site structure analysis. We know that metrics like click depth, internal link density, or semantic consistency of anchors are measured. Why not at least provide acceptable ranges?

The most likely hypothesis: Google wants to discourage mechanical optimization in favor of genuine UX thinking. But without minimum guidelines, sites with fewer resources are at a disadvantage compared with those that can afford in-depth user testing.

In which cases might this "context above all" rule not apply?

Let's be honest: there are universal anti-patterns in navigation and readability. A menu with 50 entries at the same level is objectively bad for any type of site. Text stuffed with jargon incomprehensible to 95% of your target audience is an identifiable problem.

Google could very well define alert thresholds (e.g., "beyond X levels of depth, accessibility becomes critical") without imposing a universal standard. But they don't—probably to maintain maximum algorithmic flexibility.

Practical impact and recommendations

What should you do concretely in the absence of quantified recommendations?

First rule: stop searching for THE universal best practice. If Google says it doesn't exist, it's probably true—or at least consistent with their approach. The real question becomes: which navigation/readability level/hierarchy works for your audience?

Concretely, that means investing in real user testing: heatmaps, session recordings, A/B tests on navigation structures. The tools exist (Hotjar, Microsoft Clarity, etc.) and provide more reliable data than any generic SEO checklist.
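When an A/B test compares two navigation structures, the raw difference in conversion rate can be noise. One common way to check is a two-proportion z-test. The sketch below is only an illustration under simple assumptions (independent sessions, reasonably large samples); the function name and the sample figures are hypothetical and do not come from any testing tool.

```python
import math
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates.

    conv_a / n_a: conversions and sessions for the current navigation (A).
    conv_b / n_b: conversions and sessions for the tested navigation (B).
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("Both variants need at least one session")
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value of the standard normal distribution, via erfc.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up figures: 1,000 sessions per variant, 100 vs. 150 conversions.
z_score, p_value = two_proportion_z_test(100, 1000, 150, 1000)
print(f"z = {z_score:.2f}, p = {p_value:.4f}")
```

A small p-value suggests the difference is unlikely to be chance, but it says nothing about whether the test ran long enough or whether the audience was representative.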

For readability, use tools like Hemingway or the readability scores built into Yoast and Rank Math, but adapt the thresholds to your audience. A mainstream medical site should target a Flesch-Kincaid grade level of 8-10, while a scientific journal can go to 14-16. Context matters.
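To make the grade-level figures concrete, here is a minimal sketch of the Flesch-Kincaid grade formula, 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59. The syllable counter is a crude heuristic written for this illustration and only makes sense for English text; dedicated tools use better tokenization, so their scores will differ somewhat.

```python
import re


def count_syllables(word: str) -> int:
    """Rough English estimate: count vowel groups, drop a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # "make" has one syllable, but "table" keeps its final "le" syllable.
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """Approximate Flesch-Kincaid grade level of an English text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("Text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59


simple = "The cat sat on the mat."
dense = "Pharmacokinetic variability complicates individualized anticoagulant administration."
print(round(flesch_kincaid_grade(simple), 2), round(flesch_kincaid_grade(dense), 2))
```

Running the same function across your main templates gives a relative comparison between pages, which is more useful here than any absolute target.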

What mistakes should you avoid when facing this gray zone?

Mistake #1: applying "best practices" copy-pasted without thinking. The fact that Zalando has 3-level navigation doesn't mean it's optimal for your niche shop.

Mistake #2: ignoring these aspects entirely on the grounds that Google doesn't provide criteria. The absence of a standard doesn't mean the absence of impact. It just means you need to measure for yourself what works.

Mistake #3: over-optimizing for a robot at the expense of the real user. If your menu is perfect for crawlers but incomprehensible to your visitors, you lose on both fronts (bounce rate, time on site, conversions—all indirect signals Google picks up).

How can you validate that your UX choices align with Google's expectations?

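Monitor what Search Console actually reports (clicks, impressions, click-through rate, average position) and pair it with engagement metrics from your analytics tool, such as time on site and pages per session. Search Console does not report those two itself; growth in brand queries is at best an indirect proxy for returning visitors. A degradation of these metrics after a redesign is a warning signal.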

Analyze crawl budget: if Google abandons crawling mid-way through your site architecture, it's likely that your structure poses a problem—even without an official quantified criterion.

Test with real users representative of your target. Qualitative studies (5-10 people are enough to identify 80% of major problems) are more revealing than any SEO checklist.

- Audit your navigation structure with a crawl tool (Screaming Frog, Oncrawl) to spot excessive depth and orphaned pages

- Measure the readability level of your main pages with a formula adapted to your language (Flesch-Kincaid and Gunning Fog, for example, are calibrated for English)

- Compare these scores with well-ranking competitors—not to copy, but to identify significant gaps

- Install a heatmap/session recording tool to observe how users actually navigate

- Run A/B tests on critical navigation structures (menu, filters, pagination)

- Monitor Google Search Console metrics after modifications to detect positive or negative impacts

- Document your choices and their rationales—in the absence of standards, internal consistency becomes an asset
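The first item in this checklist, spotting excessive depth and orphaned pages, comes down to a breadth-first search over the internal link graph. The sketch below assumes you have already exported that graph from a crawler as a mapping of URL to outgoing internal links. The function name, the sample site, and the depth threshold of 2 are all hypothetical; as the article argues, no universal threshold exists.

```python
from collections import deque
from typing import Dict, List, Set, Tuple


def click_depths(links: Dict[str, List[str]], home: str) -> Tuple[Dict[str, int], Set[str]]:
    """Breadth-first search from the home page.

    Returns (click depth of every reachable URL, known URLs never reached).
    """
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                # First visit in BFS order is the shortest click path.
                depths[target] = depths[page] + 1
                queue.append(target)
    known = set(links) | {t for targets in links.values() for t in targets}
    orphans = known - set(depths)
    return depths, orphans


# Hypothetical link graph: keys are crawled or sitemap URLs, values are internal links.
site = {
    "/": ["/shop", "/blog"],
    "/shop": ["/shop/shoes"],
    "/shop/shoes": ["/shop/shoes/red-sneakers"],
    "/blog": [],
    "/old-landing-page": ["/shop"],  # in the sitemap, but nothing links to it
}
depths, orphans = click_depths(site, "/")
too_deep = sorted(url for url, depth in depths.items() if depth > 2)  # illustrative threshold
print(too_deep, orphans)
```

Tracking how these two lists change from one release to the next is one way to document navigation choices when no external standard is available.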

❓ Frequently Asked Questions

Does Google really give no quantified criteria for navigation, titles, or readability?

Does that mean these elements have no impact on rankings?

How can I tell whether my navigation structure is suitable without reference criteria?

Should I still aim for a specific reading level?

Is this position consistent with Google's overall approach to SEO?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 21/06/2022
