Official statement


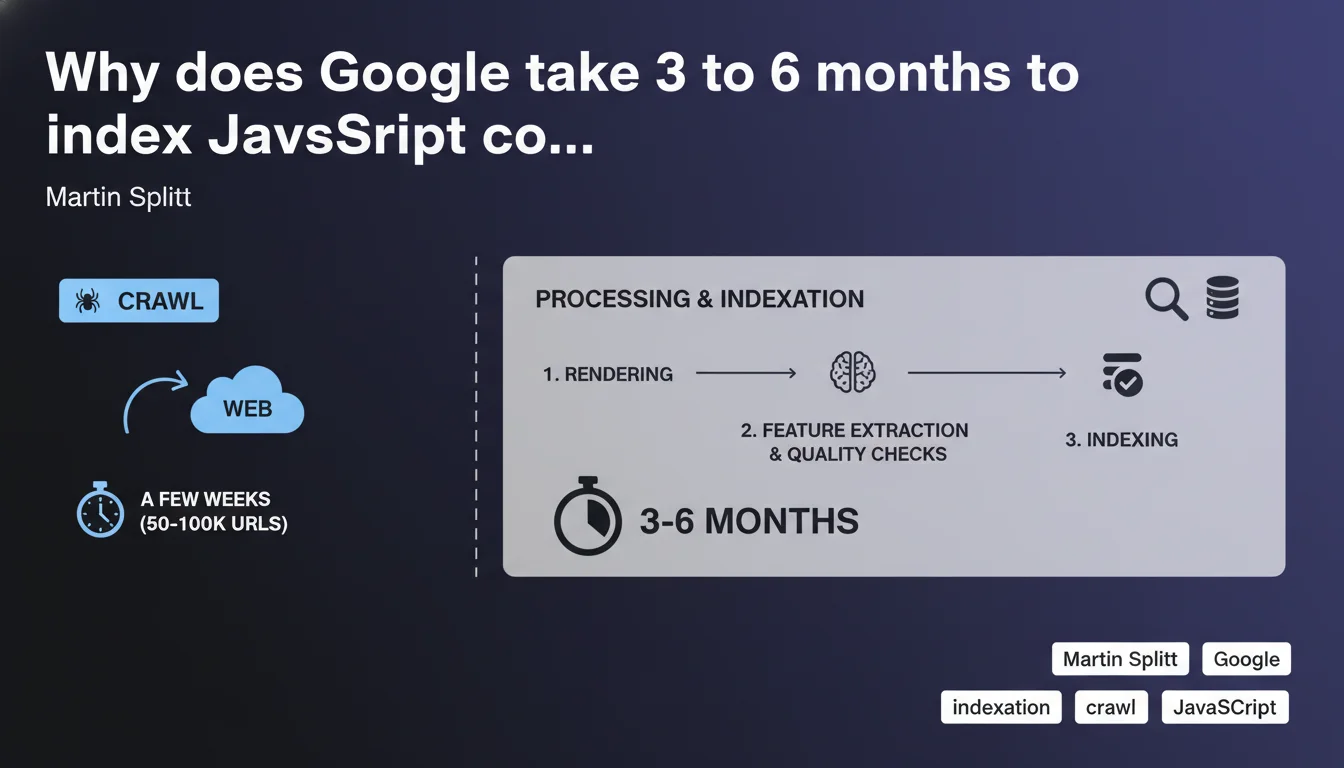

Google crawls JavaScript sites quickly (a few weeks for 50-100K URLs), but fully processing and indexing the rendered content can take another 3 to 6 months before it appears in search results. For JavaScript-heavy sites, this lag between crawling and effective indexing creates a critical blind spot.

What you need to understand

Does Google really differentiate between crawling and indexing JavaScript?

Martin Splitt points out an often-overlooked gap: for JavaScript content, crawling and indexing are two distinct phases. Googlebot visits your URLs within a few weeks and retrieves the initial HTML, but JavaScript rendering (executing the code to generate the final DOM) happens in a separate queue.

This deferred rendering queue can take months to clear. In practice, your critical content can remain invisible in SERPs even if Googlebot has technically "seen" the page.
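To make the "crawled but not yet rendered" gap concrete, here is a toy two-queue model. It is a sketch under loudly stated assumptions: Google does not publish crawl or render throughput, so the `crawl_per_day` and `render_per_day` values below are hypothetical numbers chosen only to show how a slower second queue stretches the timeline, not measurements.

```python
def simulate(n_urls, crawl_per_day, render_per_day):
    """Toy model: return (crawled_day, rendered_day) for each URL, 1-based days.

    Assumes (hypothetically) constant daily rates and a single FIFO render queue.
    """
    timeline = []
    for i in range(n_urls):
        crawled_day = i // crawl_per_day + 1
        # With constant daily rates, a FIFO render queue reduces to this closed
        # form: URL i waits for its render slot, and it can never be rendered
        # before it has been crawled.
        rendered_day = max(crawled_day, i // render_per_day + 1)
        timeline.append((crawled_day, rendered_day))
    return timeline


# Hypothetical rates, chosen only to illustrate the shape of the gap.
timeline = simulate(100_000, crawl_per_day=5_000, render_per_day=600)
last_crawled, last_rendered = timeline[-1]
print(f"last URL crawled on day {last_crawled}, rendered on day {last_rendered}")
```

With these made-up rates, the whole site is crawled in 20 days but the last URL is only rendered on day 167. The point is the shape: when rendering capacity per site is much lower than crawl capacity, the render queue, not the crawl, sets the indexing timeline.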

Why is this 3 to 6 month delay so long?

Google doesn't provide precise technical details [To verify], but the likely explanation is computing cost. Rendering JavaScript is CPU-intensive: each page requires a headless browser, code execution, waiting for network requests, and DOM generation.

For a site with 50-100K URLs, fully rendering every page takes time, even on massive infrastructure. Google likely prioritizes high-authority sites or sites that already have established organic traffic.

How does this differ from a standard HTML site?

A site built with static HTML or server-side rendering (SSR) delivers its final content on the initial crawl, with no additional queue. Indexing can follow almost immediately if the content meets quality criteria.

With client-side JavaScript, Google must come back later to render the page. That second pass is neither immediate nor necessarily prioritized, hence the several-month delay mentioned.
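The difference between the two models is visible in the very first HTTP response. The sketch below is a simplified illustration, not any framework's actual output: `render_csr_shell`, `render_ssr`, the `/static/app.js` bundle, and the product data are all hypothetical.

```python
from html import escape


def render_csr_shell():
    # Client-side rendering (hypothetical markup): the server ships an empty
    # mount point; product content only exists after /static/app.js runs.
    return ('<html><body><div id="root"></div>'
            '<script src="/static/app.js"></script></body></html>')


def render_ssr(product):
    # Server-side rendering: the same content is serialized into the HTML
    # response itself, so it is readable on the first fetch, before any JS runs.
    return ('<html><body><div id="root">'
            f'<h1>{escape(product["name"])}</h1>'
            f'<p>{escape(product["description"])}</p>'
            '</div><script src="/static/app.js"></script></body></html>')


product = {"name": "Trail Runner X", "description": "Lightweight trail shoe."}
print("Trail Runner X" in render_csr_shell())  # False
print("Trail Runner X" in render_ssr(product))  # True
```

In the CSR case, the product name only exists after a browser (or Google's renderer) executes the script, which is exactly the step that sits in the deferred queue.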

- Fast crawl ≠ fast indexation: don't confuse these two metrics in your reports.

- JavaScript rendering is a distinct and expensive step for Google.

- SSR or static HTML sites benefit from a massive time advantage in indexation.

- The 3-6 month timeframe mentioned applies to sites of 50-100K URLs — extrapolate carefully for other volumes.

SEO Expert opinion

Is this statement consistent with field observations?

Yes, and that is precisely the problem. For years, SEO professionals have observed that JavaScript-heavy pages take forever to rank, even when Search Console shows them as "crawled". Splitt formalizes what we already knew: crawling is just the first step.

But let's be honest: 3 to 6 months is catastrophic for a product launch, site redesign, or any SEO strategy with business deadlines. This delay turns client-side JavaScript into a strategic liability if you haven't anticipated SSR or prerendering.

What nuances should be applied to this claim?

Splitt mentions sites of 50-100K URLs. What about small sites with 500 pages, or giant sites with 5 million URLs? Google provides no curve showing how the delay varies with site size [To verify].

Moreover, nothing indicates whether this delay applies uniformly to all pages or whether Google prioritizes certain sections (homepage, main categories). URLs likely to attract traffic probably move through faster, but there is no official data on this.

In what cases doesn't this rule apply?

If you use Server-Side Rendering (SSR) or static generation (SSG), content is delivered as standard HTML from the first crawl. No rendering queue, near-immediate indexation if everything else is clean.

Likewise, sites using dynamic prerendering (via Prerender.io, Rendertron, or similar) serve complete HTML to Googlebot, so the 3-6 month rendering delay no longer applies to those pages. This is one of the main reasons many large e-commerce sites are moving to Next.js or Nuxt in SSR/SSG mode.
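Dynamic prerendering boils down to a routing decision: known crawlers get a prerendered HTML snapshot, everyone else gets the normal client-side app. The sketch below shows only that decision and assumes a hypothetical crawler token list and an in-memory snapshot cache; it is not how Prerender.io or Rendertron are actually wired. Note that Google's own documentation describes dynamic rendering as a workaround rather than a recommended long-term solution, which is one more argument for SSR/SSG.

```python
from typing import Dict

# Hypothetical shortlist of crawler user-agent substrings. A real setup would
# use a maintained list and verify crawler IPs, since user agents can be faked.
CRAWLER_TOKENS = ("googlebot", "bingbot")

CSR_SHELL = ('<html><body><div id="root"></div>'
             '<script src="/static/app.js"></script></body></html>')


def is_crawler(user_agent: str) -> bool:
    ua = user_agent.lower()
    return any(token in ua for token in CRAWLER_TOKENS)


def choose_html(user_agent: str, path: str, snapshots: Dict[str, str]) -> str:
    """Serve a prerendered snapshot to crawlers when one exists for this path;
    otherwise fall back to the regular client-side shell."""
    if is_crawler(user_agent) and path in snapshots:
        return snapshots[path]
    return CSR_SHELL


# Hypothetical snapshot cache, e.g. filled by a headless-browser job.
snapshots = {"/p/1": "<html><body><h1>Trail Runner X</h1></body></html>"}
googlebot_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
print(choose_html(googlebot_ua, "/p/1", snapshots))
```

The snapshot must contain the same content users eventually see; serving crawlers materially different content is cloaking.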

Practical impact and recommendations

What concrete steps should you take to reduce this delay?

First priority: abandon pure client-side JavaScript for all critical content. If your business depends on fast indexation, Client-Side Rendering (CSR) is no longer a viable option. Migrate to SSR, SSG, or at minimum prerendering for Googlebot.

Next, optimize your crawl budget. The less time Google spends crawling unnecessary resources, the more capacity it can plausibly devote to rendering the pages that matter. Block low-value URLs, clean up redirect chains, and eliminate soft 404s.
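Two of these clean-up tasks are easy to audit with a script. The sketch below checks which URLs a robots.txt blocks for Googlebot and flags redirects that take more than one hop. The robots.txt rules, URLs, and redirect map are hypothetical examples. Python's standard `urllib.robotparser` only does simple prefix matching (no Google-style `*`/`$` wildcards), so the rules here are plain prefixes.

```python
from typing import Dict, List
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt blocking internal search and cart URLs.
ROBOTS_TXT = """User-agent: *
Disallow: /search
Disallow: /cart
"""


def blocked_urls(robots_txt: str, urls: List[str], agent: str = "Googlebot") -> List[str]:
    """Return the URLs that the given robots.txt disallows for `agent`."""
    rp = RobotFileParser()
    rp.parse(robots_txt.splitlines())
    return [u for u in urls if not rp.can_fetch(agent, u)]


def redirect_chains(redirects: Dict[str, str]) -> Dict[str, List[str]]:
    """Map each source URL to its full hop list when it needs more than one hop.

    `redirects` maps a URL to its redirect target; loops stop at the first repeat.
    """
    chains = {}
    for start in redirects:
        path, seen, cur = [start], {start}, start
        while cur in redirects:
            cur = redirects[cur]
            path.append(cur)
            if cur in seen:  # redirect loop
                break
            seen.add(cur)
        if len(path) > 2:  # more than a single source -> target hop
            chains[start] = path
    return chains


print(blocked_urls(ROBOTS_TXT, ["https://example.com/search?q=shoes",
                                "https://example.com/products/shoe-1"]))
print(redirect_chains({"/a": "/b", "/b": "/c", "/c": "/final"}))
```

Each reported chain is a candidate for pointing the source directly at its final destination.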

How do you verify your site isn't suffering from this delay?

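Use the URL Inspection tool in Search Console to see the HTML Google rendered, and compare it with the raw HTML your server returns before any JavaScript runs (for example via view-source or a plain HTTP fetch). If critical content appears only in the rendered version, it depends on the rendering queue and is exposed to this several-month delay.

Also compare the crawl date (from Googlebot hits in your server logs) with the actual indexing date (from Search Console). A gap of several weeks or months confirms the issue.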
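The raw-versus-rendered comparison can be scripted once you have both versions saved. This sketch assumes you already have the two HTML strings (how you obtain them is up to you); the `render_dependent` helper and the sample markup are hypothetical. It extracts visible text with the standard library's `html.parser`, ignoring `<script>`, `<style>`, and `<noscript>` contents, and reports which critical phrases exist only after rendering.

```python
from html.parser import HTMLParser
from typing import List

SKIPPED = ("script", "style", "noscript")


class _TextExtractor(HTMLParser):
    """Collect text nodes, skipping anything inside script/style/noscript."""

    def __init__(self):
        super().__init__()
        self._skip = 0
        self.chunks: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED:
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def render_dependent(raw_html: str, rendered_html: str, phrases: List[str]) -> List[str]:
    """Phrases visible in the rendered HTML but absent from the raw response."""
    raw = visible_text(raw_html).lower()
    rendered = visible_text(rendered_html).lower()
    return [p for p in phrases if p.lower() in rendered and p.lower() not in raw]


raw_html = ('<html><body><div id="root"></div><footer>Contact us</footer>'
            '<script>var title = "Trail Runner X";</script></body></html>')
rendered_html = ('<html><body><div id="root"><h1>Trail Runner X</h1></div>'
                 '<footer>Contact us</footer></body></html>')
print(render_dependent(raw_html, rendered_html, ["Trail Runner X", "Contact us"]))
```

Here the product name counts as render-dependent even though it appears inside a script in the raw response, because text inside JavaScript is not page content until the code runs.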
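A minimal way to measure that gap, assuming access logs in the common Combined Log Format: find the first day Googlebot requested each path, then subtract it from the day you observed the page as indexed. The indexing dates are a hypothetical manual input (for instance noted from Search Console checks); nothing here calls a Google API, and the log lines are made-up samples.

```python
import re
from datetime import date, datetime
from typing import Dict, Iterable, Optional

# Combined Log Format; only the timestamp, path and user agent are captured.
LOG_RE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def first_googlebot_crawl(lines: Iterable[str]) -> Dict[str, date]:
    """Earliest day each path was requested by a client claiming to be Googlebot."""
    first: Dict[str, date] = {}
    for line in lines:
        m = LOG_RE.search(line)
        # User agents can be spoofed: verify genuine Googlebot hits
        # (e.g. via reverse DNS) before trusting these numbers.
        if not m or "googlebot" not in m["ua"].lower():
            continue
        day = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z").date()
        path = m["path"]
        if path not in first or day < first[path]:
            first[path] = day
    return first


def crawl_to_index_gaps(first_crawl: Dict[str, date],
                        indexed_on: Dict[str, date]) -> Dict[str, Optional[int]]:
    """Days from first crawl to observed indexing; None if not indexed yet."""
    return {path: (indexed_on[path] - crawled).days if path in indexed_on else None
            for path, crawled in first_crawl.items()}


BOT = '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"'
log_lines = [
    '66.249.66.1 - - [12/Jan/2023:09:00:00 +0000] "GET /products/shoe-1 HTTP/1.1" 200 5123 "-" ' + BOT,
    '66.249.66.1 - - [10/Jan/2023:08:15:02 +0000] "GET /products/shoe-1 HTTP/1.1" 200 5123 "-" ' + BOT,
    '203.0.113.7 - - [09/Jan/2023:10:00:00 +0000] "GET /products/shoe-1 HTTP/1.1" 200 5123 "-" "Mozilla/5.0 Firefox/108.0"',
    '66.249.66.1 - - [11/Jan/2023:07:00:00 +0000] "GET /products/shoe-2 HTTP/1.1" 200 4870 "-" ' + BOT,
]
indexed_on = {"/products/shoe-1": date(2023, 4, 20)}  # hypothetical observation
gaps = crawl_to_index_gaps(first_googlebot_crawl(log_lines), indexed_on)
print(gaps)
```

A value of `None` means the page has been crawled but not yet observed as indexed; a steadily growing list of those is the pattern described above.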

What mistakes must you avoid at all costs?

Don't rely solely on the "crawled" status in Search Console to validate indexing: Google may have visited the page without rendering or indexing it yet. Systematically check with a site: search in Google (for example site:yoururl.com).

Also avoid launching a JavaScript-heavy redesign without an SSR strategy if you have tight commercial deadlines. Six months of indexing delay can mean two quarters of lost revenue.

- Migrate to SSR, SSG, or prerendering for critical content

- Compare fetched HTML vs. rendered DOM in Search Console

- Monitor the gap between crawl date and actual indexation date

- Optimize crawl budget (block unnecessary URLs, clean redirects)

- Test actual indexation with site: rather than relying on Search Console status

- Plan for a minimum 6-month delay for any JS-heavy launch without SSR

❓ Frequently Asked Questions

Does the 3-6 month delay also apply to content updates?

Is a 500-page JavaScript site affected by this delay?

Does SSR completely eliminate this problem?

Can you force Google to speed up JavaScript rendering?

Are Progressive Web Apps (PWAs) affected?

Source: Google Search Central video, published on 01/02/2023.
