Official statement

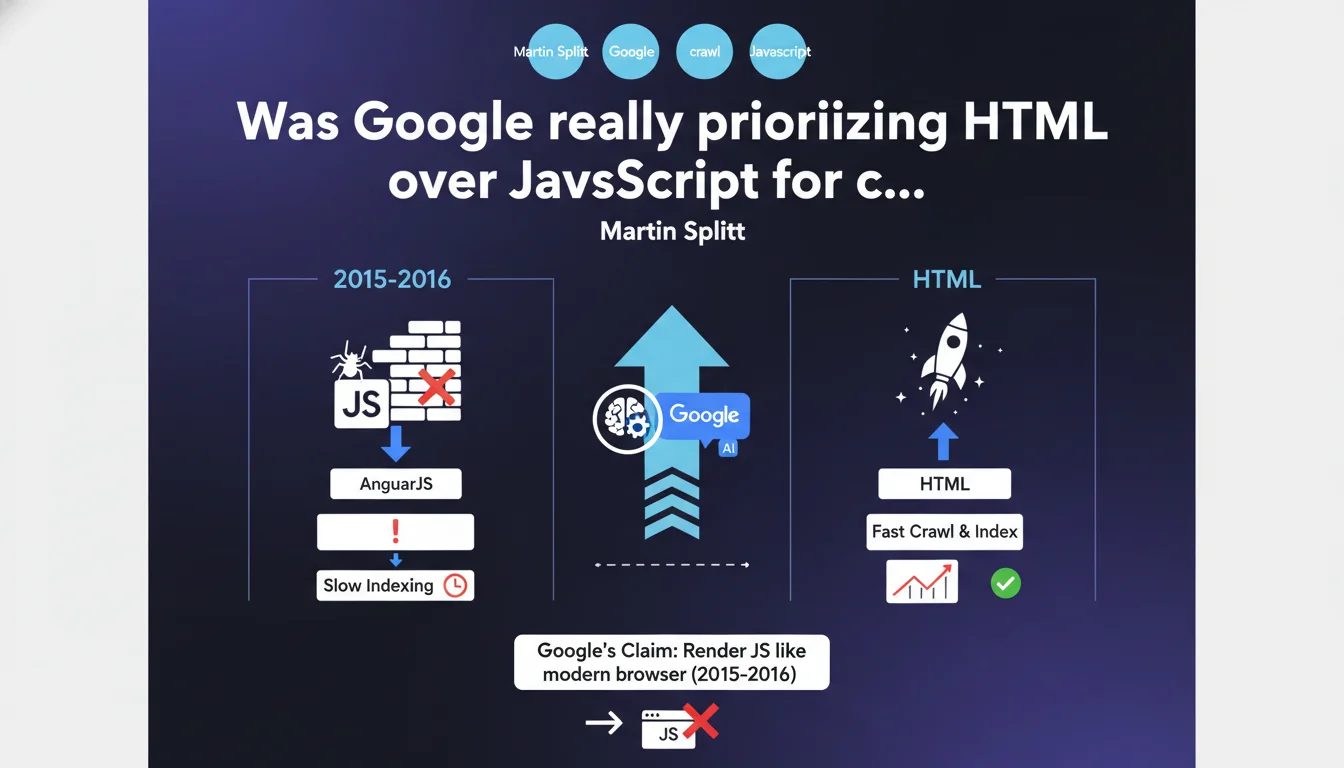

In 2015-2016, Google struggled to properly crawl certain JavaScript frameworks like AngularJS, despite public claims to the contrary. Pure HTML sites were indexed faster and more completely than their JavaScript equivalents. A confession that sheds new light on Google's official messaging at the time about client-side rendering.

What you need to understand

Why did Google struggle to index JavaScript correctly?

In 2015-2016, JavaScript frameworks like AngularJS were exploding in popularity. Developers loved this approach, which shifted display logic to the client side. Except Googlebot wasn't equipped to handle this complexity in real time.

Yet Google was publicly communicating about its ability to render JavaScript like a modern browser. The reality on the ground? A significant gap between marketing messaging and actual technical performance. Full-JavaScript sites sometimes waited weeks before being properly indexed.

What differentiated HTML indexing from JavaScript indexing?

HTML crawling was immediate: Googlebot retrieved the source code, analyzed it, indexed the content. Efficient, fast, predictable.

With JavaScript, an additional step was added: rendering in a separate queue. The crawler had to wait for a headless browser to execute the code, generate the final DOM, and only then index. This latency created absurd situations where content remained invisible for days.
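The two paths described above can be sketched as a toy model. This is an illustration only, not Google's actual pipeline: the `Page` type, the `day_indexed` function, and the `render_queue_days` value are all hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    needs_rendering: bool  # True when the content only exists after JavaScript runs


def day_indexed(page: Page, crawl_day: int, render_queue_days: int) -> int:
    """Return the day the content can be indexed in this simplified model."""
    if page.needs_rendering:
        # Second stage: the page waits in the rendering queue before indexing.
        return crawl_day + render_queue_days
    # Plain HTML: the content is available as soon as the page is crawled.
    return crawl_day


# Hypothetical delay of 5 days, purely for illustration (not a measured figure).
html_page = Page("/article", needs_rendering=False)
js_page = Page("/app/article", needs_rendering=True)
print(day_indexed(html_page, crawl_day=0, render_queue_days=5))
print(day_indexed(js_page, crawl_day=0, render_queue_days=5))
```

In this model the only difference between the two pages is the extra queue wait, which is the latency the paragraph above describes.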

What were the practical consequences for JavaScript-based sites?

Sites that migrated to AngularJS noticed unexplained drops in organic traffic. Their content existed, but Google couldn't see it — or couldn't see it quickly enough to maintain their rankings.

Developers found themselves stuck: follow modern web development trends or prioritize SEO visibility. A choice that should never have existed if Google's promises had been kept from the start.

- HTML guaranteed immediate indexing without depending on a separate rendering queue

- AngularJS and similar frameworks created undocumented indexing delays

- Google communicated about its JavaScript rendering capability while knowing its systems faced major difficulties

- Indexing latency could reach several weeks on certain full-JavaScript sites

- No official documentation warned developers about these limitations before migration

SEO Expert opinion

Is this admission consistent with ground-level observations from that era?

Absolutely. SEOs who worked on JavaScript migrations between 2015 and 2017 all have scars to show. Post-migration traffic drops were systematic, yet Google invariably pointed to its documentation claiming that "JavaScript is no longer a problem".

Except the problem was very real. Entire websites became partially invisible because their content was dynamically generated. And when you contacted Google, the standard response was "we render JavaScript like Chrome". Technically true. In practice? Weeks of delay.

Why didn't Google communicate clearly about these limitations?

That's the million-dollar question. Google had every incentive to maintain an image of a modern search engine capable of indexing everything. Publicly admitting that its crawler struggled with AngularJS would have been an admission of technical weakness compared to Bing or other competitors.

The problem is that this vague communication cost thousands of sites dearly. How many JavaScript migrations were poorly anticipated? How many businesses lost 30-40% of their organic traffic without understanding why? The exact figures remain [To be verified], but testimonials from that era suggest the impact was massive.

Have Google's systems really improved since then?

Yes and no. Google has invested heavily in Evergreen Googlebot and improved JavaScript rendering. Today, things are better — but they're not perfect.

The real change? Google communicates more openly about best practices for SSR and hydration. But fundamentally, a pure HTML site is still crawled more efficiently than a full-JavaScript equivalent. The physics of the web hasn't changed: fewer processing steps mean faster indexing.

Practical impact and recommendations

What should you do if your site still relies heavily on client-side JavaScript?

Migrating to a hybrid architecture is the safest recommendation. SSR (Server-Side Rendering) or SSG (Static Site Generation) ensures that critical content appears in the initial HTML, so Googlebot no longer has to wait for JavaScript rendering to see it.

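If you're on Next.js, verify that your important pages use getServerSideProps or getStaticProps (the Pages Router data-fetching functions). On Nuxt or another modern framework, confirm that the equivalent server-side or static rendering mode is enabled for those pages. If you're still on a pure SPA (React without SSR, Vue without Nuxt), you're potentially at risk.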

How can you verify that Google is properly indexing your JavaScript content?

Use the URL inspection tool in Search Console. Compare the page's raw HTML (what view-source or a plain HTTP fetch returns) with the rendered version shown by the tool. If essential content only appears in the rendered version, you're dependent on the JavaScript queue, and therefore on potential delays.
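The raw-HTML half of this comparison can be scripted. The sketch below uses only Python's standard library and assumes you have already fetched the page source as a string. The names `visible_text` and `phrases_missing_from_raw_html` are hypothetical helpers, not part of any Search Console API.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only text that sits outside script/style blocks.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def phrases_missing_from_raw_html(raw_html: str, critical_phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the un-rendered HTML text."""
    text = visible_text(raw_html).lower()
    return [phrase for phrase in critical_phrases if phrase.lower() not in text]
```

Any phrase this returns (an H1, a product name, a key paragraph) exists only after JavaScript runs, so it depends on the rendering queue. The match is a simple case-insensitive substring test, so treat the result as a warning signal, not a verdict.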

Also run crawls with Screaming Frog with JavaScript disabled. Anything that disappears in this mode is invisible to a basic crawler. Even if Googlebot performs better, it's a warning signal.
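The same idea applies to internal links. As a rough sketch, assume you have saved two snapshots of a page: the raw HTML, and the rendered HTML (for example, copied from a browser's DevTools). The script below lists the links that exist only after rendering. `collect_links` and `links_requiring_javascript` are hypothetical helper names, and this is not how Screaming Frog works internally.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.add(value)


def collect_links(html: str) -> set[str]:
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return collector.hrefs


def links_requiring_javascript(raw_html: str, rendered_html: str) -> set[str]:
    """Links present in the rendered DOM but absent from the raw HTML."""
    return collect_links(rendered_html) - collect_links(raw_html)
```

A non-empty result means some URLs can only be discovered once the page has been rendered, which is the situation the JavaScript-disabled crawl is meant to reveal.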

What mistakes should you absolutely avoid during a technical overhaul?

Never assume that "Google handles JavaScript now". Even in 2023-2025, certain poorly configured frameworks create indexing problems. Always test systematically before deploying to production.

Also avoid loading critical content through late asynchronous API calls. If your H1, main text, or internal links appear after 2-3 seconds of loading, you're wasting crawl budget and risking partial indexing.

- Prioritize SSR or SSG for strategic pages (categories, product pages, articles)

- Verify that critical content appears in the initial HTML source

- Test indexing with the URL inspection tool in Search Console

- Crawl your site with JavaScript disabled to detect invisible content

- Measure rendering time: if main content appears after 2 seconds, there's a problem

- Implement regular monitoring of indexed pages and their rendered content

- Avoid pure SPAs without SSR/SSG on sites that depend on SEO

❓ Frequently Asked Questions

Does Google index JavaScript better today than in 2015-2016?

Can a 100% JavaScript site still rank well on Google?

Which JavaScript frameworks still cause indexing problems?

Should you abandon JavaScript entirely for SEO?

How can you tell whether your site has a JavaScript indexing problem?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 01/02/2023
