Official statement

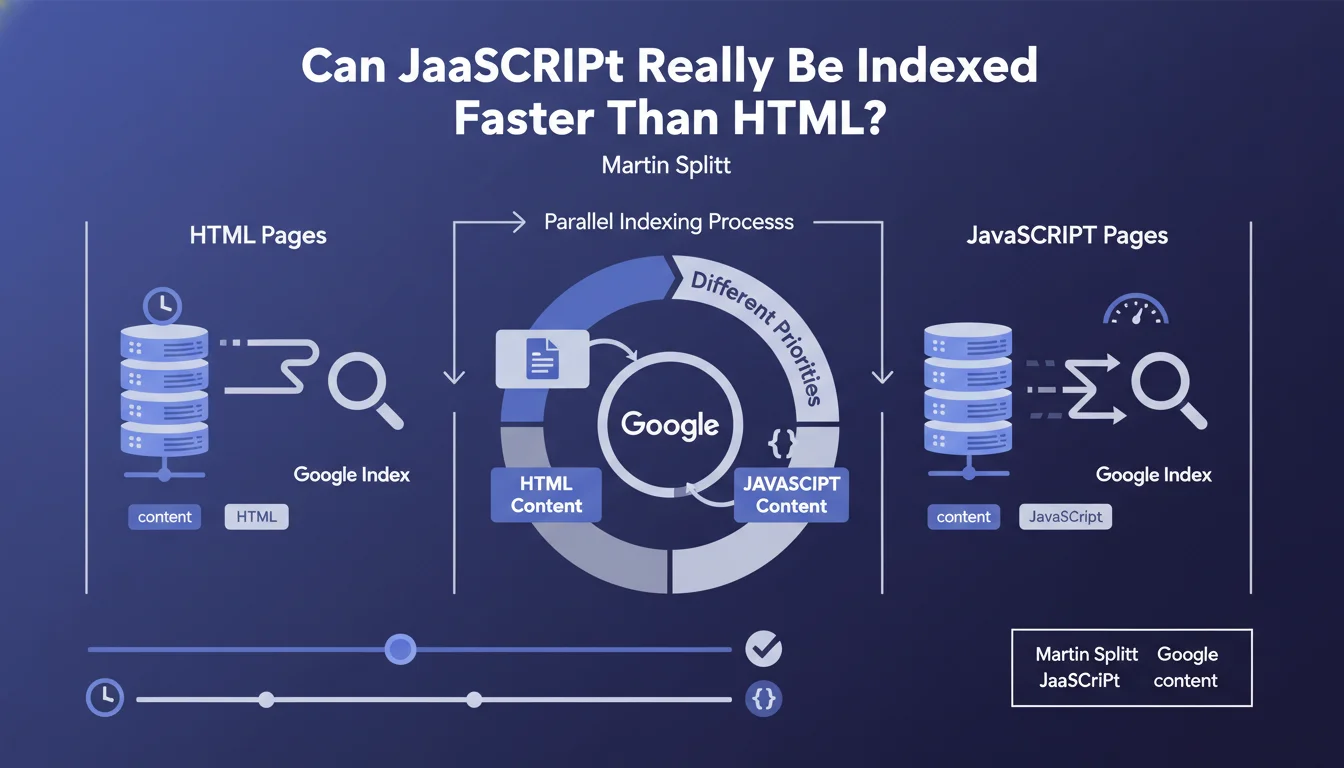

Google claims that certain JavaScript pages can be indexed faster than their static HTML equivalents, thanks to parallel indexing processes with varying priorities based on content type. This statement challenges the common belief that JavaScript systematically slows down indexing.

What you need to understand

Why does this statement contradict established beliefs?

For years, the golden rule was simple: static HTML always comes first. The argument? Google must first crawl the page, then send it to a queue for JavaScript rendering, which inevitably creates a delay.

Martin Splitt introduces a rarely discussed nuance here. Google uses parallel indexing processes that don't necessarily follow a strict sequential order. Some JS pages can be processed with higher priority than HTML pages deemed less critical.

What does this story about parallel processes actually mean?

Google doesn't operate like a queue at a bakery. It manages multiple simultaneous flows: initial crawl, JavaScript rendering, content extraction, indexing. These operations can overlap.

If your JavaScript page's resources are already cached, if the page loads quickly, and if Google has rendered it recently, the gap between HTML and JS can disappear. It can even reverse: a heavy, poorly structured HTML page with cascading redirects can take longer than a well-optimized JS page.
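To make the "parallel flows with priorities" idea concrete, here is a toy model. It says nothing about how Google's pipeline is actually built: the `Page` fields, the priority values, the timings and the two-lane scheduler are all assumptions invented for illustration. It only shows that, once work is prioritized and parallelized, a fast high-priority JS page can finish before a slow low-priority HTML page.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    kind: str        # "html" or "js"
    priority: int    # hypothetical: lower number = picked up sooner
    fetch_s: float   # hypothetical fetch time in seconds, redirects included
    render_s: float  # hypothetical extra rendering time; 0 for static HTML


def finish_times(pages, workers=2):
    """Toy scheduler: `workers` parallel lanes take pages in priority order."""
    lanes = [0.0] * workers  # moment at which each lane becomes free
    done = {}
    for page in sorted(pages, key=lambda p: p.priority):
        lane = min(range(workers), key=lambda i: lanes[i])  # earliest free lane
        lanes[lane] += page.fetch_s + page.render_s
        done[page.url] = lanes[lane]
    return done


# Made-up example: one light JS page, one HTML page slowed by redirects.
pages = [
    Page("/news-app", "js", priority=0, fetch_s=0.5, render_s=2.0),
    Page("/old-catalog", "html", priority=1, fetch_s=6.0, render_s=0.0),
    Page("/archive", "html", priority=2, fetch_s=1.0, render_s=0.0),
]
done = finish_times(pages)
print(done)
```

With these invented numbers, the JS page is done at 2.5 s while the redirect-heavy HTML page is done at 6.0 s. Swap the priorities or the timings and the order flips, which is exactly why the claim cannot be generalized.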

In what cases can this scenario really occur?

Splitt doesn't provide numbers — and that's the problem. We can imagine situations where this claim holds:

- Progressive web applications (PWAs) with service workers and aggressive caching

- JavaScript pages pre-rendered server-side (SSR) or with static rendering (SSG)

- Sites with high crawl budget where Google can afford to parallelize massively

- Content deemed high priority by the algorithm (news, high-traffic products)

- Technically deficient static HTML pages (slow server response time, multiple redirects)

SEO Expert opinion

Is this statement consistent with field observations?

Let's be honest: in 95% of cases, static HTML remains faster to index. Field tests consistently show a delta of several hours, even days, between discovery and complete indexing for pure client-side JavaScript.

Where Splitt is right is on the edge cases. I've seen React sites with SSR indexed in a few hours, while a poorly configured WordPress site with 12 cache plugins dragged on for days. The problem is that Google sells us the exception as a potential generality. Verify with your own data and never take this kind of claim at face value.

What critical nuances are missing from this statement?

First nuance: indexing ≠ ranking. Whether a JS page is indexed quickly says nothing about its ability to rank. The rendered content must be of high quality, and the ranking signals must be present.

Second nuance: priority varies by domain. An authoritative site with a generous crawl budget can afford JavaScript without much suffering. A small site with 50 pages crawled per day? Every second lost matters.

In what contexts does this rule absolutely not apply?

Any site with limited crawl budget must prioritize HTML. The same goes for e-commerce sites with thousands of product pages: you can't bet on Google's goodwill to render 10,000 JS pages quickly.

And this is the sticking point: Splitt talks about "certain pages" without ever defining the percentage. 5%? 20%? Without hard numbers, this statement remains anecdotal: interesting, but not actionable at scale.

Practical impact and recommendations

What should you concretely do with this information?

Don't change your base strategy: static HTML or SSR remains the reference. This statement doesn't authorize you to migrate everything to client-side React and hope Google sorts it out.

However, if you're already on modern JavaScript (Next.js, Nuxt, SvelteKit), optimize the initial render. Make sure critical content is available without waiting for full JS load. Use SSR or SSG for priority pages.
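One rough way to check that critical content is available without JavaScript is to look at the raw HTML response, before any script runs. The sketch below uses only the Python standard library; the function names, the `ssr-check/0.1` user agent and the sample phrases are assumptions made for this example, and a plain substring match is a deliberately crude test.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class _VisibleText(HTMLParser):
    """Collects text nodes while skipping <script> and <style> contents."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._depth:
            self._depth -= 1

    def handle_data(self, data):
        if not self._depth:
            self.chunks.append(data)


def visible_text(html):
    """Return the text a non-rendering client would see, whitespace-normalized."""
    parser = _VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.chunks).split())


def missing_phrases(html, phrases):
    """List the phrases that are absent from the un-rendered HTML."""
    text = visible_text(html).lower()
    return [p for p in phrases if p.lower() not in text]


def fetch_raw_html(url, timeout=10):
    """Download the HTML as served, without executing any JavaScript."""
    req = Request(url, headers={"User-Agent": "ssr-check/0.1"})  # hypothetical UA
    with urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")
```

In practice you would call `missing_phrases(fetch_raw_html(url), ["your H1", "your price"])` on a handful of priority URLs. An empty list suggests the content is server-rendered; a non-empty one means those phrases only appear after client-side rendering.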

- Verify that your critical JS pages are properly rendered server-side (SSR) or generated statically (SSG)

- Analyze delays between crawl and indexing in Search Console (Page indexing report, formerly Coverage)

- Compare indexing performance between HTML and JS pages of equivalent weight

- Optimize server response time and eliminate unnecessary redirects, even on HTML

- Use the Rich Results Test (the standalone Mobile-Friendly Test was retired in December 2023) to verify that Google correctly renders your JS pages
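For the redirect and response-time item in the checklist above, a small audit script is often enough. This is a minimal sketch, assuming HEAD requests are answered the same way as GET on your server; `head_once`, `redirect_chain` and the `redirect-audit/0.1` user agent are names made up for this example.

```python
import http.client
import time
from urllib.parse import urljoin, urlsplit

REDIRECT_CODES = (301, 302, 303, 307, 308)


def head_once(url, timeout=10):
    """Send one HEAD request without following redirects.

    Returns (status, Location header or None, elapsed seconds).
    """
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.netloc, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.netloc, timeout=timeout)
    target = parts.path or "/"
    if parts.query:
        target += "?" + parts.query
    start = time.perf_counter()
    try:
        conn.request("HEAD", target, headers={"User-Agent": "redirect-audit/0.1"})
        resp = conn.getresponse()
        elapsed = time.perf_counter() - start
        return resp.status, resp.getheader("Location"), elapsed
    finally:
        conn.close()


def redirect_chain(url, fetch=head_once, max_hops=10):
    """Follow redirects one hop at a time and record (url, status, seconds)."""
    hops = []
    for _ in range(max_hops):
        status, location, elapsed = fetch(url)
        hops.append((url, status, round(elapsed, 3)))
        if status not in REDIRECT_CODES or not location:
            break
        url = urljoin(url, location)  # Location may be relative
    return hops
```

`len(redirect_chain(url)) - 1` gives the number of redirects before the final response, and the third element of each tuple gives a rough response time per hop. The `fetch` parameter exists so the chain logic can be exercised with a fake fetcher instead of live requests.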

How can you measure if this "variable priority" works in your favor?

Look at your server logs and cross-reference them with Search Console indexing data. If Googlebot frequently crawls your JS pages and indexing follows quickly, you're in the green zone.
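The cross-referencing described above can be sketched as follows. Several assumptions apply: the logs are in the common "combined" format, `indexed_at` is a mapping you assemble yourself (Search Console does not export an "indexed on" date directly, so you might use the first date a URL shows impressions in a Performance export), and `page_kind` is your own labeling of each path as "html" or "js". Matching on the user agent alone does not prove a hit came from Google; verify suspicious IPs with a reverse DNS lookup.

```python
import re
from datetime import datetime
from statistics import median

# Combined log format: ... [timestamp] "METHOD path protocol" status bytes "referer" "user agent"
LOG_RE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def first_googlebot_hits(log_lines):
    """Map each path to the earliest timestamp of a hit claiming to be Googlebot."""
    first = {}
    for line in log_lines:
        m = LOG_RE.search(line)
        if not m or "Googlebot" not in m["ua"]:
            continue
        ts = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z")
        path = m["path"]
        if path not in first or ts < first[path]:
            first[path] = ts
    return first


def median_delay_hours(first_crawl, indexed_at, page_kind):
    """Median hours between first crawl and indexing, grouped by page kind.

    `indexed_at` (path -> timezone-aware datetime) and `page_kind`
    (path -> "html" or "js") are hypothetical inputs you build yourself.
    """
    delays = {}
    for path, crawled in first_crawl.items():
        if path in indexed_at and path in page_kind:
            hours = (indexed_at[path] - crawled).total_seconds() / 3600
            delays.setdefault(page_kind[path], []).append(hours)
    return {kind: median(values) for kind, values in delays.items()}
```

If the median for "js" is consistently close to the median for "html" on pages of comparable weight, your JS pages are probably not being penalized by the rendering step. A large, persistent gap is the signal to move those templates to SSR or SSG.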

Also test with the URL Inspection Tool: if Google renders your page in a few seconds, that's a good sign. If it takes 15 seconds and content is incomplete, you have a problem — regardless of what Splitt says.

What mistakes should you absolutely avoid?

Don't fall into the trap of premature optimization. If your HTML site works well, don't break it to test this theory. The reverse is also true: if you need to refactor into JS for product reasons, don't panic — with best practices (SSR, hydration, caching), you won't necessarily lose indexing.

Also avoid generalizing your observations. What works on an authoritative domain with 10 million pages won't apply to a niche site with 200 articles. Context is king.

❓ Frequently Asked Questions

Should you abandon static HTML in favor of JavaScript?

How can you tell whether your JavaScript pages benefit from this priority?

Is SSR mandatory for a modern JavaScript site?

Does this statement apply to small sites with little crawl budget?

Can you measure the impact of JavaScript on indexing?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 01/02/2023
