Official statement

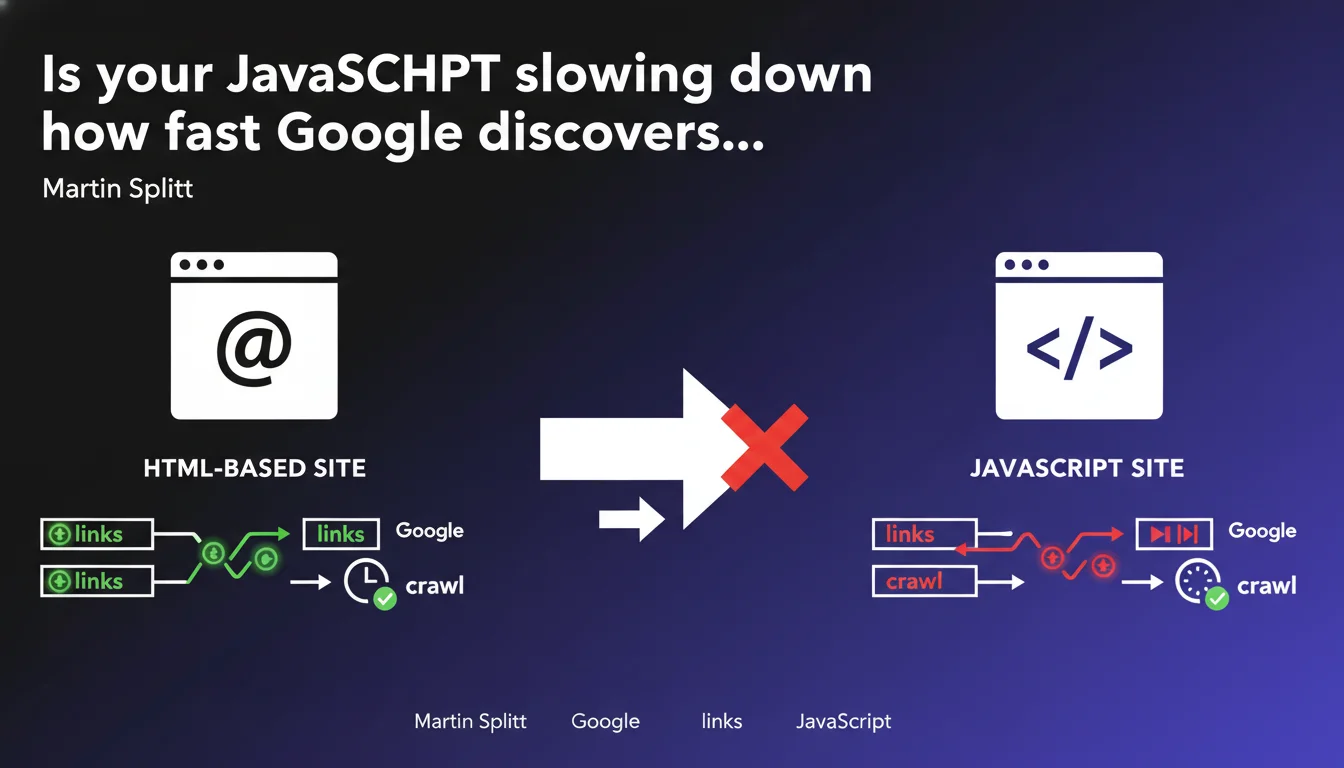

Google confirms that dynamically generated JavaScript links are discovered and crawled significantly more slowly than native HTML links. This delay can directly impact the indexation of your internal pages and compromise their visibility in search results, especially on high-volume websites with thousands of pages.

What you need to understand

What's the concrete difference between an HTML link and a JavaScript link?

A standard HTML link is directly present in the page's source code, accessible from the first load. Googlebot detects it instantly without needing to execute additional code.

A JavaScript link, on the other hand, only exists once Google's rendering engine has loaded and executed the script and then analyzed the modified DOM. This extra step introduces a delay between discovering the page and discovering its internal links.
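To make the difference tangible, here is a minimal sketch in Python that looks only at the markup as served, without executing any script. It is not how Googlebot is implemented; `LinkCollector`, `links_in_raw_html`, and both sample pages are hypothetical names and data made up for this illustration.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects href values of <a> tags present in the markup as served."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        # Only real <a href="..."> elements in the source are recorded.
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def links_in_raw_html(html: str) -> list[str]:
    """Return the links visible without running any JavaScript."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    return parser.links


# Hypothetical page whose link is written directly in the HTML.
STATIC_PAGE = '<nav><a href="/products">Products</a></nav>'

# Hypothetical page whose link only exists after the script has run.
JS_PAGE = """
<nav id="menu"></nav>
<script>
  const a = document.createElement("a");
  a.href = "/products";
  a.textContent = "Products";
  document.getElementById("menu").appendChild(a);
</script>
"""

print(links_in_raw_html(STATIC_PAGE))  # the link is found in the source
print(links_in_raw_html(JS_PAGE))      # nothing is found before rendering
```

Both pages show the same menu to a visitor, but only the first one exposes its link before any rendering takes place.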

Why does this delay pose a problem for SEO?

The later a link is discovered, the later the page it points to is crawled, and with a limited crawl budget it may never be crawled at all. On a site with several thousand pages, this delay cascades: every page reached through a JavaScript link postpones the discovery of the pages it links to in turn, so strategic pages can remain invisible for weeks.
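The cascade can be pictured with a deliberately simple model: each hop on the path to a page adds a delay that depends on the type of link followed. The function name and the per-hop values are assumptions chosen purely for illustration; Google has published no figures, so the numbers are placeholders and not measurements.

```python
def discovery_delay(path_link_types: list[str], html_delay: float, js_delay: float) -> float:
    """Sum the per-hop delays along the chain of links leading to a page.

    path_link_types lists the kind of link followed at each hop,
    for example ["html", "js", "js"].
    """
    delays = {"html": html_delay, "js": js_delay}
    return sum(delays[kind] for kind in path_link_types)


# Placeholder values (in days), NOT real measurements.
HTML_HOP_DAYS = 0.0
JS_HOP_DAYS = 2.0

# A page three clicks deep, reached through HTML links only.
print(discovery_delay(["html", "html", "html"], HTML_HOP_DAYS, JS_HOP_DAYS))

# The same page reached through three JavaScript-injected links.
print(discovery_delay(["js", "js", "js"], HTML_HOP_DAYS, JS_HOP_DAYS))
```

Whatever the real per-hop delay is, the model shows why depth matters: the penalty is paid again at every level of the site that relies on injected links.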

Martin Splitt mentions "comparative experiments" that show a significant gap. Without precise numerical data, it's difficult to quantify the exact impact, but the signal is clear: HTML remains the fast lane for crawling.

Are all JavaScript sites impacted in the same way?

No. A Single Page Application (SPA) built entirely in React or Vue.js experiences this slowdown across its entire structure. Conversely, a site that is mostly HTML with a few localized, dynamically injected links will be less penalized.

The implementation type matters too: server-side rendering (SSR) or static site generation (SSG) can neutralize this problem by including the links as HTML in the server response.

- HTML links are crawled immediately, without a JavaScript rendering step

- JavaScript links require code execution and slow down page discovery

- This delay directly impacts crawl budget and can block the indexation of strategic pages

- SPA sites are more exposed than hybrid HTML/JS sites

- SSR or SSG bypass this problem by serving native HTML

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, completely. For years, we've seen that sites heavily dependent on JavaScript for internal navigation suffer from chronic indexation problems. Pages that are perfectly accessible to users remain orphaned in Google Search Console.

Technical audits regularly reveal entire sections of e-commerce or media sites that Googlebot has never crawled, simply because the links to these pages were injected dynamically. This isn't theory—it's a measurable and recurring drag on performance.

Is Google being straightforward on this topic?

Not really. Martin Splitt mentions "comparative experiments" without ever sharing metrics. How much delay exactly? A few hours? Several days? On what type of site? [Needs verification]

Google has repeated since 2015 that it can handle JavaScript "like a modern browser," but it systematically omits mentioning that this rendering is deferred, resource-limited, and non-prioritized. Result: many sites think they're well indexed when a portion of their pages remains invisible.

In what cases can this slowdown be overlooked?

On a 20-page brochure site with simple linking, the delay will be imperceptible. Likewise, if your crawl budget is abundantly sufficient—typical of high-authority sites with few pages—Google will eventually crawl everything, even with JavaScript.

But once you're dealing with thousands of pages, frequently updated content, or a tight crawl budget, every delay in link discovery counts. In these contexts, relying on JavaScript for internal navigation means accepting a structural penalty.

Practical impact and recommendations

What should you do concretely to limit this slowdown?

Favor native HTML links as much as possible in your main navigation, menus, breadcrumbs, and product or article listings. Avoid injecting these critical elements via client-side JavaScript.

If your site uses a modern framework (Next.js, Nuxt, SvelteKit), enable SSR or SSG so your links are generated server-side and delivered directly as HTML. This is the most effective solution to circumvent the problem without overhauling your tech stack.

For secondary or dynamic links (filters, suggestions, personalized modules), JavaScript is still acceptable—but never for critical indexation paths.
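The principle behind SSR and SSG can be sketched without any framework: the links are written into the HTML string before the response leaves the server. This is a framework-agnostic illustration in Python, not how Next.js, Nuxt, or SvelteKit work internally; `render_nav` and `CATEGORY_LINKS` are hypothetical names and data.

```python
from html import escape


def render_nav(links: list[tuple[str, str]]) -> str:
    """Build a navigation block as plain HTML, with one <a href> per link."""
    items = "".join(
        f'<li><a href="{escape(url, quote=True)}">{escape(label)}</a></li>'
        for url, label in links
    )
    return f"<nav><ul>{items}</ul></nav>"


# Hypothetical category links that would otherwise be injected client-side.
CATEGORY_LINKS = [("/shoes", "Shoes"), ("/bags", "Bags & Cases")]

# The response body already contains the links when it is sent.
page = f"<!doctype html><html><body>{render_nav(CATEGORY_LINKS)}</body></html>"
print(page)
```

Client-side JavaScript can still enhance this menu afterwards; the point is that the `<a href>` elements are in the initial response.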

How can you verify that your internal links are being discovered quickly?

Check the page indexing and crawl stats reports in Google Search Console. If important pages are listed as "Discovered, currently not indexed," Google knows the URL but has not crawled it yet, which is often a sign that the page was found late or is given low crawl priority.

Also run a crawler such as Screaming Frog or OnCrawl twice: once with JavaScript rendering turned off and once with it turned on. Compare the pages discovered in each run: the gap reveals your exposure to this issue.
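Once both crawls have been exported, the comparison is a set difference. The sketch below assumes you have already loaded the two URL lists into Python sets; the function name and the sample URLs are hypothetical, and no particular export format is assumed.

```python
def discovery_gap(urls_without_js: set[str], urls_with_js: set[str]) -> dict:
    """Compare two crawls of the same site.

    Returns the URLs found only when JavaScript is rendered, and their
    share of all URLs found by the JavaScript-enabled crawl.
    """
    js_only = urls_with_js - urls_without_js
    total = len(urls_with_js)
    share = len(js_only) / total if total else 0.0
    return {"js_only_urls": sorted(js_only), "js_only_share": share}


# Hypothetical crawl results for illustration.
crawl_without_js = {"/", "/shoes"}
crawl_with_js = {"/", "/shoes", "/shoes/red", "/shoes/blue"}

report = discovery_gap(crawl_without_js, crawl_with_js)
print(report["js_only_urls"])
print(f"{report['js_only_share']:.0%} of pages depend on JavaScript for discovery")
```

The URLs in `js_only_urls` are the ones worth linking through plain HTML first, starting with those that matter most commercially.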

- Replace JavaScript links with HTML <a href> tags in critical areas (navigation, products, articles)

- Enable SSR or SSG on modern frameworks to serve native HTML

- Regularly audit non-indexed pages in Google Search Console

- Crawl your site with and without JavaScript to measure the discovery gap

- Keep HTML links even if JavaScript interactions enrich the user experience
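For the first item on this checklist, a quick way to find candidates is to scan templates for anchors without a usable `href` and for other elements that carry an `onclick` handler. This is a rough heuristic sketch using Python's standard `html.parser`, with hypothetical class and sample names; an `onclick` on a non-anchor element is only a candidate to review, since buttons that do not navigate are perfectly legitimate.

```python
from html.parser import HTMLParser


class NonCrawlableLinkFinder(HTMLParser):
    """Flags markup that looks like navigation but lacks a crawlable <a href>."""

    def __init__(self) -> None:
        super().__init__()
        self.findings: list[tuple[int, str]] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        line = self.getpos()[0]
        if tag == "a":
            href = (attr_map.get("href") or "").strip()
            # Missing, empty, "#" and javascript: hrefs give a crawler no URL.
            if not href or href == "#" or href.lower().startswith("javascript:"):
                self.findings.append((line, "<a> without a usable href"))
        elif "onclick" in attr_map:
            # Candidate only: review whether this element is used to navigate.
            self.findings.append((line, f"<{tag}> with onclick handler"))


# Hypothetical template fragment.
SAMPLE = """<a href="/shoes">Shoes</a>
<a onclick="go('/bags')">Bags</a>
<a href="javascript:void(0)">Hats</a>
<span onclick="go('/belts')">Belts</span>
"""

finder = NonCrawlableLinkFinder()
finder.feed(SAMPLE)
finder.close()

for line, message in finder.findings:
    print(f"line {line}: {message}")
```

The scan only sees static markup, so it complements the two-crawl comparison rather than replacing it.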

❓ Frequently Asked Questions

Does Google still index JavaScript links, or does it ignore them completely?

Does SSR permanently solve this slowness problem?

Can you mix HTML links and JavaScript links on the same site without risk?

How can I tell whether my site is affected by this slowdown?

Are modern frameworks like React or Vue.js incompatible with good SEO?

Other SEO insights extracted from this same Google Search Central video · published on 01/02/2023
