Official statement

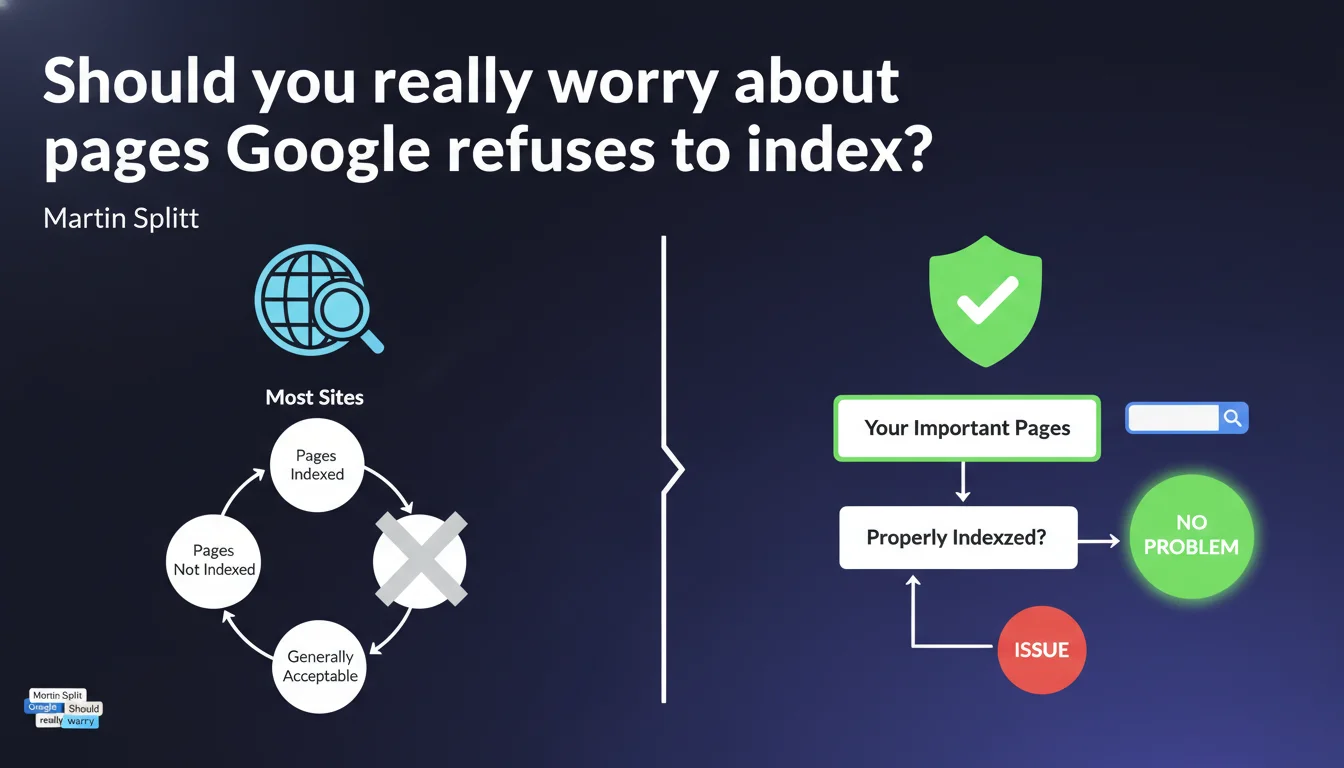

Martin Splitt confirms that it's normal for some pages on a site not to be indexed. What matters is that your strategic pages are well represented in the index. Google doesn't promise to index 100% of your content, and this is expected behavior.

What you need to understand

Why does Google refuse to index certain pages?

Google doesn't guarantee exhaustive indexation of a site. Its algorithm selects what deserves to be stored in its index based on quality criteria, relevance, and available resources.

Duplicate pages, weak content, parameterized URLs, or technical resources with no value for the end user are regularly discarded. Google optimizes its index — it doesn't want to saturate it with pages that add nothing to its search results.

What counts as an "important page" for Google?

Martin Splitt doesn't give a precise definition. But it's clear he means pages that generate qualified traffic, that answer your audience's search intent, or that support your business model.

An in-stock product page, a well-documented blog post, a core category page: that's what counts. A "Legal notice" page or an obsolete sort filter? It doesn't matter if they're missing from the index.

Does this statement challenge our indexation practices?

No, it confirms what practitioners have observed for years. But it also legitimizes a certain passivity from Google regarding the indexation problems many sites experience — and that's where it gets tricky.

Saying "it's normal" doesn't solve the problem of sites seeing their strategic pages excluded without apparent reason. This statement can serve as a smokescreen for real malfunctions.

- According to Google, not every page on a site deserves to be indexed

- Selective indexation is expected behavior, not a bug

- Google prioritizes pages with high added value for users

- The problem arises when strategic pages are excluded without clear justification

- This position can mask failures in the selection algorithm

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes, overall. Technical audits regularly show that 20 to 40% of a site's URLs can be absent from the index — and often, this is justified. Poorly configured faceted filter pages, blog archives without traffic, session URLs... plenty of content that Google legitimately ignores.

But this is where Splitt's argument becomes problematic: strategic pages are frequently excluded without explanation. In-stock product pages, well-researched long-form articles, carefully built category pages... all absent from the index for weeks.

What nuances should be added to this message?

[To verify] Google provides no clear threshold to distinguish a "normal" situation from a real problem. At what percentage of non-indexed pages should you start to worry? Google doesn't say.

Another blind spot: this statement doesn't specify whether pages excluded from the index can still consume crawl budget. If Googlebot regularly visits URLs it will never index, that's a waste of resources that indirectly penalizes the site.

That's the sticking point. Splitt says "it's normal", but offers no levers to control this selection. We're left in the dark about the actual criteria that lead Google to exclude one page rather than another.

In what cases does this rule not apply?

If you run a pure-play e-commerce site with 500 product pages and 200 of them (40%) are missing from the index, that's no longer "normal". It's a direct commercial problem.

The same goes for a news outlet: if today's articles aren't indexed within hours of publication, you lose the freshness battle. Google can call that "acceptable"; for you, it isn't.

Practical impact and recommendations

What should you do concretely to optimize indexation?

Start by identifying your strategic pages: those that generate qualified traffic or revenue. Verify their presence in the index with a site: search or, more reliably, with the URL Inspection tool in Search Console. If they're missing, dig deeper.
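One way to run this check at scale is to compare your list of strategic URLs against a list of URLs you know to be indexed. The Python sketch below assumes you already have both lists (for example from a manual export). The function names, the example.com URLs, and the normalization rules (lowercased host, trailing slash ignored) are illustrative assumptions, not part of any Google tool.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Lowercase scheme and host, drop the fragment and any trailing slash.

    Assumption: on this hypothetical site, /page and /page/ serve the same content.
    """
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    query = f"?{parts.query}" if parts.query else ""
    return f"{parts.scheme.lower()}://{parts.netloc.lower()}{path}{query}"


def missing_strategic_pages(strategic, indexed):
    """Return the strategic URLs that do not appear in the indexed list."""
    indexed_set = {normalize(u) for u in indexed}
    return [u for u in strategic if normalize(u) not in indexed_set]


# Hypothetical data for illustration only.
strategic_urls = [
    "https://example.com/products/blue-widget/",
    "https://example.com/blog/guide",
]
indexed_urls = ["https://EXAMPLE.com/products/blue-widget"]

missing = missing_strategic_pages(strategic_urls, indexed_urls)
print(missing)
```

Any URL this returns is a candidate for a closer look, not proof of a problem: the indexed list is only as reliable as the export it came from.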

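Next, clean up the pages with no value: filters, sorts, useless archives, parameterized URLs. Block them in robots.txt to stop Googlebot from crawling them, or add a noindex directive to keep them out of the index. Don't apply both to the same URL: Googlebot can't see a noindex on a page that robots.txt prevents it from crawling. The less Google crawls weak content, the more it can focus on what matters.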
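Before deploying new robots.txt rules, it is worth checking that they block the low-value sections and nothing else. The sketch below uses Python's standard-library robots.txt parser on a hypothetical rule set. The paths and URLs are assumptions for illustration, and because this parser does plain prefix matching, the example deliberately avoids wildcard rules, which Googlebot may interpret differently.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block internal search results and date archives.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /archive/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules forbid for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


# Hypothetical URLs: two low-value pages and one strategic page.
candidates = [
    "https://example.com/search/red-shoes",
    "https://example.com/archive/2019/05",
    "https://example.com/products/blue-widget",
]

blocked = blocked_urls(ROBOTS_TXT, candidates)
print(blocked)
```

If a strategic page shows up in the blocked list, the rule is too broad and should be narrowed before it goes live.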

What mistakes should you avoid when facing non-indexed pages?

Don't assume "Google indexes everything". That era is over. Don't force indexation of thousands of pages via bloated XML sitemaps — you risk diluting the signal.
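As an illustration of a lean sitemap, the sketch below builds one from a short list of strategic URLs only, using Python's standard library. The URL list and the function name are hypothetical, and a real sitemap would usually carry more metadata. The only fixed element is the namespace defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap (as a string) listing only the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical strategic pages; filters, sorts, and archives are left out.
strategic_urls = [
    "https://example.com/products/blue-widget",
    "https://example.com/blog/guide",
]

sitemap_xml = build_sitemap(strategic_urls)
print(sitemap_xml)
```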

Also avoid believing that each excluded page is a disaster. If your "Cookie Policy" page isn't indexed, nobody searches for it. Focus your efforts on pages that deserve to be found.

How can you verify that your site complies with this logic?

Audit the indexation rate in Search Console. Compare the number of pages indexed with the number of pages submitted. If more than 50% of submitted pages are missing from the index, analyze the exclusion reasons in the coverage report.
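The arithmetic behind this check is simple enough to script. The sketch below computes the share of submitted pages missing from the index and applies the 50% rule of thumb mentioned above. The function names are hypothetical, the counts are illustrative, and the threshold is this article's heuristic, not a figure published by Google.

```python
def indexation_gap(submitted: int, indexed: int) -> float:
    """Return the fraction of submitted pages that are not indexed."""
    if submitted <= 0:
        raise ValueError("submitted must be a positive number of pages")
    return 1 - indexed / submitted


def needs_review(submitted: int, indexed: int, threshold: float = 0.5) -> bool:
    """Flag a site whose gap exceeds the threshold (default: the 50% heuristic)."""
    return indexation_gap(submitted, indexed) > threshold


# Illustrative counts: 1,000 submitted with 400 indexed, then 500 with 300.
large_gap = needs_review(1000, 400)
shop_gap = indexation_gap(500, 300)
shop_flagged = needs_review(500, 300)
print(large_gap, round(shop_gap, 2), shop_flagged)
```

The second case mirrors the e-commerce example from earlier: 200 missing pages out of 500 is a 40% gap, which this threshold does not flag even though it matters commercially. The percentage is a starting point, and which pages are missing matters more than how many.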

Then identify the excluded pages that shouldn't be. If they're well crawled, well linked, with unique and relevant content, but still missing from the index, there's a problem — and Splitt's statement doesn't solve it.

- List the site's strategic pages and verify their presence in the index

- Analyze exclusion reasons in the Search Console coverage report

- Clean up URLs with no added value (filters, sorts, archives, duplicates)

- Optimize internal linking to priority pages

- Check the content quality of excluded pages: are they really relevant?

- Monitor indexation rate changes month after month

- Don't force indexation of secondary pages via mass submissions

❓ Frequently Asked Questions

What percentage of non-indexed pages is considered normal?

How can you force indexation of an important page that is missing from the index?

Do non-indexed pages consume crawl budget?

Does Google index pages with many backlinks more easily?

Should you delete the pages that Google doesn't index?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 20/08/2024
