Official statement

What you need to understand

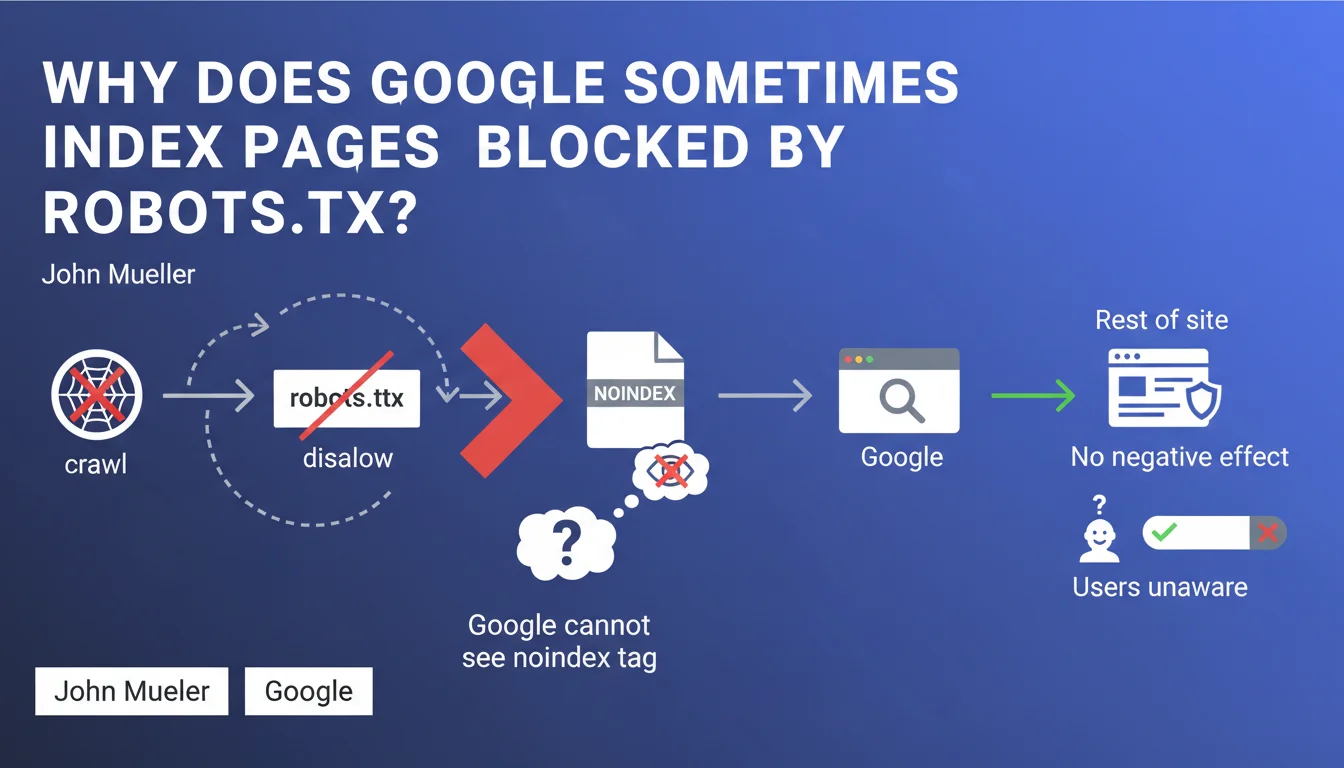

This statement highlights a technical paradox often overlooked by SEO practitioners. When a page is blocked in the robots.txt file via a Disallow directive, Googlebot cannot crawl it.

However, if that same page contains a meta noindex tag in its HTML code, Google will never be able to detect it since it doesn't access the page's content. Result: the page can appear in the index, typically without a description or content snippet.

This phenomenon occurs particularly when external links point to these blocked pages. Google detects the URL through these backlinks and may decide to index it, even without being able to analyze its actual content.

- Robots.txt blocks crawling, not necessarily indexing

- The noindex tag needs to be read during crawling to be effective

- Pages indexed this way typically appear without a snippet or description

- This phenomenon has no negative impact on the rest of the site according to Google

- Most users never encounter these pages in search results

SEO Expert opinion

This explanation from Google is perfectly consistent with what we've observed in the field for years. SEO audits regularly reveal URLs blocked in robots.txt that nevertheless appear in the index with the typical mention "No information is available for this page."

However, we must nuance the claim that this has "no negative effect." In some cases, a large volume of poorly managed pages can dilute the crawl budget and create confusion in Google's understanding of the site's architecture.

Google's recommendation is correct but incomplete: beyond making pages "crawlable + indexable," you must also consider internal link management to these pages and their potential canonicalization.

Practical impact and recommendations

- Audit your robots.txt: identify all blocked URLs and check if they appear in the index via a "site:yourdomain.com" search

- Never block a page in robots.txt that you want to deindex: leave it accessible so Googlebot can read the noindex tag

- Use the right combination: to deindex = noindex without robots.txt; to not crawl but allow indexing = robots.txt only

- Remove internal links pointing to pages you don't want crawled or indexed

- Check external backlinks: even when blocked in robots.txt, pages with numerous inbound links can be indexed

- Use Search Console to request temporary removal of incorrectly indexed URLs while you fix the problem

- Prefer HTTP 410 (Gone) or 404 status codes for permanently deleted pages rather than robots.txt blocking

- Document your indexation strategy: create a clear decision matrix (index/deindex vs. crawl/don't crawl)

In summary: Managing indexation requires a thorough understanding of Google's crawling and indexing mechanisms. A configuration error between robots.txt and noindex directives can have lasting consequences on visibility.

These technical decisions require in-depth expertise in SEO architecture and continuous monitoring. Given the complexity of these configurations and the risks of errors with potentially significant consequences, many sites choose to work with a specialized SEO agency that can implement a coherent indexation strategy tailored to each project's specific needs.

💬 Comments (0)

Be the first to comment.