Official statement

Other statements from this video 9 ▾

- □ Pourquoi Google n'indexe-t-il jamais l'intégralité d'un site web ?

- □ Faut-il vraiment attendre que Google indexe vos pages ?

- □ Comment Googlebot ajuste-t-il sa vitesse de crawl en fonction des performances de votre serveur ?

- □ Comment diagnostiquer les problèmes serveur qui freinent le crawl de Google ?

- □ Les problèmes de serveur ne touchent-ils vraiment que les très gros sites ?

- □ Pourquoi Google refuse-t-il d'indexer vos pages en statut 'Découvert' ?

- □ Google peut-il vraiment ignorer des pans entiers de votre site à cause d'un pattern de faible qualité ?

- □ Le maillage interne suffit-il vraiment à faire indexer vos pages découvertes ?

- □ Faut-il vraiment se préoccuper des pages non indexées par Google ?

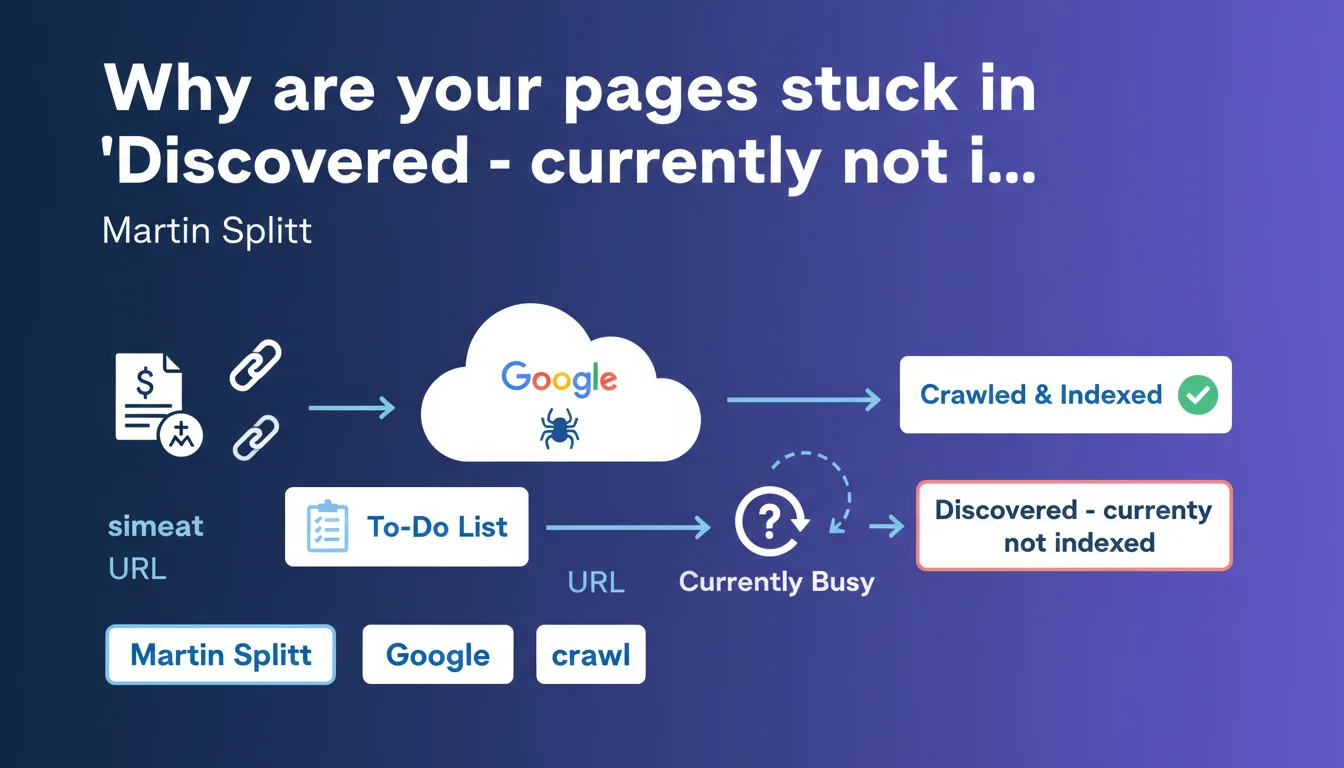

When Google displays 'Discovered - currently not indexed', it simply means Googlebot has spotted the URL but hasn't had time to crawl it yet — it was busy elsewhere. This isn't a penalty signal, just a matter of prioritization in the crawl queue.

What you need to understand

What does this status actually mean?

The 'Discovered - currently not indexed' status appears in Search Console when Googlebot has identified a URL — via a sitemap, internal link, or external link — but hasn't visited it yet.

This URL enters a crawl queue. Google has nothing against it; it simply hasn't allocated resources to process it yet. Martin Splitt clarifies that it's a matter of priority: other URLs have captured the bot's attention before yours.

Does this mean there's a technical problem?

No. This status is not a penalty, nor an indicator of poor-quality content. It says nothing about the intrinsic value of your page.

It's a transitional state. A URL may remain in this state for a few days, a few weeks — or even longer if your site doesn't have a generous crawl budget or if the page is deemed low priority by Googlebot's algorithm.

What factors influence this delay?

Google doesn't publicly detail its prioritization criteria, but we know several elements come into play: the site's popularity, update frequency, perceived quality of already-indexed content, and page depth in the site structure.

A site with low internal PageRank, few backlinks, and under-crawled content will see this status persist longer than a site with strong authority and a steady publication pace.

- This status is not a sanction — it's a queue indicator.

- Googlebot discovers the URL but hasn't visited it yet due to lack of time or priority.

- The delay depends on crawl budget and Google's internal URL prioritization.

- No quality signal is directly associated with this status — for now.

SEO Expert opinion

Is Google's explanation complete?

Let's be honest: it's technically correct, but incomplete. Saying Googlebot was "busy with other URLs" amounts to admitting there's a priority hierarchy — but Google never details which criteria influence this prioritization.

In practice, we observe that some URLs in 'Discovered' remain blocked for months, even on sites with a theoretically comfortable crawl budget. If it were truly just a matter of "haven't had time yet", why would the delay sometimes be so long? [Needs verification] with detailed crawl data, but the hypothesis of an implicit quality or relevance filter shouldn't be ruled out.

When does this status become problematic?

If a handful of secondary pages remain 'Discovered', it's not dramatic. However, if you notice that strategic pages — new product sheets, important blog articles — stagnate in this state for several weeks, there's a crawl budget or internal prioritization issue.

Concretely? This may signal defective internal linking, a lack of freshness signals, or simply a site too vast for the allocated crawl budget. Google won't say it explicitly, but it's up to you to diagnose it.

Should you force crawling of these URLs?

Requesting indexation via Search Console may speed up the process for a few critical URLs, but it's not a scalable solution. If you have hundreds of pages in 'Discovered', manually forcing indexation is unrealistic.

Better to work on structural signals: improve internal linking, boost the popularity of orphaned pages, optimize crawl frequency through robots.txt and strategic sitemaps. If Google is deprioritizing your content, it's often because your architecture isn't giving it the right clues.

Practical impact and recommendations

What should you do if too many pages remain 'Discovered'?

First step: crawl budget audit. Check crawl reports in Search Console and identify URLs unnecessarily monopolizing resources — duplicate pages, parametric URLs, obsolete content.

Next, strengthen internal linking toward strategic pages. If an important page is 4-5 clicks away from the homepage, Googlebot will see it as secondary. Move it up in the hierarchy, add contextual links from pages with high internal PageRank.

What critical mistakes must you avoid?

Don't bombard Google with manual indexation requests for hundreds of URLs. This solves nothing structurally and risks diluting the effectiveness of your legitimate requests.

Also avoid submitting massive XML sitemaps containing thousands of unimportant URLs. Google will discover them but won't crawl them — you're unnecessarily saturating the queue. Better to use a targeted sitemap focused on your priority content.

How can you verify your optimization is working?

Track the evolution of 'Discovered' status in Search Console over 4 to 6 weeks. If the number of pending URLs gradually decreases, your crawl budget optimization is bearing fruit.

Also use log analysis tools (Oncrawl, Botify, Screaming Frog Log Analyzer) to observe the actual frequency of Googlebot visits and adjust your priorities accordingly.

- Identify strategic URLs stuck in 'Discovered' for more than 2 weeks

- Audit crawl budget and disindex unnecessary content (noindex tag, robots.txt)

- Strengthen internal linking toward priority pages

- Submit a targeted XML sitemap, not an exhaustive one

- Monitor server logs to confirm increased Googlebot crawl

- Avoid mass manual indexation requests

❓ Frequently Asked Questions

Combien de temps une URL peut-elle rester en 'Découvert - actuellement non indexé' ?

Faut-il supprimer les URLs en 'Découvert' du sitemap ?

Demander une indexation manuelle accélère-t-il le processus ?

Ce statut signifie-t-il que ma page est de mauvaise qualité ?

Peut-on forcer Google à crawler plus rapidement un site ?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 20/08/2024

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.