Official statement

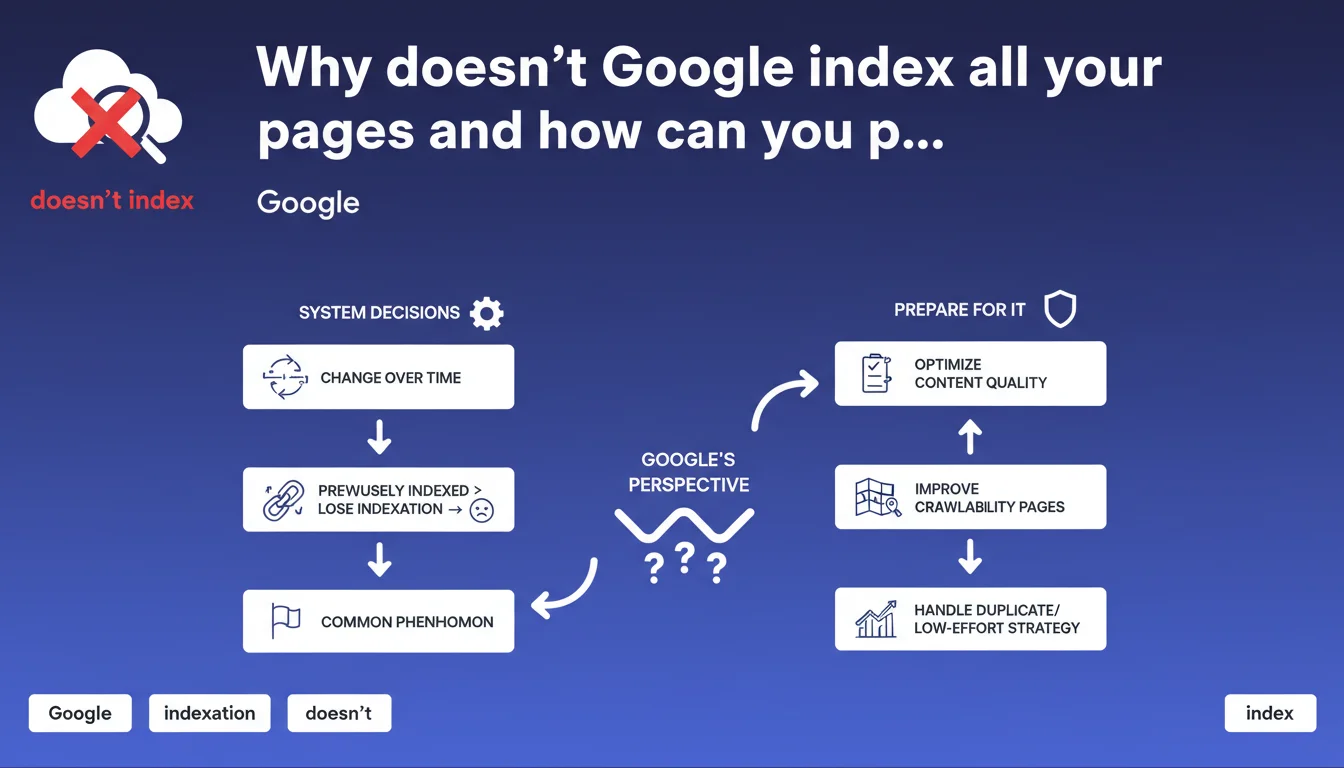

Google states it does not guarantee the indexation of any page, even previously indexed ones. This decision relies on its algorithms and can change at any time. The gradual de-indexation of certain URLs should be considered normal, not an anomaly.

What you need to understand

What does this Google statement really mean?

Google does not commit to indexing your entire site. Each page is evaluated according to internal criteria — content quality, relevance, user experience, technical signals — and can be rejected or removed from the index at any time.

This position formalizes what many were already observing: indexation is not an acquired right. A page indexed today can disappear tomorrow if Google determines it no longer adds value to its index.

Why do some pages lose their indexation?

The reasons are multiple and rarely explicit. Google refers to system decisions without detailing the exact parameters. Possible causes include duplicate content detection, low user engagement, a poor quality assessment, or simply an algorithmic re-evaluation.

The intentional vagueness of this communication makes it hard to diagnose precisely why a particular page disappears. It's frustrating, but Google makes no apology for it.

Is this really a common phenomenon or something exceptional?

Google insists on the normal nature of de-indexation. It's not a bug, it's a feature. In other words: if you notice a gradual erosion of your indexed pages, it's not necessarily a sign of a penalty.

This also puts into perspective the obsessive focus on the total number of indexed pages. What matters is the quality and performance of the pages that remain in the index.

- Selective indexation: Google chooses what it indexes based on its own criteria for added value

- Acknowledged volatility: a page indexed today can disappear without notice or detailed justification

- Normalization of loss: partial de-indexation is presented as a natural evolution, not a malfunction

- Absence of guarantee: no promise of indexation, even for quality content

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Absolutely. For years, we've seen unexplained indexation fluctuations: sites losing 20 to 40 percent of their indexed URLs for no apparent reason, with no correlation to an algorithm update or identifiable technical issue.

What's changing is that Google is formalizing it. Before, we desperately sought a cause: misconfigured robots.txt, failing canonicals, exhausted crawl budget. Today, Google tells us that sometimes it's just an algorithmic decision. One point remains to be verified: whether this "normalization" also serves to cover real index dysfunctions.

What nuances should be added to this position?

Let's be honest: this communication benefits Google. It relieves the search engine of responsibility when massive indexation issues occur. "It's normal" becomes an easy answer when thousands of pages disappear without explanation.

But be careful — just because Google normalizes de-indexation doesn't mean you should give up. A sudden drop in indexation remains a warning signal to investigate. Don't blame everything on "the capricious algorithm." Check your technical fundamentals first.
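As a rough illustration of "checking the technical fundamentals", the sketch below tests three of the usual suspects for a single page: a robots.txt block, a `noindex` directive in a robots meta tag, and a canonical pointing to another URL. The function name `check_page` and the sample inputs are hypothetical, and Python's standard `urllib.robotparser` does not replicate Google's own robots.txt matching in every detail, so treat the result as a first filter rather than a verdict.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Collects robots meta directives and the canonical URL from an HTML document."""

    def __init__(self):
        super().__init__()
        self.directives = set()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        if tag == "meta" and name in ("robots", "googlebot"):
            content = attrs.get("content") or ""
            self.directives.update(
                d.strip().lower() for d in content.split(",") if d.strip()
            )
        elif tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            self.canonical = attrs.get("href")


def check_page(url, html, robots_txt):
    """Return a list of technical issues that could explain a missing page.

    Hypothetical helper: `html` and `robots_txt` are passed in as text so the
    sketch stays independent of how the documents are fetched.
    """
    issues = []

    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    if not robots.can_fetch("Googlebot", url):
        issues.append("blocked by robots.txt")

    parser = RobotsMetaParser()
    parser.feed(html)
    if "noindex" in parser.directives or "none" in parser.directives:
        issues.append("noindex directive in meta robots")
    if parser.canonical and parser.canonical.rstrip("/") != url.rstrip("/"):
        issues.append(f"canonical points elsewhere: {parser.canonical}")

    return issues
```

An empty list only means these three checks passed. It says nothing about HTTP headers such as `X-Robots-Tag`, server errors, or rendering problems, which would need separate checks.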

In which cases does this rule not really apply?

Strategic pages (homepage, main categories, key product pages) should logically remain indexed if they are technically sound and aligned with E-E-A-T criteria. If they are not, there's a real problem.

The "normality" invoked by Google mainly concerns low-value pages: deep pagination, filter facets, minor content variants. There, yes, de-indexation is understandable. But if your pillar content disappears, don't hide behind this excuse.

Practical impact and recommendations

What should you do concretely in the face of this reality?

First step: identify your critical pages. Not all your URLs are equal. Focus on those that generate traffic, conversions, or structure your semantic architecture. These pages must absolutely remain indexed.

Next, strengthen their quality signals: solid internal linking, updated content, flawless technical performance, and positive engagement signals. The more useful and high-performing Google judges a page to be, the less likely it is to disappear from the index.

What mistakes must you absolutely avoid?

Don't artificially inflate your index with low-value pages. Google makes it clear: not everything deserves to be indexed. Creating 10,000 mediocre pages won't help you — on the contrary, it risks diluting the perception of your site's overall quality.

Don't panic at every minor fluctuation either. If you lose 5 percent of indexed pages on a 50,000-URL site, it's probably natural algorithmic cleanup. Focus on significant variations and on pages with high business impact.

How do you monitor and anticipate these indexation variations?

Set up regular monitoring via Search Console. Track the evolution of the number of indexed pages, but especially identify which pages are disappearing. A tool like Screaming Frog combined with Search Console exports lets you cross-reference data.
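The cross-referencing described above can be sketched with plain set operations. This is a minimal example, assuming the crawl export has an `Address` column and the Search Console exports have a `URL` column. Both column names, and the helper names `read_urls` and `compare_snapshots`, are assumptions to adapt to your own files.

```python
import csv
import io


def read_urls(csv_text, column="URL"):
    """Read one column of URLs from CSV text into a set.

    The column name is an assumption; adjust it to match the export.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row[column].strip() for row in reader if row.get(column)}


def compare_snapshots(crawled, indexed_before, indexed_now):
    """Cross-reference a crawl with two snapshots of indexed URLs."""
    lost = indexed_before - indexed_now
    return {
        # Still on the site, but no longer in the index: worth a closer look.
        "dropped": sorted(lost & crawled),
        # No longer in the index and no longer found by the crawl.
        "gone_from_site": sorted(lost - crawled),
        # Found by the crawl but absent from both snapshots.
        "never_indexed": sorted(crawled - indexed_before - indexed_now),
    }
```

In practice you would feed `read_urls` the contents of the exported files, for example `read_urls(open("crawl.csv", encoding="utf-8").read(), "Address")`, where the file name is hypothetical. The `dropped` list is the one to review first, since those pages still exist on the site.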

Document your observations. If an entire category of pages is systematically de-indexed, it may signal that this type of content doesn't meet Google's standards. Adjust your editorial strategy accordingly.
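One way to spot a systematically de-indexed category is to group URLs by their first path segment and compute the share of each group that dropped out. The sketch below assumes that the first path segment is a reasonable proxy for a content category, which will not hold for every site, and the function names are illustrative.

```python
from collections import Counter
from urllib.parse import urlsplit


def section_of(url):
    """Return the first path segment of a URL, or '/' for the root."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    return "/" + segments[0] if segments else "/"


def deindexation_rate_by_section(all_urls, dropped_urls):
    """Share of URLs in each section that are no longer indexed.

    `all_urls` is every URL you expect to be indexed; `dropped_urls` is the
    subset that disappeared. Sections are an assumption based on URL paths.
    """
    totals = Counter(section_of(u) for u in all_urls)
    dropped = Counter(section_of(u) for u in dropped_urls)
    return {section: dropped[section] / totals[section] for section in totals}
```

A section whose rate sits far above the site-wide average is a candidate for the editorial adjustment mentioned above, while a uniform rate across sections points more toward a site-level cause.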

- Map your strategic pages and verify their indexation every month

- Prioritize optimizing high-ROI content to maximize its quality signals

- Proactively clean up low-value pages (noindex, deletion, improvement)

- Track index evolution in Search Console with regular exports

- Don't confuse normal fluctuation with structural technical issues

- Investigate any sudden drop (>20 percent) before attributing it to the algorithm
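The thresholds mentioned in this section (a 5 percent loss treated as probable cleanup, a drop above 20 percent treated as a signal to investigate) can be turned into a small triage helper. This is only a sketch: the intermediate "monitor" band between the two thresholds is an assumption added for illustration, and the cutoffs are the article's rules of thumb, not values published by Google.

```python
def classify_drop(indexed_before, indexed_now, minor=0.05, major=0.20):
    """Classify the change between two indexed-page counts.

    Returns (relative_drop, label). `minor` and `major` default to the
    5 percent and 20 percent rules of thumb; the "monitor" label for the
    band in between is an assumption of this sketch.
    """
    if indexed_before <= 0:
        raise ValueError("indexed_before must be a positive count")
    drop = (indexed_before - indexed_now) / indexed_before
    if drop > major:
        return drop, "investigate"
    if drop > minor:
        return drop, "monitor"
    return drop, "normal fluctuation"


# The 50,000-URL example: losing 2,500 pages is a 5 percent drop.
print(classify_drop(50_000, 47_500))
```

The helper compares totals only. A small overall drop can still hide the loss of a few high-value pages, so it complements a URL-level comparison and does not replace it.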

❓ Frequently Asked Questions

Can Google de-index pages for no apparent reason?

Should you worry if the number of indexed pages gradually decreases?

How can you find out which pages Google has chosen not to index?

Can you force Google to index a specific page?

Does this policy apply to all types of sites?

Other SEO insights extracted from this same Google Search Central video · published on 26/06/2025
