Official statement

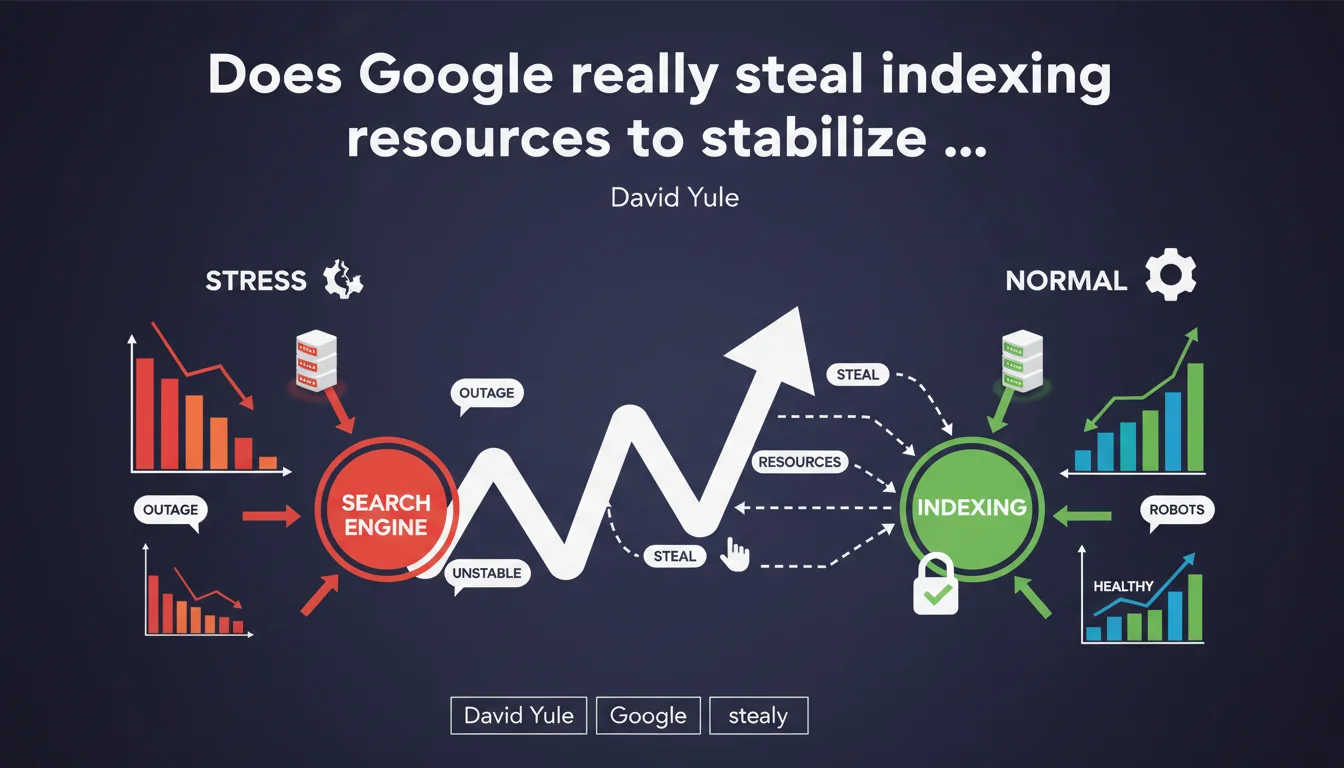

Google confirms that during major incidents, its SRE team can shift resources between different Search components. Concretely, if indexing is running normally but ranking is under stress, Google can temporarily sacrifice indexing resources to stabilize results. This may explain some of the crawl anomalies observed during periods of instability.

What you need to understand

What does this "resource redistribution" between services actually mean?

Google Search is not a monolithic system. It's an ecosystem of interdependent services: crawling, indexing, ranking, serving, freshness, Knowledge Graph, and more. Each one consumes computing resources (CPU, memory, bandwidth).

During a major incident (a partial outage, an unexpected overload, a critical bug), some components can come under stress while others run normally. Google's SRE (Site Reliability Engineering) team can then decide to drain resources from healthy systems to reinforce those in difficulty.

Why is this practice important for SEO practitioners?

This statement provides an official explanation for anomalies observed for years. Have you ever noticed phases where your crawl budget collapsed for no apparent reason? Where freshly published pages took 3 days instead of 3 hours to be indexed? Where position fluctuations seemed disconnected from your actions?

Some of these anomalies may stem from internal trade-offs at Google. If the ranking system is overloaded (for example, during a gradually rolled-out algorithm update), Google can reduce crawling to free up computing power. In that case you haven't done anything wrong: Google is juggling its resources.

Which components can be affected by these redistributions?

All Search systems are potentially affected. Crawling is often the first to be sacrificed: it's not critical for immediate user experience. Delayed indexation (pages discovered but not processed immediately) can be slowed down. Signal reprocessing (PageRank recalculation, semantic embedding updates) can be suspended.

On the other hand, SERP serving (responding to user queries) is the absolute priority. Google will always prefer sacrificing crawling to degrading SERP display speed.

- Crawling and indexation can be temporarily slowed during major incidents to stabilize other components

- These trade-offs are invisible from the webmaster side — no alerts are sent via Search Console

- Unexplained crawl budget variations may result from these redistributions rather than a problem on your site

- SERP serving always remains the priority: Google will not sacrifice user experience to crawl more pages
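The trade-off described in this section can be pictured with a toy model. The sketch below is purely illustrative: the service names, priority order, and capacity figures are assumptions made for the example, and nothing here describes how Google's infrastructure actually schedules resources. It only shows the general idea of draining the least critical service to cover a deficit in a more critical one.

```python
from dataclasses import dataclass


@dataclass
class Service:
    name: str
    priority: int      # lower number = more critical (hypothetical ordering)
    allocated: float   # capacity units currently assigned
    demand: float      # capacity units currently needed


def rebalance(services: list[Service]) -> dict[str, float]:
    """Cover deficits of critical services by draining less critical ones.

    Illustrative only: priorities and numbers are assumptions,
    not a description of Google's real scheduler.
    """
    alloc = {s.name: s.allocated for s in services}
    by_priority = sorted(services, key=lambda s: s.priority)
    for needy in by_priority:
        deficit = needy.demand - alloc[needy.name]
        # Walk donors from least critical to most critical.
        for donor in reversed(by_priority):
            if deficit <= 0:
                break
            if donor.priority <= needy.priority:
                continue  # never drain a service that is at least as critical
            taken = min(alloc[donor.name], deficit)
            alloc[donor.name] -= taken
            alloc[needy.name] += taken
            deficit -= taken
    return alloc


# Hypothetical incident: ranking needs 110 units but only has 80.
services = [
    Service("serving", priority=0, allocated=100, demand=100),
    Service("ranking", priority=1, allocated=80, demand=110),
    Service("indexing", priority=2, allocated=60, demand=60),
    Service("crawling", priority=3, allocated=40, demand=40),
]
print(rebalance(services))
```

In this made-up scenario, crawling drops from 40 to 10 units while ranking receives the 110 it needs, and serving is left untouched. From the outside, the only visible symptom would be a quieter Googlebot.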

SEO Expert opinion

Is this statement consistent with on-the-ground observations?

Absolutely. For years, SEO practitioners have noticed inexplicable phases of crawl slowdowns, often simultaneous across multiple unrelated sites. Logs show Googlebot suddenly becoming less active, then resuming its normal pace a few hours or days later — without any changes being made on the site side.

David Yule's revelation finally validates a widely shared hypothesis in the SEO community: these variations aren't always tied to your individual crawl budget, but to infrastructure decisions made by Google to maintain overall Search stability. Let's be honest — it's frustrating when you're waiting for critical content to be indexed, but it makes sense from an engineering perspective.

What limitations should be placed on this statement?

Google speaks of "major incidents," not routine operations. Don't conclude that every minor crawl fluctuation is due to resource redistribution. Most of the time, if your crawl drops sharply, you should first suspect your own infrastructure: degraded response times, 5xx errors, misconfigured robots.txt blocks.

Another important nuance: Google doesn't specify which criteria trigger these trade-offs. When exactly does it consider an incident "major"? How long do these redistributions last? Which services are prioritized in which situation? These points remain to be verified: the statement is vague on the thresholds and governance of these decisions.

Can this practice negatively impact a site's SEO?

Yes, but temporarily. If Google reduces crawling for 24-48 hours to stabilize a critical system, your new pages or important updates will be indexed with a delay. For a news site or e-commerce platform with flash sales, this can represent real traffic loss.

But, and this is crucial, this slowdown is neither targeted nor punitive. Your site isn't specifically being hit: the reduction applies across the board rather than to your site in particular. Once the incident is resolved, crawling should resume its normal pace and queued pages get processed, with no lasting impact on rankings expected.

Practical impact and recommendations

How can I distinguish a Google incident from a problem on my site?

First reflex: check whether the crawl drop is isolated or widespread. Consult SEO communities (forums, Twitter, Slack groups) to see if other professionals are noticing the same thing at the same time. If so, it's probably a Google trade-off. If not, look at your own infrastructure first.

Second check: analyze your server logs. A crawl drop linked to a Google incident shows up as fewer Googlebot hits, but remaining requests have normal 200 codes and standard response times. If you see 5xx errors, timeouts, or latency spikes, the problem is on your end.
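The log check above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the log is in combined format with the request time in seconds appended as the last field (for example nginx's `$request_time`), and the function names and thresholds (`googlebot_daily_stats`, `diagnose`, a 50% hit drop, 1% of 5xx, 0.5 s median) are hypothetical values to tune for your own site.

```python
import re
from collections import defaultdict
from statistics import median

# Assumed format: combined log format with the request time in seconds
# appended as the last field. Adapt the pattern to your own logs.
LINE_RE = re.compile(
    r'\S+ \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] "[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)" (?P<seconds>\d+(?:\.\d+)?)$'
)


def googlebot_daily_stats(lines):
    """Return {day: {"hits", "error_rate", "median_seconds"}} for Googlebot hits."""
    per_day = defaultdict(lambda: {"statuses": [], "seconds": []})
    for line in lines:
        match = LINE_RE.match(line.strip())
        if not match or "Googlebot" not in match["agent"]:
            continue  # skip unparsable lines and other user agents
        bucket = per_day[match["day"]]
        bucket["statuses"].append(int(match["status"]))
        bucket["seconds"].append(float(match["seconds"]))
    stats = {}
    for day, bucket in per_day.items():
        hits = len(bucket["statuses"])
        errors = sum(1 for status in bucket["statuses"] if status >= 500)
        stats[day] = {
            "hits": hits,
            "error_rate": errors / hits,
            "median_seconds": median(bucket["seconds"]),
        }
    return stats


def diagnose(baseline, current, max_error_rate=0.01, max_median_seconds=0.5):
    """Rough heuristic with hypothetical thresholds; tune them to your site."""
    if current["hits"] >= 0.5 * baseline["hits"]:
        return "no significant crawl drop"
    if (current["error_rate"] > max_error_rate
            or current["median_seconds"] > max_median_seconds):
        return "crawl drop with server-side symptoms: check your infrastructure"
    return "crawl drop with healthy responses: possibly external to your site"
```

A typical use would be to compute `stats = googlebot_daily_stats(open("access.log"))` and then compare a normal day with the suspicious one via `diagnose(stats[normal_day], stats[suspect_day])`. Matching on the user-agent string alone also counts spoofed bots, so for a serious audit the requesting IPs should be verified as genuine Googlebot (for example via reverse DNS) before drawing conclusions.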

What should I concretely do during these incidents?

Let's be pragmatic: you can't do anything to influence Google's infrastructure decisions. You won't receive any alert or message in Search Console. These redistributions are invisible to webmasters and resolved internally by SRE teams.

The only sensible action is to maintain your infrastructure impeccably at all times. That way, if Google temporarily reduces crawling, you're certain it's not your fault — and as soon as resources are restored, your site will be crawled efficiently without friction.

How can I minimize the impact of these redistributions on my business?

Anticipate. If you're publishing critical or time-sensitive content, never count on immediate indexation. Plan a safety margin of 24-48 hours between publication and when you absolutely need the page to be indexed.
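The safety margin is simple date arithmetic, but it is easy to forget in an editorial calendar. A minimal sketch, where `latest_publish_time` is a hypothetical helper name and the 48-hour default is the upper bound of the margin suggested above:

```python
from datetime import datetime, timedelta


def latest_publish_time(must_be_indexed_by: datetime,
                        margin_hours: int = 48) -> datetime:
    """Latest publication time that still leaves the chosen indexing margin."""
    return must_be_indexed_by - timedelta(hours=margin_hours)


# Hypothetical flash sale starting on 29 November 2024 at midnight.
sale_start = datetime(2024, 11, 29, 0, 0)
print(latest_publish_time(sale_start))       # conservative 48-hour margin
print(latest_publish_time(sale_start, 24))   # minimum 24-hour margin
```

The margin is a planning buffer, not a guarantee: it only reduces the odds that a temporary crawl slowdown lands between publication and the moment the page has to be findable.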

Use priority indexation tools when truly necessary: manual submission via Search Console for strategic pages, well-structured XML sitemaps, strong internal linking from your most-crawled pages. These signals guarantee nothing during an incident, but they increase your chances.

- Monitor your server logs to distinguish a Googlebot slowdown from a technical problem on your infrastructure

- Consult SEO communities during anomalies to confirm whether other sites are impacted

- Maintain optimal server response times (< 200ms) to maximize your crawl when Google resources return

- Plan your critical publications with 48-hour margins rather than betting on immediate indexation

- Use Search Console manual submissions only for truly strategic pages

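- Keep your XML sitemaps well structured, with accurate lastmod dates, so important pages are easy to discover (Google states that it ignores the sitemap priority field, so don't rely on it to control crawl order)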
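As an illustration of the sitemap recommendation, here is a minimal sketch that builds a sitemap with `lastmod` dates using only the Python standard library. The function name `build_sitemap` and the example URLs are assumptions made for the example. Pages are written in the order the caller provides them; whether that order has any influence on crawl order is not confirmed by the statement.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build a sitemap from (url, lastmod) pairs, lastmod as 'YYYY-MM-DD'."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = lastmod
    # tostring returns bytes when an explicit encoding is given.
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True).decode("utf-8")


# Hypothetical strategic pages, most important first.
print(build_sitemap([
    ("https://example.com/flash-sale", "2024-03-10"),
    ("https://example.com/category/shoes", "2024-03-08"),
]))
```

Keeping `lastmod` accurate matters more than the file's cosmetics: a date that changes on every build without a real content change gives crawlers nothing useful to work with.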

❓ Frequently Asked Questions

How long do these resource redistributions last?

Are all sites affected in the same way?

Can I ask Google not to reduce crawling on my site during these incidents?

Do these redistributions affect the ranking of my already indexed pages?

How does Google decide which services to sacrifice first?

Other SEO insights extracted from this same Google Search Central video · published on 03/10/2024
