Official statement

Other statements from this video (9)

- Why does Googlebot report soft 404s on your empty geolocated pages?

- Is geolocated cloaking really acceptable to Google?

- Does Google consider showing national content by default to be cloaking?

- Does Googlebot really crawl your site from several countries?

- Should you wait before judging the impact of a Google algorithm update?

- Why is log file analysis essential for large sites?

- Why does an empty page destroy your user experience and your SEO?

- How can you deliver an experience consistent with user expectations without risking a cloaking penalty?

- Should you really compare the actual state of your pages before and after a traffic drop?

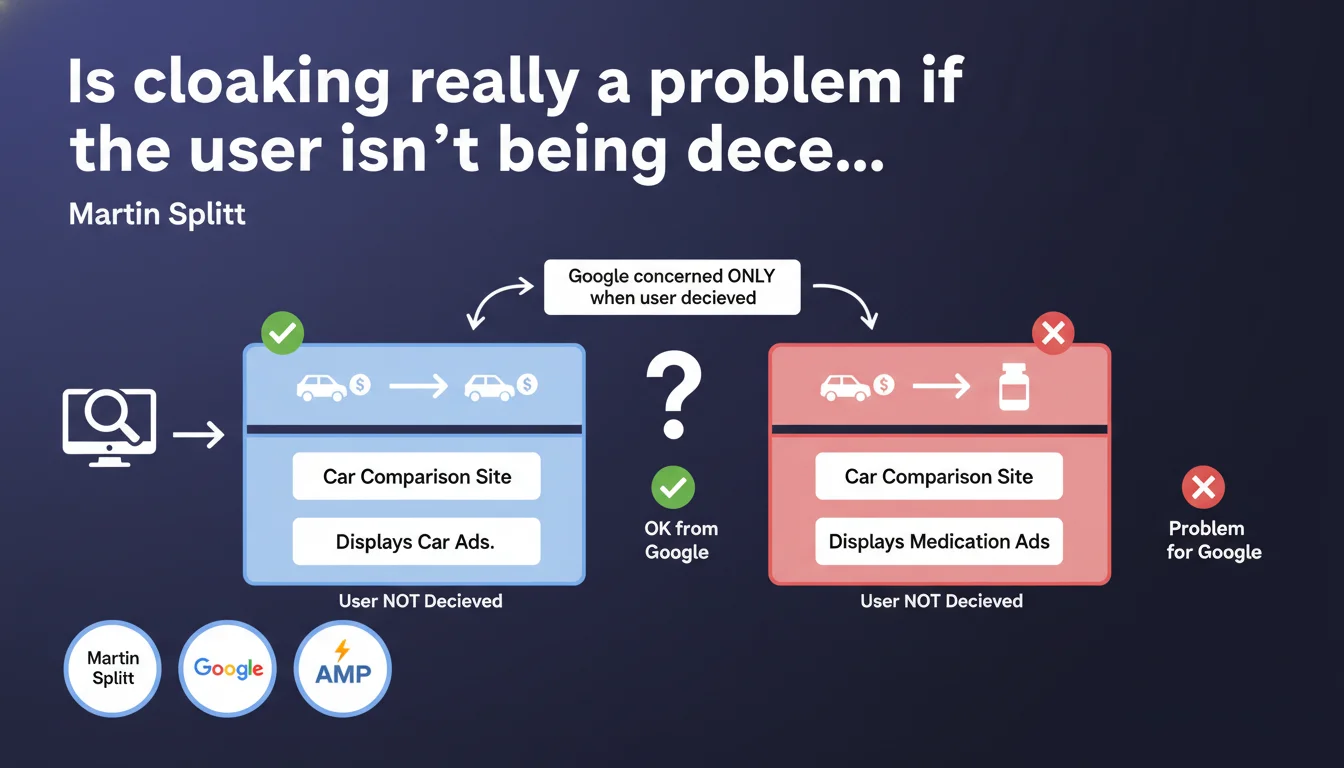

Google clarifies that cloaking only becomes a problem when it deceives the end user. A site that displays radically different content from what the SERP announces (for example, promising a car comparison but delivering pharmaceutical ads) crosses the line. The intent to deceive the user matters more than the technique used.

What you need to understand

What does Google really mean by "user deception"?

The classic technical definition of cloaking (showing different content to the bot and to the user) is no longer enough, on its own, to qualify something as a violation. Google is refocusing on user experience: if someone searches for "car comparison", clicks a result, and lands on a page drowning in pharmaceutical ads, that is deception.

Martin Splitt's example is deliberately exaggerated. It illustrates a complete gap between what the search result promises and what the page delivers. What matters is the disconnect between the user's legitimate expectation (based on the title, snippet, and meta description) and what they actually receive.

Does this statement change the historical definition of cloaking?

Not really. Google has always penalized cloaking, but this clarification shifts the focus. Previously, the documentation emphasized the difference in content between bot and human. Here, the emphasis is on malicious intent: deliberately deceiving the user for clicks, abusive monetization, or spam.

In concrete terms, a site that slightly adapts its display based on the user-agent for legitimate technical reasons (mobile compatibility, rendering optimization) should not be at risk, provided that the user actually receives what the SERP promised.

What are the limitations of this vague definition?

Splitt does not give a quantitative threshold. How far can content diverge before it becomes "deceptive"? Does a car comparison site that also displays pharmaceutical ads (but not exclusively) cross the line? The answer remains in a gray area.

This gray zone leaves Google room for interpretation. With no numerical criteria, the spam team judges case by case. For practitioners, this means documenting the legitimate reason behind any rendering divergence between bots and users.

- Cloaking is only penalized if it misleads the user between SERP promise and page reality

- Legitimate technical adjustments (mobile rendering, transparent A/B testing) should not cause problems

- Google does not provide a precise threshold — the boundary between optimization and deception remains unclear

- The intent to harm user experience is at the heart of Google's decision

SEO Expert opinion

Is this statement consistent with penalties observed in the field?

Yes and no. Manual actions for cloaking do target sites where the gap between promise and delivery is glaring: pharma spam disguised as legitimate content, sneaky redirects to third-party sites, doorway pages. In every one of these scenarios, the user gets tricked.

But this is where it gets tricky: sites are also penalized for minor technical divergences, with no obvious intent to deceive. [To verify]: sanctions for slight differences in JavaScript rendering have been reported even when the final content is nearly identical. Google says it targets deception, but enforcement in the field is not always that nuanced.

What about A/B testing and personalization practices?

Splitt does not explicitly mention A/B testing or personalization by user segment. Yet these practices inherently display different content depending on the visitor. If the bot sees version A and 50% of users see version B, is that cloaking?

In theory, no, as long as each version matches what the SERP promises. In practice, documenting the approach and checking that Googlebot can access the different variants (with the URL Inspection tool in Search Console) is an essential precaution. Some A/B testing tools always serve the same version to the bot, which is risky if that version does not reflect what most of your traffic sees.

Why does Google remain so vague about technical criteria?

Keeping a broad definition allows Google to adapt without having to revise its documentation every time a new spam trick emerges. If the rule were "no more than 10% difference in text content between bot and user", spammers would calibrate their pages to 9.9%. By remaining vague, Google preserves a margin of discretionary authority.

For practitioners, this means no automated checklist guarantees immunity. Each case has to be judged with common sense: if users feel deceived when they land on the page, Google may penalize it. If the technical adaptation genuinely serves the experience (accessibility, performance), the risk is minimal. [To verify]: no official documentation lists tolerated thresholds; everything rests on human interpretation at Google.

Practical impact and recommendations

How can I verify that my site doesn't fall into deceptive cloaking?

First step: systematically compare what Googlebot sees with what a standard user sees. Use the URL Inspection tool in Search Console (live test) to see the page as Google renders it. Then compare it with a standard incognito session, on both desktop and mobile.

If you notice significant gaps (missing content blocks, different titles, elements hidden from the bot), find out why. A JavaScript carousel that fails to load for the bot is not serious if the essential content is present. A promotional block shown only to humans is borderline. An entire site that switches themes between bot and user crosses the red line.
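To make that comparison less subjective, you can diff the visible text of both renderings. The Python sketch below assumes you have already exported each version as plain text, one content block per line (for example, copied from the rendered HTML in the URL Inspection tool and from your incognito session); the function names are hypothetical. It returns a similarity ratio plus the blocks present on only one side. Google publishes no threshold, so treat the ratio as a triage aid, not a verdict.

```python
import difflib


def visible_blocks(text: str) -> list[str]:
    """Split rendered page text into non-empty, whitespace-normalised blocks (one per line)."""
    return [" ".join(line.split()) for line in text.splitlines() if line.strip()]


def compare_renderings(bot_text: str, user_text: str) -> dict:
    """Compare the text Googlebot saw with the text a user saw.

    Returns an overall similarity ratio (0.0 to 1.0) plus the blocks that
    appear on only one side, so a human can judge *why* they differ.
    """
    bot_blocks = visible_blocks(bot_text)
    user_blocks = visible_blocks(user_text)
    # SequenceMatcher works on any sequence of hashable items, here whole blocks.
    ratio = difflib.SequenceMatcher(None, bot_blocks, user_blocks).ratio()
    return {
        "similarity": round(ratio, 3),
        "bot_only": [b for b in bot_blocks if b not in user_blocks],
        "user_only": [b for b in user_blocks if b not in bot_blocks],
    }


# Usage with made-up page text: the user sees one extra promotional block.
bot = "Best car comparison 2022\nCompare fuel costs\nReview: family SUVs"
user = bot + "\nSpring promo: 10% off"
print(compare_renderings(bot, user))
# {'similarity': 0.857, 'bot_only': [], 'user_only': ['Spring promo: 10% off']}
```

Here the promotional block shown only to humans surfaces in `user_only`, which is the borderline case described above; an empty `bot_only` list means the bot is not being shown anything the user cannot see.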

What mistakes should be absolutely avoided?

Never serve rich editorial content to the bot and a mostly ad-filled or empty page to the user. This is the classic pharma spam pattern: the indexed page discusses general health, while the user lands on a dubious online drugstore. Google can surface this gap through manual reviews and, possibly, through behavioral signals (a very high bounce rate, immediate returns to the SERP).

Also avoid conditional redirects based on user-agent. Redirecting Googlebot to one URL and users to another is pure and simple cloaking, even if both pages cover the same subject. If you must redirect, do it for everyone, bot included.
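A quick way to spot user-agent-based redirects is to request the same URL with a crawler user-agent and with a browser user-agent, then compare where each request lands. This is a minimal sketch using only Python's standard library; the user-agent strings and function names are illustrative assumptions. It only catches branching on the User-Agent header: a server that checks Googlebot's IP addresses will not be fooled by a spoofed header, so the URL Inspection tool remains the authoritative view.

```python
from typing import Callable
from urllib.request import Request, urlopen

# Illustrative user-agent strings to compare; adjust to your needs.
USER_AGENTS = {
    "googlebot": "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
    "browser": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
               "(KHTML, like Gecko) Chrome/120.0 Safari/537.36",
}


def final_url(url: str, user_agent: str) -> str:
    """Follow redirects with the given User-Agent and return the URL we end up on.

    Raises urllib.error.HTTPError on 4xx/5xx responses.
    """
    request = Request(url, headers={"User-Agent": user_agent})
    with urlopen(request, timeout=10) as response:
        return response.url  # final URL after urllib followed any redirects


def redirect_divergence(
    url: str,
    resolve: Callable[[str, str], str] = final_url,
    agents: dict[str, str] = USER_AGENTS,
) -> dict[str, str]:
    """Return {agent_name: landing_url} if agents land on different URLs, else {}.

    `resolve` is injectable so the check can be tested without network access.
    """
    landings = {name: resolve(url, ua) for name, ua in agents.items()}
    return landings if len(set(landings.values())) > 1 else {}
```

An empty result means every agent landed on the same URL; a non-empty result lists each landing page so you can see where the paths diverge.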

What if I legitimately personalize my content?

Document your approach. If you adapt content by geolocation (for example, an e-commerce site with regional inventory), make sure Googlebot sees a default version consistent with the majority of your traffic. If needed, use hreflang annotations to signal regional variants, and rel=canonical to consolidate near-duplicate versions.

For A/B testing, favor tools that serve the bot the same kind of variant a first-time user would get. If your tool modifies the DOM client-side after the initial render, check that Googlebot captures the final version. An occasional, minor gap is not a problem; a systematic, structural gap is.
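As an illustration of that setup, here is a sketch of a regional fallback plus the hreflang annotations for the variants. The region codes, URLs, and function names are hypothetical; the key point is that a visitor (or crawler) whose location matches no known region gets the default version rather than an empty page.

```python
from html import escape
from typing import Optional


def pick_region(country: Optional[str], available: set[str], default: str) -> str:
    """Serve the visitor's regional version if one exists, else the default.

    Visitors and crawlers whose location maps to no known region (or is
    unknown) get the default version, which should match most of your traffic.
    """
    return country if country in available else default


def hreflang_links(variants: dict[str, str], default_url: str) -> str:
    """Build <link rel="alternate" hreflang=...> tags for each variant plus x-default."""
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}">'
        for code, url in sorted(variants.items())
    ]
    tags.append(f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}">')
    return "\n".join(tags)


# Usage with hypothetical regional URLs.
print(pick_region("US", {"FR", "BE"}, default="FR"))  # FR
print(hreflang_links(
    {"fr-FR": "https://example.com/fr-fr/", "fr-BE": "https://example.com/fr-be/"},
    default_url="https://example.com/",
))
```

Remember that hreflang annotations must be reciprocal: each regional page should list every variant, including itself.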
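If you build the split yourself, one way to avoid singling out the bot is to make the assignment depend only on a visitor identifier, never on the user-agent. The sketch below hashes a hypothetical `visitor_id` (for example, a first-party cookie value) together with the experiment name; SHA-256 bucketing is an assumption here, not how any particular testing tool works.

```python
import hashlib
from typing import Sequence


def assign_variant(visitor_id: str, experiment: str, variants: Sequence[str]) -> str:
    """Deterministically bucket a visitor into one of `variants`.

    The user-agent is deliberately NOT an input: Googlebot is bucketed by the
    same rule as every human visitor, so no version is reserved for it.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


# The same visitor always gets the same variant for a given experiment.
print(assign_variant("visitor-42", "hero-banner", ["A", "B"]))
```

Because Googlebot clears cookies between page loads, it will be bucketed like a fresh visitor on each fetch, which is the intended behavior: it sees whatever a new user would see.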

- Regularly compare Googlebot rendering (Search Console) vs actual user (incognito)

- Eliminate any conditional redirects based on user-agent to different URLs

- Verify that the content promised in the SERP snippet matches the actual page

- Document the technical reasons for any rendering divergence (accessibility, performance)

- Test A/B testing tools to ensure Googlebot sees a representative version

- Avoid displaying massive amounts of ads or off-topic content only to users
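The snippet-versus-page check in this list can be roughed out automatically. This sketch uses Python's built-in `html.parser` to pull the `<title>`, the meta description, and the visible body text, then measures how many of the "promised" words actually appear on the page. It is a crude heuristic under stated assumptions: Google may rewrite titles and snippets in the SERP, so the page's own title and description are only a proxy for what users see, and a "significant word" here simply means four or more ASCII letters. The class and function names are hypothetical.

```python
import re
from html.parser import HTMLParser


class SnippetExtractor(HTMLParser):
    """Collect <title>, meta description, and visible body text from an HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description = ""
        self.body_text: list[str] = []
        self._in_title = False
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False
        elif tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._in_title:
            self.title += data
        elif not self._skip_depth:
            self.body_text.append(data)


def words(text: str) -> set[str]:
    """Lower-cased ASCII words of 4+ letters: a crude stand-in for 'significant terms'."""
    return set(re.findall(r"[a-z]{4,}", text.lower()))


def promise_coverage(html: str) -> float:
    """Share of title/description words that also appear in the page's visible text."""
    parser = SnippetExtractor()
    parser.feed(html)
    parser.close()  # flush any buffered trailing text
    promised = words(parser.title + " " + parser.description)
    if not promised:
        return 0.0
    delivered = words(" ".join(parser.body_text))
    return len(promised & delivered) / len(promised)
```

A score near 1.0 means the page body repeats what its title and description announce; a score near 0.0 on a page that ranks for those terms is worth a manual look.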

The boundary between technical optimization and deceptive cloaking rests on intent and user impact. If the gap between what the SERP promises and what the page delivers is significant, the risk of sanction exists. In complex cases — advanced personalization, heavy JavaScript architectures, multivariate testing — it may be wise to seek specialized support to audit rendering divergences in detail and secure compliance with Google's expectations.

Frequently Asked Questions

Can a site display different content on mobile and desktop without risking a cloaking penalty?

Is client-side A/B testing considered cloaking?

Can you hide certain ad blocks from Googlebot to improve crawl budget?

How does Google detect cloaking in practice?

Can a site penalized for cloaking recover its rankings?

From the same video

Other SEO insights extracted from this same Google Search Central video, published on 13/12/2022.
