Official statement

Other statements from this video 11 ▾

- □ Comment exploiter l'export massif de données Search Console vers BigQuery pour optimiser votre stratégie SEO ?

- □ Google récompense-t-il vraiment la qualité du contenu indépendamment de sa méthode de production ?

- □ L'automatisation du contenu est-elle vraiment considérée comme du spam par Google ?

- □ L'IA générative impose-t-elle de nouvelles règles d'évaluation du contenu selon Google ?

- □ Faut-il vraiment se soucier du qui, comment et pourquoi dans la création de contenu ?

- □ Le tableau de bord de statut de Google change-t-il vraiment la donne pour les professionnels SEO ?

- □ Pourquoi Google ajoute-t-il l'Expérience aux critères EAT pour évaluer la qualité des contenus ?

- □ Rel=canonical : pourquoi Google a-t-il mis à jour sa documentation officielle ?

- □ Pourquoi Google publie-t-il une galerie officielle des éléments visuels de la recherche ?

- □ Pourquoi Google publie-t-il un guide spécifique sur les liens destiné aux designers web ?

- □ Le système d'avis produits de Google s'étend : quelles langues sont concernées et qu'est-ce que ça change pour vous ?

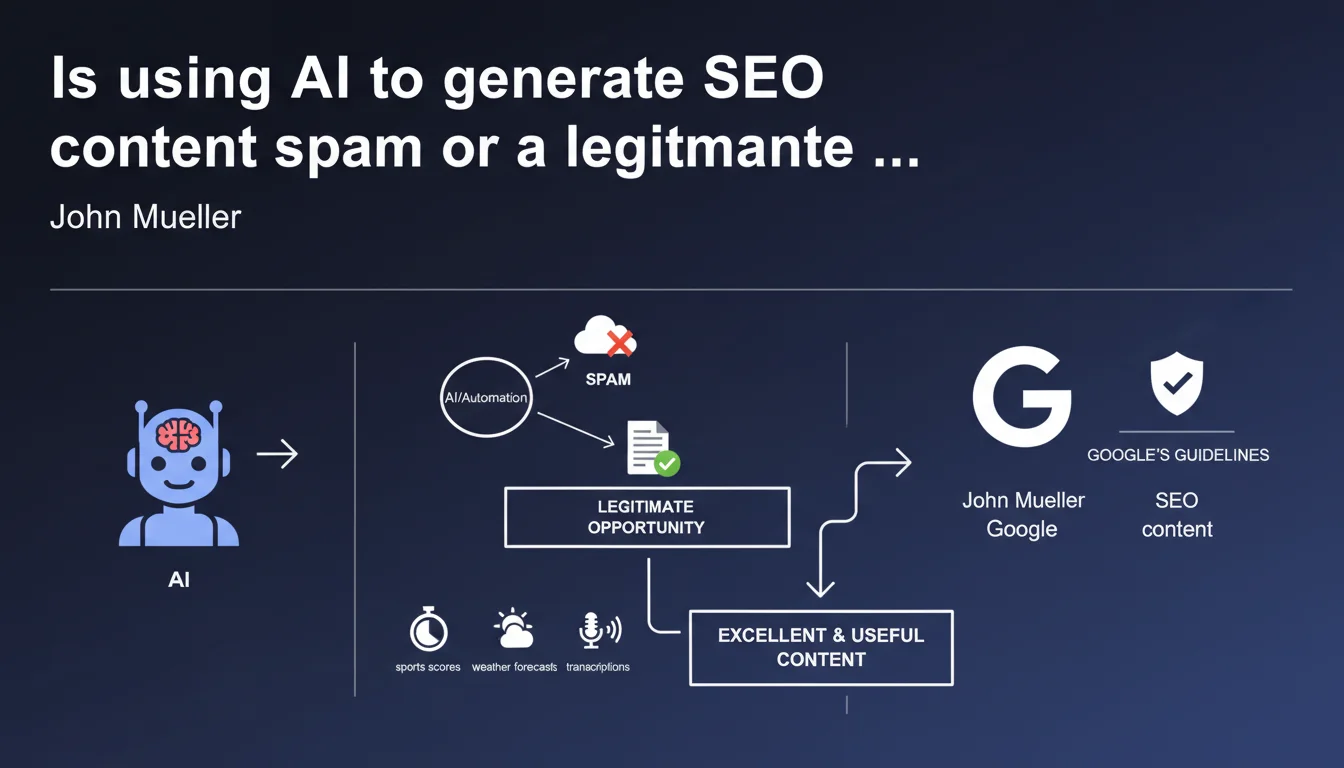

Google confirms that automation and AI are not inherently spam. The use of automated tools to produce useful content (sports scores, weather forecasts, transcriptions) has always been accepted. As long as the generated content provides real value to users, AI is compatible with Google's guidelines.

What you need to understand

Has Google really changed its stance on AI?

No, there's no dramatic reversal. John Mueller reminds us of a principle that has existed for years: automation is not automatically spam. Sites that automatically generate sports results, weather bulletins or video transcriptions are not penalized — because they meet a user need.

What's changing is the context. With the explosion of ChatGPT and similar tools, Google needs to clarify its position in the face of fears that the web will be flooded with mass-generated content. The nuance is simple: it's not the tool that matters, it's the result.

What distinguishes acceptable AI content from spam?

The added value for the end user. A match score updated in real time via automation? Useful. A page generated in bulk to target a keyword with generic content and no real value? Spam.

Google isn't trying to detect whether you used GPT-4 or wrote by hand. The engine evaluates whether the content answers the search intent, whether it's trustworthy, whether it brings expertise and originality. The source tool is secondary.

- Useful automation has existed forever: structured data, aggregators, report generators

- AI is an accelerator, not a magical ranking distributor

- EEAT guidelines apply: experience, expertise, authoritativeness, trustworthiness, regardless of the writing tool

- Google wants content created for users, not for engines

Does this statement absolve AI content creators of responsibility?

Absolutely not. Mueller says that AI can help create excellent content — not that it will by default. Editorial responsibility remains complete.

If you publish AI content that is poorly verified, riddled with factual errors, or exists only to rank for keywords, you're at risk. Google's ranking systems (notably the helpful content system) can spot patterns of weak content regardless of how it was produced.

SEO Expert opinion

Is this position consistent with what we observe in practice?

Yes and no. On paper, Google's position is logical: the tool matters little, only the result counts. But in practice, we observe entire AI-generated sites ranking very well, until a manual action or an algorithm update sends them plummeting.

The problem is that Google provides no clear metrics to distinguish a "good" use of AI from a "bad" one. What makes content "useful"? How much expert editing does a GPT output need before it is acceptable? [To verify]: there is no public data allowing us to draw this line.

Doesn't AI risk standardizing content and harming web diversity?

It's a real risk that Google never mentions openly. If everyone uses the same prompts on the same models, we end up with massive content homogenization. LLMs recycle what already exists — they rarely create anything genuinely original.

For an SEO expert, this means you must actively differentiate. Inject proprietary data, client case studies, unique editorial angles. Otherwise, you're producing "acceptable" content that will be lost in the noise. And Google will always favor expert content that stands out.

Can you rely on this statement to plan your content strategy?

With caution. John Mueller often speaks in general terms, but the spam and quality teams operate by stricter criteria. What is "acceptable" according to the guidelines may still be downranked if Google's ranking systems, which are evaluated against quality rater feedback, judge your content weak.

In practice: don't hide behind this statement to justify sloppy content. AI is a productivity tool, not a shield against penalties. Invest in human review, factual enrichment and editorial originality.

Practical impact and recommendations

How do you use AI without risking a penalty?

The basic rule: never publish raw AI content without human intervention. Even if GPT generates correct text, you must verify it, enrich it, fact-check it. Add concrete examples, recent data, an editorial point of view.

Prioritize AI for repetitive or structured tasks: meta descriptions, paraphrasing, data summaries. For strategic pages (money pages, pillar content), human expertise remains essential.

What mistakes should you absolutely avoid?

Don't scale up in bulk. Publishing 500 AI articles in a month on long-tail keywords is a red flag. Google detects abnormal publication patterns, especially if the content is generic.

Also avoid republishing AI content across multiple domains (cross-site duplicate content), or creating satellite site networks fed by the same prompts. These practices look too much like automated spam — exactly what Google wants to fight.
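To catch cross-site duplication before it goes live, one option is to compare drafts against each other. The sketch below is only an illustration: it assumes word-shingle Jaccard similarity as the comparison method, the 0.5 threshold is arbitrary, and the function names and example URLs are hypothetical. It says nothing about how Google itself detects duplicate content.

```python
import re


def shingles(text: str, size: int = 3) -> set:
    """Return the set of overlapping word n-grams (shingles) in a text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        # Very short text: treat the whole text as a single shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str, size: int = 3) -> float:
    """Jaccard similarity of two texts: shared shingles / all shingles."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


def flag_near_duplicates(pages: dict, threshold: float = 0.5) -> list:
    """Return (url_a, url_b, score) for every pair at or above the threshold.

    `pages` maps a URL to its body text. The threshold is an arbitrary
    assumption and should be tuned on your own content.
    """
    urls = sorted(pages)
    flagged = []
    for i, first in enumerate(urls):
        for second in urls[i + 1:]:
            score = jaccard(pages[first], pages[second])
            if score >= threshold:
                flagged.append((first, second, round(score, 2)))
    return flagged


# Hypothetical drafts planned for three different domains.
drafts = {
    "a.example/guide": "the quick brown fox jumps over the lazy dog",
    "b.example/guide": "the quick brown fox jumps over the lazy dog",
    "c.example/news": "completely unrelated sentence about tomorrow's weather",
}
for pair in flag_near_duplicates(drafts):
    print(pair)
```

Pairs that come back flagged are candidates for a rewrite or consolidation onto a single domain. The comparison is pairwise, so it suits a few hundred drafts rather than a whole site network.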

- Systematically verify the factuality of generated information (dates, figures, citations)

- Add proprietary elements: case studies, internal data, firsthand experience

- Maintain a natural publishing pace — no suspicious spikes in content

- Use AI to optimize, not replace: outlines, paraphrasing, summaries

- Apply EEAT criteria: cite sources, display the author, demonstrate expertise

- Avoid purely SEO content: pages created only to rank with no user value
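The checklist above can be turned into a simple pre-publication gate in an editorial workflow. The following is a minimal sketch, assuming each item is ticked off manually by a human reviewer. The `DraftReview` class, its field names and the labels are all hypothetical, and passing the gate guarantees nothing about how Google will assess the page.

```python
from dataclasses import dataclass


@dataclass
class DraftReview:
    """Manual review flags for one draft (hypothetical field names)."""
    facts_verified: bool = False           # dates, figures, citations checked
    has_proprietary_element: bool = False  # case study, internal data, experience
    sources_cited: bool = False
    author_displayed: bool = False
    serves_user_need: bool = False         # not written only to rank


# Human-readable label for each check, in the order it should be reported.
CHECK_LABELS = {
    "facts_verified": "Verify dates, figures and citations",
    "has_proprietary_element": "Add a proprietary element",
    "sources_cited": "Cite sources",
    "author_displayed": "Display the author",
    "serves_user_need": "Confirm the page answers a real user need",
}


def missing_checks(review: DraftReview) -> list:
    """Return the labels of every check that has not been ticked."""
    return [label for name, label in CHECK_LABELS.items()
            if not getattr(review, name)]


def ready_to_publish(review: DraftReview) -> bool:
    """A draft passes only when no check is missing."""
    return not missing_checks(review)


review = DraftReview(facts_verified=True, sources_cited=True,
                     author_displayed=True, serves_user_need=True)
if not ready_to_publish(review):
    for item in missing_checks(review):
        print("TODO:", item)
```

Keeping the flags manual is deliberate: the point of the gate is to force a human decision on each item, not to automate the judgment itself.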

Should you disclose the use of AI in your content?

Google doesn't formally require it — but transparency is a best practice, especially in YMYL sectors (health, finance). A clear disclaimer can strengthen user trust and prevent accusations of deception.

That said, what matters most is the final quality of the content. Whether you use AI or not, the content must meet user expectations and adhere to editorial standards. If that's the case, mentioning the tool is secondary.

AI is a powerful lever for gaining productivity, but it doesn't exempt you from a solid editorial strategy or human oversight. Setting up a validation workflow, defining strict quality criteria and integrating AI into rigorous EEAT practice requires deep expertise. Many companies underestimate this complexity and end up with content that doesn't perform or, worse, that attracts penalties. If you want to build an AI content strategy that is both effective and compliant, working with a specialized SEO agency can help you avoid costly mistakes and accelerate your results.

❓ Frequently Asked Questions

Does Google penalize AI-generated content?

Can you use ChatGPT to write blog posts without risk?

Should you disclose the use of AI on your site?

Can AI content rank as well as human-written content?

Which types of content are best suited to AI?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 18/04/2023
