Official statement

Other statements from this video 9 ▾

- □ Do Search Console grouped exports to BigQuery really replace the Search Analytics API?

- □ Does Google's bulk export finally unlock all the performance metrics you've been missing?

- □ Did you know Google counts only one impression when multiple pages from your site appear in the same search results?

- □ Why does Google use 0 as the topmost position in Search Console data?

- □ How does Google's searchdata_url_impression table break down your search performance data?

- □ Why does Google anonymize certain URLs in your Discover data, and what does it mean for your SEO strategy?

- □ Are you missing out on rich snippet opportunities? How to master search appearance fields and dominate your SERP real estate?

- □ Should you really limit queries by date in Search Console to optimize your performance?

- □ Should you really filter out anonymized queries from Google Search Console to boost your data quality?

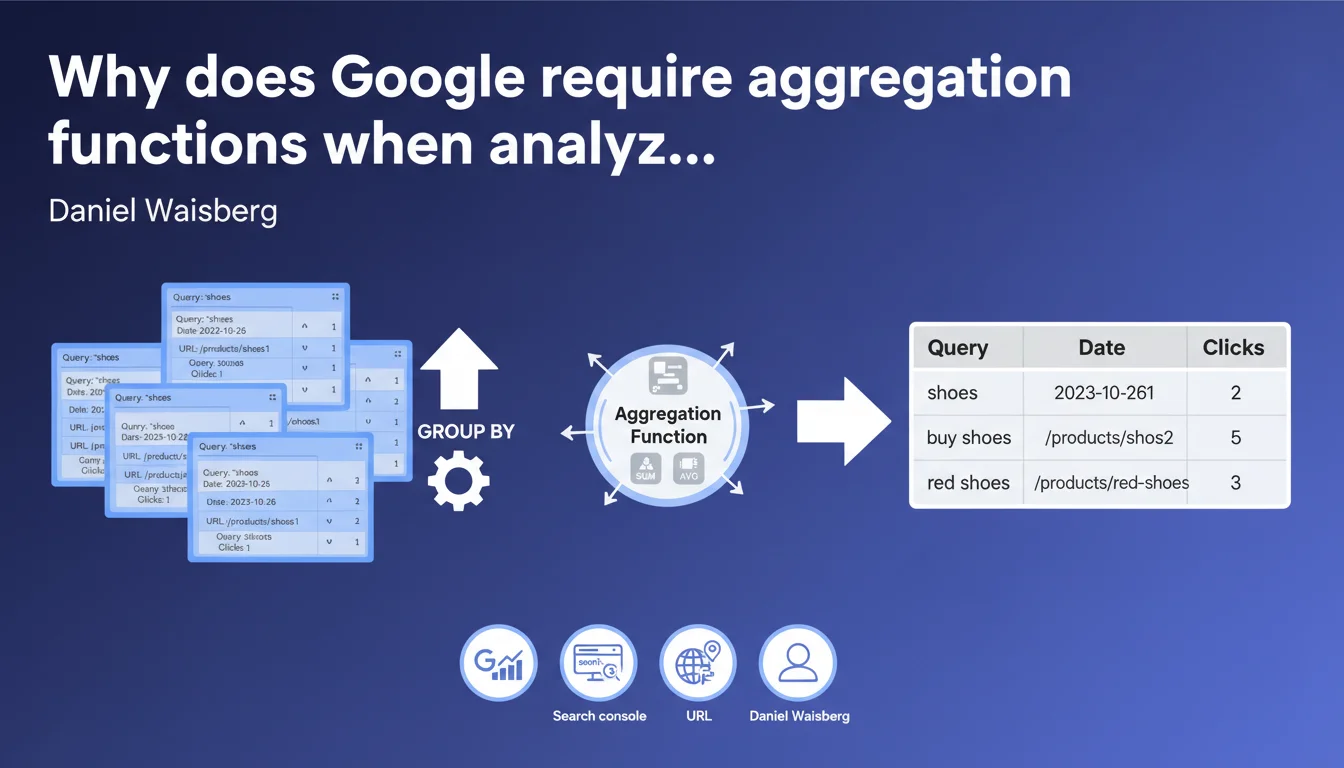

Google confirms that Search Console data is never consolidated by default: the same URL can appear multiple times for the same date. Using aggregation functions (SUM, COUNT) with GROUP BY is therefore mandatory to obtain reliable totals for clicks and impressions. Ignoring this rule leads to distorted analyses and incorrect SEO decisions.

What you need to understand

This statement by Daniel Waisberg concerns how Google Search Console data should be handled once it is retrieved through the API or exports. The message is clear: never assume that the rows returned are already aggregated.

Why isn't Search Console data consolidated by default?

Google's internal architecture stores search events in a granular manner. A given query can generate multiple rows in the database for the same URL and the same date, particularly if it appeared in different contexts (device, location, result type).

When you extract raw data, you get this native granularity. Google doesn't pre-aggregate anything for you; consolidation is your job.

What is an aggregation function in this context?

These are classic SQL operations such as SUM(), COUNT(), AVG() combined with GROUP BY. For example: SELECT page, SUM(clicks), SUM(impressions) FROM data GROUP BY page.

Without this step, a page has no single total: its clicks and impressions stay split across several rows, and any report that reads or counts those rows as if each were a complete page-level record will be wrong.
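To make the query above concrete, here is a minimal sketch that runs it against an in-memory SQLite database. The sample rows, the `data` table layout and the `totals_by_page` helper are assumptions made up for illustration; real exports have more columns and different names.

```python
import sqlite3

# Hypothetical sample rows: (date, page, device, clicks, impressions).
# Table and column names are assumptions for illustration only.
ROWS = [
    ("2023-06-01", "/pricing", "DESKTOP", 4, 40),
    ("2023-06-01", "/pricing", "MOBILE", 6, 90),
    ("2023-06-01", "/blog", "MOBILE", 1, 25),
    ("2023-06-02", "/pricing", "MOBILE", 3, 30),
]


def totals_by_page(rows):
    """Return one consolidated (page, clicks, impressions) row per page."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE data (date TEXT, page TEXT, device TEXT, "
        "clicks INTEGER, impressions INTEGER)"
    )
    conn.executemany("INSERT INTO data VALUES (?, ?, ?, ?, ?)", rows)
    result = conn.execute(
        "SELECT page, SUM(clicks), SUM(impressions) "
        "FROM data GROUP BY page ORDER BY page"
    ).fetchall()
    conn.close()
    return result


if __name__ == "__main__":
    for page, clicks, impressions in totals_by_page(ROWS):
        print(page, clicks, impressions)
```

In this sample, `/pricing` arrives as three separate rows and leaves as a single row carrying 13 clicks and 160 impressions.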

What are the consequences if you ignore this rule?

You get overestimated data — sometimes spectacularly. Dashboards display inflated totals, strategic reports are skewed, and SEO decisions made on these bases become counterproductive.

This is particularly critical when crossing multiple dimensions (page + query + device). The more dimensions you add, the greater the risk of duplication.

- Search Console table rows are never pre-consolidated by Google

- The same query can appear multiple times for the same date and URL

- The use of SUM() and GROUP BY is mandatory for reliable totals

- Ignoring this rule leads to systematic overestimation of performance

- The more dimensions you cross, the higher the duplication risk
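The effect of adding dimensions can be sketched as follows. The example groups the same hypothetical rows at three levels of detail and reports how many rows come back for one URL on one date. The table layout, the sample values and the `rows_and_clicks` helper are assumptions for illustration, not the actual export schema.

```python
import sqlite3

# Hypothetical rows: (date, page, query, device, clicks, impressions).
ROWS = [
    ("2023-06-01", "/pricing", "acme pricing", "DESKTOP", 2, 20),
    ("2023-06-01", "/pricing", "acme pricing", "MOBILE", 3, 35),
    ("2023-06-01", "/pricing", "acme cost", "MOBILE", 1, 15),
    ("2023-06-01", "/pricing", "acme cost", "DESKTOP", 0, 10),
]

ALLOWED = {"date", "page", "query", "device"}


def rows_and_clicks(rows, dimensions):
    """Group by the given dimensions; return (row count, total clicks)."""
    if not dimensions or not set(dimensions) <= ALLOWED:
        raise ValueError("dimensions must be a non-empty subset of ALLOWED")
    columns = ", ".join(dimensions)  # safe: names are checked against ALLOWED
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE data (date TEXT, page TEXT, query TEXT, device TEXT, "
        "clicks INTEGER, impressions INTEGER)"
    )
    conn.executemany("INSERT INTO data VALUES (?, ?, ?, ?, ?, ?)", rows)
    grouped = conn.execute(
        f"SELECT SUM(clicks) FROM data GROUP BY {columns}"
    ).fetchall()
    conn.close()
    return len(grouped), sum(clicks for (clicks,) in grouped)


if __name__ == "__main__":
    for dims in (["date", "page"],
                 ["date", "page", "query"],
                 ["date", "page", "query", "device"]):
        print(dims, rows_and_clicks(ROWS, dims))
```

With this sample, the single URL goes from one row to two to four as dimensions are added, and each row holds only a slice of that URL's clicks.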

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Absolutely. Any professional who has worked with the Search Console API has observed this behavior. Raw exports do contain seemingly redundant rows that have to be consolidated by hand.

What's less obvious is that even the GSC web interface performs these aggregations behind the scenes. You never see the fragmented rows because Google hides them. But as soon as you go through the API or BigQuery exports, you face the raw data.

Why doesn't Google consolidate this data directly?

The technical reason probably stems from the scalability of the system. Storing granular events allows maximum flexibility to cross any dimension later.

Pre-aggregating all possible combinations (date × URL × query × device × country × result type...) would represent an exponential storage volume and computational complexity. Google prefers to let you aggregate according to your needs.

In which cases does this rule cause the most problems?

The most frequent errors occur when automated dashboards are built without solid SQL skills. Non-technical users download CSV exports, apply a simple SUM() in Excel without any grouping step (the spreadsheet equivalent of GROUP BY, such as a pivot table), and artificially inflate KPIs.

Another trap: third-party tools that connect to the GSC API. If their aggregation logic is poorly coded, you inherit false data without even knowing it. Check them systematically by comparing their figures with the official interface.

Practical impact and recommendations

What should you concretely do with Search Console exports?

Make SQL queries with GROUP BY a habit whenever you handle raw data. Whether in BigQuery, a Python script, or even Excel with Power Query, always consolidate before analyzing.

Typical example: SELECT date, page, SUM(clicks) AS total_clicks, SUM(impressions) AS total_impressions FROM gsc_data GROUP BY date, page. This is the minimum basis for reliable totals.
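For a Python script rather than SQL, the same consolidation by date and page can be sketched with the standard library alone. The CSV content, its column names and the `consolidate` helper are hypothetical; adapt them to the headers of your own export.

```python
import csv
import io
from collections import defaultdict

# Hypothetical export content; the column names are assumptions.
SAMPLE_CSV = """date,page,device,clicks,impressions
2023-06-01,/pricing,DESKTOP,4,40
2023-06-01,/pricing,MOBILE,6,90
2023-06-02,/pricing,MOBILE,3,30
"""


def consolidate(csv_text):
    """Sum clicks and impressions per (date, page), like GROUP BY date, page."""
    totals = defaultdict(lambda: [0, 0])
    for row in csv.DictReader(io.StringIO(csv_text)):
        key = (row["date"], row["page"])
        totals[key][0] += int(row["clicks"])
        totals[key][1] += int(row["impressions"])
    # Freeze each running total into a (clicks, impressions) tuple.
    return {key: tuple(values) for key, values in totals.items()}


if __name__ == "__main__":
    for (date, page), (clicks, impressions) in sorted(consolidate(SAMPLE_CSV).items()):
        print(date, page, clicks, impressions)
```

The dictionary key plays the role of the GROUP BY columns: changing the tuple changes the granularity of the result.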

What errors must you absolutely avoid?

Never total a column without first grouping on the dimensions you intend to report: this is error number one. Never assume that a GSC CSV export is already consolidated: it never is.

Another trap: crossing too many dimensions simultaneously without thinking about granularity. The more columns you add to GROUP BY, the more you fragment the data; with too few, figures that should stay separate are lumped together.

How do you verify that your analyses are correct?

A simple check: compare your aggregated totals with those displayed in the Search Console web interface for the same period and the same filters. If the figures diverge, look at your aggregation first.

Test with edge cases, for example a single URL on a single day. If you get more clicks than GSC shows, you have a consolidation problem.
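This comparison can be scripted. The sketch below assumes you have copied a few reference click totals by hand from the web interface into a dictionary; `find_mismatches`, its `tolerance` parameter and the sample figures are hypothetical and not part of any Google tooling.

```python
def find_mismatches(aggregated, reference, tolerance=0):
    """Compare click totals keyed by (date, page).

    Returns {key: (aggregated_value, reference_value)} for every key whose
    values differ by more than `tolerance`. A key missing on one side is
    treated as 0 on that side.
    """
    mismatches = {}
    for key in sorted(set(aggregated) | set(reference)):
        ours = aggregated.get(key, 0)
        theirs = reference.get(key, 0)
        if abs(ours - theirs) > tolerance:
            mismatches[key] = (ours, theirs)
    return mismatches


if __name__ == "__main__":
    # Totals produced by your own aggregation (hypothetical values).
    aggregated = {("2023-06-01", "/pricing"): 10, ("2023-06-02", "/pricing"): 3}
    # Totals read manually from the interface (hypothetical values).
    reference = {("2023-06-01", "/pricing"): 10, ("2023-06-02", "/pricing"): 2}
    print(find_mismatches(aggregated, reference))
```

An empty result means the two sources agree on every key checked; anything else points to the dates and pages worth investigating.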

- Always use SUM() with GROUP BY on raw Search Console data

- Never make direct totals from CSV exports without prior consolidation

- Validate your aggregations by comparing with the official GSC interface

- Audit third-party tools that connect to the API to verify their aggregation logic

- Document your SQL queries to ensure analysis reproducibility

- Train non-technical teams on the basics of data aggregation

Working correctly with Search Console data requires solid data-manipulation skills: SQL, scripting, or at minimum advanced Excel. Many companies underestimate this complexity and end up with skewed analyses that lead to costly wrong decisions.

If your team lacks resources to implement reliable analysis infrastructure, support from a specialized SEO agency may prove worthwhile. Beyond technical expertise, this ensures consistent dashboards and avoids costly performance overestimation errors.

❓ Frequently Asked Questions

Does the Search Console web interface aggregate data automatically?

Why does the same query appear several times for the same date?

Can you trust the totals shown in third-party SEO tools?

Which aggregation functions should you use for average positions?

How do you avoid aggregation errors in Excel?

Other SEO insights extracted from this same Google Search Central video · published on 01/06/2023
