Official statement

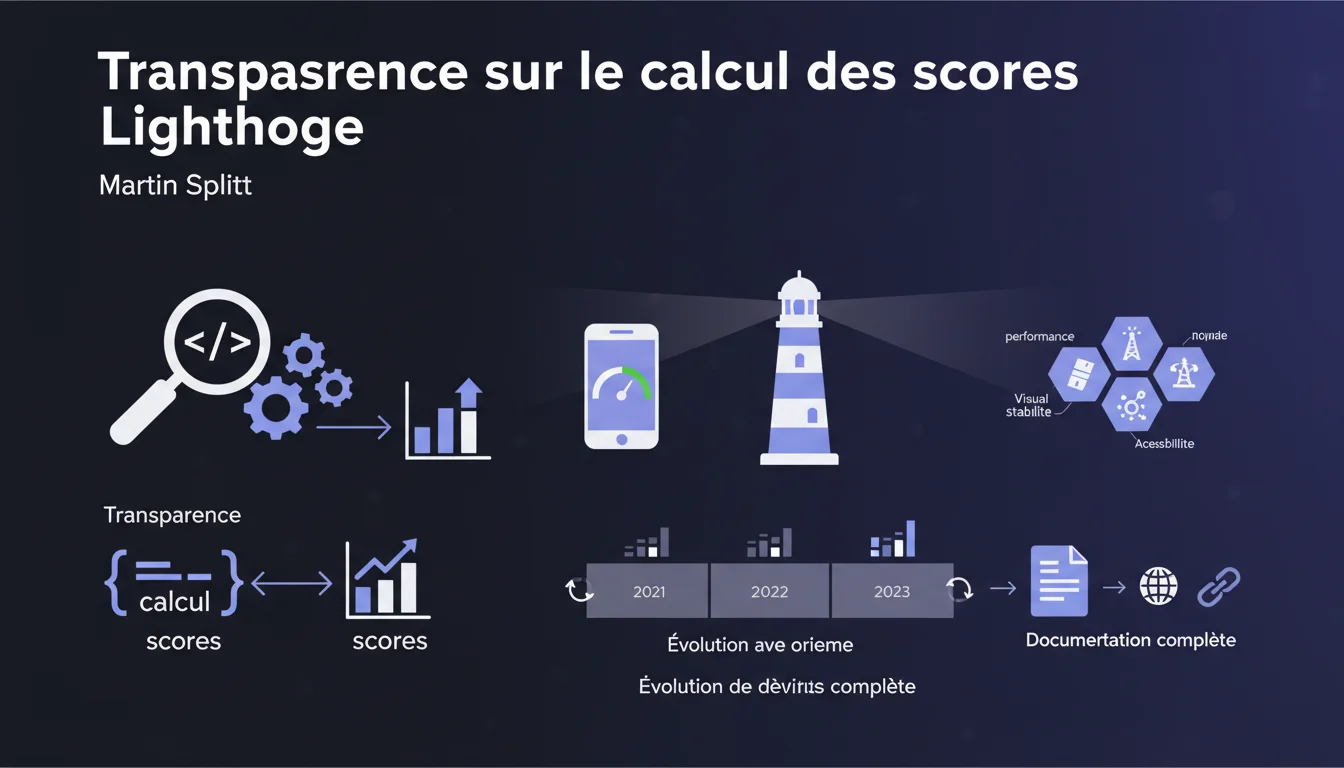

Google claims that Lighthouse documents in detail how its scores are calculated and how each metric is weighted. In principle, this documentation lets SEO professionals understand exactly how performance is evaluated and how the criteria evolve. Whether this transparency is as complete in practice as advertised remains to be seen.

What you need to understand

What does this proclaimed transparency really mean?

Google points to detailed documentation describing the algorithms used to calculate Lighthouse scores. This documentation is meant to explain not only which metrics are considered but also their respective weightings.

Specifically, the Lighthouse team provides technical resources to help understand why a site receives a particular score. The coefficients applied to each metric (LCP, TBT, CLS, etc.) are documented, along with their changes over time.

Why is this change in weighting important?

The weights of the different metrics are not fixed. The Lighthouse team regularly adjusts these weightings based on the evolution of the web and Google's priorities.

A site can therefore see its score fluctuate without any technical modification, simply because the evaluation criteria have been readjusted. This instability complicates long-term performance management.

Where can we find this so-called documentation?

The official Lighthouse documentation is hosted on GitHub and on Google's web.dev. It details the mathematical formulas applied to calculate each partial score and the overall score.

Changes in weighting are usually announced in the Lighthouse release notes, sometimes with several months of notice before being applied in production.
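As a rough illustration of how an overall score is built from weighted partial scores, and why a reweighting alone can move it, here is a minimal Python sketch. The weight tables are quoted from memory of the Lighthouse v8 and v10 scoring documentation and should be treated as assumptions to verify against the release notes; the per-metric scores and the function name `overall_score` are hypothetical.

```python
# Assumed weights (verify against the Lighthouse release notes before relying on them).
WEIGHTS_V8 = {"FCP": 0.10, "SI": 0.10, "LCP": 0.25, "TTI": 0.10, "TBT": 0.30, "CLS": 0.15}
WEIGHTS_V10 = {"FCP": 0.10, "SI": 0.10, "LCP": 0.25, "TBT": 0.30, "CLS": 0.25}


def overall_score(metric_scores, weights):
    """Weighted average of per-metric scores (each in 0..1), scaled to 0..100."""
    total_weight = sum(weights.values())
    weighted_sum = sum(metric_scores[name] * weight for name, weight in weights.items())
    return round(100 * weighted_sum / total_weight)


# Hypothetical per-metric scores for one unchanged page.
page_scores = {"FCP": 0.95, "SI": 0.90, "LCP": 0.80, "TTI": 0.60, "TBT": 0.70, "CLS": 0.98}

score_under_v8 = overall_score(page_scores, WEIGHTS_V8)
score_under_v10 = overall_score(page_scores, WEIGHTS_V10)
print(score_under_v8, score_under_v10)
```

With identical per-metric scores, the two weight tables produce different totals, which is the effect described in this section: the page did not change, only the weighting did.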

SEO Expert opinion

Is this transparency as complete as claimed?

On paper, the Lighthouse documentation is indeed available. The formulas are there, and so are the coefficients. But here is where it gets tricky: the technical complexity makes this transparency largely unusable for most practitioners.

The calculation algorithms involve statistical distributions, logarithmic curves, and adjustments that require advanced data science skills to be truly understood. Transparency exists, but it remains theoretical for many. [To verify]: does Google really provide ALL parameters, or are some still kept in a black box?

Do changes in weighting pose a stability issue?

Absolutely. I have observed score variations of 5 to 15 points on client projects without any technical modifications to the site. These fluctuations simply followed updates to Lighthouse.

This instability complicates client reporting and performance tracking over time. How can one justify a score drop when it is the measurement tool that changed, not the site? Google communicates about transparency, but predictability remains a real issue.

Can we really manage our optimizations with this data?

Yes, but with reservations. Individual metrics (LCP, CLS, etc.) remain reliable indicators for identifying issues. It is the overall synthetic score that raises questions.

In practice, I recommend monitoring the raw metrics rather than the aggregated score. An LCP of 1.8s makes sense. A Lighthouse score of 87 remains an abstraction whose interpretation varies according to the version of the tool and its current weightings.
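The "statistical distributions" and "logarithmic curves" mentioned in this section can be made more concrete with a small sketch. It assumes that a raw metric value is mapped to a 0..1 score with a log-normal curve defined by two control points: the value that earns 0.9 (`p10`) and the value that earns 0.5 (`median`). The LCP control points used below (2500 ms and 4000 ms) are assumptions quoted from memory, and Lighthouse's real implementation may differ in details such as rounding and clamping.

```python
import math
from statistics import NormalDist


def lognormal_score(value, p10, median):
    """Map a raw metric value to a 0..1 score with a log-normal curve.

    The curve is fixed by two control points: `median` scores 0.5
    and `p10` scores 0.9. Lower metric values score higher.
    """
    if value <= 0:
        return 1.0
    z90 = NormalDist().inv_cdf(0.9)  # standard normal quantile for 0.9
    mu = math.log(median)
    sigma = (math.log(median) - math.log(p10)) / z90
    z = (math.log(value) - mu) / sigma
    # 1 - CDF of the standard normal at z, written with erfc.
    return 0.5 * math.erfc(z / math.sqrt(2))


# Assumed control points for LCP on mobile, in milliseconds.
LCP_P10_MS = 2500
LCP_MEDIAN_MS = 4000

for lcp_ms in (1800, 2500, 4000, 6000):
    print(lcp_ms, round(lognormal_score(lcp_ms, LCP_P10_MS, LCP_MEDIAN_MS), 3))
```

The curve is steep around the control points and flat at the extremes, which is one reason the same absolute improvement in LCP can move the score by very different amounts depending on where the page starts.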

Practical impact and recommendations

What should we actually do with this information?

First rule: never manage your optimizations solely based on the overall Lighthouse score. Focus on the individual metrics and their absolute values.

Regularly consult the Lighthouse documentation to anticipate weighting changes. Subscribe to GitHub notifications for the Lighthouse project to be alerted to major developments.

How to correctly interpret score variations?

Before panicking over a score drop, check three things: the version of Lighthouse used, any recent weighting changes, and the actual CrUX data from your users.

If your real-world metrics (available via CrUX or your own RUM tools) remain stable, a variation in the Lighthouse lab score is probably just a methodological artifact. Focus on the real user experience.

What mistakes should be avoided in performance tracking?

Never compare scores from different versions of Lighthouse. Because the weightings have changed, the comparison makes no statistical sense.

Avoid setting goals based solely on the overall score ("reach 90+"). Prefer goals based on specific metrics: LCP < 2.5s, CLS < 0.1, etc.
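The two tracking rules in this section (judge runs on raw metric targets, and do not compare scores across Lighthouse versions) can be sketched as a small helper. This is only an illustration: the `LabRun` record, its field names, and the sample values are hypothetical, and the thresholds are the ones quoted in the text.

```python
from dataclasses import dataclass

# Targets from the text: LCP < 2.5 s, CLS < 0.1.
TARGETS = {"lcp_s": 2.5, "cls": 0.1}


@dataclass
class LabRun:
    lighthouse_version: str  # version string as reported by the tool
    score: int               # overall performance score, 0..100
    lcp_s: float             # Largest Contentful Paint, in seconds
    cls: float               # Cumulative Layout Shift, unitless


def failed_targets(run):
    """Return the names of raw metrics that miss their target."""
    return [name for name, limit in TARGETS.items() if getattr(run, name) >= limit]


def score_delta(before, after):
    """Return the score change, or None when the versions differ.

    Scores produced by different Lighthouse versions are treated as not comparable.
    """
    if before.lighthouse_version != after.lighthouse_version:
        return None
    return after.score - before.score


# Hypothetical runs: the score drops, the raw metrics do not move.
january = LabRun("8.6.0", 92, 1.8, 0.05)
march = LabRun("9.0.0", 87, 1.8, 0.05)

print(score_delta(january, march))  # None: different versions, no comparison
print(failed_targets(march))        # []: raw metrics still meet their targets
```

In this sketch a drop from 92 to 87 is not reported as a regression, because the two runs come from different tool versions and the raw metrics still meet their targets.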

❓ Frequently Asked Questions

Do Lighthouse scores have a direct impact on Google rankings?

Why does my Lighthouse score vary from one test to the next without any changes to the site?

Which version of Lighthouse does PageSpeed Insights use?

Should you aim for a Lighthouse score of 100?

Is CrUX data more reliable than Lighthouse scores?

🎥 From the same video 7

Other SEO insights extracted from this same Google Search Central video · published on 13/01/2022
