Official statement

Other statements from this video (11)

- Is CTR really a reliable proxy for a query's relevance?

- Should you prioritize low-position queries with a high CTR to maximize organic traffic?

- Should you really prioritize queries you already rank for over targeting new keywords?

- Should you really ignore irrelevant queries that generate traffic?

- Does structured data really steal your clicks in first position?

- Why do your competitors capture more clicks than you in the SERPs?

- Why does Google insist so much on accurate title tags, meta descriptions and ALT attributes?

- Do heading tags really structure content better for Google?

- Does structured data really guarantee access to rich results?

- Should you really rely on related words to broaden your keyword strategy?

- Can Google Trends really identify SEO opportunities before your competitors do?

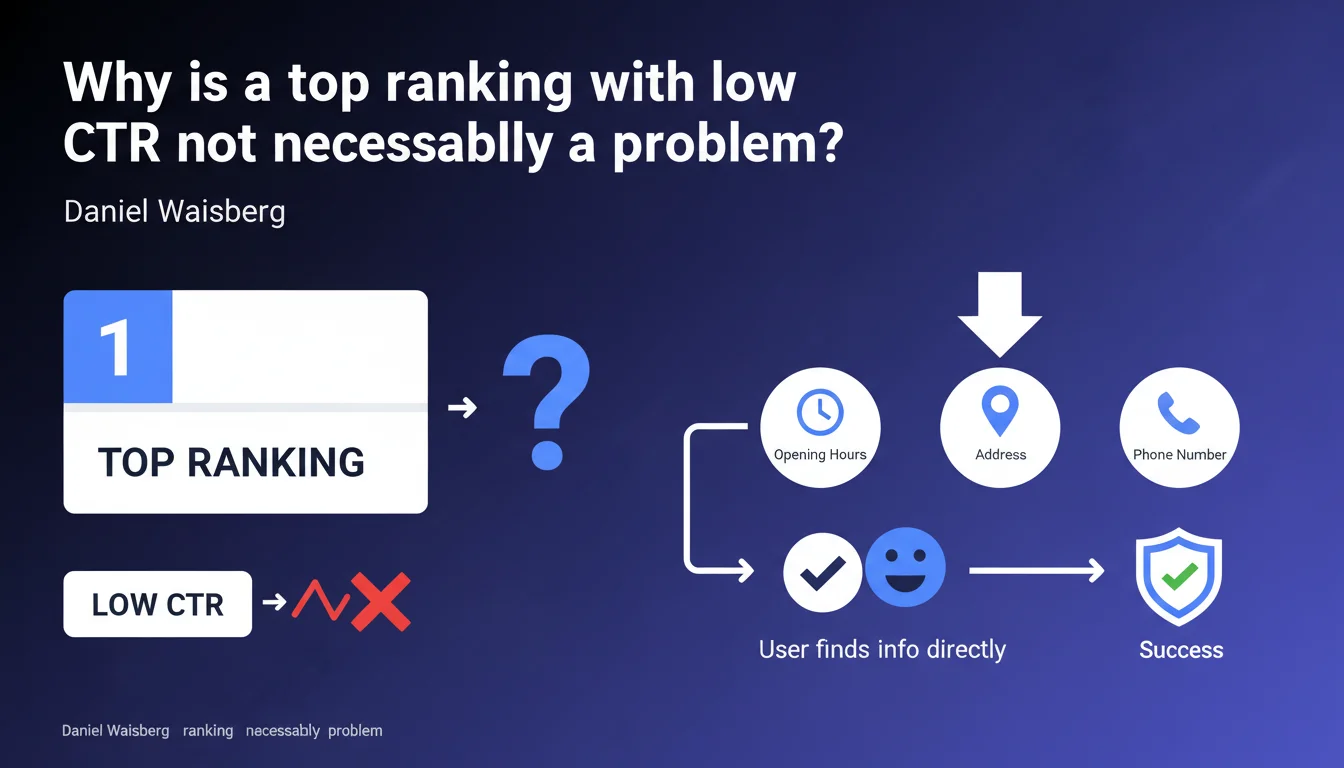

Google reminds us that a #1 position with low CTR can simply mean that users found the information they needed directly in the SERPs — opening hours, address, phone number. This isn't necessarily a red flag about result quality, but rather a characteristic of certain simple informational queries.

What you need to understand

What does this concretely mean for SEO professionals?

Daniel Waisberg points out a practical reality: not all #1 positions generate the same click-through rate. Some queries display the answer directly in rich results: Knowledge Panels, opening hours, contact information via Google Business Profile, featured snippets.

For these queries, the user has no reason to click: they already have what they're looking for. A 5% CTR on a local query that triggers a Knowledge Panel is therefore not abnormal; it's predictable.

Why is Google emphasizing this point now?

The statement appears aimed at calming the concerns of webmasters who see CTR plummeting despite stable rankings. With the rise of SERP features (AI Overviews, featured snippets, People Also Ask), Google knows that many clicks are disappearing — legitimately, in its view.

The underlying argument: if users find their answer without clicking, that's a win for the user experience, and for Google. Not, obviously, for the website owner.

Which queries are mainly affected?

Short informational queries and local searches. "Carrefour opening hours Lyon," "Paris City Hall phone number," "Brad Pitt's age" — anything that can be resolved by a Knowledge Panel or enriched card.

Conversely, transactional queries or in-depth searches (comparisons, guides, purchases) maintain high CTRs. The user needs more information than a snippet can provide.

- Low CTR ≠ relevance problem if the information is already visible in the SERPs

- Local queries and simple informational searches are the most affected

- Google considers this an improvement to user experience

- Organic traffic can decline on certain queries as a structural effect of the SERP layout, without any drop in SEO performance

SEO Expert opinion

Is this statement consistent with on-the-ground observations?

Yes. For years, we've observed that featured snippets cannibalize traffic, even in #1 position. An Ahrefs study already showed that winning position zero could drop overall CTR by 20 to 30% on certain queries.

The problem is that Google presents this as an acceptable inevitability. The user is satisfied? Never mind if the site loses traffic. Except the web's economic model is built on visits — not passive satisfaction.

What nuances should be added?

You must distinguish two cases. First case: the information displayed in the SERPs comes from your own site (rich snippet, structured data). You lose traffic, but you retain indirect visibility and credibility — the user sees your name.

Second case: the information comes from a third-party source aggregated by Google (Knowledge Graph, Wikipedia, directories). There, you lose everything: the click, the visibility, the attribution. And it's more frequent than you'd think.

Waisberg doesn't make this distinction. He talks about "the information sought" without specifying where it comes from or how it was indexed. [To verify]: does Google prioritize source sites in these cases, or does it simply pull the information without compensation?

In which cases does this rule not apply?

On transactional queries, comparisons, or those requiring a user journey. A user searching "best CRM for SMEs" won't settle for a snippet — they want to read, compare, explore.

Similarly, brand queries maintain high CTRs even with a Knowledge Panel. The user is trying to access the site, not just learn the corporate headquarters address.

Practical impact and recommendations

What should you concretely do if your CTR is low despite good positioning?

Start by identifying the query type. If it's a local or simple informational query, a 5-10% CTR at #1 can be structurally normal. Check Search Console to see which queries are affected.
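This first triage can be scripted against a Search Console performance export. The sketch below is a minimal illustration, assuming each row has been loaded as query, clicks, impressions and average position; the `QueryRow` class, its field names and the thresholds (average position of 1.5 or better, CTR under 10%, at least 100 impressions) are hypothetical choices, not Google guidance.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    # One row of a Search Console "Queries" export (hypothetical field names).
    query: str
    clicks: int
    impressions: int
    position: float  # average position as reported by Search Console

    @property
    def ctr(self) -> float:
        # CTR = clicks / impressions; guard against zero impressions.
        return self.clicks / self.impressions if self.impressions else 0.0


def low_ctr_top_queries(rows, max_position=1.5, ctr_threshold=0.10, min_impressions=100):
    """Return top-ranked queries whose CTR is below ctr_threshold, lowest CTR first."""
    flagged = [
        r for r in rows
        if r.position <= max_position           # ranking at or near #1
        and r.impressions >= min_impressions    # skip low-volume noise
        and r.ctr < ctr_threshold
    ]
    return sorted(flagged, key=lambda r: r.ctr)


rows = [
    QueryRow("carrefour opening hours lyon", 40, 1000, 1.1),
    QueryRow("best crm for smes", 300, 1200, 1.3),
    QueryRow("paris city hall phone number", 25, 800, 1.0),
    QueryRow("crm pricing comparison", 5, 60, 1.2),
]
for r in low_ctr_top_queries(rows):
    print(f"{r.query}: {r.ctr:.1%} CTR at position {r.position}")
```

CTR is recomputed from clicks and impressions rather than read from the exported CTR column, which may be formatted as a percentage string. The result is a list of queries to check by hand in the SERPs, not a verdict on their health.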

Next, analyze the SERP itself. Open a private window and search your query. What does the user see before your result? A Knowledge Panel with all the information? A competing featured snippet? A Google Maps card?

If the information comes from your site but displays without a click (rich snippet, structured data), it's the lesser evil. You lose traffic but retain a form of credit. If the information comes from elsewhere, you have two options: either optimize to reclaim that snippet, or diversify your traffic.

What errors should you avoid when interpreting data?

Never blindly compare CTR across two different queries. A query with transactional intent will always have a higher CTR than an informational query, even at equal position.

Classic mistake: panicking at an 8% CTR at #1 on a local query while a competitor achieves 25% on a product query. These query types are not comparable.
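To avoid comparing unlike queries, CTR can be aggregated per intent segment before any comparison is made. This is a minimal sketch assuming a crude, hypothetical keyword-rule classifier (`INTENT_RULES`, `classify_intent`) over `(query, clicks, impressions)` tuples; real intent classification needs rules tuned to your market and language, or manual labeling.

```python
from collections import defaultdict

# Hypothetical keyword markers; tune them per market and language.
INTENT_RULES = {
    "local": ("opening hours", "phone number", "address", "near me"),
    "transactional": ("buy", "price", "pricing", "best", "vs", "comparison"),
}


def classify_intent(query: str) -> str:
    """Return the first intent whose marker appears as whole word(s) in the query."""
    padded = f" {query.lower()} "  # padding gives word boundaries at both ends
    for intent, markers in INTENT_RULES.items():
        if any(f" {marker} " in padded for marker in markers):
            return intent
    return "informational"  # default bucket when no marker matches


def ctr_by_intent(rows):
    """Aggregate CTR per segment as total clicks / total impressions."""
    clicks = defaultdict(int)
    impressions = defaultdict(int)
    for query, query_clicks, query_impressions in rows:
        intent = classify_intent(query)
        clicks[intent] += query_clicks
        impressions[intent] += query_impressions
    return {k: clicks[k] / impressions[k] for k in impressions if impressions[k]}


rows = [
    ("carrefour opening hours lyon", 40, 1000),
    ("paris city hall phone number", 25, 800),
    ("best crm for smes", 300, 1200),
    ("brad pitt age", 30, 1500),
]
for intent, ctr in sorted(ctr_by_intent(rows).items()):
    print(f"{intent}: {ctr:.1%}")
```

Summing clicks and impressions before dividing weights each query by its impressions, so a few low-volume queries cannot skew a segment the way an average of per-query CTRs would.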

Another trap: reading a CTR drop as a sign that your snippet has become less appealing. Sometimes Google has simply added a "People Also Ask" block above you, pushing your result lower on the page without changing your ranking.
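One way to tell these cases apart is to compare two periods for the same query: if CTR fell sharply while average position barely moved, the cause is more likely a change in the SERP layout than in your ranking. Minimal sketch; `PeriodStats`, `diagnose` and both thresholds (a 0.5-position shift, a 25% relative CTR drop) are hypothetical.

```python
from typing import NamedTuple


class PeriodStats(NamedTuple):
    """Aggregated Search Console metrics for one query over one period."""
    clicks: int
    impressions: int
    position: float  # average position; a higher number means ranked lower

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def diagnose(before: PeriodStats, after: PeriodStats,
             max_position_shift: float = 0.5,
             min_relative_ctr_drop: float = 0.25) -> str:
    """Separate 'CTR fell, ranking held' from 'CTR fell because ranking fell'."""
    if before.ctr == 0:
        return "insufficient data"
    relative_drop = (before.ctr - after.ctr) / before.ctr
    if relative_drop < min_relative_ctr_drop:
        return "no significant CTR drop"
    if abs(after.position - before.position) <= max_position_shift:
        # Ranking is stable: look at what now appears above the result (e.g. PAA).
        return "CTR drop with stable position: inspect the SERP layout"
    return "CTR drop with position change: investigate rankings"


print(diagnose(PeriodStats(120, 1000, 1.2), PeriodStats(70, 1000, 1.3)))
```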

How should you adjust your strategy facing this reality?

If your main queries are structurally absorbed by SERP features, diversify. Target long-tail variations where Google displays fewer rich results. Develop transactional or comparative content that requires a click.
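Existing Search Console data can help shortlist long-tail variations. The sketch assumes, in line with the advice above, that longer queries tend to trigger fewer SERP features; that is a heuristic to confirm manually in the SERPs. The function name and thresholds (4+ words, 50+ impressions, average position within the top 20) are hypothetical.

```python
def long_tail_opportunities(rows, min_words=4, min_impressions=50, max_position=20.0):
    """Return long-tail queries with enough impressions to matter, most impressions first.

    rows: iterable of (query, clicks, impressions, average_position) tuples.
    """
    picked = [
        row for row in rows
        if len(row[0].split()) >= min_words   # long-tail: several words
        and row[2] >= min_impressions         # enough demand to be worth targeting
        and row[3] <= max_position            # already ranking within the top 20
    ]
    return sorted(picked, key=lambda row: row[2], reverse=True)


rows = [
    ("crm", 10, 5000, 8.0),
    ("best crm for small accounting firms", 20, 400, 6.2),
    ("crm with whatsapp integration for smes", 12, 900, 9.5),
    ("crm free trial no credit card", 1, 30, 4.0),
]
for query, clicks, impressions, position in long_tail_opportunities(rows):
    print(f"{query}: {impressions} impressions, position {position}")
```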

Optimize your structured data to be the source of the enriched snippet, even if you lose the click. At least you control the message and stay visible. And monitor the evolution of these formats — they change often.
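For a local business, the hours, address and phone number discussed above can be marked up with schema.org `LocalBusiness` in JSON-LD. This Python helper is a minimal sketch that generates the script tag; the business details are placeholders and `local_business_jsonld` is a hypothetical name. As Google's own documentation states, valid markup does not guarantee that a rich result will be shown.

```python
import json


def local_business_jsonld(name, street, city, postal_code, country, phone, hours):
    """Build a schema.org LocalBusiness JSON-LD script tag.

    hours: list of (days, opens, closes), e.g. (["Monday", "Tuesday"], "09:00", "19:00").
    """
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "postalCode": postal_code,
            "addressCountry": country,
        },
        # One OpeningHoursSpecification per group of days sharing the same hours.
        "openingHoursSpecification": [
            {
                "@type": "OpeningHoursSpecification",
                "dayOfWeek": days,
                "opens": opens,
                "closes": closes,
            }
            for days, opens, closes in hours
        ],
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


snippet = local_business_jsonld(
    name="Example Store",
    street="1 Example Street",
    city="Lyon",
    postal_code="69001",
    country="FR",
    phone="+33 4 00 00 00 00",
    hours=[(["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"], "09:00", "19:00"),
           (["Saturday"], "09:00", "13:00")],
)
print(snippet)
```

Generating the markup from the same data that feeds your Google Business Profile listing is one way to keep hours and contact details consistent across both.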

- Segment queries by intent in Search Console to identify CTR patterns

- Manually analyze SERPs to understand what displays before your result

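- Implement relevant structured data (opening hours, address, FAQ, How-to) to capture rich results, keeping in mind that since 2023 Google shows FAQ rich results only for a limited set of authoritative government and health sites and no longer shows How-to rich results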

- Track CTR after each major Google update, since SERP formats change

- Don't focus solely on CTR: also measure indirect brand visibility (impressions, displayed snippets)

- Diversify traffic toward queries less exposed to SERP features

❓ Frequently Asked Questions

Can a low CTR in position #1 hurt my rankings over time?

How can I tell whether my CTR is normal for my query?

Should I optimize my snippet even if the information already appears in the SERPs?

Can structured data reduce my CTR by displaying too much information?

Does Google compensate in any way for the traffic lost to rich results?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 13/04/2023

