Official statement


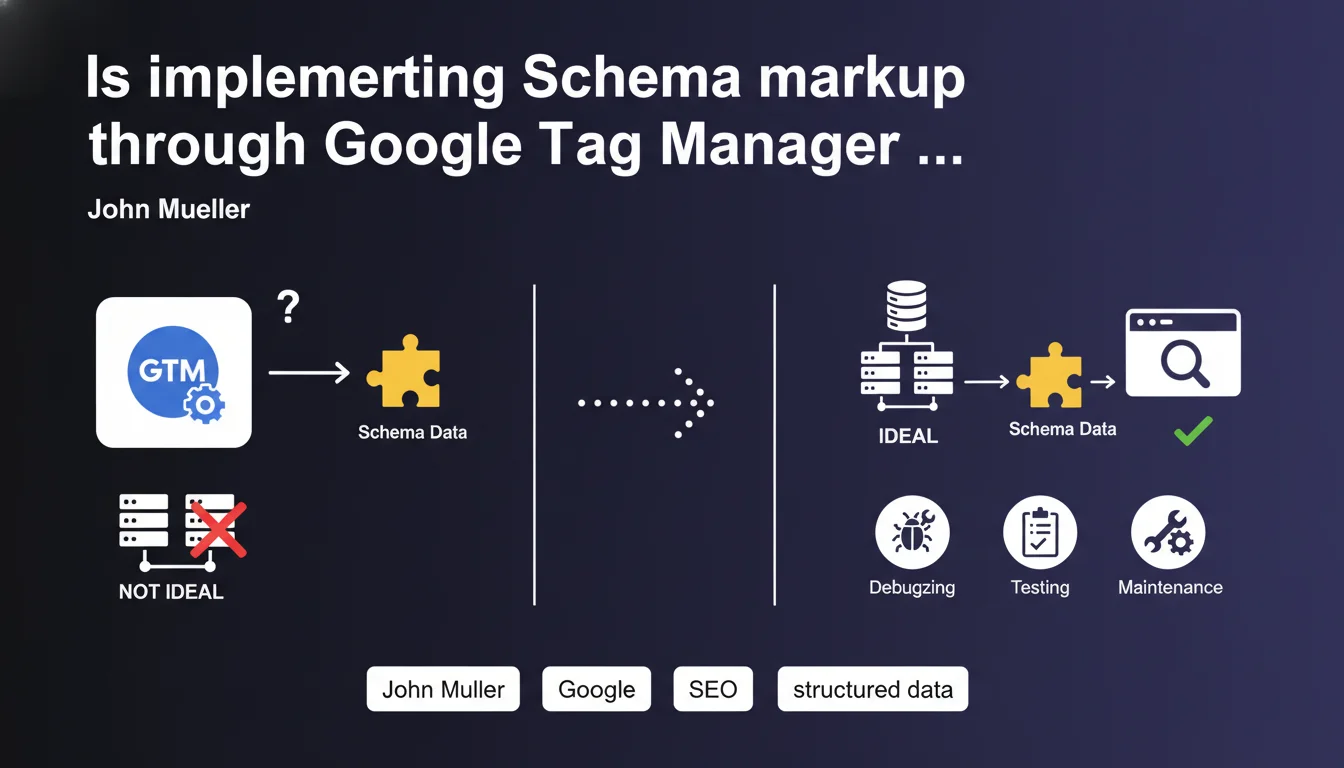

Google can technically process structured data injected via GTM, but Mueller clearly recommends server-side implementation. The main issue isn't indexation—it's maintenance: difficult debugging, less reliable testing, JavaScript dependency. For short-term tactical use, GTM works. For sustainable growth, it's a risky bet.

What you need to understand

Why is this statement coming out now?

Implementations of Schema markup via GTM have multiplied in recent years, driven by the ease of deployment without heavy technical intervention. Many agencies and consultants have adopted this method to accelerate projects, especially on rigid CMS platforms or blocked technical environments.

Mueller is responding here to a practice that has become common. His message does not condemn the method—it clarifies its operational limitations. It's a warning about technical sustainability, not about immediate effectiveness.

What exactly does Google object to with this approach?

Nothing at the level of structured data processing itself. Googlebot executes JavaScript, reads the Schema injected by GTM, and uses it normally to generate rich snippets or feed the Knowledge Graph.

The problem lies elsewhere: debugging, testing and maintenance. When Schema is injected client-side, validation tools (Search Console, Rich Results Test) don't always see the final rendering immediately. Errors are harder to trace, especially when GTM contains dozens of tags with complex firing conditions.

In which cases does this method remain acceptable?

Mueller doesn't say "never use GTM". He says it's not optimal long-term. Translation: for quick tests, POCs, or environments where touching server-side code takes months, GTM remains a valid tactical lever.

But as soon as Schema becomes critical to visibility (e-commerce with Product, media with Article/NewsArticle, local businesses with LocalBusiness), it's better to invest in a proper server-side implementation.
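As a rough illustration of what "server-side" means here, the sketch below builds the JSON-LD tag in the page template, so the markup is already in the HTML response and does not depend on GTM or JavaScript execution. The helper name `render_json_ld` and the product data are hypothetical, and the example assumes a Python backend. Your CMS or framework will have its own templating mechanism.

```python
import json


def render_json_ld(data: dict) -> str:
    """Serialize a Schema.org object into a JSON-LD <script> tag.

    Hypothetical helper: meant to be called from a server-side template
    so the markup ships in the initial HTML response.
    """
    payload = json.dumps(data, ensure_ascii=False, separators=(",", ":"))
    # Escape "<" so a value containing "</script>" cannot close the tag early.
    payload = payload.replace("<", "\\u003c")
    return f'<script type="application/ld+json">{payload}</script>'


# Hypothetical product data, for illustration only.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Example product",
    "offers": {"@type": "Offer", "price": "19.99", "priceCurrency": "EUR"},
}

print(render_json_ld(product))
```

Because the tag is part of the raw HTML, any tool that fetches the page sees it without rendering JavaScript.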

- GTM works: Google normally processes structured data injected via JavaScript

- The real issue: complicated maintenance, risky debugging, less reliable testing

- Prefer server-side for any implementation that is permanent and critical to visibility

- GTM remains acceptable for short-term tactical use or rapid testing

- Validation tools may not immediately see the final rendering via GTM

SEO Expert opinion

Is this statement consistent with observed practices?

On the ground, we find that sites with hard-coded Schema on the server side encounter fewer silent bugs. Errors reported by Search Console are clearer, validation is faster, updates are less risky.

With GTM, I've seen too many cases where a tag change, a poorly configured firing condition, or a script conflict broke the Schema without anyone noticing for weeks. Google indexes it, yes—but you're navigating blind.

What nuances should be applied to this recommendation?

Mueller remains intentionally vague on one point: at what volume or criticality does GTM become truly problematic? He provides no threshold, no objective criteria. [To verify]: does the performance of JavaScript rendering really impact Googlebot's Schema extraction rate? No public data formally proves this.

Second nuance: some CMS platforms (Shopify, certain locked WordPress configs) make server-side implementation extremely heavy. In these cases, GTM remains the lesser evil—but you must then over-document the tags, version the changes, and monitor like a hawk.

Finally, the server/client distinction becomes blurred with modern architectures (SSR, hydration). A Schema injected by Next.js via SSR is technically "server-side" even though it's JavaScript. Mueller doesn't delve into these subtleties.

In what contexts does GTM remain relevant despite everything?

GTM still makes sense in three cases: Schema A/B testing (rare but useful), quick deployments on sites where dev cycles take 3 months, and temporary Event Schema for one-off marketing operations. Let's be honest: on a well-configured WordPress site with a modern theme, there's no valid reason to use GTM for permanent Schema.

But on a monstrous legacy stack with 12 layers of IT validation, GTM can save a launch. This is pragmatism, not technical excellence—and that's acknowledged.

Practical impact and recommendations

What should you do concretely if you're using GTM for Schema?

First step: audit the criticality of each Schema injected via GTM. If it's Product Schema on e-commerce pages that generate 60% of your organic traffic, plan the server-side migration. If it's BreadcrumbList or Organization, the priority is lower.

Next, document each GTM tag related to Schema: which properties, which variables, which firing conditions. Too many sites have "phantom" Schema in GTM that no one understands anymore. It's a ticking time bomb.
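The audit and documentation steps above can be kept in a small, versioned inventory. The sketch below is one possible shape, not a standard format: the `GtmSchemaTag` fields, the list of critical types, and the 0.5 traffic threshold are all assumptions chosen for illustration and should be adapted to your own site.

```python
from dataclasses import dataclass


@dataclass
class GtmSchemaTag:
    """One Schema-related GTM tag, documented for the audit (hypothetical structure)."""
    tag_name: str
    schema_type: str
    properties: list[str]
    variables: list[str]
    trigger: str
    organic_traffic_share: float  # 0.0 to 1.0, share of organic traffic on affected pages


# Assumption: the types the article names as critical to visibility.
CRITICAL_TYPES = {"Product", "Article", "NewsArticle", "LocalBusiness"}


def migration_priority(tag: GtmSchemaTag, traffic_threshold: float = 0.5) -> str:
    """Rank a tag for server-side migration. The threshold is an arbitrary assumption."""
    if tag.schema_type in CRITICAL_TYPES:
        return "high" if tag.organic_traffic_share >= traffic_threshold else "medium"
    return "low"


inventory = [
    GtmSchemaTag("Schema - Product", "Product", ["name", "offers"],
                 ["{{Product Name}}", "{{Price}}"], "Page Path contains /product/", 0.6),
    GtmSchemaTag("Schema - Breadcrumb", "BreadcrumbList", ["itemListElement"],
                 ["{{Breadcrumb Items}}"], "All Pages", 0.9),
]

for tag in inventory:
    print(f"{tag.tag_name}: {migration_priority(tag)}")
```

Storing this file alongside the GTM container export gives you a record of what each tag is supposed to emit and why.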

How do you verify that your Schema works correctly via GTM?

Don't just rely on occasional Rich Results Test validation. Set up automated monitoring: weekly crawl with Schema extraction from rendered content, comparison with expected version, alerts on discrepancies.

Also use the "Enhancements" report in Search Console to spot errors that only appear after indexing. With GTM, the gap between the browser rendering you test and the Googlebot rendering can be significant, especially if JS takes time to execute.
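A minimal sketch of the extraction and comparison step, assuming you already have the rendered HTML of each page (for example from a headless browser or a crawler with JavaScript rendering, which is outside the scope of this example). The function names and alert wording are hypothetical. Only the Python standard library is used.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed content of every application/ld+json script tag."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.blocks = []   # successfully parsed JSON-LD payloads
        self.errors = []   # JSON parse errors, kept for alerting

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError as exc:
                self.errors.append(str(exc))


def schema_types(blocks):
    """Return the set of @type values found at the top level or inside @graph."""
    types = set()
    for block in blocks:
        for item in block if isinstance(block, list) else [block]:
            if not isinstance(item, dict):
                continue
            graph = item.get("@graph")
            for node in graph if isinstance(graph, list) else [item]:
                declared = node.get("@type") if isinstance(node, dict) else None
                if isinstance(declared, str):
                    types.add(declared)
                elif isinstance(declared, list):
                    types.update(t for t in declared if isinstance(t, str))
    return types


def check_page(rendered_html, expected_types):
    """Compare the Schema types found in rendered HTML with the expected ones."""
    parser = JsonLdExtractor()
    parser.feed(rendered_html)
    parser.close()
    found = schema_types(parser.blocks)
    alerts = [f"missing schema type: {t}" for t in sorted(set(expected_types) - found)]
    alerts += [f"invalid JSON-LD: {e}" for e in parser.errors]
    return alerts
```

Run `check_page` on each crawled URL with the Schema types you expect for that template, and send any non-empty result to whatever alerting channel you already use.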

When and how to migrate to a server-side implementation?

If you decide to migrate, do it in stages: start with the most critical Schema types (Product, Article, LocalBusiness), test in staging with a full crawl, then roll out progressively. Keep GTM running in parallel while you validate everything works.

Plan a rollback strategy: if the server-side implementation breaks, you should be able to reactivate GTM in 5 minutes. And monitor Search Console for at least 3 weeks after migration—some regressions only appear after several crawl cycles.
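While both versions exist, a parity check can confirm that the server-side markup carries everything the GTM version did before the GTM tag is switched off. The sketch below flattens two JSON-LD objects into dotted paths and reports the differences. The function names and sample data are hypothetical, and it assumes you have already extracted both objects for the same URL.

```python
def flatten(value, prefix=""):
    """Flatten nested dicts and lists into {"offers.price": "10", ...}."""
    flat = {}
    if isinstance(value, dict):
        for key, child in value.items():
            flat.update(flatten(child, f"{prefix}.{key}" if prefix else key))
    elif isinstance(value, list):
        for index, child in enumerate(value):
            flat.update(flatten(child, f"{prefix}[{index}]"))
    else:
        flat[prefix] = value
    return flat


def parity_report(gtm_schema, server_schema):
    """List properties that were lost, added, or changed by the migration."""
    old, new = flatten(gtm_schema), flatten(server_schema)
    return {
        "missing_on_server": sorted(old.keys() - new.keys()),
        "added_on_server": sorted(new.keys() - old.keys()),
        "changed": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


# Hypothetical data: the Schema GTM used to inject vs. the new server output.
gtm_version = {"@type": "Product", "name": "A",
               "offers": {"price": "10", "priceCurrency": "EUR"}}
server_version = {"@type": "Product", "name": "A",
                  "offers": {"price": "12"}, "sku": "1"}

print(parity_report(gtm_version, server_version))
```

An empty `missing_on_server` list and an empty `changed` list are a reasonable precondition for removing the corresponding GTM tag.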

- Audit the criticality of each Schema currently in GTM

- Document all GTM tags related to Schema (properties, variables, triggers)

- Set up automated monitoring of rendered Schema (crawl + extraction)

- Plan a progressive migration to server-side for critical Schemas

- Test in staging with a full crawl before any deployment

- Have a rollback plan to GTM in case of regression

- Monitor Search Console for 3 weeks post-migration

- Remove GTM Schema tags only after complete validation

❓ Frequently Asked Questions

Does Google index Schema added via GTM less effectively?

Can you use GTM to quickly test a new Schema type?

Do validation tools see Schema injected by GTM?

What are the concrete risks of using GTM for critical Schema?

Should you immediately remove all Schema from GTM?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 04/07/2022
