Official statement

Other statements from this video 6 ▾

- □ Pourquoi la standardisation du robots.txt par l'IETF change-t-elle la donne pour les crawlers ?

- □ Pourquoi Google limite-t-il la taille de robots.txt à 500 Ko ?

- □ Les flux RSS et Atom sont-ils vraiment utilisés par Google pour découvrir vos contenus ?

- □ Les sitemaps XML sont-ils vraiment indispensables sans standardisation officielle ?

- □ Pourquoi Google a-t-il ouvert le code de son parseur robots.txt ?

- □ Le robots.txt et les sitemaps XML sont-ils désormais officiellement liés ?

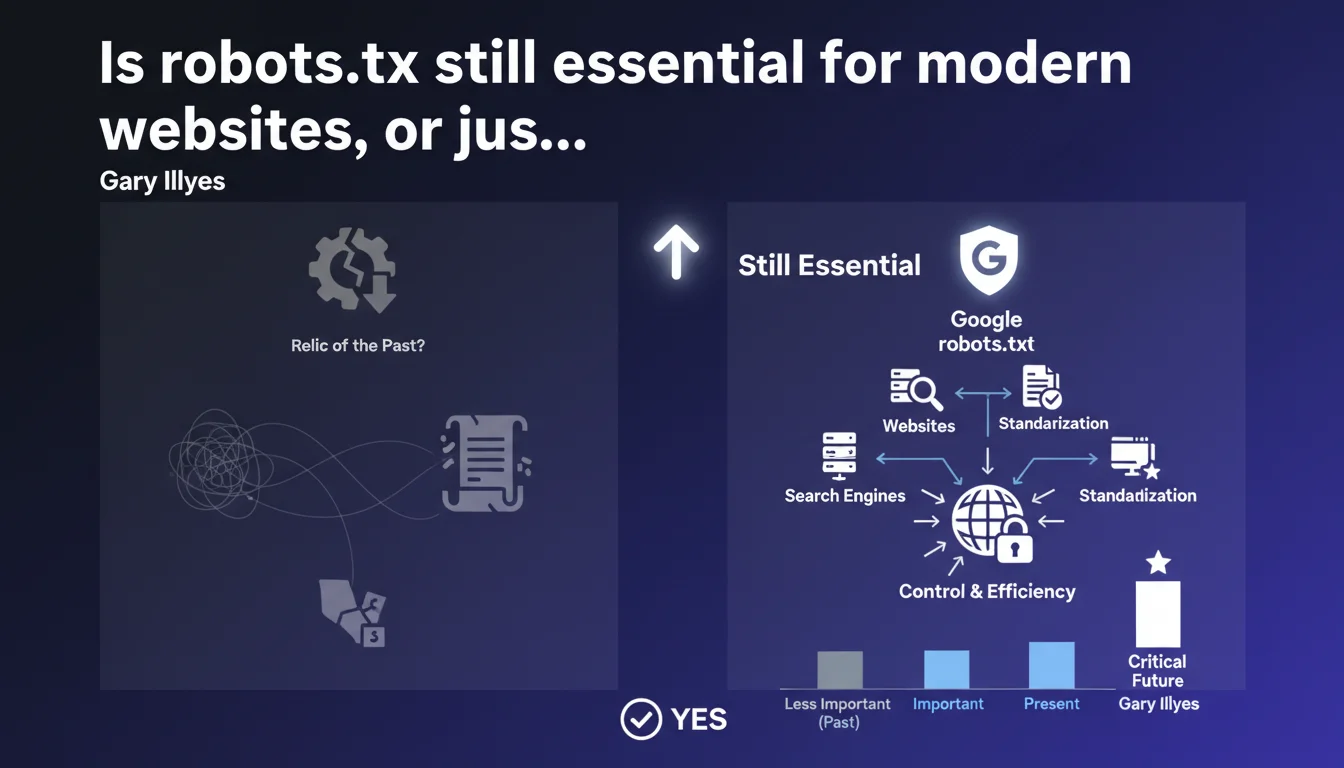

Google confirms that robots.txt remains a fundamental file for all search engines, not outdated technology. The standardization of this protocol simplifies life for site owners by unifying the rules for writing files for all crawlers. Ignoring or neglecting this file exposes you to crawl budget and indexation problems.

What you need to understand

Why does Google insist on the importance of robots.txt?

Gary Illyes' statement corrects a persistent misconception: that robots.txt would be an outdated tool, replaced by more modern methods like meta robots tags or X-Robots-Tag. That's wrong. For Google and other engines, this file remains the first line of defense for controlling what should be crawled or not.

The standardization Gary mentions refers to the passage of robots.txt to RFC 9309, which formalizes a protocol used for decades. Concretely? One file, clear syntax, and all crawlers that respect the protocol follow the same rules. No need to manage exceptions by engine.

What is the real scope of this standardization?

Standardizing robots.txt reduces the technical burden on site owners. Before standardization, some engines interpreted wildcards or complex directives differently. Today, the rules are fixed: a Disallow applies the same way at Google, Bing, Yandex, or DuckDuckGo.

For SEOs, this means less empirical testing and more predictability. A poorly written directive blocks everyone the same way — which can be a problem, but at least it's consistent.

Does robots.txt replace other crawl control methods?

No. And that's where many get it wrong. Robots.txt does not deindex: it simply prevents access. If a URL is already indexed or has backlinks, Google can keep it in its index even if it's blocked in robots.txt.

To deindex, you need to use noindex (meta or X-Robots-Tag). To manage facets, URL parameters, or fine-grained crawl budget, robots.txt remains an essential but complementary lever.

- Robots.txt: prevents crawler access to certain resources

- RFC 9309 standardization: unifies file interpretation across all engines

- Not a deindexation tool: does not remove pages from Google's index

- Complementary to noindex tags: each method has its precise role

- Direct impact on crawl budget: misconfigured, it wastes resources or blocks strategic content

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes, completely. On high-volume sites — e-commerce, marketplaces, media outlets — robots.txt remains the first-level tool for filtering crawls. Server logs confirm that Google scrupulously respects directives, especially since standardization.

However, Gary doesn't specify one crucial point: robots.txt doesn't prevent indexation if backlinks point to a blocked URL. We regularly see indexed pages with the note "No information available about this page". This is a frequent source of confusion. [To verify] in cases of migration or redesign where obsolete URLs remain visible in SERPs.

What nuances should be added to this claim?

First point: not all crawlers respect robots.txt. Malicious scrapers, aggressive monitoring bots, or AI crawlers don't care at all. The file only protects against actors playing by the rules — essentially legitimate search engines.

Second point: standardization doesn't solve everything. Divergences persist on non-standard directives like Crawl-delay (ignored by Google) or Host (Yandex only). If you're managing international SEO, these nuances matter.

In what cases does this file become counterproductive?

Blocking CSS or JavaScript resources in robots.txt has been problematic for years. Google needs these files to properly render pages and assess user experience. If you block /assets/ or /scripts/, you potentially sabotage your indexation.

Another classic pitfall: blocking paginated pages or useful filters thinking you're saving crawl budget, when they actually generate qualified traffic. The intention behind each directive matters more than the syntax.

Practical impact and recommendations

What should you concretely do to optimize your robots.txt?

Start by auditing your current file. Many sites carry obsolete directives from migrations or poorly configured plugins. Every line must have a reason to exist. If you no longer know why a rule exists, delete it.

Next, test with Google Search Console. It simulates Googlebot access and detects unintentional blocks. Also check server logs to spot frequently crawled but blocked URLs — a sign of poor prioritization.

What mistakes should you absolutely avoid?

Never block resources needed for rendering: CSS, JavaScript, fonts. Google needs these files to correctly evaluate your Core Web Vitals and mobile experience. Blocking /wp-content/themes/ on WordPress is a common and costly error.

Also avoid mixing robots.txt and noindex. If you want to deindex a section, use a meta robots noindex tag and leave crawl open. Blocking in robots.txt prevents Google from seeing the noindex directive — result: pages stay indexed.

How do you verify that your site complies with best practices?

Use a syntax validator to detect formatting errors (misplaced spaces, incorrect wildcards). Then compare your directives with coverage reports in GSC: important URLs marked as "Blocked by robots.txt" should trigger an alert.

Finally, cross-reference with crawl budget data in logs. If Googlebot is massively trying to access URLs you've blocked, either your blocking strategy is miscalibrated, or these URLs receive links that need to be handled differently.

- Audit the existing robots.txt file and remove obsolete directives

- Test each modification with Google Search Console before production deployment

- Never block CSS, JavaScript, or critical rendering resources

- Use noindex for deindexing, robots.txt only for crawl control

- Regularly check server logs to detect crawl attempts on blocked URLs

- Validate syntax with a dedicated tool (wildcards, spaces, case sensitivity)

- Monitor GSC coverage reports to spot unintentional blocks

Robots.txt remains a pillar of technical SEO, but its configuration requires rigor and deep knowledge of interactions between crawl, indexation, and rendering. A mistake can cost dearly in visibility.

If your site has complex architecture, significant volumes, or critical crawl budget challenges, these optimizations deserve expert review. Consulting with a specialized SEO agency guarantees precise diagnosis and a crawl strategy tailored to your business objectives.

❓ Frequently Asked Questions

Robots.txt empêche-t-il vraiment la désindexation d'une page ?

Puis-je bloquer les fichiers CSS et JavaScript dans robots.txt ?

Tous les moteurs de recherche respectent-ils robots.txt ?

Faut-il bloquer les paramètres d'URL ou les pages paginées dans robots.txt ?

Comment vérifier rapidement si mon robots.txt bloque des pages importantes ?

🎥 From the same video 6

Other SEO insights extracted from this same Google Search Central video · published on 17/04/2025

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.