Official statement

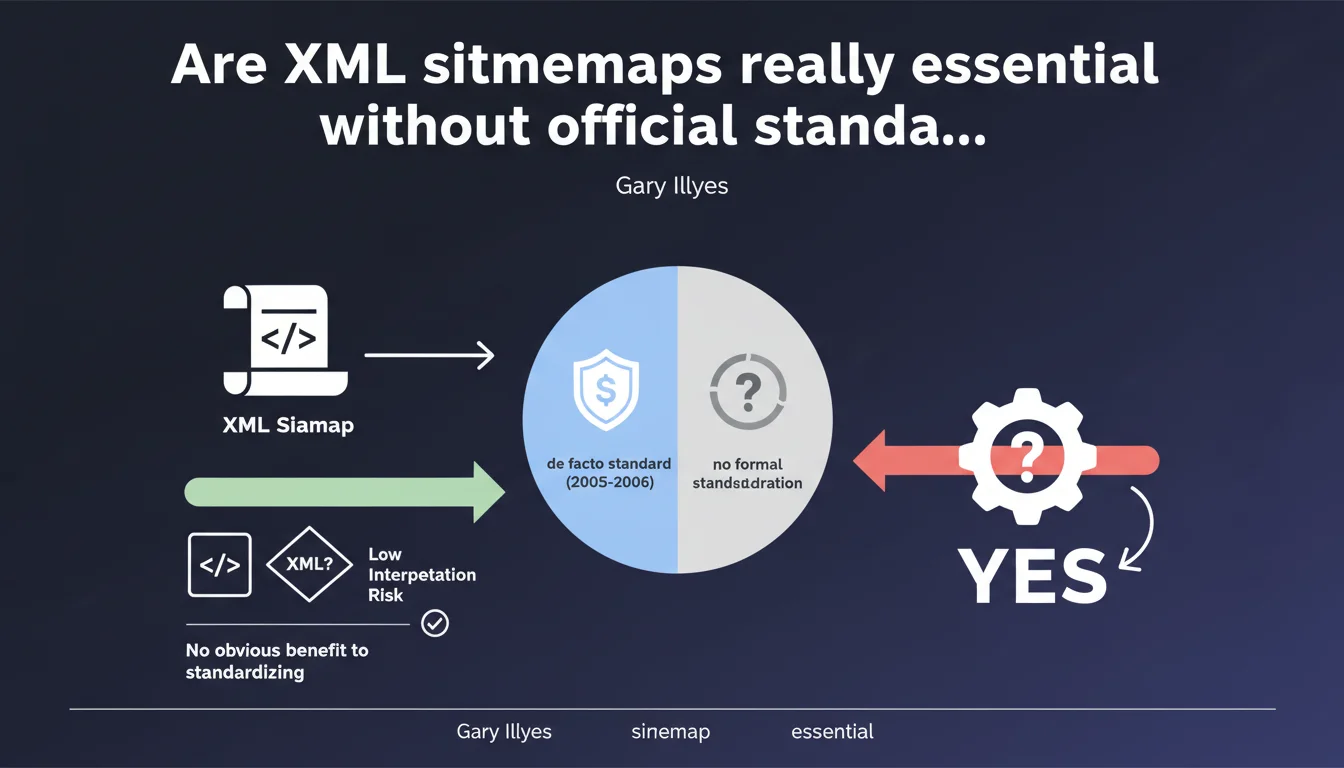

Google confirms that the XML Sitemap format has never been formally standardized by a standards body, although it has been a de facto standard almost since its creation. Google does not consider this a problem: it believes the format is simple enough that divergent interpretations are unlikely. For SEO practitioners, the statement is a reminder that a sitemap's effectiveness depends more on correct implementation than on the existence of a formal standard.

What you need to understand

Why this clarification about the lack of formal standardization?

Gary Illyes clarifies a point often misunderstood: the XML Sitemap format, although universally adopted, has never been validated by an official body such as the IETF or the W3C. This distinction between de facto standard and formal standard may seem trivial, but it reveals that the protocol is based on a consensus of usage rather than on strict normative documentation.

For an SEO practitioner, this means that the current specification, available on sitemaps.org, is the working reference, with no guarantee that it will evolve through a traditional standardization process. Google and the other search engines dictate the implementation rules, not a neutral third party.

Is the XML format really so simple that no ambiguity exists?

Google justifies the lack of standardization by the simplicity of the XML format and the low risk of divergent interpretation. In practice, the Sitemap format comes down to a small set of predefined tags: urlset and url as containers, then loc, lastmod, changefreq, and priority for each URL.
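To make that structure concrete, here is a minimal sketch that reads a small sitemap with Python's standard library. The sample document, its example.com URLs, and the `read_entries` helper are illustrative assumptions and do not come from Google's statement.

```python
import xml.etree.ElementTree as ET

# Namespace used by the sitemaps.org protocol (version 0.9).
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Illustrative sitemap; the URLs and dates are placeholders.
SAMPLE = """<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/</loc>
    <lastmod>2025-04-17</lastmod>
    <changefreq>weekly</changefreq>
    <priority>0.8</priority>
  </url>
  <url>
    <loc>https://example.com/about</loc>
  </url>
</urlset>"""

TAGS = ("loc", "lastmod", "changefreq", "priority")


def read_entries(xml_text):
    """Return one dict per <url> entry; absent optional tags map to None."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    entries = []
    for url in root.findall("sm:url", NS):
        entries.append({
            tag: url.findtext(f"sm:{tag}", default=None, namespaces=NS)
            for tag in TAGS
        })
    return entries


for entry in read_entries(SAMPLE):
    print(entry)
```

Only `loc` is required for each entry; the second URL in the sample shows that the other three tags can simply be left out.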

Let's be honest: this apparent simplicity masks gray areas. Google ignores the optional changefreq and priority tags when it crawls. And because there are no clear rules for handling URL variants, redirects, or hreflang annotations in sitemaps, practices vary from one CMS to another.

What are the concrete risks of this informality?

The lack of formal standardization creates a risk of fragmentation if search engines adopt divergent interpretations in the future. Currently, Google, Bing, and Yandex broadly share the same reading of the format.

But the situation could change: undocumented extensions, unilateral changes to the specification by one search engine, or the introduction of new proprietary tags would create implementation gaps. For now, this scenario remains hypothetical, but informality leaves the door open.

- De facto standard: adopted by consensus of usage, without validation by a standardization body

- Widely ignored tags: changefreq and priority do not influence Google's crawl

- No structured evolution process: search engines can unilaterally modify their interpretations

- Low but non-zero fragmentation risk in case of divergent evolution between engines

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes, for the most part. XML sitemaps have functioned stably for nearly two decades without formal standardization, which supports Google's argument that the format is simple enough to do without official standards.

However, this stability relies on Google's dominance in the ecosystem. If an alternative search engine were to gain market share with a different interpretation of optional tags or protocol extensions, this informality could become a problem. For now, Google sets the rules, and as long as that is the case, formal standardization adds little in practice.

What nuances should be added to this simplicity claim?

Gary Illyes minimizes the risk of divergent interpretation by emphasizing the simplicity of the XML format. But this view ignores the complexities of actual implementation on large sites.

An e-commerce site with millions of pages, regional variants, dynamic URL parameters, and complex hreflang annotations does not generate a "simple" sitemap. Common errors (incorrect URLs, 301 redirects listed in the sitemap, inaccurate lastmod values) stem not from how the format is interpreted but from how well it is handled operationally. And there, the absence of strict normative documentation leaves room for approximate practices.

[To verify]: Google has never published quantified data on the error rate in sitemaps submitted via Search Console. The claim that "there is little risk" should be backed by real metrics.

In what cases does this informality become a handicap?

The absence of a formal standard becomes problematic when the protocol needs to evolve. Emerging needs, such as declaring enriched video content, advanced structured data, or AMP/PWA versions, require extensions to the base format.

These extensions exist (video sitemaps, images, news), but they are documented only piecemeal on sitemaps.org and in Google Search Central documentation. Without a standardization process, each search engine can introduce its own variants, which forces SEO practitioners to maintain engine-specific implementations.

Practical impact and recommendations

What should you do concretely with this information?

This statement changes nothing about best practices for XML sitemap implementation. Continue to generate clean, up-to-date sitemaps that conform to the specifications documented on sitemaps.org and in Google's Search Central documentation.

Focus on the elements that Google actually uses: valid URLs in <loc>, accurate modification dates in <lastmod>, and the exclusion of URLs that are blocked by robots.txt or marked noindex. Leave out changefreq and priority, since Google ignores them anyway.
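As a rough illustration of that advice, the sketch below writes a sitemap that contains only `<loc>` and `<lastmod>`. The `build_sitemap` helper and the example.com pages are hypothetical, and the code assumes Python 3.8 or later for the `xml_declaration` argument.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap bytes from (url, last_modified) pairs.

    Only <loc> and <lastmod> are written; changefreq and priority
    are deliberately omitted.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        if modified is not None:
            # YYYY-MM-DD is one of the W3C date formats the protocol accepts.
            ET.SubElement(entry, "lastmod").text = modified.isoformat()
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Placeholder pages; a real generator would read these from the CMS.
pages = [
    ("https://example.com/", date(2025, 4, 17)),
    ("https://example.com/contact", None),  # unknown date: omit <lastmod>
]
print(build_sitemap(pages).decode("utf-8"))
```

Omitting `<lastmod>` when the real modification date is unknown is a design choice in this sketch: a missing date seems preferable to an inaccurate one.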

What errors should you avoid in sitemap management?

The lack of formal standardization does not exempt you from rigor. The most frequent errors observed in the field relate to the quality of the data in the sitemap, not to format interpretation.

Avoid including URLs that return 301/302 redirects, pages blocked by robots.txt, or URLs with unnecessary parameters. Every URL in your sitemap must be indexable and relevant. A sitemap cluttered with thousands of redirects or orphan pages undermines Google's confidence in your declarations.
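One way to apply these exclusions is to filter crawl results before the sitemap is generated. The sketch below assumes you already have per-URL crawl data from your own crawler; the `CrawledPage` record and the `sitemap_candidates` function are hypothetical names. Note that Python's `urllib.robotparser` does not reproduce every behavior of Google's own robots.txt parser (wildcard patterns, for instance), so treat the result as an approximation.

```python
from dataclasses import dataclass
from urllib.robotparser import RobotFileParser


@dataclass
class CrawledPage:
    url: str
    status: int            # HTTP status observed by your own crawler
    noindex: bool = False  # True if a noindex directive was found


def sitemap_candidates(pages, robots_txt, user_agent="Googlebot"):
    """Keep only URLs that return 200, are not noindex, and are crawlable."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return [
        page.url
        for page in pages
        if page.status == 200
        and not page.noindex
        and rules.can_fetch(user_agent, page.url)
    ]


# Placeholder data for illustration only.
robots_txt = "User-agent: *\nDisallow: /cart\n"
pages = [
    CrawledPage("https://example.com/", 200),
    CrawledPage("https://example.com/old-page", 301),
    CrawledPage("https://example.com/cart/checkout", 200),
    CrawledPage("https://example.com/internal", 200, noindex=True),
]
print(sitemap_candidates(pages, robots_txt))
```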

How do you verify the compliance and effectiveness of your sitemaps?

Use Search Console to monitor processing errors and the coverage rate of submitted URLs. If Google indexes fewer than 80% of the URLs declared in your main sitemap, dig deeper: either your sitemap contains irrelevant URLs, or a structural issue is preventing indexing.

Regularly audit the consistency between your sitemap and your internal linking. A sitemap that declares orphan URLs, meaning URLs not linked from any other page on the site, is a signal of poor information architecture.
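Both checks reduce to simple set arithmetic once the data has been exported. The following sketch assumes three URL lists obtained by your own means (the sitemap, the indexed URLs reported in Search Console, and the URLs reached through internal links); the function names and sample data are hypothetical, and the 80% threshold is this article's rule of thumb rather than a Google figure.

```python
def coverage_rate(submitted, indexed):
    """Share of submitted sitemap URLs that also appear as indexed."""
    submitted = set(submitted)
    if not submitted:
        return 0.0
    return len(submitted & set(indexed)) / len(submitted)


def orphan_urls(sitemap_urls, internally_linked):
    """Sitemap URLs that no internal link points to."""
    return sorted(set(sitemap_urls) - set(internally_linked))


# Placeholder data for illustration only.
sitemap_urls = [f"https://example.com/p{i}" for i in range(1, 6)]
indexed = ["https://example.com/p1", "https://example.com/p2", "https://example.com/p3"]
linked = ["https://example.com/p1", "https://example.com/p2",
          "https://example.com/p3", "https://example.com/p4"]

rate = coverage_rate(sitemap_urls, indexed)
print(f"coverage: {rate:.0%}")
if rate < 0.80:
    print("below the 80% rule of thumb, investigate")
print("orphans:", orphan_urls(sitemap_urls, linked))
```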

- Generate sitemaps that conform to the specifications on sitemaps.org and in Google's Search Central documentation

- Exclude URLs that redirect (301/302), are blocked by robots.txt, or are marked noindex

- Use only the <loc> and <lastmod> tags, and leave out changefreq and priority

- Segment large sitemaps (50,000 URLs max per file, 50 MB max uncompressed)

- Monitor coverage rate in Search Console (target: >80% of URLs indexed)

- Verify consistency between sitemap and internal linking — no orphan URLs

- Automate lastmod date updates when actual content changes
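The segmentation item in the checklist above can be sketched as follows: split the URL list into chunks of at most 50,000 and reference each resulting file from a sitemap index, which is part of the sitemaps.org protocol. The helper names and the `sitemap-N.xml` file naming are hypothetical, and this sketch does not check the 50 MB uncompressed size limit.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file limit from the sitemaps.org protocol


def chunk_urls(urls, size=MAX_URLS_PER_FILE):
    """Split a list of URLs into consecutive chunks of at most `size`."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_index(sitemap_locations):
    """Build a sitemap index (as bytes) that references each sitemap file."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for location in sitemap_locations:
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = location
    return ET.tostring(index, encoding="utf-8", xml_declaration=True)


# Placeholder URLs; the file naming scheme is an assumption.
urls = [f"https://example.com/product/{i}" for i in range(120_000)]
chunks = chunk_urls(urls)
locations = [
    f"https://example.com/sitemap-{n}.xml" for n in range(1, len(chunks) + 1)
]
print([len(chunk) for chunk in chunks])
print(build_index(locations).decode("utf-8"))
```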

❓ Frequently Asked Questions

Does Google actually use the changefreq and priority tags in sitemaps?

Could the absence of formal standardization cause problems in the future?

Should I include all my pages in the XML sitemap?

What is the recommended maximum size for a sitemap file?

Are the sitemap extensions (video, images, news) standardized?


Other SEO insights extracted from this same Google Search Central video · published on 17/04/2025
