Official statement

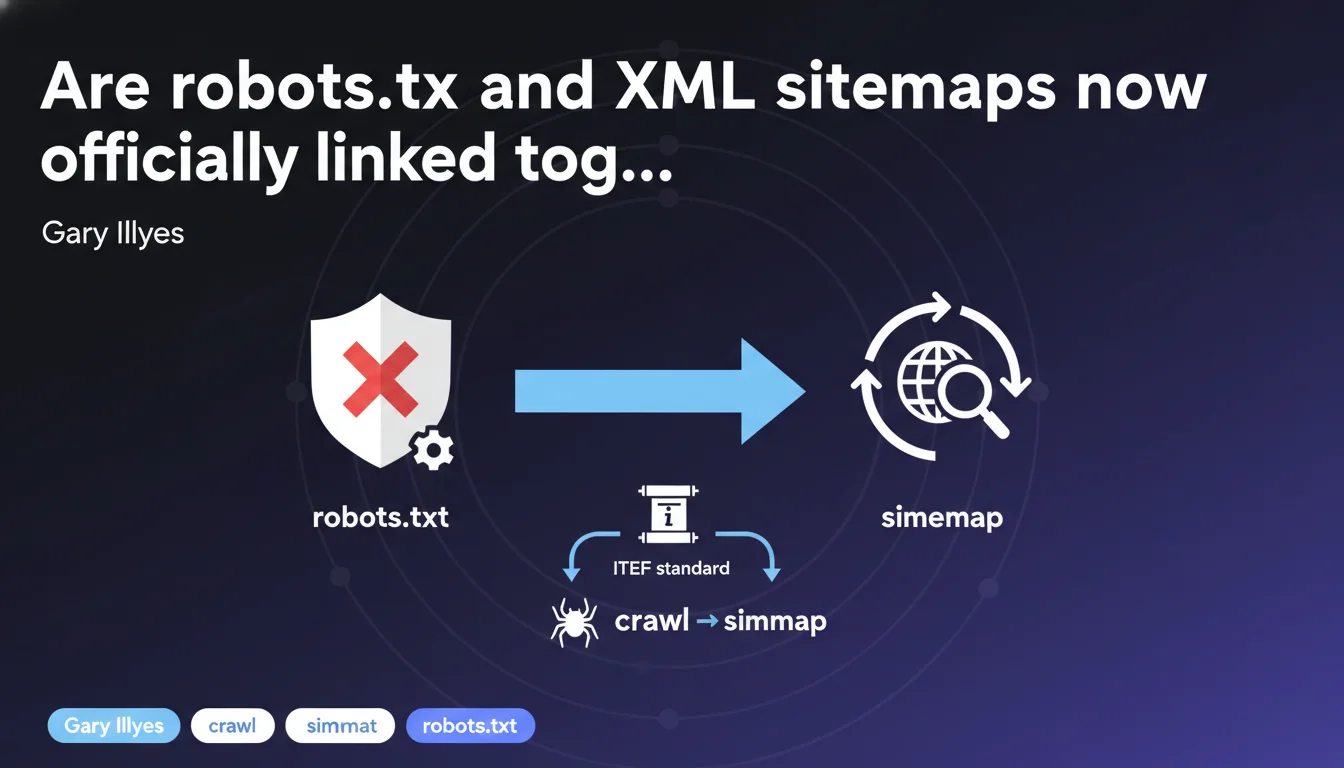

The IETF is integrating XML sitemaps as an informative reference in the robots.txt standard, creating a formal connection between the two mechanisms. This recognition formalizes an already common practice, but it raises questions about the real impact on crawling and indexing. Concretely, nothing changes immediately, although this standardization could pave the way for future developments.

What you need to understand

What does this informative reference actually change in practice?

The robots.txt standard from the IETF (Internet Engineering Task Force) now mentions XML sitemaps as an informative reference. An informative reference is non-normative: it points readers to related material without adding requirements. This formal link still recognizes that the two files, historically separate, work in tandem to guide crawlers.

In practice, the Sitemap: directive in robots.txt has already existed for years. Google and Bing use it daily. This standardization doesn't modify the behavior of search engines, but it anchors this practice within a standardized framework.
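To make the directive concrete, here is a minimal Python sketch of what such a declaration looks like and how a crawler might read it. The robots.txt content and the example.com URLs are placeholders invented for illustration. The parser is `urllib.robotparser` from Python's standard library (`site_maps()` requires Python 3.8 or later), not the parser Google uses.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the example.com URLs are placeholders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

Sitemap: https://www.example.com/sitemap-pages.xml
Sitemap: https://www.example.com/sitemap-products.xml
"""


def declared_sitemaps(robots_txt: str) -> list[str]:
    """Return the sitemap URLs declared via Sitemap: lines."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap: line is present.
    return parser.site_maps() or []


if __name__ == "__main__":
    for url in declared_sitemaps(ROBOTS_TXT):
        print(url)
```

The Sitemap: lines are independent of any User-agent group, so their position in the file does not matter.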

Why formalize a link that already existed?

Because until now, nothing obliged search engines to respect or even acknowledge the Sitemap: directive in robots.txt. It was a convention described by the sitemaps.org protocol but absent from any IETF standard.

This formalization encourages consistency between engines and third-party tools, although an informative reference does not oblige any crawler to support the directive. It also clarifies the role of robots.txt: not only to block access, but also to indicate where to find URLs to crawl.

What are the benefits for a website?

- Centralization: declaring sitemaps directly in robots.txt avoids having to go through Search Console or webmaster tools.

- Automation: new crawlers or third-party robots can discover sitemaps without manual configuration.

- Validation: a recognized standard facilitates auditing and quality control of technical files.

- Durability: this recognition reduces the risk that Google or other actors will ignore this directive in the future.

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Absolutely. The Sitemap: directive in robots.txt has been functioning for at least 15 years. Google has always respected it, just like the declaration via Search Console.

What's puzzling is the timing. Why formalize now a practice that's already universal? Either Google is anticipating a future evolution in crawling (differentiated treatment based on sitemap source?), or the IETF is simply seeking to document existing practice. [To be verified]: no public data confirms a change in Googlebot behavior.

Should we expect an impact on crawl budget or indexation?

No, not in the immediate term. Sites that already declare their sitemap in robots.txt will see no change. Those that don't can continue using Search Console without issue.

The only case where this formalization matters: sites with multiple sitemaps or complex architectures. Centralizing all declarations in robots.txt simplifies management — but the tech team still needs to keep this file updated. An obsolete sitemap declared in robots.txt can create more problems than it solves.

What nuances should be added?

This standardization doesn't make robots.txt essential for declaring a sitemap. Google continues to accept declarations via Search Console, which remains the most reliable method for monitoring indexation errors.

Another point: the Sitemap: directive doesn't influence crawl priority. A search engine can ignore an entire sitemap if it judges the content to be of low quality or already known. Formalizing the link doesn't change this reality.

Practical impact and recommendations

What should you do concretely after this announcement?

If your robots.txt already contains one or more Sitemap: directives, verify that they point to valid and up-to-date URLs. A sitemap that returns a 404 or lists obsolete URLs may waste crawl requests and will clutter your audits. It's better to remove it than leave it in error.
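This check can be scripted. The sketch below is one possible approach, not an official tool: `http_status` and `broken_sitemaps` are hypothetical helper names, and the status lookup is injectable so the logic can be exercised without network access. Some servers reject HEAD requests, in which case a GET would be needed instead.

```python
from urllib.error import HTTPError
from urllib.request import Request, urlopen


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status for url, or 0 if no response was received."""
    request = Request(url, method="HEAD")
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        return error.code  # e.g. 404 or 500
    except OSError:
        return 0  # DNS failure, refused connection, timeout, ...


def broken_sitemaps(urls, fetch_status=http_status) -> dict[str, int]:
    """Map each sitemap URL that does not return 200 to its status code."""
    statuses = {url: fetch_status(url) for url in urls}
    return {url: code for url, code in statuses.items() if code != 200}
```

Any URL that appears in the result is a candidate for removal from robots.txt or for a fix on the server side.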

If you're not yet using this method, test it in parallel with Search Console. Declare your main sitemap in robots.txt and monitor server logs to confirm that Googlebot is retrieving it properly.
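For the log monitoring step, a rough Python sketch follows. It assumes access logs in the common "combined" format and identifies Googlebot by user-agent string only, which can be spoofed, so treat the result as an indication rather than proof. The sample log lines, `ExampleBot`, and the `googlebot_hits` helper are invented for illustration.

```python
import re

# Captures the request path, status, and user-agent of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"\s*$'
)

# Hypothetical log excerpt.
SAMPLE_LOG = [
    '66.249.66.1 - - [17/Apr/2025:10:00:00 +0000] "GET /robots.txt HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [17/Apr/2025:10:00:05 +0000] "GET /sitemap.xml HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [17/Apr/2025:10:01:00 +0000] "GET /sitemap.xml HTTP/1.1" 200 2048 "-" "ExampleBot/1.0"',
    '66.249.66.1 - - [17/Apr/2025:10:02:00 +0000] "GET /products/ HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]


def googlebot_hits(log_lines, paths):
    """Return (path, status) for each Googlebot request to one of the given paths."""
    wanted = set(paths)
    hits = []
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        if "Googlebot" in match["agent"] and match["path"] in wanted:
            hits.append((match["path"], int(match["status"])))
    return hits


if __name__ == "__main__":
    print(googlebot_hits(SAMPLE_LOG, ["/robots.txt", "/sitemap.xml"]))
```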

What mistakes should you absolutely avoid?

Don't multiply inconsistent declarations. If a sitemap is listed in robots.txt and in Search Console with different URLs, Google will reportedly prioritize the Search Console one ([To be verified]: no public documentation confirms this), and either way you're creating confusion in your audits.
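Spotting such inconsistencies amounts to comparing two lists. The Python sketch below assumes you have already gathered both lists yourself, for example by reading robots.txt and by exporting the sitemaps submitted in Search Console. The `declaration_gaps` helper is a hypothetical name.

```python
def declaration_gaps(robots_urls, console_urls):
    """Report sitemap URLs declared in only one of the two places."""
    robots_set, console_set = set(robots_urls), set(console_urls)
    return {
        "only_in_robots_txt": sorted(robots_set - console_set),
        "only_in_search_console": sorted(console_set - robots_set),
    }


if __name__ == "__main__":
    gaps = declaration_gaps(
        ["https://www.example.com/sitemap.xml"],
        ["https://www.example.com/sitemap_index.xml"],
    )
    for place, urls in gaps.items():
        print(place, urls)
```

The comparison is an exact string match, so differences in protocol, host, or trailing slash will show up as gaps, which is usually what an audit wants to surface.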

Another pitfall: declaring a sitemap index in robots.txt without maintaining the sub-sitemaps. If a sub-sitemap returns a 500 error, the entire index becomes suspect. It's better to declare the final sitemaps directly.
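If you do keep a sitemap index, its sub-sitemaps can at least be enumerated automatically so that each one can be checked. The following Python sketch parses an index with the standard-library `xml.etree.ElementTree`, using the namespace defined by the sitemaps.org protocol. The XML sample and the `child_sitemaps` helper are illustrative assumptions.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap index; the URLs are placeholders.
SAMPLE_INDEX = """\
<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap><loc>https://www.example.com/sitemap-pages.xml</loc></sitemap>
  <sitemap><loc>https://www.example.com/sitemap-products.xml</loc></sitemap>
</sitemapindex>
"""


def child_sitemaps(index_xml: str) -> list[str]:
    """Return the sub-sitemap URLs listed in a sitemap index document."""
    root = ET.fromstring(index_xml)
    locs = root.findall("sm:sitemap/sm:loc", SITEMAP_NS)
    return [loc.text.strip() for loc in locs if loc.text]


if __name__ == "__main__":
    for url in child_sitemaps(SAMPLE_INDEX):
        print(url)
```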

How do you verify that your configuration is optimal?

- Open your robots.txt file: each Sitemap: line must point to an absolute, accessible URL (preferably in HTTPS) that returns a 200 code.

- Test each sitemap in Search Console to verify it contains no parsing errors or blocked URLs.

- Analyze your server logs: Googlebot should request robots.txt before crawling the sitemap URLs. If it doesn't, the link may not be exploited.

- Document the declaration method chosen (robots.txt, Search Console, or both) to avoid duplicates during future audits.

- Automate robots.txt updates if your CMS dynamically generates sitemaps. A static file quickly becomes obsolete.
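The automation point in the checklist above could be sketched as follows in Python. This assumes your CMS can hand over the current list of sitemap URLs; `render_robots_txt` is a hypothetical helper, not part of any CMS. It rejects relative URLs, since the Sitemap: directive expects a full URL.

```python
from urllib.parse import urlsplit


def render_robots_txt(rules: str, sitemap_urls) -> str:
    """Append one Sitemap: line per URL to an existing block of rules."""
    lines = [rules.rstrip("\n"), ""]
    for url in sitemap_urls:
        parts = urlsplit(url)
        # Reject anything that is not an absolute http(s) URL.
        if parts.scheme not in ("http", "https") or not parts.netloc:
            raise ValueError(f"Sitemap URL must be absolute: {url!r}")
        lines.append(f"Sitemap: {url}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_robots_txt(
        "User-agent: *\nDisallow: /admin/\n",
        ["https://www.example.com/sitemap.xml"],
    ))
```

Regenerating the file whenever the sitemap list changes keeps the declarations from drifting out of date.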

❓ Frequently Asked Questions

Do I now have to declare my sitemap in robots.txt?

If I declare my sitemap in robots.txt, can I remove it from Search Console?

Is a sitemap declared in robots.txt crawled faster?

What happens if several sitemaps are declared in both robots.txt and Search Console?

Does this standardization affect other search engines such as Bing?

Source: Google Search Central video · published on 17/04/2025
