Official statement

Other statements from this video 12 ▾

- □ Pourquoi Google refuse-t-il désormais certaines directives dans le robots.txt ?

- □ Comment Google gère-t-il réellement les codes de statut HTTP lors du crawl ?

- □ Pourquoi Google extrait-il les balises meta robots et canonical pendant l'indexation plutôt qu'au crawl ?

- □ Pourquoi un noindex sur une page hreflang peut-il contaminer tout votre cluster international ?

- □ Faut-il vraiment compter sur JavaScript pour gérer le noindex ?

- □ Comment désindexer un PDF ou un fichier binaire avec l'en-tête X-Robots-Tag ?

- □ La directive unavailable_after ralentit-elle vraiment le crawling de Google ?

- □ Faut-il désactiver le cache Google pour maîtriser l'affichage de vos snippets ?

- □ Peut-on vraiment forcer Google à rafraîchir un snippet sans être propriétaire du site ?

- □ L'outil de suppression de Google supprime-t-il vraiment vos URLs de l'index ?

- □ Pourquoi Google met-il des mois à supprimer définitivement une page de son index ?

- □ L'outil de suppression Google bloque-t-il réellement le crawl des pages ?

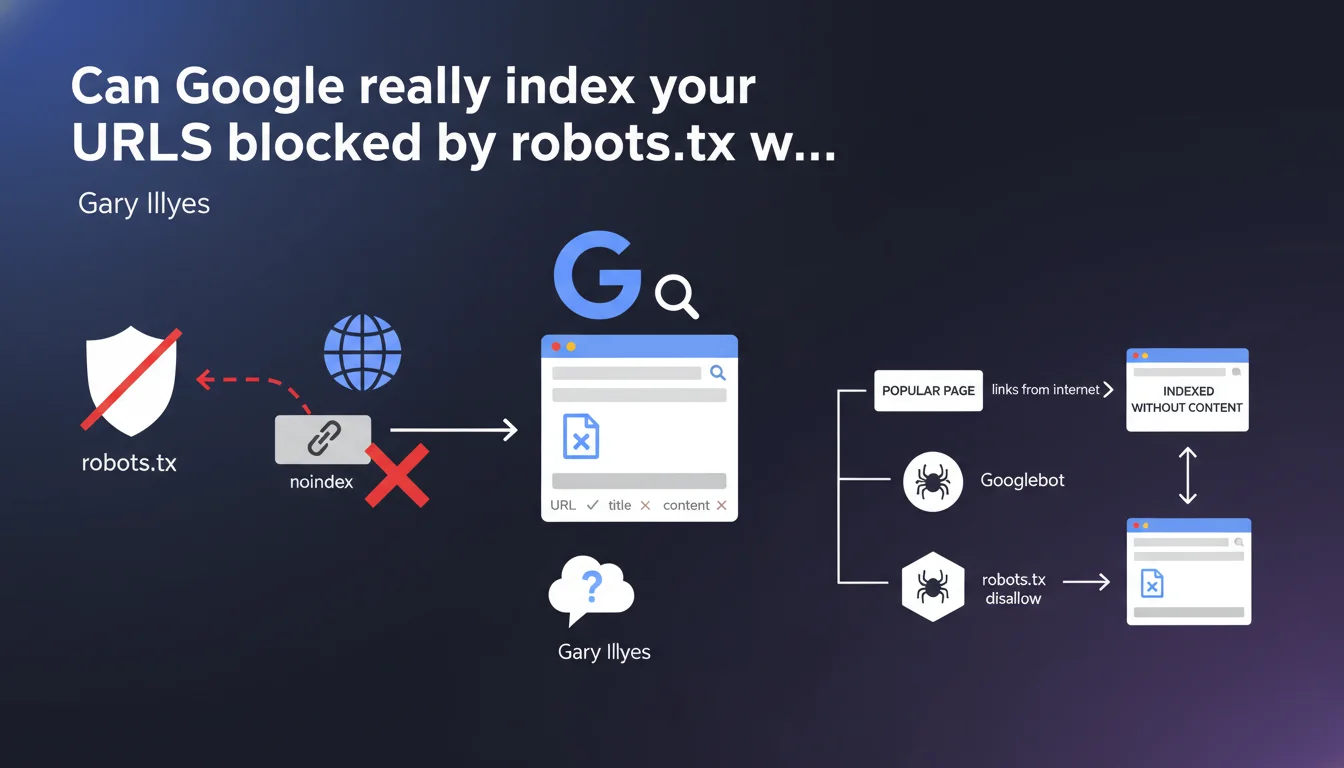

Google can index the URL of a page blocked by robots.txt if it's very popular on the web, but without its content or snippet. The problem: your meta noindex tag will never be read because of the robots.txt block, leaving you with no real control over this partial indexation.

What you need to understand

How can Google index a page it doesn't crawl?

This is the paradox that confuses many SEO professionals. When you block a URL via robots.txt disallow, you prevent Googlebot from crawling the page. Logically, no crawl = no indexation.

Except Google doesn't work solely on crawling. If a page accumulates enough external popularity signals — backlinks, social media mentions, shares — Google can decide to index the URL alone. Not the content, just the address. The result in the SERPs will display the URL and possibly a reconstructed title, but no snippet.

Why doesn't the meta noindex tag work in this case?

For a meta noindex tag to be taken into account, Google must crawl the page and read its HTML code. If robots.txt blocks access, Googlebot can never reach this tag.

It's a conflicting directive: you're prohibiting crawl while hoping Google will respect an instruction it physically cannot see. In this configuration, robots.txt takes precedence — but in a counter-intuitive way, since the page can still appear in the index.

What popularity signals trigger this indexation?

Google doesn't provide a precise threshold, but we're talking about pages that receive quality backlinks, frequent mentions in third-party content, or a significant volume of shares. Think of sensitive PDFs, internal documents that leak, or staging pages that get linked by mistake.

Partial indexation isn't systematic. It happens when Google determines that the URL has sufficient relevance for users, despite not having access to the content.

- robots.txt disallow blocks crawl, not necessarily indexation

- A very popular URL can be indexed without its content or snippet

- The meta noindex tag will never be read if robots.txt blocks access

- Only external signals (backlinks, mentions) trigger this partial indexation

- The displayed result will be a bare URL, sometimes with a reconstructed title

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, and it's actually a classic finding in poorly conducted SEO audits. We regularly see sites that block entire sections via robots.txt — /admin/, /test/, /staging/ — and still end up with these URLs in the index. Often discovered by chance using a site: command.

The problem is that this partial indexation goes unnoticed until it creates a reputation issue or phantom duplicate content problem. A staging URL indexed without a snippet is discreet — but it can also point to sensitive or unfinished content.

Why doesn't Google completely block indexation in this case?

Because robots.txt was never designed to manage indexation, only crawling. It's a 90s-era protocol meant to save bandwidth, not to control SERP visibility.

Google has always maintained this position: if you want to prevent indexation, use meta noindex or X-Robots-Tag. But the nuance here is that these directives require a crawl to be read. [To verify]: Google provides no data on the popularity threshold triggering this partial indexation, nor on the frequency of this phenomenon.

Are there exceptions or edge cases?

Yes. A URL with no backlinks or external mentions has little chance of being indexed, even if it's technically accessible. Conversely, a page blocked by robots.txt but massively shared on Reddit or Hacker News can get indexed within hours.

Another nuance: if you temporarily unblock a URL via robots.txt to let Google read your meta noindex, then block it again, you create a opportunistic crawl window. Google can index the content during this timeframe, especially if the page changes status frequently.

Practical impact and recommendations

What should you concretely do to avoid this partial indexation?

First, never rely solely on robots.txt to manage indexation. If a page must stay out of the index, the recommended strategy is to leave it crawlable but add a meta noindex tag or an X-Robots-Tag: noindex header.

For truly sensitive content — internal data, staging pages, test environments — use HTTP authentication (login/password at the server level) or IP whitelisting restrictions. No external signal can force indexation of an inaccessible page.

How do you detect if your site already suffers from this problem?

Run a site:yourdomain.com search in Google and scrutinize the results. Indexed URLs without snippets, with just a generic title or bare URL, are suspicious. Then cross-reference with your robots.txt to identify pages blocked from crawl but indexed anyway.

Also use Google Search Console: Pages section > "Why pages aren't indexed" > "Blocked by robots.txt". If some of these URLs appear in the index anyway, you're right in the case described by Gary Illyes.

What mistakes must you absolutely avoid?

Don't block a page with robots.txt that you want to deindex via meta noindex. This is a common error: you think you're securing doubly, but in reality you're canceling the noindex effect. Google can't read a directive it never crawled.

Another trap: temporarily unblocking robots.txt to "let" noindex through, then blocking again. Risky timing, and you risk creating unpredictable indexation fluctuations. Better to choose a stable strategy.

- Allow crawl of pages with meta noindex rather than blocking them with robots.txt

- Use HTTP authentication or IP whitelisting for truly sensitive content

- Regularly audit indexed URLs via site: command and Search Console

- Cross-reference robots.txt data with actual indexation status to detect inconsistencies

- Never combine robots.txt disallow and meta noindex on the same URL

- Monitor incoming backlinks to sensitive pages using tools like Ahrefs or Majestic

❓ Frequently Asked Questions

Peut-on forcer la désindexation d'une URL bloquée par robots.txt ?

Combien de backlinks faut-il pour déclencher cette indexation partielle ?

Robots.txt disallow protège-t-il contre le scraping ou la copie de contenu ?

Si je débloque robots.txt pour ajouter noindex, combien de temps avant que Google crawle la page ?

Une URL indexée sans snippet a-t-elle un impact SEO négatif ?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 04/08/2022

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.