Official statement

Other statements from this video 14 ▾

- □ Faut-il changer de domaine lors d'une réduction de catalogue ou conserver l'existant ?

- □ Les backlinks vers une page 404 sont-ils définitivement perdus ou récupérables ?

- □ Peut-on vraiment avoir des millions de redirections 301 sans impacter son SEO ?

- □ Faut-il vraiment ignorer les erreurs 404 dans Google Search Console ?

- □ Google crawle-t-il vraiment les liens dans les menus déroulants au survol ?

- □ Combien de redirections peut-on vraiment mettre sur un site sans pénalité SEO ?

- □ Faut-il privilégier une personne ou une organisation comme auteur d'un article pour le SEO ?

- □ Faut-il vraiment aligner URL, title et H1 pour ranker en SEO ?

- □ Bloquer une page de redirection par robots.txt peut-il vraiment empêcher le passage du PageRank ?

- □ Les tirets multiples dans un nom de domaine pénalisent-ils votre SEO ?

- □ Faut-il publier du contenu tous les jours pour bien ranker sur Google ?

- □ Faut-il vraiment abandonner le texte dans les images pour le SEO ?

- □ Désindexer des URLs : Google limite-t-il vraiment les options à deux méthodes ?

- □ Les Core Web Vitals écrasent-ils vraiment la pertinence dans le classement Google ?

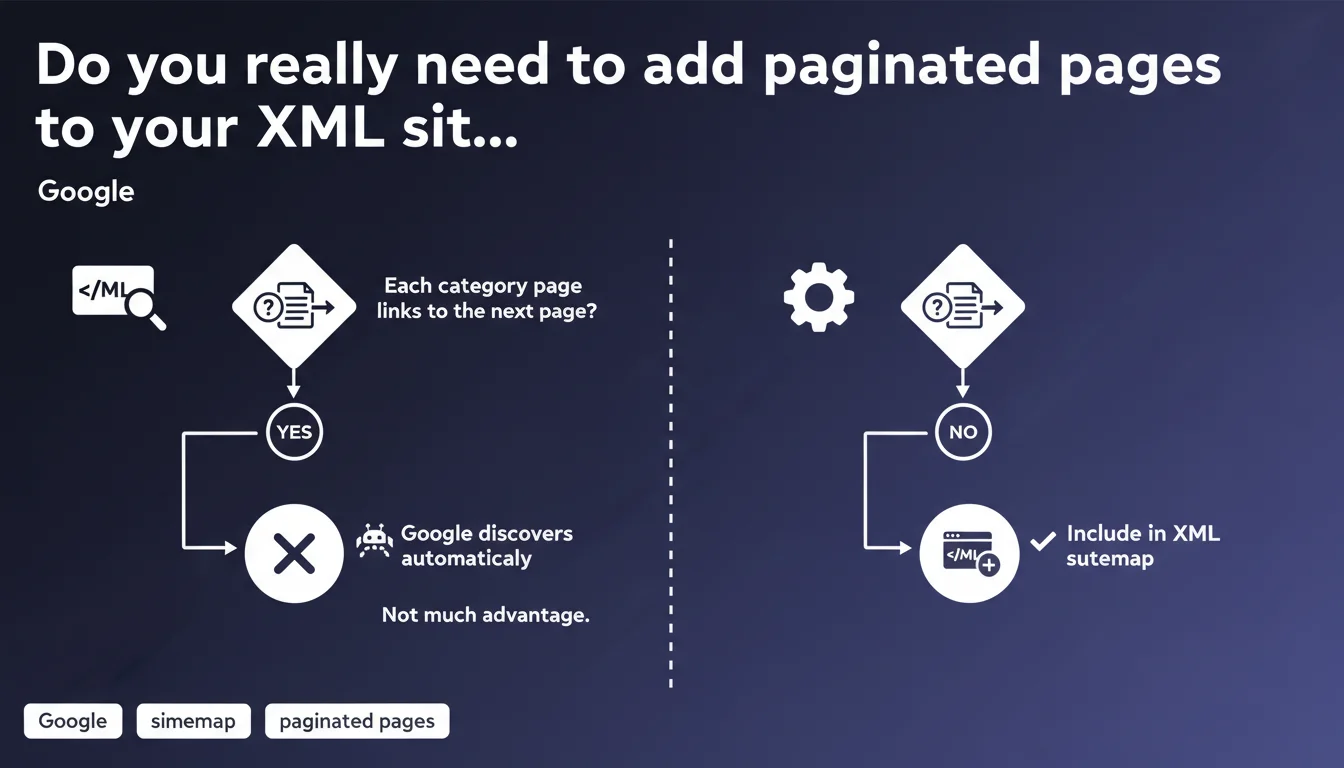

Google automatically discovers paginated pages if each category page has a link to the next page. Adding them to an XML sitemap therefore provides no significant advantage in this scenario. Standard internal linking structure is sufficient for pagination crawling.

What you need to understand

How does Google's paginated page discovery mechanism work?

Google relies on internal linking to follow pagination links. Concretely, if your page 1 contains a link to page 2, which itself points to page 3, Googlebot will naturally explore this chain.

This statement confirms that XML sitemaps are not essential for this type of content. Google trusts the navigation structure — provided it is logical and crawlable.

In what contexts does this logic apply?

This principle essentially applies to e-commerce category pages or standard paginated listings. The typical pattern: a page 1 with a "Next" button that leads to page 2, and so on.

However, if your pagination is managed by client-side JavaScript or if the links are not detectable during initial crawling, the situation changes. Google cannot "automatically" discover what it doesn't see in the raw HTML.

Why does Google say "maybe not much advantage"?

This cautious wording reflects a reality: in some cases, the sitemap can still accelerate discovery or serve as a safety net. Google isn't saying "useless", but rather "redundant".

The nuance matters. If your crawl budget is tight or your architecture is complex, the sitemap remains a control tool — even for pagination.

- Natural crawling: Google follows pagination links automatically if the structure is clear

- XML sitemap: Useful as a safety net, but not essential in a standard case

- Essential condition: Each page must point to the next via a standard HTML link

- Exceptions: JavaScript pagination, complex architecture, limited crawl budget

SEO Expert opinion

Is this statement consistent with real-world practices?

Yes, overall. Tests show that Google does indeed crawl paginated pages via internal links, without needing the sitemap. Let's be honest: the vast majority of e-commerce sites with standard pagination see their pages 2, 3, 4... indexed without issue.

But — and this is where it gets tricky — this statement remains vague on timelines. "Automatically discovers" doesn't mean "indexes quickly". [To verify] depending on your crawl budget and pagination depth.

In what cases does this rule not apply?

First obvious case: infinite pagination or JavaScript "Load more" buttons. If the link to the next page doesn't exist in static HTML, Google can't discover anything automatically.

Second case: sites with a tight crawl budget. If your site has millions of pages and Googlebot limits its visits, relying solely on natural crawling can delay indexing of deep pages. The sitemap then becomes a prioritization signal.

Third case: architectures where pagination is accessible via multiple paths (crossed filters, multiple facets). Google's statement assumes a simple linear structure — reality is often messier.

What's the best approach based on my experience?

Concretely? Keep paginated pages in the sitemap, even if Google says it's not necessary. It costs nothing and can serve as a parachute if crawling issues arise.

The argument of "not much advantage" doesn't mean "disadvantage". Unless you have a gigantic XML sitemap that exceeds technical limits, there's no reason to remove these URLs. It's a marginal optimization for marginal gains.

Practical impact and recommendations

What should you do concretely with your pagination?

First, verify that each paginated page contains a proper standard HTML link to the next page. Inspect the source code — not just the visual display. If the link is generated in JavaScript after loading, that's a red flag.

Next, test crawling with a tool like Screaming Frog or Sitebulb. Run a crawl from your page 1 and verify that all paginated pages are discovered. If some don't show up, your structure has issues.

Finally, check your server logs. Look at how frequently Googlebot visits your pages 5, 10, 20. If the bot never goes beyond page 3, the sitemap can indeed help push these URLs.

What critical mistakes should you avoid?

Don't massively remove your paginated pages from the sitemap without monitoring. This statement doesn't justify a radical cleanup — especially on a large site.

Also avoid relying solely on natural crawling if your pagination exceeds 50 pages. Beyond that, the probability that Google will explore everything without external help decreases, especially if crawl budget is limited.

Another pitfall: believing that "automatic discovery" = "guaranteed indexing". Google can crawl a paginated page and decide not to index it (duplicate content, low added value). These are two distinct steps.

- Verify that each paginated page contains an HTML link to the next one

- Test pagination crawling with a dedicated tool (Screaming Frog, Sitebulb)

- Analyze server logs to measure how frequently Googlebot visits deep pages

- Keep paginated pages in the XML sitemap as a precaution, unless there are technical constraints

- Monitor actual indexing (Search Console) after any strategy changes

- Don't confuse crawling and indexing: Google can discover without indexing

❓ Frequently Asked Questions

Dois-je supprimer les pages paginées de mon sitemap XML ?

Et si ma pagination est gérée en JavaScript ?

Comment savoir si Google crawle bien toutes mes pages paginées ?

Faut-il utiliser rel="next" et rel="prev" pour la pagination ?

Le crawl budget peut-il limiter l'indexation de mes pages paginées ?

🎥 From the same video 14

Other SEO insights extracted from this same Google Search Central video · published on 29/12/2022

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.