Official statement

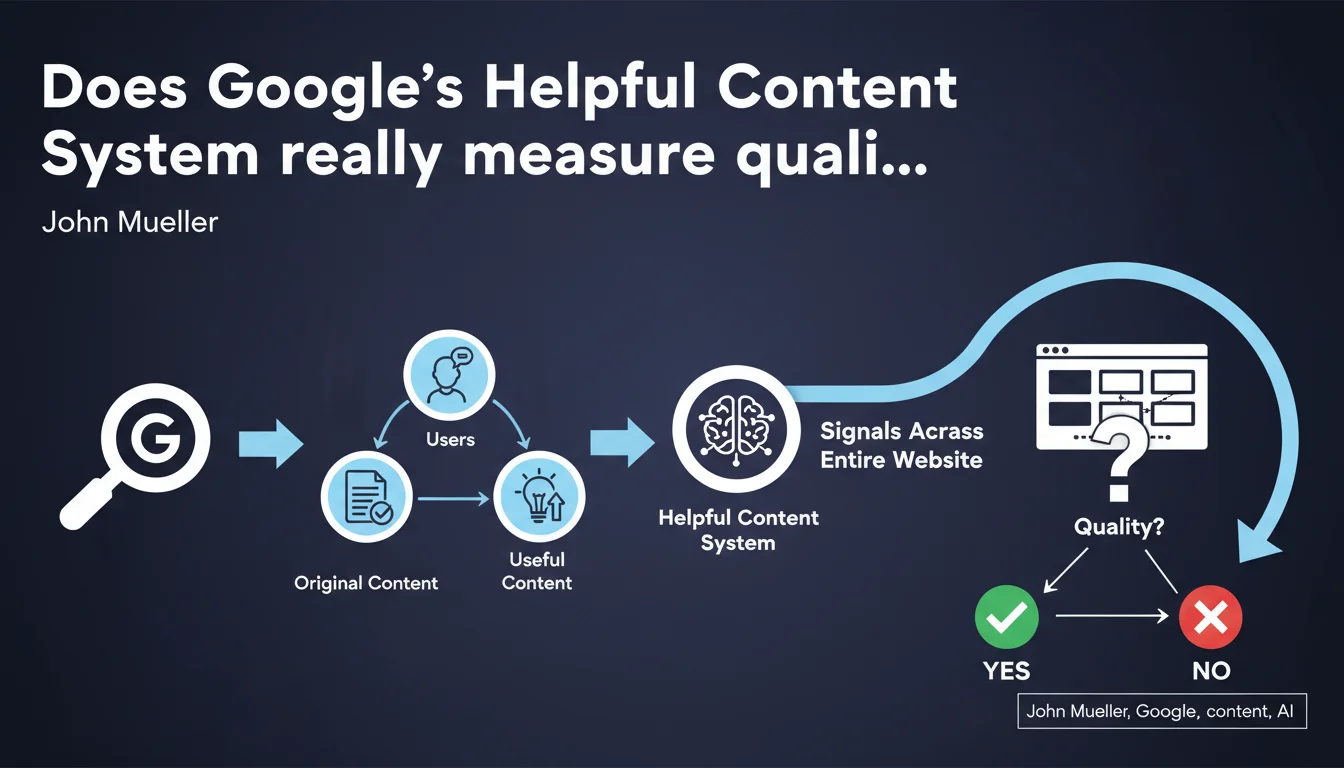

Google claims that its Helpful Content System prioritizes original content created for users rather than for search engines. The crucial point: this system applies signals at the entire site level, not page by page. A site flagged negatively will see all of its pages penalized, even high-quality ones.

What you need to understand

What exactly does this system target?

The Helpful Content System seeks to identify sites that produce content primarily to rank, rather than to answer a real user need. Google wants to detect content farms, sites bloated with hollow SEO articles, and pages generated in bulk without added value.

Originality and usefulness are the two criteria highlighted. Concretely, Google measures whether your content provides something the user cannot find elsewhere, and whether it truly answers their search intent.

Why talk about signals "at the site level"?

This is where everything changes. Unlike Panda, which could target specific sections, this system evaluates the site as a whole. If Google detects a significant ratio of weak or opportunistic content, the entire domain can be demoted.

A site with 80% solid content but 20% spam pages generated to capture long-tail traffic risks being penalized globally. The logic: a trustworthy site would not publish massive amounts of worthless content.
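To get a rough sense of that ratio on your own site, the sketch below counts weak pages per top-level section. The `is_weak` flag and the sample URLs are hypothetical: the flag stands for your own editorial judgment, and Google publishes no threshold, so the resulting share is only a way to prioritize an audit, not a prediction of how the system will treat the site.

```python
from collections import Counter
from urllib.parse import urlparse


def weak_share_by_section(pages):
    """Return {section: share of weak pages} from (url, is_weak) pairs.

    `is_weak` is your own editorial judgment, not a Google signal.
    """
    totals, weak = Counter(), Counter()
    for url, is_weak in pages:
        # The first path segment serves as the section name: "/blog/x" -> "blog".
        segments = [s for s in urlparse(url).path.split("/") if s]
        section = segments[0] if segments else "(root)"
        totals[section] += 1
        if is_weak:
            weak[section] += 1
    return {section: weak[section] / totals[section] for section in totals}


# Hypothetical sample data.
sample = [
    ("https://example.com/guides/setup", False),
    ("https://example.com/guides/migration", False),
    ("https://example.com/tags/red-widgets", True),
    ("https://example.com/tags/blue-widgets", True),
    ("https://example.com/tags/widgets-faq", False),
]
shares = weak_share_by_section(sample)
```

Grouping by section rather than computing one site-wide figure shows where the weak content is concentrated, which is usually where cleanup should start.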

How does Google measure the "usefulness" of content?

Google remains deliberately vague about exact metrics. We assume a mix of behavioral signals (bounce rate, time on page, pogo-sticking), semantic analysis, and patterns detected via machine learning.

Let's be honest: nobody knows exactly how Google quantifies "originality" or "usefulness" at scale. Official recommendations remain vague — along the lines of "write for users, not for engines" — which frankly doesn't help practitioners much.

- Global evaluation: the signal applies to the entire site, not page by page

- Dual criteria: originality AND usefulness, not one without the other

- Primary target: content created mainly to rank, without real value

- Cumulative impact: a significant volume of weak content can contaminate the entire domain

- Methodological opacity: Google does not reveal the technical signals used

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. Since this system arrived, we have indeed observed sharp drops in visibility on sites with generic or opportunistic content. Niches bloated with "Top 10" articles or rewritten content have taken a hit.

But, and this is where it gets tricky, we also see quality sites affected, apparently because they publish frequently. High volume seems to look suspicious to Google. [To verify]: whether the algorithm can actually distinguish a legitimate media outlet publishing 50 articles/month from a content farm doing the same thing.

What nuances should be added to this statement?

The idea of signals "at the site level" poses a granularity problem. Can an e-commerce site with 10,000 solid product pages and 500 mediocre blog articles be penalized globally? Field feedback suggests yes, in some cases.

Google talks about "originality," but its system seems primarily to detect semantic duplication and AI writing patterns. Content can be useful without being completely original: think of product comparisons, or technical tutorials that necessarily repeat the same steps.

In what cases can this system malfunction?

False positives exist. I've seen legitimate news sites lose 40% of their traffic after a Helpful Content update, simply because they were heavily covering trending topics. Google sometimes interprets editorial reactivity as SEO opportunism.

Another problematic case: multilingual or multi-regional sites. Is translating content considered "non-original"? Does adapting an article for different local markets trigger alerts? [To verify]: the algorithm's exact behavior with translated or localized content.

Practical impact and recommendations

What should you do concretely to align with this system?

First step: audit your existing content. Identify pages that generate little engagement, have low time on page, or rank for no queries after several months. These pages are potential anchors dragging you down.

Then ask yourself THE question for each piece of content: "Does a user landing on this page find an answer they couldn't find better elsewhere?" If the answer is no, you have three options: improve radically, merge with an existing page, or delete.
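The audit and the three options above can be sketched as a simple triage function. This is a minimal illustration, not a method Google or any tool prescribes: the `PageStats` fields, the 30-second and 90-day thresholds, and the `overlaps_with` field are all assumptions to adapt to your own data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageStats:
    url: str
    avg_time_on_page_s: float  # from your analytics tool
    ranking_queries: int       # queries with impressions in Search Console
    age_days: int              # days since publication
    overlaps_with: Optional[str] = None  # stronger page on the same topic, if any


def triage(page, min_time_s=30.0, min_age_days=90):
    """Suggest 'keep', 'merge', or 'improve or delete' for one page.

    Thresholds are illustrative assumptions, not values published by Google.
    """
    if page.age_days < min_age_days:
        return "keep"  # too recent to judge
    underperforming = (
        page.avg_time_on_page_s < min_time_s or page.ranking_queries == 0
    )
    if not underperforming:
        return "keep"
    if page.overlaps_with:
        return "merge"  # fold the content into the stronger page
    return "improve or delete"


# Hypothetical example: an old page with no ranking queries and no stronger sibling.
decision = triage(PageStats("/blog/top-10-widgets", 12.0, 0, 400))
```

The function only narrows the list. Choosing between improving and deleting still requires reading the page and asking the question above.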

What mistakes should you avoid at all costs?

Don't fall into the trap of volume for volume's sake. Publishing 200 generic articles is worth less than 20 truly differentiated pieces of content. Google has clearly changed its approach: quantity no longer compensates for mediocrity.

Also avoid purely SEO content — pages that exist only because a keyword research tool told you "2,900 searches/month." If nobody would naturally read your article outside an SEO context, that's a red flag.

How can you verify that your site meets these criteria?

Use engagement metrics from Google Analytics or your measurement tool: average time on page, bounce rate, scroll depth. Useful content keeps users engaged. Cross-reference with Search Console data: high CTR but low time on page often signals disappointing content.
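One way to run that cross-reference is to join the two exports by URL and flag pages that attract clicks but do not hold attention. The sketch below assumes both exports have already been loaded as lists of dictionaries. The field names (`url`, `clicks`, `impressions`, `avg_time_s`) and the thresholds are hypothetical, and real exports will need their columns mapped first.

```python
def disappointing_pages(search_rows, analytics_rows, min_ctr=0.05, max_time_s=20.0):
    """Return URLs with CTR >= min_ctr and average time on page <= max_time_s.

    Thresholds are illustrative assumptions; tune them per site and page type.
    """
    time_by_url = {row["url"]: row["avg_time_s"] for row in analytics_rows}
    flagged = []
    for row in search_rows:
        if row["impressions"] == 0:
            continue  # CTR is undefined without impressions
        ctr = row["clicks"] / row["impressions"]
        avg_time = time_by_url.get(row["url"])
        # Pages missing from the analytics export are skipped, not flagged.
        if avg_time is not None and ctr >= min_ctr and avg_time <= max_time_s:
            flagged.append(row["url"])
    return flagged


# Hypothetical sample data.
search_rows = [
    {"url": "/best-widgets", "clicks": 80, "impressions": 1000},
    {"url": "/widget-guide", "clicks": 90, "impressions": 1000},
    {"url": "/widget-news", "clicks": 5, "impressions": 1000},
    {"url": "/untracked", "clicks": 70, "impressions": 1000},
]
analytics_rows = [
    {"url": "/best-widgets", "avg_time_s": 12.0},
    {"url": "/widget-guide", "avg_time_s": 140.0},
    {"url": "/widget-news", "avg_time_s": 8.0},
]
flagged = disappointing_pages(search_rows, analytics_rows)
```

A short visit is not always a bad sign: a page that answers a quick factual question can satisfy the user in seconds, so treat the flagged list as candidates for manual review.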

Also test the naive reader method: have someone outside SEO read your content. If that person finds the article useful and well-written without prior context, you're probably safe. If they drop off after two paragraphs, real visitors are likely to do the same, and Google's signals may well pick that up too.

- Audit all existing content using engagement metrics

- Delete or improve low-value pages (noindex is not enough)

- Stop producing opportunistic content based solely on search volume

- Prioritize depth and expertise on chosen topics over broad coverage

- Systematically measure real user engagement on new content

- Document your writers' expertise (author bio, field experience, credentials)

- Avoid detectable AI patterns: overly uniform structure, generic phrasing, lack of perspective

❓ Frequently Asked Questions

Is the Helpful Content System a manual penalty?

How long does it take to recover after being hit?

Can you use AI to create content without being penalized?

Is noindex enough to isolate weak content?

How can you tell whether your site is affected by this system?

Other SEO insights extracted from this same Google Search Central video · published on 05/10/2023