Official statement


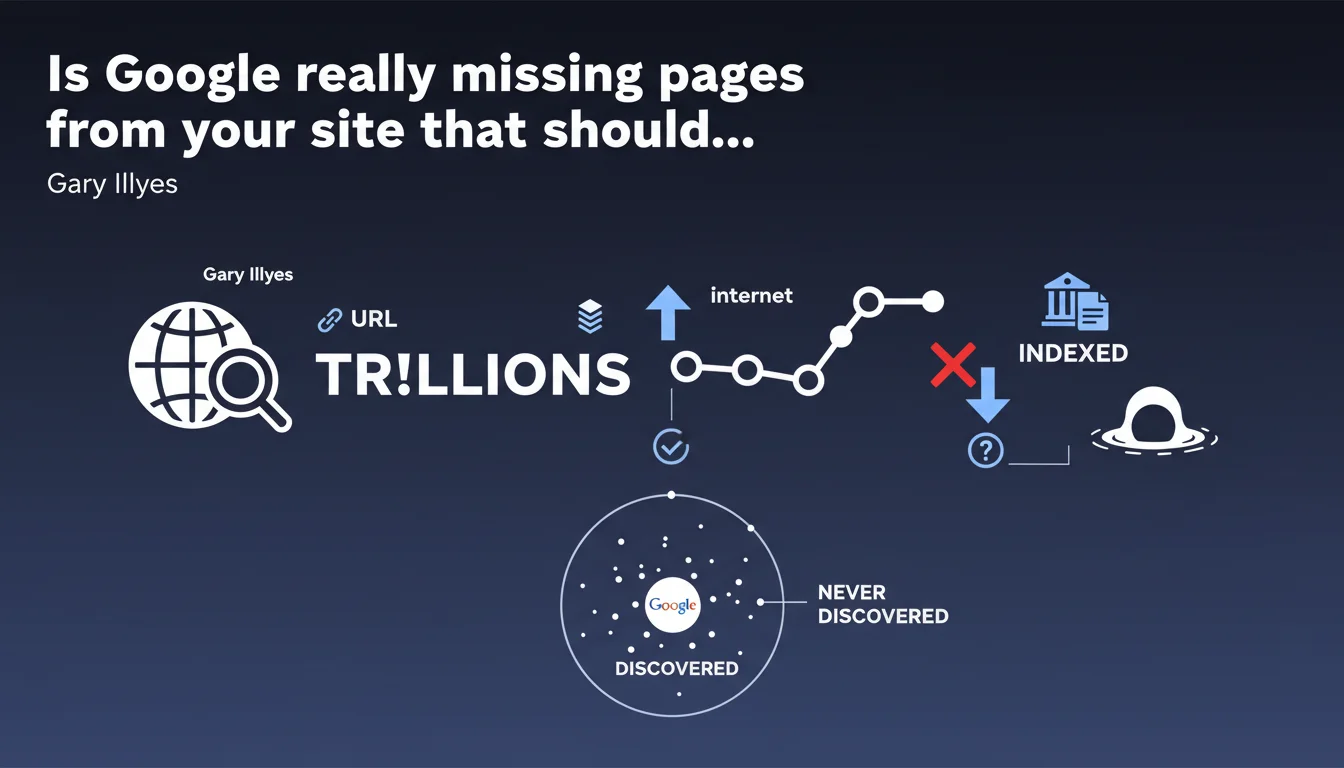

Google confirms that certain pages will never be discovered among the trillions of URLs on the internet. This statement reminds us that indexation is not automatic and that websites must actively facilitate content discovery. Crawl budget and technical structure remain critical challenges.

What you need to understand

What does Google's statement really mean?

Gary Illyes reminds us of a reality that is often underestimated: Google does not crawl the entire web. With trillions of URLs online, the search engine makes choices. Some pages will never be visited; others are discovered only months later.

This claim is not new, but it deserves to be taken seriously. Sites that rely on passive discovery through natural crawling are taking a risk — especially those that generate massive amounts of content or suffer from technical issues.

Why are certain URLs never discovered?

Several factors limit discovery: absence of inbound links, excessive depth in site architecture, misconfigured robots.txt files, orphaned pages without internal linking. Google does not guess that a page exists if no signal makes it visible.

Crawl budget also plays a central role. Sites with thousands of pages but low authority or poor response times see their allocated crawl budget drastically reduced. Result: entire sections of the site remain in the shadows.

What are the direct consequences for a website?

A page that is not discovered will never be indexed. No indexation, no ranking possible. It's that simple. Strategic content — product sheets, in-depth articles, conversion pages — can end up invisible if nothing is done to signal them to Google.

- Limited crawl budget: Google spends a finite amount of crawling on each site, shaped by how much load the server can handle and by how much demand Google sees for the content.

- Site architecture depth: The deeper a page is buried, the less likely it is to be crawled quickly.

- Absence of links: No internal or external links to a page = no natural discovery.

- Negative technical signals: High response times, recurring 5xx errors, chained redirects harm discovery.

- Orphaned pages: Content created but never connected to the rest of the site.

SEO Expert opinion

Is this statement consistent with what we observe in practice?

Absolutely. We regularly see sites with thousands of pages where only a fraction of the URLs are indexed. Google Search Console confirms it: some sites see 30 to 40% of their pages reported as "Discovered - currently not indexed" or "Crawled - currently not indexed".

The problem is especially acute for e-commerce sites with faceted filters, forums, classified ad sites, or content aggregators. These platforms generate URLs continuously — and Google has neither the time nor the interest to visit all of them.

What nuance should we add to this statement?

Gary Illyes mentions "trillions of URLs," but doesn't specify what proportion of these pages are genuinely useful or high-quality. [To be verified]: how many of these undiscovered URLs are spam, technical duplicates, or content with no added value?

Let's be honest: a significant share of uncrawled pages probably deserves to remain forgotten. The real issue is ensuring that your strategic pages are not part of that pool. And there, data is lacking. Google provides no threshold and no clear metric for judging whether your site is affected to a problematic degree.

When does this rule not apply?

If your site is small (a few hundred pages), well-structured, with clean XML sitemaps and coherent internal linking, you probably have nothing to worry about. Google will discover the essentials without difficulty.

However, once you cross the threshold of thousands of URLs — with auto-generated content, infinite search filters, or forum archives — the situation changes dramatically. There, Gary Illyes' statement becomes a direct warning.

Practical impact and recommendations

What should you do concretely to maximize page discovery?

Submit your XML sitemaps via Google Search Console. This is the most direct way to signal your important URLs. Ensure these sitemaps are up-to-date, free of 404 errors and redirects, and contain only pages you actually want indexed.
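As an illustration of the "only pages you actually want indexed" rule, here is a minimal Python sketch that builds a sitemap from a page inventory. The `pages` structure and its `status` and `indexable` fields are assumptions made for this example (they would come from your own CMS or crawl export), not something Google prescribes.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Return sitemap XML (bytes) for a list of page dicts.

    Each dict is assumed to have 'url', 'status' (HTTP code) and
    'indexable' (bool), plus an optional 'lastmod' date string.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        # Skip redirects, errors and pages not meant for the index.
        if page["status"] != 200 or not page["indexable"]:
            continue
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["url"]
        if page.get("lastmod"):
            ET.SubElement(url, "lastmod").text = page["lastmod"]
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)

if __name__ == "__main__":
    inventory = [
        {"url": "https://example.com/", "status": 200, "indexable": True,
         "lastmod": "2024-02-22"},
        {"url": "https://example.com/old", "status": 301, "indexable": True},
        {"url": "https://example.com/cart", "status": 200, "indexable": False},
    ]
    print(build_sitemap(inventory).decode("utf-8"))
```

Filtering at generation time keeps redirected, broken, and non-indexable URLs out of the file in the first place, so the sitemap does not need manual cleaning afterwards.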

Optimize your internal linking. Every strategic page should be accessible in a maximum of 3 clicks from the homepage. Use descriptive anchor text and vary navigation paths to multiply discovery opportunities.
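To check the 3-click guideline, you can compute click depth with a breadth-first search over your internal link graph. The sketch below assumes you already have that graph as a dictionary mapping each page to the pages it links to (for instance from a crawler export); the function names and the sample paths are hypothetical.

```python
from collections import deque

def click_depths(links, home):
    """Breadth-first search: minimum number of clicks from home to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def too_deep(links, home, strategic, max_clicks=3):
    """Strategic pages deeper than max_clicks, or not reachable at all."""
    depths = click_depths(links, home)
    return sorted(
        url for url in strategic
        if depths.get(url, float("inf")) > max_clicks
    )

if __name__ == "__main__":
    graph = {"/": ["/a"], "/a": ["/b"], "/b": ["/c"], "/c": ["/d"]}
    print(too_deep(graph, "/", ["/b", "/d", "/x"]))
```

Pages that cannot be reached from the homepage at all are reported alongside pages that are merely too deep, since both need new internal links.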

Work on your crawl budget. Eliminate unnecessary URLs (sort parameters, redundant filters, user sessions), fix server errors, reduce response times. A faster, more reliable server lets Google crawl more of your useful pages in the same amount of time.
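One way to spot URLs that waste crawl budget is to strip the parameters that do not change the content and see which URLs collapse onto the same address. The following sketch uses only the Python standard library; the parameter names in `NOISE_PARAMS` are hypothetical and should be replaced with the ones your site actually uses.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameter names; adapt to your own site.
NOISE_PARAMS = {"sort", "order", "sessionid", "sid"}

def canonical_url(url):
    """Drop noise parameters, sort the remaining ones, remove the fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in NOISE_PARAMS
    ]
    kept.sort()
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))

def group_duplicates(urls):
    """Map each canonical URL to the variants that collapse onto it."""
    groups = {}
    for url in urls:
        groups.setdefault(canonical_url(url), []).append(url)
    return {canon: variants for canon, variants in groups.items() if len(variants) > 1}

if __name__ == "__main__":
    crawled = [
        "https://shop.example/shoes?sort=price&color=red",
        "https://shop.example/shoes?color=red&sid=abc",
        "https://shop.example/hats",
    ]
    for canon, variants in group_duplicates(crawled).items():
        print(canon, "<-", len(variants), "variants")
```

Each group with several variants is a candidate for cleanup, whether through internal links that point to a single version, canonical tags, or crawl rules.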

What mistakes should you avoid at all costs?

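Don't accidentally block entire sections of your site via robots.txt or noindex tags. The two work differently: robots.txt prevents crawling, while noindex lets Google crawl the page but keeps it out of the index. Regularly check your rules in Google Search Console and test them on critical URLs.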
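A quick local check of critical URLs against your robots.txt rules can be sketched with Python's standard `urllib.robotparser`. Treat the result as an approximation: this parser does not reproduce Google's matching logic exactly (wildcard patterns, for example, are handled differently), so Search Console remains the reference. The `blocked_urls` helper and the sample rules are hypothetical.

```python
from urllib.robotparser import RobotFileParser

def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]

if __name__ == "__main__":
    rules = "User-agent: *\nDisallow: /private/\n"
    critical = [
        "https://example.com/products/1",
        "https://example.com/private/report",
    ]
    print(blocked_urls(rules, critical))
```

Running this against your list of strategic URLs after every robots.txt change is a cheap way to catch an overly broad Disallow rule before it reaches production.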

Avoid orphaned pages. If a page is not linked to anything, Google will probably never find it. Conduct regular audits to identify isolated content and reconnect it to your site architecture.
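An orphan audit boils down to a set difference: the URLs you know exist (from your CMS or sitemap) minus the URLs that receive at least one internal link. Here is a minimal sketch under those assumptions; `find_orphans`, the `entry_points` parameter, and the sample data are hypothetical.

```python
def find_orphans(known_urls, links, entry_points=("/",)):
    """Known URLs that no other page links to.

    known_urls: URLs from a CMS export or sitemap.
    links: dict mapping each page to the pages it links to.
    entry_points: pages reachable without a link (typically the homepage).
    """
    linked = set()
    for source, targets in links.items():
        for target in targets:
            if target != source:  # a self-link does not help discovery
                linked.add(target)
    return sorted(set(known_urls) - linked - set(entry_points))

if __name__ == "__main__":
    known = ["/", "/a", "/b", "/landing-2023"]
    graph = {"/": ["/a", "/b"], "/a": ["/b"], "/landing-2023": ["/landing-2023"]}
    print(find_orphans(known, graph))
```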

Don't rely solely on sitemaps. They help, but Google prioritizes discovery via links. Content with no internal or external links will likely remain invisible, even if it appears in a sitemap.

How can you verify that your site is properly discovered?

Check the page indexing report (formerly the coverage report) in Google Search Console. Identify pages marked "Discovered - currently not indexed" and ask yourself why: insufficient crawl budget? Pages too deep? Technical problems?

Use an SEO crawler (Screaming Frog, Oncrawl, Botify) to simulate Googlebot behavior. Compare the URLs discovered by your tool with those indexed by Google. The gap will give you an idea of problem areas.
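The comparison itself can be sketched in a few lines of Python. The example assumes you have two URL lists, one exported from your crawler and one of pages Google reports as indexed, and groups the gap by top-level site section so problem areas stand out. The function names and sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit

def section(url):
    """First path segment of a URL, e.g. '/blog' for '/blog/post-1'."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    return "/" + segments[0] if segments else "/"

def indexing_gap(crawled, indexed):
    """Per section: (URLs found by the crawler but not indexed, total crawled)."""
    crawled, indexed = set(crawled), set(indexed)
    totals = Counter(section(url) for url in crawled)
    missing = Counter(section(url) for url in crawled - indexed)
    return {name: (missing[name], totals[name]) for name in sorted(totals)}

if __name__ == "__main__":
    crawled = [
        "https://example.com/",
        "https://example.com/blog/a",
        "https://example.com/blog/b",
        "https://example.com/shop/1",
    ]
    indexed = ["https://example.com/", "https://example.com/blog/a"]
    for name, (gap, total) in indexing_gap(crawled, indexed).items():
        print(f"{name}: {gap} of {total} not indexed")
```

Sections where most URLs are missing from the index are the first places to look for depth, linking, or crawl budget problems.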

- Submit and maintain XML sitemaps in Google Search Console

- Verify that all strategic pages are accessible within 3 clicks maximum from the homepage

- Eliminate unnecessary URLs that waste crawl budget (filters, sessions, duplicates)

- Fix server errors (5xx) and optimize response times

- Regularly audit orphaned pages and reconnect them to internal linking

- Analyze the page indexing report (formerly the coverage report) in Google Search Console to identify bottlenecks

- Use an SEO crawler to simulate Googlebot behavior and detect gaps

- Obtain backlinks to important pages to facilitate their natural discovery

❓ Frequently Asked Questions

How many pages can Google discover on my site?

Do XML sitemaps guarantee that all my pages will be discovered?

Why are some of my pages "Discovered - currently not indexed"?

Should you block unnecessary URLs in robots.txt or via noindex?

How long does it take Google to discover a new page?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 22/02/2024
