Official statement

Other statements from this video (9)

- How does Google really crawl your web pages?

- How does Google really discover your new pages?

- Why doesn't Google discover all the URLs on your site?

- How does Googlebot decide which pages to crawl on your site?

- Does Googlebot deliberately slow down on your site to avoid overloading it?

- Why does Googlebot ignore some of the URLs it discovers?

- Can Googlebot really crawl content behind a login page?

- Why can't Google see your JavaScript content without rendering?

- Do you really need an XML sitemap to be indexed by Google?

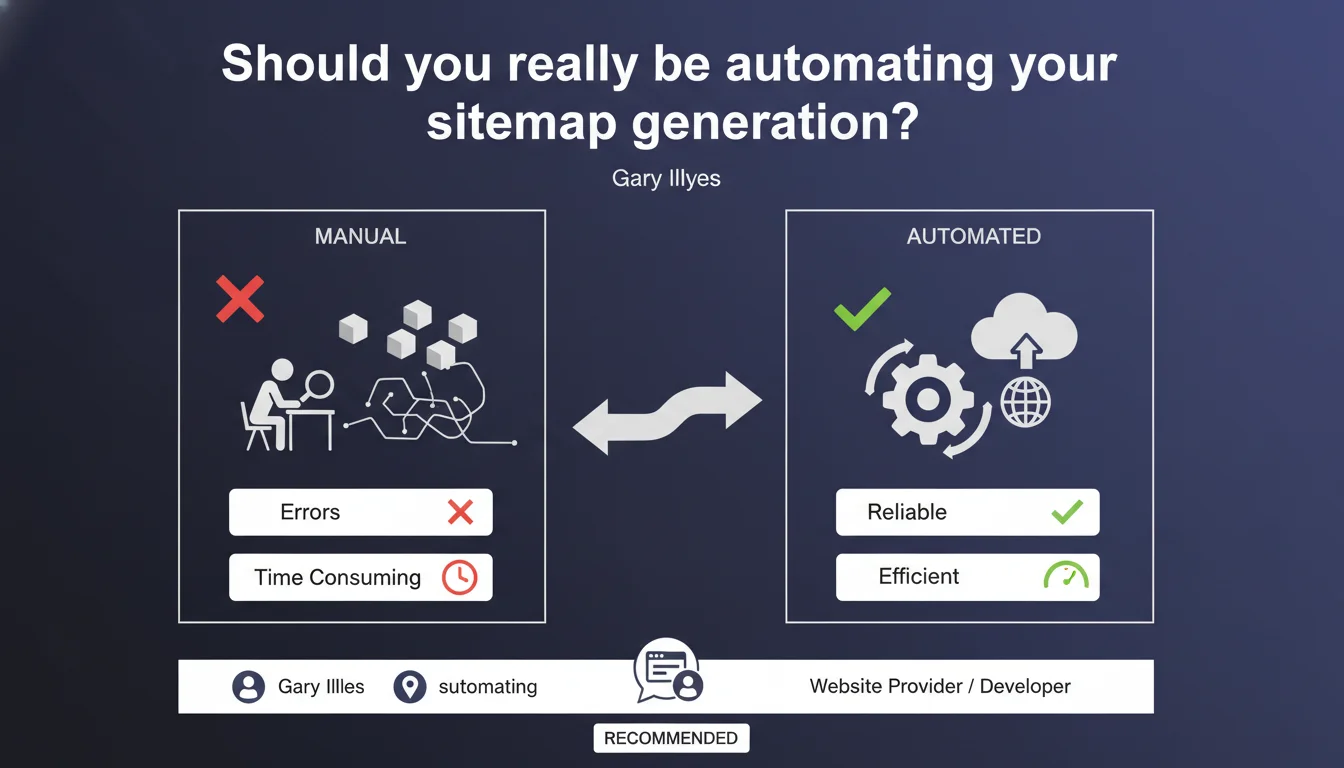

Google recommends generating sitemap files automatically rather than creating them by hand. Automation reduces human error and keeps the content actually present on your site consistent with what the sitemap declares. The recommendation seems obvious, but it hides some practical nuances.

What you need to understand

Why does Google insist on automating sitemaps?

The official reason comes down to one word: consistency. A manually maintained sitemap goes out of date as soon as a page is added, removed, or modified. Errors accumulate: invalid URLs, references to pages that no longer exist, arbitrary priority tags.

Google wants to avoid crawling dead URLs or wasting time on sitemaps that don't reflect the reality of your site. With automation, every content change is reflected in the XML file: immediately if the sitemap is generated on the fly, or at the next scheduled run if it is regenerated periodically.

What exactly do we mean by automatic generation?

It means having the CMS or framework generate the sitemap on the fly by querying the database or file system. WordPress with Yoast or Rank Math, Shopify, PrestaShop: most modern platforms offer this functionality natively or through an extension.

For custom sites, this means coding a dedicated route that compiles URLs dynamically from published content. Alternatively, a cron job can regenerate a static file at regular intervals.
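As an illustration of the dynamic-route approach, here is a minimal Python sketch that compiles a sitemap from published content. The `Page` record and the `build_sitemap()` function are hypothetical stand-ins for whatever your CMS or database returns; only the XML namespace comes from the sitemaps.org protocol.

```python
from dataclasses import dataclass
from datetime import date
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


@dataclass
class Page:
    """Hypothetical record returned by the CMS database."""
    url: str         # absolute, canonical URL
    published: bool  # publication status
    updated: date    # date of the last content change


def build_sitemap(pages: list[Page]) -> str:
    """Return a <urlset> document listing only published pages."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if not page.published:
            continue  # drafts and unpublished pages never reach the sitemap
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = page.url  # & and < are escaped on output
        ET.SubElement(entry, "lastmod").text = page.updated.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

A route serving /sitemap.xml would call this function on each request and return the string with an XML content type; the same function can also feed a cron job that writes a static file.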

Does this recommendation apply to all websites?

In theory, yes. In practice, some contexts require manual adjustments after generation. Consider sites with complex business logic, where certain pages must be excluded based on rules that go beyond simple publication status.

- Automation = fewer human errors and real-time updates

- Modern CMS: most integrate automatic generation natively

- Custom sites: requires specific development (dynamic route or CRON script)

- Special cases: certain business logic may require occasional manual intervention

SEO Expert opinion

Does this statement really reflect field practices?

Let's be honest: the vast majority of SEO practitioners already automate their sitemaps. For anyone who has worked with a decent CMS over the past decade, Gary Illyes's recommendation is preaching to the choir.

What's puzzling is the timing of this statement. Why reiterate such a basic principle now? Two hypotheses: either Google is still observing too many gross errors on legacy sites, or it's laying the groundwork for stricter requirements on sitemap quality.

What are the limitations of complete automation?

Blind automation can sometimes cause problems. A misconfigured WordPress plugin can generate thousands of unnecessary URLs — archive pages, tags, empty categories. Result: crawl budget dilution and sitemap pollution.
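If you generate the sitemap yourself, explicit exclusion rules keep this kind of clutter out. The sketch below assumes a hypothetical `Entry` record with `kind`, `item_count`, and `noindex` fields; the rule set mirrors the page types just mentioned and should be adapted to your own site.

```python
from dataclasses import dataclass

# Page types that rarely deserve a sitemap slot (hypothetical labels; adjust).
EXCLUDED_KINDS = {"author", "date_archive", "tag"}


@dataclass
class Entry:
    """Hypothetical description of a URL the CMS can produce."""
    url: str
    kind: str             # e.g. "post", "page", "category", "tag", "author"
    item_count: int = 0   # number of items listed, for taxonomy pages
    noindex: bool = False


def should_include(entry: Entry) -> bool:
    if entry.noindex:
        return False  # never declare a page you ask Google not to index
    if entry.kind in EXCLUDED_KINDS:
        return False  # archive-style pages dilute the sitemap
    if entry.kind == "category" and entry.item_count == 0:
        return False  # empty categories are thin pages
    return True


def filter_entries(entries: list[Entry]) -> list[str]:
    """Keep only the URLs that pass every exclusion rule."""
    return [e.url for e in entries if should_include(e)]
```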

Another thorny case: sites with complex pagination or filtering facets. Automation must integrate intelligent exclusion rules, otherwise the sitemap explodes in volume. [To verify]: Google has never provided precise guidelines on the optimal number of URLs per sitemap in an e-commerce context with thousands of combinations.
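One way to keep facet combinations out of the sitemap is to drop every URL that carries a filtering or sorting parameter. This is a sketch under the assumption that facets are expressed as query parameters; the names in `FACET_PARAMS` are hypothetical.

```python
from urllib.parse import parse_qsl, urlsplit

# Hypothetical facet and sort parameter names; list the ones your catalog uses.
FACET_PARAMS = {"color", "size", "price", "brand", "sort"}


def is_facet_url(url: str) -> bool:
    """True if the URL carries at least one facet or sort parameter."""
    query = urlsplit(url).query
    names = {name for name, _ in parse_qsl(query, keep_blank_values=True)}
    return bool(names & FACET_PARAMS)


def exclude_facets(urls: list[str]) -> list[str]:
    """Keep base listing URLs, drop filtered and sorted variants."""
    return [u for u in urls if not is_facet_url(u)]
```

A whitelist (only URLs without a query string, or only known-safe parameters) is stricter and may suit large catalogs better than this blacklist.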

And what about temporary pages — events, flash promotions? Automation includes them by default, but should they really be indexed if they disappear within 48 hours? The nuance is missing from this generic recommendation.

In what cases does manual intervention remain justified?

Some sites require fine-grained control over what enters the sitemap. Think of platforms with user-generated content: forums, marketplaces, UGC sites. Automation may include empty profile pages, spam threads, or unmoderated product listings.

The same goes for multilingual sites with complex hreflang logic. Automation must be supervised: a single generation bug can break your entire international architecture.
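Google accepts hreflang annotations inside sitemaps as `xhtml:link` elements, and each language version must list every alternate, itself included. A minimal Python sketch, assuming language clusters are available as dictionaries mapping hreflang codes to URLs (a hypothetical input shape), builds the annotations from a single source of truth and adds a reciprocity check that can run before publication:

```python
import xml.etree.ElementTree as ET

SM = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML = "http://www.w3.org/1999/xhtml"
ET.register_namespace("", SM)
ET.register_namespace("xhtml", XHTML)


def build_hreflang_sitemap(clusters: list[dict[str, str]]) -> ET.Element:
    """Each cluster maps an hreflang code to the URL of that language version."""
    urlset = ET.Element(f"{{{SM}}}urlset")
    for cluster in clusters:
        for url in cluster.values():
            entry = ET.SubElement(urlset, f"{{{SM}}}url")
            ET.SubElement(entry, f"{{{SM}}}loc").text = url
            # Every version lists every alternate, itself included,
            # so the annotations are reciprocal by construction.
            for lang, alt in sorted(cluster.items()):
                ET.SubElement(entry, f"{{{XHTML}}}link",
                              rel="alternate", hreflang=lang, href=alt)
    return urlset


def check_reciprocity(urlset: ET.Element) -> list[str]:
    """Report alternates that do not link back to the page citing them."""
    alternates = {}
    for entry in urlset.iter(f"{{{SM}}}url"):
        loc = entry.findtext(f"{{{SM}}}loc")
        alternates[loc] = {link.get("href") for link in entry.iter(f"{{{XHTML}}}link")}
    problems = []
    for loc, hrefs in alternates.items():
        for href in sorted(hrefs):
            if loc not in alternates.get(href, set()):
                problems.append(f"{href} does not link back to {loc}")
    return problems
```

The check also flags alternates that point to URLs missing from the sitemap, which usually means one language version was not generated.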

Practical impact and recommendations

How to implement automatic generation correctly?

On WordPress, enable a recognized SEO plugin (Yoast, Rank Math, SEOPress); Shopify generates its sitemap natively, and PrestaShop offers a dedicated sitemap module. Configure exclusions: no author pages, no empty categories, no date archives.

For custom-built sites, create a dedicated route (e.g., /sitemap.xml) that queries your database and compiles published URLs in real time. Alternatively, use a cron job that regenerates the file hourly or daily, depending on how often your content changes.
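For the cron variant, one detail matters: a crawler should never fetch a half-written file. This sketch writes to a temporary file and swaps it into place with `os.replace()`; `fetch_published_urls()`, `OUTPUT_PATH`, and the crontab schedule in the comment are hypothetical placeholders.

```python
import os
import tempfile
from xml.sax.saxutils import escape

# Hypothetical crontab entry, hourly:
#   0 * * * * python3 /srv/scripts/regenerate_sitemap.py
OUTPUT_PATH = "sitemap.xml"  # hypothetical: point this at your web root


def fetch_published_urls() -> list[str]:
    """Hypothetical placeholder for a query against your CMS database."""
    return ["https://example.com/", "https://example.com/about"]


def render(urls: list[str]) -> str:
    lines = ['<?xml version="1.0" encoding="UTF-8"?>',
             '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">']
    lines += [f"  <url><loc>{escape(u)}</loc></url>" for u in urls]
    lines.append("</urlset>")
    return "\n".join(lines) + "\n"


def write_atomically(path: str, content: str) -> None:
    """Write to a temp file in the target directory, then swap it into place."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as handle:
            handle.write(content)
        os.chmod(tmp_path, 0o644)   # mkstemp creates 0600; the web server must read it
        os.replace(tmp_path, path)  # atomic on POSIX within one filesystem
    except BaseException:
        os.unlink(tmp_path)         # never leave a stray temp file behind
        raise


if __name__ == "__main__":
    write_atomically(OUTPUT_PATH, render(fetch_published_urls()))
```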

In all cases, test the XML syntax before submitting the sitemap to Google. A malformed sitemap can fail to be processed without any visible symptom on the site itself.
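A quick pre-submission check can be scripted with the standard library. This sketch only verifies well-formedness, the root element and namespace, absolute `<loc>` values, and the 50,000-entry limit; a full validation against the sitemaps.org XSD requires a schema-aware validator such as `xmllint --schema`.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def validate_sitemap(xml_bytes: bytes) -> list[str]:
    """Return a list of problems; an empty list means the basic checks passed."""
    try:
        root = ET.fromstring(xml_bytes)
    except ET.ParseError as exc:
        return [f"not well-formed XML: {exc}"]
    if root.tag not in (NS + "urlset", NS + "sitemapindex"):
        return [f"unexpected root element {root.tag}"]  # often a missing namespace
    child = "url" if root.tag == NS + "urlset" else "sitemap"
    entries = root.findall(NS + child)
    problems = []
    if len(entries) > 50_000:
        problems.append(f"{len(entries)} entries exceed the 50,000 limit")
    for i, entry in enumerate(entries):
        loc = (entry.findtext(NS + "loc") or "").strip()
        parts = urlsplit(loc)
        if parts.scheme not in ("http", "https") or not parts.netloc:
            problems.append(f"entry {i}: missing or non-absolute <loc> {loc!r}")
    return problems
```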

What pitfalls should you absolutely avoid?

First pitfall: generating a sitemap with non-canonical URLs. If your site uses tracking or session parameters, automation must filter them out.
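When the CMS can supply the canonical URL directly, use it. As a fallback, a sanitizer can strip known tracking and session parameters and deduplicate the result. The parameter names below are assumptions to adapt to your own analytics and session setup.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical lists: adapt to the tracking/session parameters your site uses.
TRACKING_PREFIXES = ("utm_",)
TRACKING_PARAMS = {"gclid", "fbclid", "sessionid", "sid"}


def strip_tracking(url: str) -> str:
    """Remove tracking/session parameters and the fragment from a URL."""
    parts = urlsplit(url)
    kept = [
        (k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k.lower() not in TRACKING_PARAMS
        and not k.lower().startswith(TRACKING_PREFIXES)
    ]
    # Fragments (#...) are never sent to the server, so they are dropped too.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_urls(urls: list[str]) -> list[str]:
    """Sanitize every URL and keep the first occurrence of each result."""
    seen: set[str] = set()
    result = []
    for url in urls:
        clean = strip_tracking(url)
        if clean not in seen:
            seen.add(clean)
            result.append(clean)
    return result
```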

Second common error: including pages blocked by robots.txt. Automation does not always check this consistency, so you end up declaring URLs to Google that it cannot crawl.
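Python's standard `urllib.robotparser` can flag such URLs before they reach the sitemap. Caveat: this parser applies rules in file order and does not implement Google's `*`/`$` wildcard matching or longest-match precedence, so treat it as a first-pass filter and confirm edge cases in Search Console. The `crawlable_only()` helper is hypothetical.

```python
from urllib.robotparser import RobotFileParser


def crawlable_only(urls: list[str], robots_txt: str,
                   user_agent: str = "Googlebot") -> tuple[list[str], list[str]]:
    """Split URLs into (allowed, blocked) according to a robots.txt body."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # in production: set_url() + read()
    allowed, blocked = [], []
    for url in urls:
        (allowed if parser.can_fetch(user_agent, url) else blocked).append(url)
    return allowed, blocked
```

Logging the `blocked` list, rather than silently discarding it, helps spot robots.txt rules that block pages you actually want indexed.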

Third point: excessive volume. A single sitemap file can contain at most 50,000 URLs (and must not exceed 50 MB uncompressed). Beyond that, split the URLs across several sitemaps referenced by a sitemap index. Automation must handle this logic, otherwise you will exceed the limit without realizing it.
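The splitting logic is short. This sketch chunks URLs into files of at most 50,000 entries and builds a sitemap index that references them; the file names and `base_url` are hypothetical, and the sketch does not enforce the 50 MB size limit.

```python
from xml.sax.saxutils import escape

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XML_DECL = '<?xml version="1.0" encoding="UTF-8"?>'
MAX_URLS = 50_000  # protocol limit per sitemap file


def chunk(urls: list[str], size: int = MAX_URLS) -> list[list[str]]:
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def render_urlset(urls: list[str]) -> str:
    body = "\n".join(f"  <url><loc>{escape(u)}</loc></url>" for u in urls)
    return f'{XML_DECL}\n<urlset xmlns="{NS}">\n{body}\n</urlset>\n'


def build_sitemap_files(urls: list[str], base_url: str) -> dict[str, str]:
    """Return {filename: xml} for every child sitemap plus the index."""
    files = {}
    index_entries = []
    for n, part in enumerate(chunk(urls), start=1):
        name = f"sitemap-{n}.xml"  # hypothetical naming scheme
        files[name] = render_urlset(part)
        index_entries.append(f"  <sitemap><loc>{escape(base_url + name)}</loc></sitemap>")
    files["sitemap_index.xml"] = (
        f'{XML_DECL}\n<sitemapindex xmlns="{NS}">\n'
        + "\n".join(index_entries)
        + "\n</sitemapindex>\n"
    )
    return files
```

Only the index URL then needs to be submitted in Search Console; the child sitemaps are discovered through it.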

How do you verify that everything is working as intended?

Check the Sitemaps report in Google Search Console regularly. Compare the number of URLs your sitemap declares with the number of pages Search Console reports as discovered: a significant gap signals a problem.

Compare the sitemap with your server logs: is Googlebot actually crawling the declared URLs? If some sitemap pages are never visited, Google may consider them low priority, or they may be unreachable.
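A rough version of this cross-check can be scripted. The sketch assumes access logs in the common Combined Log Format and identifies Googlebot by its user-agent string only; since that string can be spoofed, a production check should also verify the requesting IPs (Google documents a reverse DNS lookup for this). The `never_crawled()` helper is hypothetical.

```python
import re
from urllib.parse import urlsplit

# Combined Log Format: ... "GET /path HTTP/1.1" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def never_crawled(sitemap_urls: list[str], log_lines: list[str]) -> list[str]:
    """Return sitemap URLs with no Googlebot request in the given log lines."""
    crawled = set()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("ua"):
            crawled.add(match.group("path"))
    missing = []
    for url in sitemap_urls:
        parts = urlsplit(url)
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query  # logs record the request path with its query
        if path not in crawled:
            missing.append(url)
    return missing
```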

- Enable automatic generation via CMS or custom development

- Configure exclusions (archives, empty tags, tracking parameters)

- Test XML syntax and validate against the sitemaps.org protocol schema

- Exclude URLs blocked by robots.txt

- Split into sitemap index if volume > 50,000 URLs

- Monitor in Search Console: URLs submitted vs. detected

- Cross-reference with server logs to validate actual crawling

❓ Frequently Asked Questions

Is a sitemap required to be indexed by Google?

Do you need to resubmit the sitemap every time content changes?

Can a single site have several sitemaps?

Are the priority and change-frequency tags in the sitemap still useful?

What should you do if Search Console reports errors in your automated sitemap?

🎥 From the same video (9)

Other SEO insights extracted from this same Google Search Central video · published on 22/02/2024

