Official statement

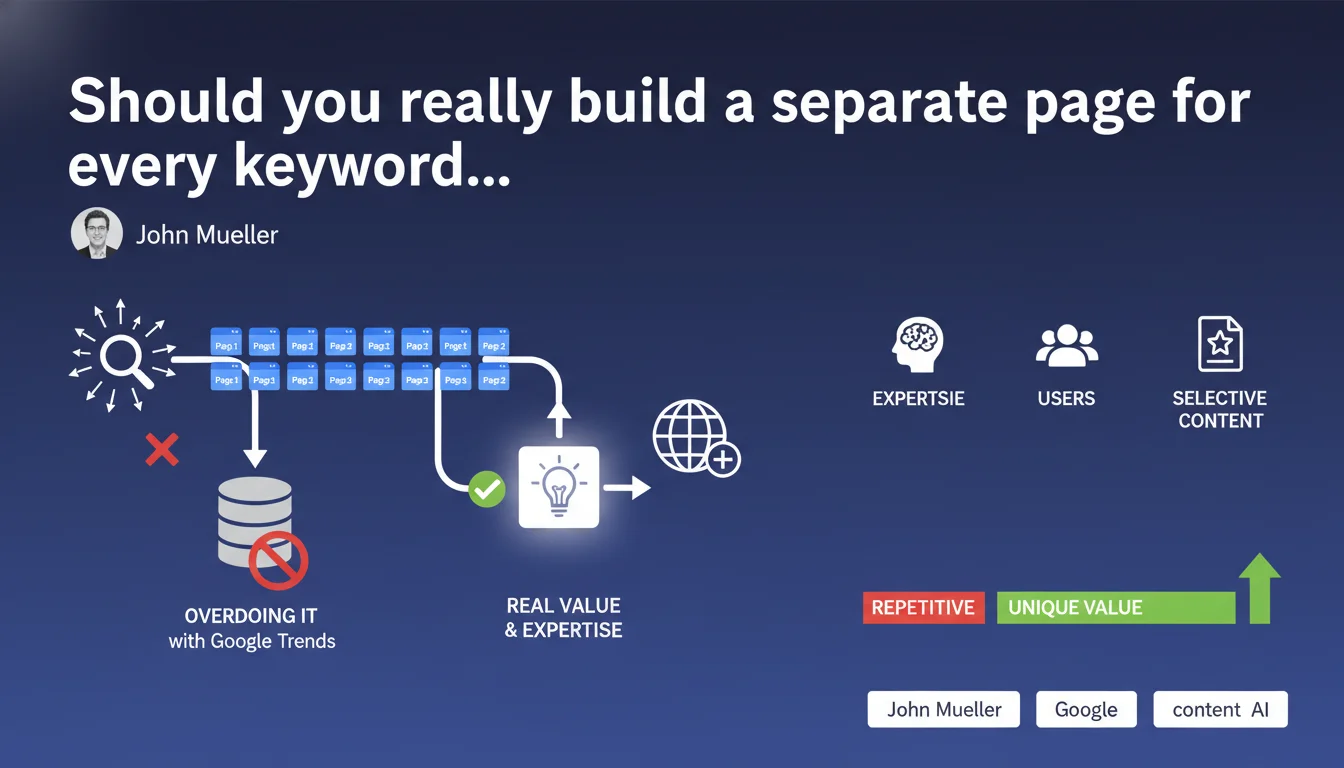

Google explicitly advises against multiplying pages to cover every search query variation identified through tools like Google Trends. The focus should be on delivering genuine user value and showcasing your unique expertise, rather than attempting exhaustive coverage of all possible semantic variations.

What you need to understand

Why is Google warning against this approach?

This statement targets a common pitfall: the abuse of keyword research tools to create dozens of nearly identical pages, each targeting a minor variation of the same query. Google Trends, Semrush, Ahrefs — all these tools reveal hundreds of semantic variations around a given topic.

The temptation is strong to create a separate page for "best CRM software," "best CRM tool," "top CRM software," and so on. But from the user's perspective, and therefore Google's, these pages offer no meaningful differentiation. They dilute your site's authority and clutter the index.

What does "adding real value" actually mean in practice?

Google emphasizes two criteria: unique expertise and user relevance. A page has value if it offers an original angle, exclusive data, in-depth analysis, or an answer competitors haven't provided.

Simply repeating what everyone else says while changing a few words no longer cuts it. The algorithm detects these weakly differentiated pages and demotes them through its quality systems (notably the one introduced with the Helpful Content Update).

What are the risks of this strategy?

- Internal cannibalization: multiple pages compete for the same queries, none ranks properly

- Crawl budget dilution: Google wastes crawl resources on redundant pages instead of exploring high-value content

- Spam signals: multiplying similar pages can trigger manipulation detection, especially if content is auto-generated

- Degraded user experience: visitors encounter pages that all look the same, increasing bounce rate and weakening behavioral signals

SEO Expert opinion

Is this position from Google actually new?

No. It's a reminder of a principle as old as modern SEO itself: quality trumps quantity. What's changing is how aggressively Google now enforces this principle through algorithmic filters.

From the Panda updates (now more than a decade old) through the Helpful Content Update, the direction has been clear. But with the explosion of generative AI tools and the ease of creating content at scale, Google is turning up the heat. John Mueller's message isn't casual: it's aimed directly at industrial content production strategies.

When does this rule not apply?

There are contexts where multiplying pages remains legitimate. An e-commerce site with genuinely different product variants (size, color, model) legitimately needs separate pages. Similarly, a news site covering multiple angles of an event can create separate articles if each provides a unique editorial angle.

The distinction lies in intent: are you creating this page because it addresses a distinct user need, or simply because a tool flagged 50 monthly searches for that variation? If it's the latter, skip it.

What are the limitations of this statement?

Google remains deliberately vague on acceptable thresholds. How many similar pages is "too many"? It gives no numeric answer. One field observation that remains to be verified: some high-authority sites continue ranking with dozens of near-duplicate pages, while smaller sites face penalties for far less.

The threshold seems to depend on the overall domain trust level and perceived quality of remaining content. In other words: what works for a big site won't work for a small one. It's unfair, but it's what's observed in the field.

Practical impact and recommendations

What should you do concretely?

Audit first. Identify clusters of pages with semantic overlap. Use a crawl tool (Screaming Frog, Oncrawl) combined with content analysis to spot pages with high lexical overlap targeting identical search intent.
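As a rough illustration of the lexical-overlap check described above, here is a minimal sketch that compares pages by their shared three-word sequences (Jaccard similarity on word shingles). The page bodies, URLs, and the 0.5 threshold are assumptions chosen for the example, not values from Google or from any crawl tool. It only measures shared wording, so treat it as a first pass before reviewing search intent by hand.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 3) -> set:
    """Return the set of word n-grams (shingles) found in a text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def overlapping_pairs(pages: dict, threshold: float = 0.5):
    """Yield (url_a, url_b, score) for page pairs at or above the threshold."""
    sets = {url: shingles(body) for url, body in pages.items()}
    for url_a, url_b in combinations(sorted(sets), 2):
        score = jaccard(sets[url_a], sets[url_b])
        if score >= threshold:
            yield url_a, url_b, round(score, 2)


# Hypothetical page bodies, e.g. text extracted from a crawl export.
pages = {
    "/best-crm-software": "Our guide compares the best CRM software for small teams on price and features",
    "/best-crm-tool": "Our guide compares the best CRM tool for small teams on price and features",
    "/crm-migration-checklist": "A step by step checklist for moving customer data between CRM systems safely",
}

flagged = list(overlapping_pairs(pages))
for url_a, url_b, score in flagged:
    print(f"{url_a} <-> {url_b}: {score}")
```

Here the two "best CRM" pages are flagged as a pair, while the migration checklist is not. On real pages, strip navigation and footer text first, otherwise shared templates inflate every score.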

Then consolidate. Instead of 10 pages on minor variations, create one comprehensive pillar page covering the entire topic and addressing all intent variations. Redirect redundant pages via 301 to this single resource.
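The redirect step can be sketched as a small script that turns a list of redundant URLs into 301 rules. This example assumes an Apache server using the `Redirect` directive from mod_alias; other servers use different syntax. The `example.com` URLs and the function name are hypothetical.

```python
from urllib.parse import urlsplit


def build_redirect_rules(redundant_urls: list, pillar_url: str) -> list:
    """Return one Apache mod_alias 'Redirect 301' line per redundant URL."""
    pillar_path = urlsplit(pillar_url).path or "/"
    rules = []
    seen = set()
    for url in redundant_urls:
        path = urlsplit(url).path or "/"
        # Skip duplicates and never redirect the pillar page to itself.
        if path in seen or path == pillar_path:
            continue
        seen.add(path)
        rules.append(f"Redirect 301 {path} {pillar_url}")
    return rules


# Hypothetical URLs: three near-identical pages folded into one pillar page.
pillar = "https://example.com/crm-software-guide"
redundant = [
    "https://example.com/best-crm-tool",
    "https://example.com/top-crm-software",
    "https://example.com/best-crm-tool",       # duplicate entry, ignored
    "https://example.com/crm-software-guide",  # the pillar itself, ignored
]

rules = build_redirect_rules(redundant, pillar)
print("\n".join(rules))
```

Note that Apache's `Redirect` matches path prefixes, so review the generated rules before deploying them if any redundant URL is a prefix of a page you want to keep.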

Which mistakes should you absolutely avoid?

Don't base page creation decisions solely on search volume. A keyword with 200 monthly searches doesn't automatically justify a dedicated URL if the intent is already covered elsewhere on your site.

Also avoid pseudo-differentiation: changing the H1 and three sentences in an identical template isn't enough. Google analyzes content at a deep semantic level, not just exact words. If two pages say the same thing with different wording, they are likely to be treated as redundant.

How can you verify your site complies with this directive?

- Run a cannibalization audit: identify pages ranking on the same queries

- Use Google Search Console to spot shared impressions across multiple site URLs

- Compare crawled pages with indexed pages: if Google crawls many pages but indexes few, that's a weak content signal

- Check engagement metrics (time on page, bounce rate): similar pages will show mediocre numbers

- Test relevance: for each page, ask yourself if it answers a unique question the others don't address

- Consolidate ruthlessly: one exhaustive 3000-word page beats five middling 600-word pages
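The first two checks in this list can be approximated from a Search Console performance export. The sketch below groups rows by query and reports queries where more than one URL collects a meaningful number of impressions. The row fields (`query`, `page`, `impressions`), the sample data, and the threshold of 10 impressions are assumptions for illustration; adapt them to the columns of your own export.

```python
from collections import defaultdict


def find_cannibalized_queries(rows, min_impressions=10):
    """Map each query to the pages sharing its impressions, if more than one."""
    totals = defaultdict(lambda: defaultdict(int))
    for row in rows:
        totals[row["query"]][row["page"]] += row["impressions"]

    report = {}
    for query, pages in totals.items():
        # Ignore pages that barely appear for the query.
        significant = {p: n for p, n in pages.items() if n >= min_impressions}
        if len(significant) > 1:
            report[query] = sorted(
                significant.items(), key=lambda item: item[1], reverse=True
            )
    return report


# Hypothetical rows, shaped like a query-by-page performance export.
rows = [
    {"query": "best crm software", "page": "/best-crm-software", "impressions": 400},
    {"query": "best crm software", "page": "/best-crm-tool", "impressions": 250},
    {"query": "best crm software", "page": "/top-crm-software", "impressions": 4},
    {"query": "crm migration checklist", "page": "/crm-migration-checklist", "impressions": 120},
]

report = find_cannibalized_queries(rows)
for query, pages in report.items():
    print(query, "->", pages)
```

A query listed here is only a candidate for consolidation: two URLs can legitimately share impressions when they serve different intents, so review each case before redirecting anything.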

This Google directive forces a paradigm shift: moving from a quantitative logic (cover all keywords) to a qualitative one (build authoritative resources). Sites that adapt quickly tend to gain thematic authority and long-term performance improvements.

Implementing this strategy requires deep expertise in semantic analysis, information architecture, and technical SEO. If you lack resources or clarity on the best approach for your specific industry, partnering with an experienced SEO agency can be the difference between effective overhaul and irreversible traffic loss.

❓ Frequently Asked Questions

Should I delete all my pages targeting keyword variations?

How can I tell whether two pages are too similar in Google's eyes?

Can Google Trends still be used for content strategy?

Does this directive also apply to e-commerce sites?

How long does it take to see the effects of consolidating pages?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 13/11/2024
