Official statement

Other statements from this video 11 ▾

- □ Le HTML invalide nuit-il vraiment au référencement naturel ?

- □ Faut-il encore utiliser la balise meta keywords en SEO ?

- □ Les commentaires HTML ont-ils un impact sur le référencement Google ?

- □ Les noms de classes CSS influencent-ils vraiment votre référencement naturel ?

- □ Votre thème WordPress sabote-t-il votre référencement sans que vous le sachiez ?

- □ Les Core Web Vitals sont-ils vraiment un levier de classement dans Google ?

- □ Comment vérifier que JavaScript ne bloque pas l'indexation de votre contenu ?

- □ Pourquoi l'API d'indexation Google reste-t-elle bloquée sur deux types de contenus ?

- □ Angular bénéficie-t-il d'un traitement de faveur chez Google ?

- □ Faut-il vraiment virer tous ces scripts Google de votre site ?

- □ La structure HTML sémantique est-elle vraiment un facteur de compréhension pour Google ?

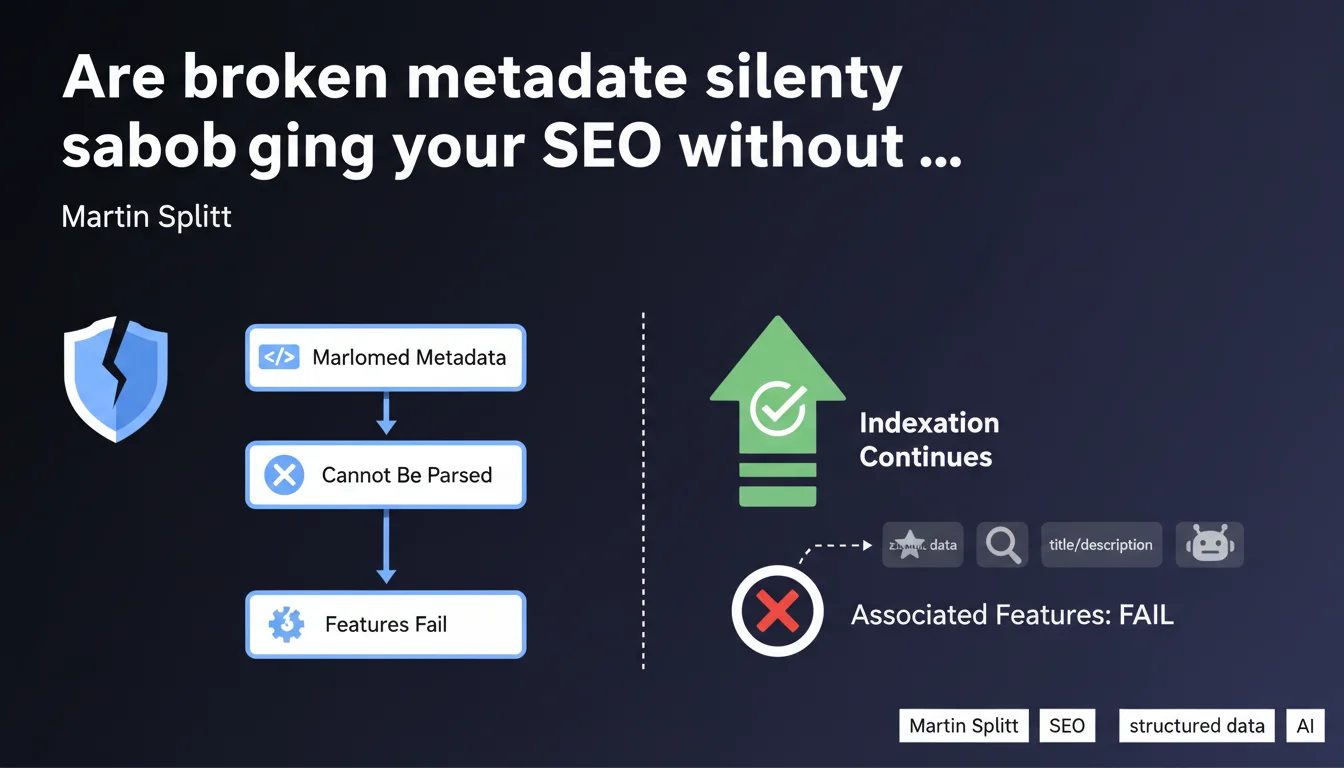

Malformed metadata (structured data, meta tags, robots.txt) doesn't block page indexation, but it disables all associated features. Google attempts to parse, fails, then ignores — result: loss of rich snippets, partial deindexation, or directives ignored. The site remains accessible, but stripped of its SEO leverage.

What you need to understand

What does "broken metadata" actually mean in practice?

Metadata is broken when it doesn't respect the syntax expected by Google's parser. This could be improperly closed JSON-LD, a meta description tag with unescaped quotes, or a robots.txt file with contradictory directives.

Google attempts to read, fails to parse, and abandons the attempt. No visible error message for users — just complete silence on the search engine side.

Why does indexation continue despite the error?

Indexation relies on textual content and basic HTML structure. Metadata are additional layers: they enrich, clarify, direct — but don't determine access to the corpus.

If your robots tag is unreadable, Googlebot indexes by default. If your structured data crashes, the standard snippet displays. The engine doesn't block — it degrades.

Which features fail first?

- Rich snippets: stars, prices, availability — all disappear if JSON-LD or microdata are invalid

- Robots directives: noindex, nofollow ignored if syntax is flawed

- Meta descriptions: Google generates a random excerpt if the tag is corrupted

- Canonical and hreflang: infinite loops or variant deindexation if poorly declared

- XML sitemaps: URLs ignored if the file contains forbidden characters or unclosed tags

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. We regularly observe sites with syntactically incorrect JSON-LD schemas that continue to rank but lose their SERP stars overnight. Google doesn't always notify via Search Console — the error remains silent.

On the other hand, broken meta robots tags can trigger erratic behavior: sometimes ignored, sometimes partially interpreted. I've seen noindex fail due to a missing space, with the page remaining indexed for months. [To verify] whether Google applies a fallback mechanism or if it's pure chance depending on which parser is used.

What nuances should be added?

Martin Splitt discusses "broken metadata," but the boundary between broken and tolerated is fuzzy. Google has permissive parsers for certain tags (HTML5 allows approximations), but zero tolerance for JSON-LD or XML.

Another point: some errors don't "break" everything. A missing attribute in an Open Graph tag doesn't block social sharing — Facebook generates a degraded preview. Same for Twitter Cards. The devil is in the details.

When doesn't this rule apply?

Redirects and HTTP status codes aren't metadata — if they fail, indexation fails too. A misconfigured 301 or chronic 5xx prevents Googlebot from accessing content.

Same for JavaScript rendering: if your SPA crashes before displaying the DOM, Google indexes nothing. Metadata is just one layer — the foundation remains technical availability.

Practical impact and recommendations

How do I detect broken metadata on my site?

Use Google's Structured Data Validator, the Mobile-Friendly Test (which also parses meta tags), and audit your robots.txt with the dedicated tool in Search Console. For hreflang, Screaming Frog or Sitebulb detect loops and syntax errors.

Automate with CI/CD tests: invalid JSON-LD should never reach production. Linters like jsonlint or schema-dts in TypeScript prevent 90% of errors.

Which errors should you prioritize avoiding?

- Malformed JSON-LD (single quotes instead of double, extra commas)

- Meta tags with duplicate attributes or empty values (e.g.,

content="") - Robots.txt with poorly placed wildcards or unknown User-agents

- Canonical pointing to a 404 URL or with unhandled dynamic parameters

- Hreflang without return tag (non-reciprocal) or with invalid language codes

- XML sitemap not UTF-8 encoded or containing 3xx/4xx URLs

What concrete steps should you take to fix these issues?

Prioritize critical errors flagged in Search Console (Enhancements section). Test each page type (category, product, article) with the validator before deployment. Implement monitoring to detect regressions after each release.

For complex sites with multiple templates, a comprehensive technical audit can reveal invisible surface-level errors. Automated tools don't always catch contextual nuances — expert review spots logical inconsistencies (e.g., a product marked "in stock" while the CMS shows "out of stock").

❓ Frequently Asked Questions

Une erreur JSON-LD peut-elle entraîner une pénalité Google ?

Si ma meta description est cassée, Google en génère-t-il une automatiquement ?

Un robots.txt invalide bloque-t-il l'indexation ?

Les erreurs de structured data affectent-elles le ranking ?

Faut-il corriger toutes les erreurs remontées dans Search Console ?

🎥 From the same video 11

Other SEO insights extracted from this same Google Search Central video · published on 26/06/2025

🎥 Watch the full video on YouTube →

💬 Comments (0)

Be the first to comment.