Official statement

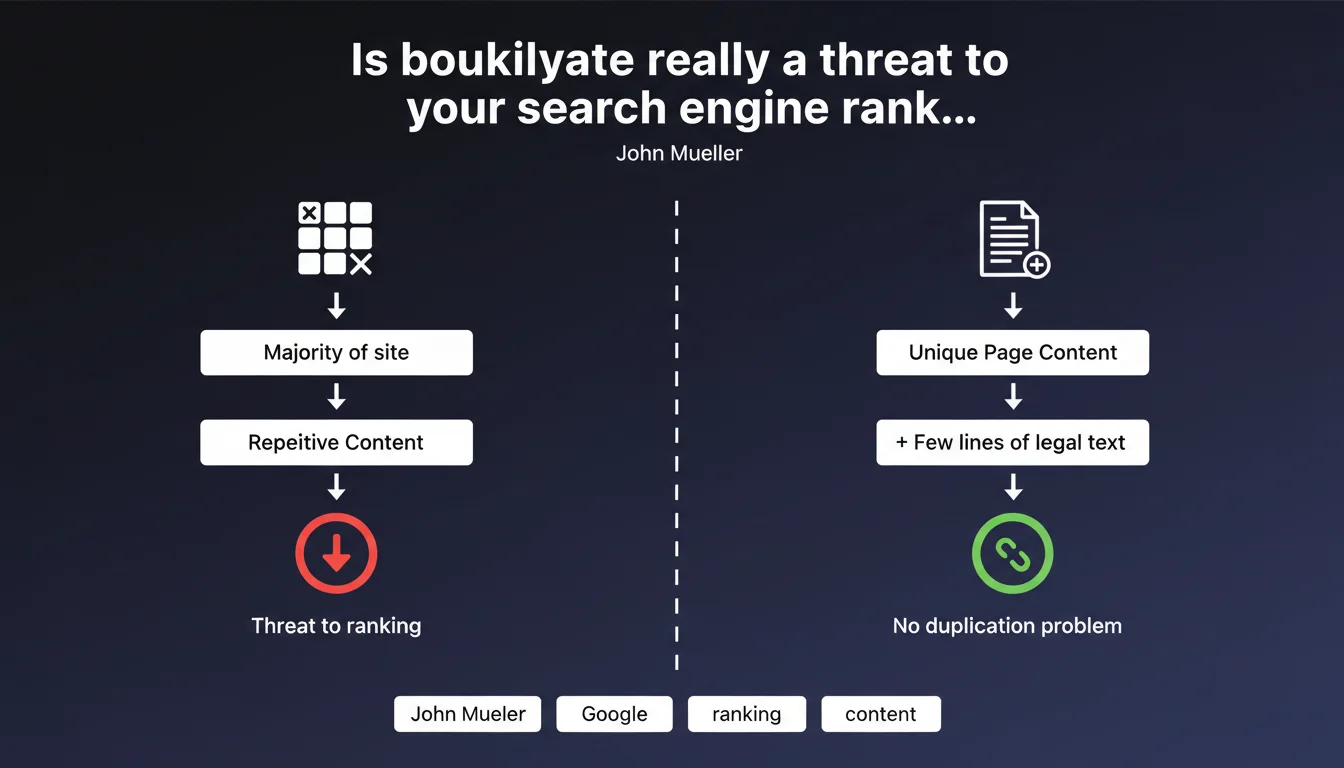

Google is perfectly fine with repetitive content (boilerplate) as long as it doesn't make up the majority of your pages' content. Adding legal notices, disclaimers or standard texts to pages that already have unique content creates no duplication problem whatsoever. The critical threshold is reached when boilerplate becomes the main component of your site.

What you need to understand

What exactly does Google consider as problematic boilerplate?

The term boilerplate refers to all those repetitive elements that appear on multiple pages: legal notices, standard footers, identical sidebars, disclaimers, duplicated contact forms. Google makes a critical distinction here between presence and proportion.

Mueller's statement establishes that the problem arises only when this repetitive content constitutes the majority of your pages' content. In clear terms: if your page contains 200 words of unique content and 50 words of boilerplate, you're well within acceptable limits.

Why does Google show this tolerance toward repetitive content?

The algorithm has evolved enough to distinguish main editorial content from structural content. Google knows how to identify what is navigation, footer, sidebar — and doesn't penalize their recurring presence.

The search engine seeks to evaluate the unique added value of each page. As long as a substantial portion of the content is specific and provides something distinct, the rest is considered normal technical noise.

What is the threshold that tips the balance into problematic territory?

Mueller speaks of "majority of pages" composed of boilerplate. It's intentionally vague — no precise percentage announced. The alarm bell rings when your site becomes an empty shell with anecdotal unique content.

Concretely, if 80% of your pages display 30 words of unique content buried in 200 words of repetitive text, you enter the red zone. Google then considers your site as not providing enough differentiated content per URL.
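To make the arithmetic behind these two examples explicit, here is a minimal sketch. The function name `unique_share` is hypothetical, and it assumes "buried in 200 words" means 30 unique words sitting alongside 200 repetitive ones. It only illustrates the proportion, not anything Google actually computes.

```python
def unique_share(unique_words: int, boilerplate_words: int) -> float:
    """Return the fraction of a page's words that are unique to that page."""
    total = unique_words + boilerplate_words
    if total == 0:
        return 0.0
    return unique_words / total


# Healthy page from the earlier example: 200 unique words, 50 boilerplate words.
healthy = unique_share(200, 50)

# Thin page from this example: 30 unique words next to 200 boilerplate words
# (assumed reading of "buried in 200 words of repetitive text").
thin = unique_share(30, 200)

print(f"healthy: {healthy:.0%}, thin: {thin:.0%}")
# prints "healthy: 80%, thin: 13%"
```

The healthy page is 80% unique and the thin page about 13%, which is why the second case sits in the red zone.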

- Boilerplate is not a problem in itself — its proportion is what matters

- Google distinguishes editorial content from recurring structural content

- Risk appears when unique content becomes the minority on the majority of pages

- Adding legal notices to content-rich pages creates no problem whatsoever

- No official numerical threshold — the logic is qualitative, not mathematical

SEO Expert opinion

Does this statement align with real-world observations?

Absolutely. We've observed for years that sites with substantial footers, recurring sidebars, or systematic disclaimers are not penalized as long as the main content remains solid. E-commerce sites with their terms and conditions, return policies and other recurring blocks rank perfectly well.

However, content farms that generate 5,000 pages from a 95% identical template with 5% cosmetic variation do disappear from the index or stagnate deep in the rankings. The pattern is clear: Google tolerates structural repetition but penalizes value dilution.

What nuances should be added to this official position?

Mueller speaks of "majority of pages" without clarifying whether he means by page volume or content volume per page. This is crucial. A site can have 20% of its URLs with excessive boilerplate — if these pages represent 80% of crawl budget or impressions, the problem still exists.
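The gap between share of URLs and share of traffic can be sketched as follows. The page data is invented for illustration, and the field names `impressions` and `is_boilerplate_heavy` are hypothetical: they stand for figures from your own reporting and your own audit, not for any signal Google exposes.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    impressions: int            # hypothetical figure from your own reporting
    is_boilerplate_heavy: bool  # result of your own audit, not a Google signal


def heavy_share_by_urls(pages: list[Page]) -> float:
    """Fraction of URLs flagged as boilerplate-heavy."""
    heavy = sum(1 for p in pages if p.is_boilerplate_heavy)
    return heavy / len(pages) if pages else 0.0


def heavy_share_by_impressions(pages: list[Page]) -> float:
    """Fraction of impressions that land on boilerplate-heavy URLs."""
    total = sum(p.impressions for p in pages)
    heavy = sum(p.impressions for p in pages if p.is_boilerplate_heavy)
    return heavy / total if total else 0.0


# Invented sample: one thin category page that attracts most of the impressions.
pages = [
    Page("/category/a", 8000, True),
    Page("/guide/1", 500, False),
    Page("/guide/2", 500, False),
    Page("/guide/3", 500, False),
    Page("/guide/4", 500, False),
]

print(f"{heavy_share_by_urls(pages):.0%} of URLs")
print(f"{heavy_share_by_impressions(pages):.0%} of impressions")
```

With this sample, one URL in five is boilerplate-heavy, yet it carries 80% of the impressions, which is the situation described above.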

Another blind spot: he doesn't mention near-duplicate content. Pages with 80% unique content but structurally identical (same outline, same H2 headings, minimal variations) can create cannibalization issues even without excessive boilerplate. [To verify]: Does Google treat near-duplicate the same way as boilerplate?

Final point: the definition of "unique content" isn't specified. Does it mean textually unique or informationally unique content? Two hundred automatically rewritten words remain poor content even without strict duplication.

In what cases does this rule become insufficient?

Multilingual or multi-regional sites often end up with nearly identical structures across languages. Technically, this is not boilerplate in the strict sense, but the effect is similar if the translation is poor or automated. Google can then treat the whole set as weak content.

Sites with extensive pagination create another edge case. Your pages 2, 3, 4… of a category share 95% of their structure. If unique editorial content (category intros, descriptions) is absent or minimal, you fall into the "majority boilerplate" scenario without intending to.

Practical impact and recommendations

How do you audit the boilerplate to unique content ratio on your site?

Take a representative sample of your page templates (homepage, category, product sheet, blog article). For each, visually identify what is repetitive (header, footer, sidebar, disclaimers) versus what is specific to the page.
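If you would rather not do this identification by eye, one possible approach is to treat any text block that shows up on most pages of a template as boilerplate. The sketch below assumes each page has already been split into text blocks (for example by your crawler). The function name `find_boilerplate_blocks` and the 80% cut-off are illustrative choices, not an established standard, and only exact matches are caught.

```python
from collections import Counter


def find_boilerplate_blocks(pages: dict[str, list[str]], min_share: float = 0.8) -> set[str]:
    """Return text blocks that appear on at least `min_share` of the pages."""
    counts = Counter()
    for blocks in pages.values():
        # Count each block once per page, even if it repeats within the page.
        counts.update({block.strip() for block in blocks if block.strip()})
    threshold = min_share * len(pages)
    return {block for block, n in counts.items() if n >= threshold}


# Invented sample of three product pages sharing two recurring blocks.
pages = {
    "/product/1": ["Free returns within 30 days.", "Red wool scarf, hand-knitted.", "Contact us"],
    "/product/2": ["Free returns within 30 days.", "Blue cotton cap.", "Contact us"],
    "/product/3": ["Free returns within 30 days.", "Green rain jacket.", "Contact us"],
}

print(sorted(find_boilerplate_blocks(pages)))
# prints "['Contact us', 'Free returns within 30 days.']"
```

Near-identical blocks with small variations slip past this exact-match check, so a manual look at each template is still worthwhile.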

Measure in word count. As a rule of thumb (not a figure from Google), unique content below 60-70% of total visible content puts you in a risk zone, and below 50% it becomes problematic. A tool like Screaming Frog can extract text by blocks to automate this analysis across thousands of URLs.
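As a rough sketch of that measurement, the function below counts words per block and labels the page. It assumes you already know which blocks of the template are repetitive. The name `classify_page` is hypothetical, and the 0.6 and 0.5 cut-offs are this article's rules of thumb (taking the lower end of the 60-70% range), not thresholds published by Google.

```python
def classify_page(
    blocks: list[str],
    boilerplate: set[str],
    risk_below: float = 0.6,      # lower end of the article's 60-70% rule of thumb
    problem_below: float = 0.5,   # article's "problematic" rule of thumb
) -> tuple[float, str]:
    """Return (unique word ratio, label) for one page."""
    unique_words = 0
    total_words = 0
    for block in blocks:
        words = len(block.split())
        total_words += words
        if block.strip() not in boilerplate:
            unique_words += words
    ratio = unique_words / total_words if total_words else 0.0
    if ratio < problem_below:
        label = "problematic"
    elif ratio < risk_below:
        label = "risk zone"
    else:
        label = "ok"
    return ratio, label


# Invented sample: one product page and the template's known repeated blocks.
template_boilerplate = {"Free returns within 30 days.", "Contact us"}
page_blocks = [
    "Free returns within 30 days.",
    "Red wool scarf, hand-knitted in Scotland from local yarn.",
    "Contact us",
]

ratio, label = classify_page(page_blocks, template_boilerplate)
print(f"{ratio:.0%} unique -> {label}")
```

Here 9 of the 16 words are unique, so the page lands in the risk zone under these assumed cut-offs.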

What corrective actions should you take if you have excessive boilerplate?

First solution: enrich unique content. Add category descriptions, contextual introductions, specific editorial blocks. The goal is to reverse the ratio in favor of differentiated content.

Second lever: clean up unnecessary boilerplate. Those three paragraphs of legal notices repeated on every product page? Condense them or move them to a footer link. That sidebar with 15 recurring links? Lighten it or make it contextual.

Third option for extreme cases: deindex or consolidate. If certain pages provide no unique value and are essentially templates, set them to noindex or merge them. It's better to have 100 solid pages than 1,000 empty shells.

How do you prevent this problem on new content and architectures?

Establish an editorial charter with minimum unique content requirements by page type. Example: a minimum of 300 unique words for a category, 150 for a product sheet. These thresholds help keep boilerplate in the minority.
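Such a charter can be enforced with a simple check before publication. This is a minimal sketch: the page-type keys, the `MIN_UNIQUE_WORDS` table and the `meets_charter` function are hypothetical names, and the word counts are simply the example figures given above.

```python
# Example minimums from the editorial charter above (unique words per page type).
MIN_UNIQUE_WORDS = {"category": 300, "product": 150}


def meets_charter(page_type: str, unique_text: str) -> bool:
    """Check whether a page's unique text reaches the minimum for its type."""
    minimum = MIN_UNIQUE_WORDS.get(page_type)
    if minimum is None:
        raise ValueError(f"No minimum defined for page type {page_type!r}")
    return len(unique_text.split()) >= minimum


# Invented drafts: a product description of 150 words and a category intro of 299.
product_draft = "word " * 150
category_draft = "word " * 299

print(meets_charter("product", product_draft))    # prints "True"
print(meets_charter("category", category_draft))  # prints "False"
```

Word count is only a floor: it says nothing about whether those words add real value, so the check complements editorial review rather than replacing it.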

When designing your templates, think modularity. Rather than an identical 500-word footer everywhere, create light versions for certain pages or make some blocks conditional based on context.

- Audit the boilerplate to unique content ratio on a sample of each page type

- Aim for at least 60-70% unique content per page

- Systematically enrich pages poor in specific content

- Clean up superfluous repetitive elements or condense them

- Deindex pages with no real unique value

- Establish minimum unique content requirements in your editorial guidelines

- Design flexible templates rather than rigid ones

- Regularly monitor new sections to prevent drift

❓ Frequently Asked Questions

Should I remove legal notices from my pages to avoid boilerplate?

My e-commerce site has short product pages with a large footer: is that risky?

Does boilerplate in sidebars count in Google's assessment?

Is there a precise percentage of boilerplate that should not be exceeded?

Are pagination pages with little editorial content affected?

🎥 From the same video 10

Other SEO insights extracted from this same Google Search Central video · published on 25/04/2024
