Official statement

Other statements from this video

- Do Google's opinions on Web3 really reflect the search engine's position?

- Is content in private communities really invisible to Google?

- Should creators really control what Google indexes?

- Why can't Google index content without a crawlable URL?

- Will Google abandon traditional crawling to index the social web?

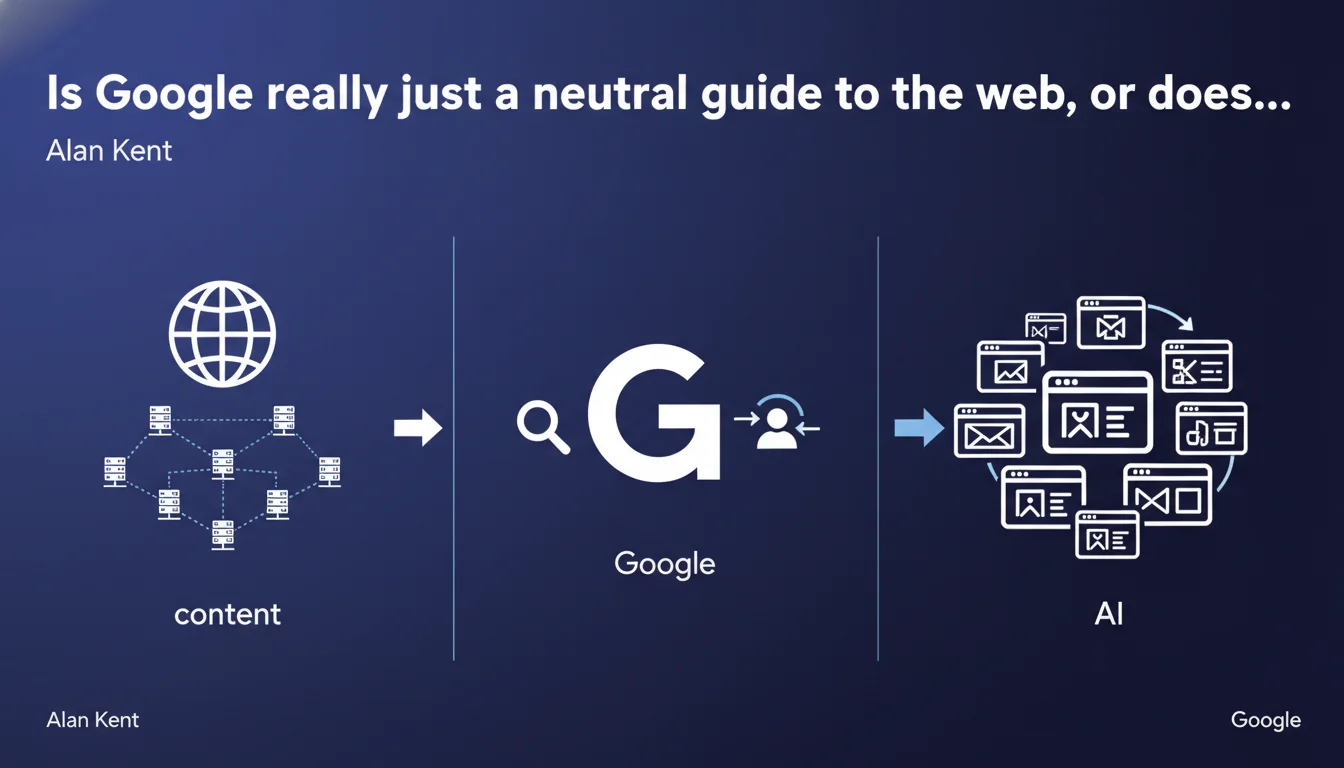

Alan Kent reminds us that Google doesn't host the content it indexes — it simply points to servers distributed around the world. The search engine acts as a traffic director, not a hosting provider. For SEO professionals, this means that the quality of your site's technical infrastructure remains critical for being discovered and crawled effectively.

What you need to understand

This statement comes at a time when Google is regularly accused of favoring its own features (featured snippets, People Also Ask, Knowledge Graph) to the detriment of third-party sites. Kent clarifies: the search engine doesn't host the pages, it indexes them and points to them.

Why is Google making such a point about this?

Because the confusion between indexing and hosting is common, even among regulators. Saying "Google displays my content" isn't accurate: it displays a link to your server. The distinction is both legal and technical.

In practice, this means that if your server is slow, inaccessible, or misconfigured, Google can't do anything to improve the user experience: it simply sends users to a failing resource.

What does this change for SEO in your daily work?

It reinforces the importance of technical infrastructure: server response time, CDN, DNS management, availability. Google doesn't compensate for your hosting weaknesses.

It also reminds us that the web remains decentralized by nature. Your content lives on your server. Google is just a giant index — not a centralized CMS.

- Google doesn't host your pages: it crawls them, indexes them, then sends users to your servers.

- The quality of your infrastructure directly impacts your visibility: if the server is slow or unstable, crawling and UX suffer.

- The web remains distributed: each site is responsible for its own hosting and availability.

- Google plays the role of traffic director, not content distributor. It directs traffic, but doesn't guarantee the experience once the user arrives on the site.

SEO Expert opinion

Does this statement really match what we observe in reality?

Yes, technically. Google doesn't serve your pages to users. It does keep a copy in its index, but a click on a result sends the user to your server.

But the distinction becomes blurry with featured snippets, AMP, Google Cache, and soon generative AI. These features display content directly in the SERPs, without the user clicking through to the source site. Saying Google "only directs" becomes an understatement.

Why this communication now?

Likely a response to regulatory pressure around Google's monopoly. By emphasizing the decentralized nature of the web, Kent defends the idea that Google remains a simple intermediary — not a gatekeeper that captures traffic.

Let's be honest: it's true in theory, but increasingly less true in practice. When 60% of mobile searches end without a click, it's hard to claim that Google "directs toward distributed content."

What are the consequences for SEOs?

You can no longer rely solely on traditional organic traffic. You also need to optimize for rich snippets, PAA, carousels — everything that captures attention before the click.

And paradoxically, it strengthens the importance of technical infrastructure: if Google directs well toward your servers, they still need to handle the load and respond quickly. Otherwise, the user leaves just as fast.

Practical impact and recommendations

What should you check first on your infrastructure?

Your server response time (TTFB) should be below 200 ms ideally. Beyond 600 ms, you start losing points on user experience and potentially on crawl budget.
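The thresholds above can be turned into a quick check. The following is a minimal sketch using only the Python standard library; the function names `classify_ttfb` and `measure_ttfb_ms` and the `ttfb-check/0.1` user agent are illustrative assumptions, not part of any Google tool. The measurement includes DNS, TCP, and TLS setup, so it is an approximation of what a first-time visitor or crawler would see from your location.

```python
import http.client
import time
from urllib.parse import urlsplit

# Thresholds from the text: under 200 ms is the target,
# beyond 600 ms is where problems start.
GOOD_MS = 200
SLOW_MS = 600


def classify_ttfb(ttfb_ms: float) -> str:
    """Map a TTFB measurement in milliseconds to a rough label."""
    if ttfb_ms < GOOD_MS:
        return "good"
    if ttfb_ms <= SLOW_MS:
        return "needs work"
    return "slow"


def measure_ttfb_ms(url: str, timeout: float = 10.0) -> float:
    """Approximate TTFB: time from sending the request until the
    status line and response headers have been received."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.netloc, timeout=timeout)
    else:
        conn = http.client.HTTPConnection(parts.netloc, timeout=timeout)
    path = parts.path or "/"
    if parts.query:
        path += "?" + parts.query
    start = time.perf_counter()
    try:
        # The connection is opened lazily here, so setup time is included.
        conn.request("GET", path, headers={"User-Agent": "ttfb-check/0.1"})
        conn.getresponse()  # returns once the headers have arrived
        return (time.perf_counter() - start) * 1000.0
    finally:
        conn.close()
```

To use it, call `classify_ttfb(measure_ttfb_ms("https://example.com/"))` with your own key pages in place of the placeholder URL, and repeat the measurement several times, since a single sample can be noisy.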

Make sure your hosting can handle traffic spikes. A server that goes down while Googlebot is crawling sends a direct negative signal.

How do you optimize the relationship between Google and your servers?

Use a CDN to distribute static content as close as possible to users. This reduces latency and improves overall availability.

Properly configure your robots.txt and sitemap.xml files to guide Googlebot toward your priority pages without wasting crawl budget on unnecessary sections.
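Before deploying a robots.txt change, it can help to confirm that priority pages stay crawlable and low-value sections are blocked. Here is a minimal sketch with Python's standard `urllib.robotparser`; the robots.txt content, the paths, and the `crawlable` helper are hypothetical examples. Note that this parser uses simple prefix matching and may not interpret wildcard rules the way Googlebot does, so treat the result as a sanity check rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration; the paths are assumptions.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Sitemap: https://example.com/sitemap.xml
"""


def crawlable(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> dict[str, bool]:
    """Return, for each URL, whether the given user agent may fetch it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(agent, url) for url in urls}


report = crawlable(ROBOTS_TXT, [
    "https://example.com/products/blue-widget",  # priority page
    "https://example.com/cart/checkout",         # low-value section
])
for url, allowed in report.items():
    print(f"{'allow' if allowed else 'BLOCK'}  {url}")
```

Running the same check against your real robots.txt and a list of strategic URLs from your sitemap is one way to catch an accidental `Disallow` before Googlebot does.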

Monitor server errors (5xx) in Search Console. A sudden spike may signal an infrastructure problem that directly impacts your visibility.
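Search Console reports these errors with some delay, so your own access logs can serve as a complementary, faster signal. The sketch below assumes logs in the common "combined" format and counts 5xx responses served to requests whose user agent contains "Googlebot". The sample lines are invented for illustration, and the user-agent test is naive: the string can be spoofed, so a production check would also need to verify the requester.

```python
import re
from collections import Counter

# Extracts the request path, status code, and user agent from a
# combined-format access log line.
LINE_RE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Invented sample lines, for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = "\n".join([
    f'66.249.66.1 - - [19/May/2022:10:00:01 +0000] "GET /products/ HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [19/May/2022:10:00:02 +0000] "GET /category/shoes HTTP/1.1" 503 312 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [19/May/2022:10:00:03 +0000] "GET /cart/ HTTP/1.1" 500 298 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    f'66.249.66.1 - - [19/May/2022:10:00:04 +0000] "GET /category/shoes HTTP/1.1" 503 312 "-" "{GOOGLEBOT_UA}"',
])


def googlebot_5xx(log_text: str) -> tuple[int, Counter]:
    """Return (number of Googlebot requests, 5xx count per path)."""
    total = 0
    errors: Counter = Counter()
    for line in log_text.splitlines():
        match = LINE_RE.search(line)
        if not match or "Googlebot" not in match["agent"]:
            continue  # skip unparseable lines and other visitors
        total += 1
        if match["status"].startswith("5"):
            errors[match["path"]] += 1
    return total, errors


total, errors = googlebot_5xx(SAMPLE_LOG)
error_rate = sum(errors.values()) / total if total else 0.0
print(f"Googlebot requests: {total}, 5xx rate: {error_rate:.0%}")
for path, count in errors.most_common():
    print(f"  {count}x {path}")
```

Grouping errors by path makes it easier to tell a site-wide outage from a single failing template or endpoint.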

- Measure TTFB on your main pages and bring it below 200 ms

- Implement a CDN to reduce geographic latency

- Check server availability with 24/7 monitoring (Uptime Robot, Pingdom, etc.)

- Analyze server logs to detect 5xx errors and fix them quickly

- Optimize crawl budget via robots.txt and sitemap to prioritize strategic pages

- Test server response under load (load testing) to anticipate traffic spikes

❓ Frequently Asked Questions

Does Google really store a complete copy of my pages?

Can a slow server really lower my ranking?

Does Google compensate for hosting problems?

Why does Google insist on the decentralized nature of the web?

Do featured snippets contradict this statement?


Other SEO insights extracted from this same Google Search Central video · published on 19/05/2022
