Official statement

Other statements from this video 9 ▾

- □ Why is Google replacing your title tags with H1 headings?

- □ Does Google really index titles modified by client-side JavaScript, or does it stick with the default?

- □ Should you abandon client-side JavaScript rendering to succeed in SEO?

- □ Should you abandon dynamic rendering for SEO?

- □ Does the URL inspection tool really reveal what Google actually sees during JavaScript rendering?

- □ Does JavaScript-rendered content really impact your Google indexation, and should you worry about it?

- □ Is server-side rendering really faster than client-side rendering for SEO?

- □ Can Lighthouse really diagnose your critical rendering problems for Google?

- □ Should you really crawl your website every three months to avoid technical problems?

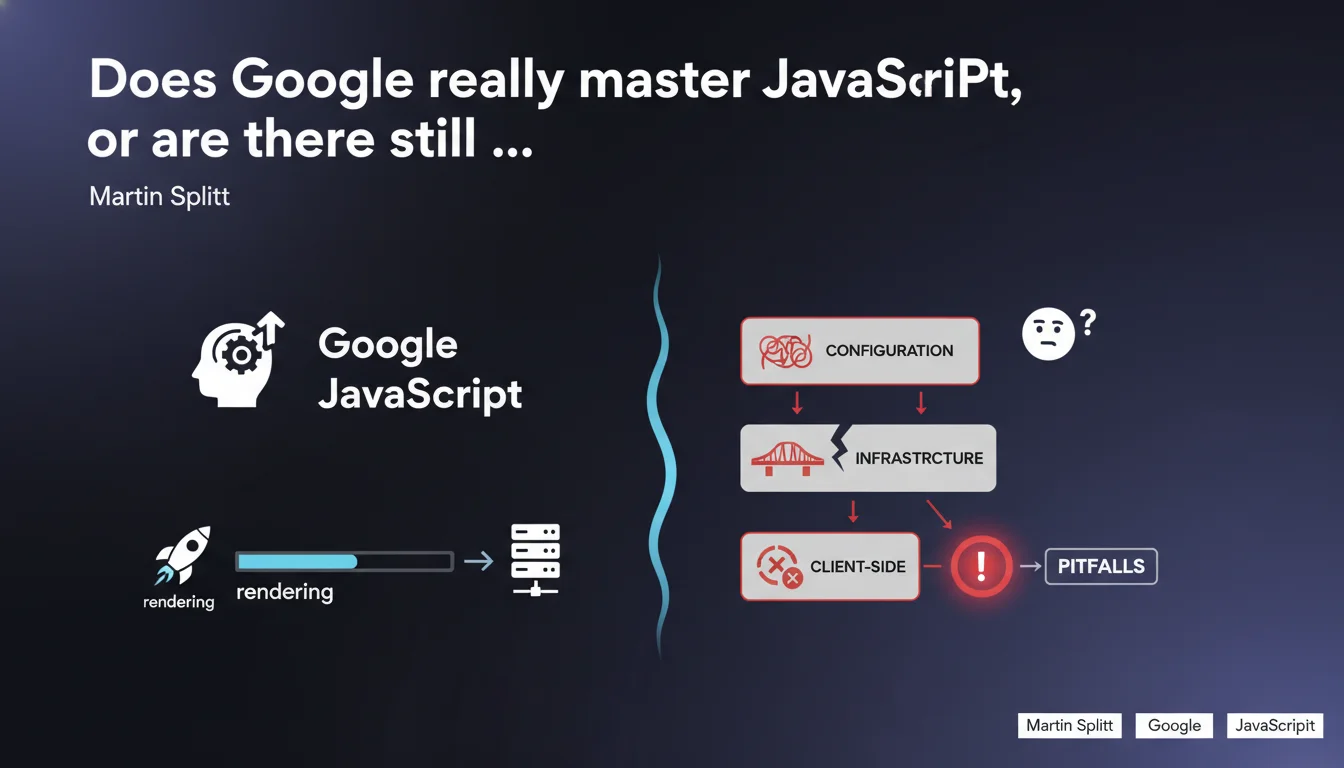

Google claims to have significantly improved its ability to render client-side JavaScript, but warns that problems persist depending on technical configurations. In practical terms: JS rendering works better than before, but it's not foolproof and depends heavily on your infrastructure.

What you need to understand

What does this JavaScript rendering improvement really mean?

Google says it has invested heavily in its rendering engine to process content generated dynamically by JavaScript. The modern Googlebot runs an up-to-date version of Chromium, which in theory lets it handle frameworks such as React, Vue, or Angular.

This doesn't mean all JavaScript sites are indexed optimally. Server configuration, load times, execution errors and external dependencies can still compromise rendering.

Why does Google remain cautious despite these improvements?

This statement contains a crucial nuance: capabilities have improved, certainly, but problems persist. Martin Splitt doesn't say "everything works perfectly," he says "it works better, but be careful." This is essential.

The variables influencing rendering are numerous: processing delays, crawl budget, blocked resources, console errors. A site can work perfectly in a regular browser and fail on Googlebot's side.

In which cases does JavaScript rendering still cause problems?

Typically, the affected sites are those whose JavaScript takes too long to execute, those that depend on unstable third-party resources, and those that generate content only after user interaction. Misconfigured lazy loading remains a frequent source of errors.

Google can also struggle with Single Page Applications (SPAs) that don't handle navigation properly on the server side or that don't implement hybrid rendering.

- Google's JavaScript rendering has improved, but it's not free from failures

- Problems depend on the specific technical infrastructure of each site

- Client-side rendering remains slower and less reliable than server-side rendering (SSR)

- JS execution errors invisible in the browser can block Googlebot

- Search Console allows you to detect certain problems, but not all

SEO Expert opinion

Does this claim match what we observe in the field?

Yes and no. The progress is real: sites built entirely in React that were not indexed a few years ago are indexed today. But calling these improvements "significant" requires nuance. JavaScript rendering remains fundamentally problematic for many configurations.

In dozens of audits, we find that Google does manage to crawl and index JS content, but often with significant delays, missing pages, or partially rendered content. The problem is no longer binary (it works/it doesn't), it has become contextual and unpredictable.

Is Google transparent about the real limitations of the system?

Not really. This statement remains vague on a crucial point: which types of configuration still cause problems? Martin Splitt mentions that this depends on "specific infrastructure," but without providing precise criteria. This has to be verified in each context.

We'd like to know: What are the execution time thresholds? Which console errors are tolerated? How many blocked resources does it take to break rendering? The absence of clear metrics forces empirical testing, which is costly in time and resources.

Has JavaScript rendering really become "safe" for SEO?

No. Let's be honest: even though Google has improved, SSR and static generation remain superior for indexing. Client-side rendering systematically introduces additional latency, because Googlebot must first crawl the page and then queue it for rendering.

This delay between crawling and indexing can last several hours or even several days on low-authority sites. For time-sensitive content (news, e-commerce with limited stock), this is a major disadvantage.

Practical impact and recommendations

Should you abandon client-side JavaScript for SEO?

Not necessarily, but you must implement a hybrid rendering strategy as soon as possible. Server-Side Rendering (SSR) or static generation (SSG) should be prioritized for critical content: category pages, product sheets, blog articles.

Client-side JavaScript remains acceptable for secondary elements such as filters, animations, and interactive elements that are not essential to indexable content. The idea is to ensure that the base HTML contains the essentials, even if JavaScript fails.
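One way to check this principle is to look at the HTML exactly as the server returns it, before any script runs. The following is a minimal sketch that uses only Python's standard library. The function names (`source_text`, `missing_from_source`) and the sample pages are hypothetical, and the phrase matching is a simple case-insensitive substring test, not a model of how Google evaluates content.

```python
from html.parser import HTMLParser


class SourceTextExtractor(HTMLParser):
    """Collects text nodes from raw HTML, ignoring script, style and template content."""

    SKIPPED = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # > 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.chunks.append(data)


def source_text(html: str) -> str:
    """Return the lower-cased, whitespace-normalized text present without running JS."""
    parser = SourceTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.chunks).split()).lower()


def missing_from_source(html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do NOT appear in the text of the raw HTML."""
    text = source_text(html)
    return [p for p in phrases if " ".join(p.split()).lower() not in text]


# Hypothetical pages: an empty client-side shell vs. a server-rendered page.
SPA_SHELL = (
    "<html><head><title>Shop</title>"
    '<script>window.__DATA__ = {"name": "Blue running shoes"}</script>'
    '</head><body><div id="root"></div></body></html>'
)
SSR_PAGE = (
    "<html><head><title>Shop</title></head>"
    "<body><h1>Blue running shoes</h1><p>In stock</p></body></html>"
)

print(missing_from_source(SPA_SHELL, ["Blue running shoes"]))
print(missing_from_source(SSR_PAGE, ["Blue running shoes", "In stock"]))
```

In the shell page the product name exists only inside a script, so it is reported as missing. In the server-rendered page both phrases are already in the markup.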

How do you verify that Google is rendering your JavaScript content correctly?

Use the URL inspection tool in Search Console, but don't rely solely on it. The tool sometimes uses different resources than regular crawling, so its result may not match what actually gets indexed. Systematically compare the "crawled" version with the "rendered" version.

Implement automated regression tests that verify critical content appears in the rendered HTML. Tools like Puppeteer or Playwright let you approximate Googlebot's rendering in a headless browser and catch errors before deploying to production.
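The comparison between the "crawled" and "rendered" versions can be sketched as a small snapshot diff. This example assumes you already have both HTML strings: the raw server response, and the DOM serialized after rendering (for instance from a headless browser's `page.content()` in Playwright or Puppeteer). The names `PageSignals`, `extract_signals` and `compare_snapshots` are hypothetical, and a headless browser only approximates Googlebot, so treat the result as a warning signal, not as proof of what Google indexes.

```python
from html.parser import HTMLParser


class PageSignals(HTMLParser):
    """Collects the <title> text, the <h1> texts and the link targets of one snapshot."""

    def __init__(self):
        super().__init__()
        self._current = None  # "title" or "h1" while inside that element
        self._buffer = []
        self.title = ""
        self.h1 = []
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag in ("title", "h1"):
            self._current = tag
            self._buffer = []
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)

    def handle_data(self, data):
        if self._current:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            text = " ".join("".join(self._buffer).split())
            if tag == "title":
                self.title = text
            else:
                self.h1.append(text)
            self._current = None


def extract_signals(html: str) -> dict:
    parser = PageSignals()
    parser.feed(html)
    parser.close()
    return {"title": parser.title, "h1": parser.h1, "links": parser.links}


def compare_snapshots(raw_html: str, rendered_html: str) -> dict:
    """Report what exists only after JavaScript has run."""
    raw = extract_signals(raw_html)
    rendered = extract_signals(rendered_html)
    return {
        "title_changed": raw["title"] != rendered["title"],
        "h1_only_after_js": [h for h in rendered["h1"] if h not in raw["h1"]],
        "links_only_after_js": sorted(rendered["links"] - raw["links"]),
    }
```

A regression test could fail the build when `h1_only_after_js` or `links_only_after_js` is non-empty on a strategic page, since those elements depend entirely on successful JavaScript execution.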

What concrete actions should you take right now?

- Audit JavaScript execution times with Chrome DevTools and identify blocking scripts

- Verify that main content appears in source HTML, not just after JS execution

- Implement server-side rendering (SSR) or pre-generation (SSG) for strategic pages

- Test each new JS feature with the URL inspection tool in Search Console

- Monitor console errors that could block Googlebot rendering

- Implement a graceful degradation strategy: the site must work even if JavaScript fails

- Avoid blocking critical resources in robots.txt (essential CSS, JS)

- Consider dynamic rendering as a temporary solution if SSR is complex to implement
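The robots.txt item in the list above lends itself to a quick automated check. The sketch below uses Python's standard `urllib.robotparser`. The function name `blocked_resources`, the sample rules and the example.com URLs are hypothetical. Note that this parser's matching is simpler than Google's own robots.txt handling (for example around wildcard patterns and rule precedence), so it is a first-pass check only.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt content disallows for user_agent."""
    parser = RobotFileParser()
    # parse() takes the file content as an iterable of lines; no network access needed.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that accidentally blocks the JavaScript bundle directory.
ROBOTS_TXT = """\
User-agent: *
Disallow: /assets/js/
Disallow: /admin/
"""

CRITICAL_RESOURCES = [
    "https://example.com/assets/js/app.js",
    "https://example.com/assets/css/main.css",
]

print(blocked_resources(ROBOTS_TXT, CRITICAL_RESOURCES))
```

Here the script bundle is reported as blocked while the stylesheet is not. Any critical resource in the returned list is one Googlebot may be unable to fetch when rendering the page.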

❓ Frequently Asked Questions

Does Google really index all JavaScript content today?

Should I abandon React or Vue for my site if I want to rank well?

Is the URL inspection tool in Search Console enough to verify rendering?

What are the main problems that still block Google's JavaScript rendering?

Is dynamic rendering a good solution for SEO?

Other SEO insights extracted from this same Google Search Central video · published on 05/10/2022
