Official statement

What you need to understand

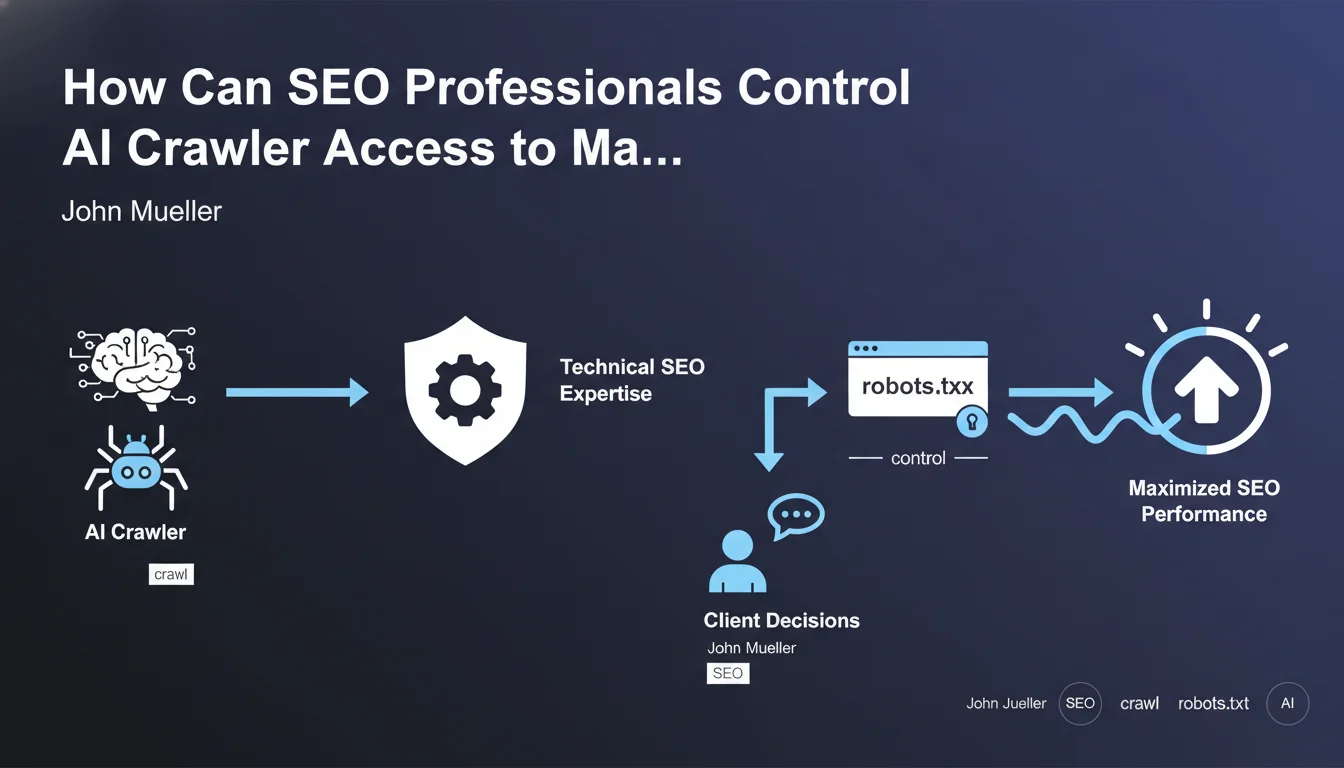

Google officially acknowledges that technical SEO professionals hold a strategic position in managing AI crawlers. The statement underscores their role as expert advisors to clients facing the growing challenges posed by artificial intelligence.

SEO professionals have in-depth knowledge of crawling mechanisms and of the tools that control them: the robots.txt file, meta robots tags, and robots.txt rules that target specific AI user agents (GPTBot, Google-Extended, etc.).

This expertise lets them advise clients strategically on how much access to grant these new crawlers, striking a delicate balance between protecting content and preserving visibility.
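To make the robots.txt side of this concrete, here is a minimal sketch using Python's standard-library `urllib.robotparser`. The policy, the `/premium/` path and `example.com` are hypothetical: AI crawlers are kept out of one section, while the catch-all group leaves Googlebot and other search crawlers untouched. Two caveats: Google-Extended is a robots.txt product token rather than a separate crawler, and `urllib.robotparser` applies rules in file order, whereas Google documents most-specific-match semantics, so treat this as a rough check rather than a replica of any search engine's parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: AI crawlers are kept out of /premium/ but may read the
# rest of the site; every other agent (including Googlebot) matches the
# catch-all group and keeps full access.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: Google-Extended
User-agent: CCBot
Disallow: /premium/

User-agent: *
Allow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content held in memory (no network request)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def access_matrix(robots_txt: str, agents: list[str], urls: list[str]) -> dict[tuple[str, str], bool]:
    """Map every (agent, url) pair to whether the rules allow the fetch."""
    parser = build_parser(robots_txt)
    return {(agent, url): parser.can_fetch(agent, url) for agent in agents for url in urls}


if __name__ == "__main__":
    agents = ["Googlebot", "GPTBot", "Google-Extended", "CCBot"]
    urls = ["https://example.com/blog/post", "https://example.com/premium/report"]
    for (agent, url), allowed in access_matrix(ROBOTS_TXT, agents, urls).items():
        print(f"{agent:<16} {url:<40} {'allowed' if allowed else 'blocked'}")
```

Run as a script, it prints one line per agent/URL pair: the three AI tokens are blocked only on the `/premium/` URL, and Googlebot is allowed everywhere.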

- Technical SEO professionals are well placed to guide business decisions regarding AI

- Mastery of robots.txt and crawl directives becomes a major strategic asset

- Selective blocking of AI bots must be carefully planned so that rules aimed at AI crawlers do not also block search crawlers such as Googlebot, which would harm organic rankings

- A nuanced approach is necessary depending on each client's business objectives
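The point about selective blocking can be turned into a simple pre-deployment guardrail: before publishing a robots.txt change aimed at AI bots, confirm that search crawlers can still fetch key URLs. This sketch assumes `Googlebot` and `bingbot` are the agents to protect and uses two hypothetical files. It shows how a blanket `User-agent: *` block intended for AI bots also shuts out search crawlers, while a targeted group does not.

```python
from urllib.robotparser import RobotFileParser

# Assumption: the search crawlers whose access must never be lost.
SEARCH_CRAWLERS = ("Googlebot", "bingbot")

# Over-broad: a blanket block meant for AI bots also applies to every crawler
# that has no group of its own, search crawlers included.
OVER_BROAD = """\
User-agent: *
Disallow: /
"""

# Targeted: only the named AI crawlers are blocked.
TARGETED = """\
User-agent: GPTBot
User-agent: CCBot
Disallow: /
"""


def blocked_search_crawlers(robots_txt: str, urls: list[str],
                            agents: tuple[str, ...] = SEARCH_CRAWLERS) -> list[tuple[str, str]]:
    """Return the (agent, url) pairs that the rules would block for search crawlers."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [(agent, url) for agent in agents for url in urls if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    key_urls = ["https://example.com/", "https://example.com/products/shoes"]
    for name, rules in [("over-broad", OVER_BROAD), ("targeted", TARGETED)]:
        problems = blocked_search_crawlers(rules, key_urls)
        print(name, "->", problems or "search crawlers unaffected")
```

An empty list means the change leaves search crawling intact; any returned pair is a reason to stop and revise the rules before deployment.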

SEO Expert opinion

This statement is consistent with the market shift I have been observing since 2023: SEO professionals who understand the impact of AI on crawl budget and visibility do have a major advantage as advisors.

However, this position needs to be nuanced. Systematically blocking AI bots may seem protective in the short term, but could deprive a site of visibility in future AI-enhanced search tools (SGE, Copilot, Perplexity). The decision must be aligned with the client's overall strategy.

I also observe that certain sectors (publishing, premium content creation) have more to gain from restricting access, while others (e-commerce, local services) benefit from maximum exposure. Advice must be tailored to the client's business model.

Practical impact and recommendations

- Audit your current robots.txt file right away to identify which AI bots have access to your content

- Document all known AI user agents: GPTBot (OpenAI), Google-Extended (Google), CCBot (Common Crawl), ClaudeBot, anthropic-ai and Claude-Web (Anthropic), etc.

- Establish a clear policy with your clients regarding their positioning toward generative AI: maximum protection or controlled exposure?

- Never block classic Googlebot if you want to maintain your traditional organic search rankings

- Test progressive blocking: start by blocking Google-Extended and observe the impact on traffic and rankings over 2-3 months

- Monitor server logs to spot new AI bots as they appear (new market entrants)

- Create differentiated segments: certain site sections can be open to AI bots (promotional content) while others are protected (premium content)

- Document your recommendations with business scenarios: potential impact of blocking vs. exposure, depending on the client's revenue model

- Educate your clients about the issues: they must understand that this decision has implications for their future visibility in AI tools

- Review the strategy quarterly, as the AI bot ecosystem evolves rapidly, with new players and new rules
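Log monitoring can start very simply. The sketch below assumes access logs in the common Apache/Nginx "combined" format, where the User-Agent is the last quoted field. It counts hits from a watch list of AI agents and surfaces other bot-like user agents that are not on the list as candidates for review. The sample lines and the `NewAIBot` agent are made up, the watch lists are assumptions to keep current against each vendor's documentation, and user-agent strings can be spoofed, so a match is a signal rather than proof. Google-Extended is absent because it is a robots.txt token, not a user agent sent with requests.

```python
import re
from collections import Counter

# In the combined log format, the User-Agent is the last double-quoted field.
UA_FIELD = re.compile(r'"([^"]*)"\s*$')
# Rough heuristic for "this looks like an automated client".
BOT_HINT = re.compile(r"bot|crawler|spider", re.IGNORECASE)

# Assumed watch lists: keep them in sync with each vendor's documentation.
KNOWN_AI_AGENTS = ("GPTBot", "CCBot", "ClaudeBot", "anthropic-ai", "Claude-Web", "PerplexityBot")
KNOWN_SEARCH_AGENTS = ("Googlebot", "bingbot")

# Made-up sample lines for illustration only.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jan/2025:10:00:00 +0000] "GET /blog/post HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2025:10:00:02 +0000] "GET / HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.9 - - [10/Jan/2025:10:00:05 +0000] "GET /premium/report HTTP/1.1" 403 128 "-" "Mozilla/5.0 (compatible; NewAIBot/0.1)"',
    '192.0.2.44 - - [10/Jan/2025:10:00:07 +0000] "GET /products HTTP/1.1" 200 4096 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '203.0.113.5 - - [10/Jan/2025:10:01:00 +0000] "GET /blog/other HTTP/1.1" 200 640 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
]


def classify_log(lines):
    """Count hits per known AI agent and collect unlisted bot-like user agents."""
    ai_hits = Counter()
    unknown_bots = Counter()
    for line in lines:
        match = UA_FIELD.search(line)
        if match is None:
            continue  # not a combined-format line
        ua = match.group(1)
        ua_lower = ua.lower()
        ai_agent = next((name for name in KNOWN_AI_AGENTS if name.lower() in ua_lower), None)
        if ai_agent is not None:
            ai_hits[ai_agent] += 1
        elif BOT_HINT.search(ua) and not any(s.lower() in ua_lower for s in KNOWN_SEARCH_AGENTS):
            unknown_bots[ua] += 1
    return ai_hits, unknown_bots


if __name__ == "__main__":
    ai_hits, unknown_bots = classify_log(SAMPLE_LOG)
    print("Known AI crawlers:", dict(ai_hits))
    print("Unlisted bot-like agents to review:", dict(unknown_bots))
```

Feeding it a day's log and comparing the counts before and after a robots.txt change is one low-cost way to follow the progressive-blocking test over the two to three months suggested above.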

Implementing a coherent AI crawler management strategy requires sharp technical expertise and constant monitoring. The implications are both technical (server configuration, robots.txt, HTTP headers) and strategic (business positioning, intellectual property protection).

For companies that want to fine-tune this emerging dimension of SEO, particularly in sensitive sectors or with premium content stakes, guidance from a specialized SEO agency provides personalized analysis and a strategy tailored to specific objectives, while avoiding configuration errors that could negatively impact traditional search rankings.
