Official statement

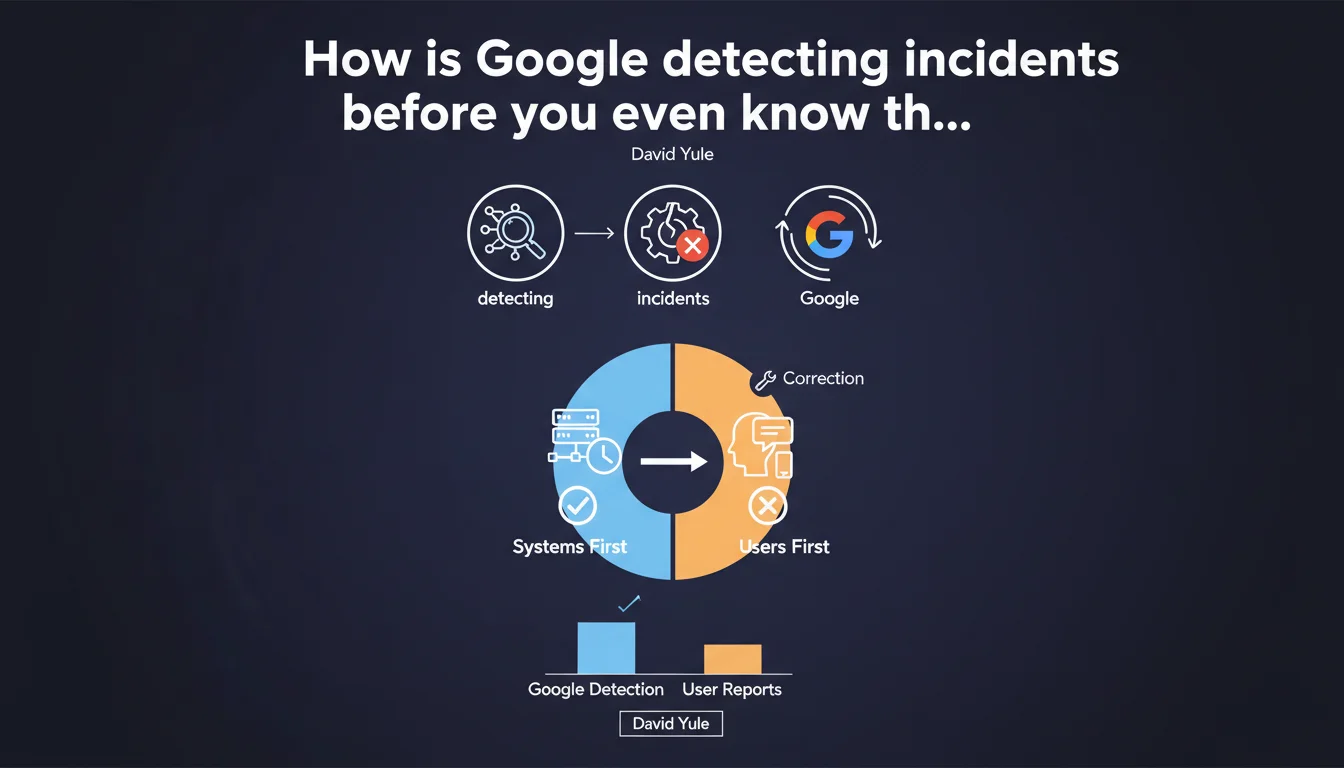

Google measures how many incidents its SRE teams detect internally versus how many are reported by users first. When a user reports a problem before Google notices it, that reveals a gap in Google's monitoring. The goal: identify malfunctions before they affect the user experience or go viral on social media.

What you need to understand

What is an SRE team and why is this monitoring critical?

SRE (Site Reliability Engineering) teams at Google have a clear mission: ensure the availability and stability of services. They continuously monitor performance, response times, server errors, indexation anomalies — everything that can degrade user experience.

When David Yule explains that the goal is to detect incidents internally before users report them on Twitter or elsewhere, he reveals a key metric for Google: the ratio of incidents detected internally to incidents reported externally. The more this ratio leans toward internal detection, the better the monitoring is performing.
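The statement does not say how Google computes this ratio, so the following is only a minimal sketch under one assumption: each incident is tagged with who noticed it first. The `Incident` class, the function name, and the sample incidents are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Incident:
    name: str
    detected_internally: bool  # True if monitoring caught it before any user report


def internal_detection_ratio(incidents: list[Incident]) -> float:
    """Share of incidents first caught by internal monitoring (0.0 to 1.0)."""
    if not incidents:
        raise ValueError("no incidents to measure")
    internal = sum(1 for incident in incidents if incident.detected_internally)
    return internal / len(incidents)


# Hypothetical incident log, for illustration only.
incidents = [
    Incident("indexing delay", True),
    Incident("crawl spike", True),
    Incident("ranking anomaly", False),  # a user reported this one first
    Incident("serving errors", True),
]

ratio = internal_detection_ratio(incidents)
print(f"{ratio:.0%} detected internally")  # 75% detected internally
```

Every incident that lands in the "reported externally" bucket points to a monitoring gap worth closing.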

What does it mean when a user detects an incident first?

It's an alarm signal. If SEO professionals or webmasters have to alert Google to an indexation issue, a crawl problem, or unusual ranking behavior, it means Google's monitoring systems weren't sensitive enough. That gap in monitoring must then be closed.

For us as SEO practitioners, this explains why certain bugs can go unnoticed for hours or even days, until someone tweets about it and the team reacts. Google implicitly acknowledges that its monitoring is not infallible.

Why is Google being transparent about its internal process?

This statement is rare. Google communicates little about its internal mechanics. Here, David Yule lifts the veil on an operational performance metric: the ability to anticipate problems rather than react to them.

For SEOs, this is critical information: if you detect an incident and Google doesn't react immediately, that's not necessarily bad faith. It may be a blind spot in Google's monitoring that gets corrected after your report.

- Google's SRE teams measure their effectiveness by their ability to detect incidents before users do.

- An incident reported by a user reveals a gap in monitoring that must be corrected.

- Google implicitly acknowledges that its monitoring system is not perfect and must be continuously adjusted.

- These monitoring gaps explain why certain SEO bugs go unnoticed for hours before external reporting triggers a response.

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Yes, and it's actually reassuring. How many times have we seen massive indexation anomalies, unexplained traffic drops, or completely erratic SERPs, with Google reacting only after a flood of tweets? This statement confirms what we suspected: Google's monitoring has limits.

The fact that they measure this metric shows they're aware of the problem. But let's be honest: this transparency doesn't change our day-to-day reality. We'll continue reporting bugs, and they'll continue patching afterward. [To verify]: is this ratio improving over time? There is no public data on that.

What nuances should be added to this claim?

Google is talking about catching incidents before they are reported on social media. This implies that minor incidents, those affecting isolated sites or obscure niches, can go completely unnoticed. If nobody tweets, there is no signal, and potentially no detection.

Another point: this logic applies to systemic incidents (global indexation bug, Googlebot outage, etc.). But what about localized problems on a single site or cluster of pages? There, Google's monitoring is probably blind, and it's up to us to diagnose the problem.

What does this statement reveal about Google's internal culture?

David Yule speaks of a performance metric, which is the language of an SRE. At Google, everything is quantified, measured, optimized. The fact that they track the ratio of internal to external incident detection shows a culture of continuous improvement.

But beware: this obsession with metrics can also create biases. If the SRE team is evaluated on their ability to detect incidents first, they risk over-optimizing this metric at the expense of other priorities — like transparency or resolution speed.

Practical impact and recommendations

What should you do concretely when you detect an incident?

First, document everything: screenshots, Search Console logs, Analytics data, SERP captures. The more tangible evidence you have, the more seriously your report will be taken. Google receives thousands of vague complaints — the one that arrives with precise data stands out.

Next, report through the right channels. Twitter can work if you have an audience or if others confirm your observation, but the official forums (Google Search Central) and feedback forms remain the preferred routes. The catch: response times are often long.

What mistakes should you avoid when you suspect a Google bug?

Don't cry wolf too quickly. Before reporting an incident, verify that it's not a local problem: a server outage, a misconfigured robots.txt, a manual penalty, or an algorithm change that affects your site specifically. False positives damage your credibility.

Another classic mistake: waiting passively. If you detect something odd and Google doesn't react, keep investigating on your end. Sometimes what looks like a Google bug is actually a bad signal your own site is sending, one that you need to fix.
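One of the local checks above, the misconfigured robots.txt, can be ruled out quickly. The sketch below uses Python's standard `urllib.robotparser`; the sample robots.txt contents, the URL, and the `googlebot_blocked` helper are hypothetical. Note that this parser does not necessarily interpret wildcard rules the way Google does, so treat the result as a first check rather than a verdict.

```python
from urllib.robotparser import RobotFileParser


def googlebot_blocked(robots_txt: str, url: str) -> bool:
    """Return True if the given robots.txt text disallows Googlebot for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch("Googlebot", url)


# Hypothetical robots.txt left over from a staging environment.
misconfigured = "User-agent: *\nDisallow: /\n"
# Hypothetical healthy file that only hides the admin area.
healthy = "User-agent: *\nDisallow: /admin/\n"

page = "https://example.com/blog/article"
print(googlebot_blocked(misconfigured, page))  # True
print(googlebot_blocked(healthy, page))        # False
```

If the first case matches your site, the problem is on your side and there is nothing to report to Google.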

How to improve your own monitoring to anticipate problems?

Set up automatic alerts for a traffic drop exceeding X%, a sudden drop in indexed pages, or an increase in 4xx/5xx errors in Search Console. The earlier you detect a problem, the more damage you can limit.

Use third-party monitoring tools (Semrush, Ahrefs, OnCrawl, etc.) that can spot anomalies Search Console doesn't capture immediately. And most importantly: cross-reference sources. An isolated signal can be noise; three converging signals are a pattern.
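The alert rules described above can be sketched as a simple threshold check. Everything here is an assumption for illustration: the metric names, the threshold percentages (the "X%" is yours to choose), and the sample figures. In practice the numbers would come from your own exports of Search Console or analytics data.

```python
def percent_change(baseline: float, current: float) -> float:
    """Signed change from baseline to current, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (current - baseline) / baseline * 100.0


# (metric, direction to watch, threshold in percent); all values are hypothetical.
RULES = [
    ("organic_clicks", "drop", 30.0),
    ("indexed_pages", "drop", 10.0),
    ("server_errors_5xx", "rise", 50.0),
]


def check_alerts(baseline: dict, current: dict, rules=RULES) -> list[str]:
    """Return the metrics whose change exceeds their threshold."""
    alerts = []
    for metric, direction, limit in rules:
        change = percent_change(baseline[metric], current[metric])
        breached = change < -limit if direction == "drop" else change > limit
        if breached:
            alerts.append(metric)
    return alerts


# Hypothetical figures: last week's average versus today.
baseline = {"organic_clicks": 1000, "indexed_pages": 5000, "server_errors_5xx": 20}
current = {"organic_clicks": 650, "indexed_pages": 4800, "server_errors_5xx": 45}

print(check_alerts(baseline, current))  # ['organic_clicks', 'server_errors_5xx']
```

Comparing against a rolling baseline rather than a single previous day reduces false alarms from normal weekday and weekend swings.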
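The cross-referencing rule of thumb above can be written down as a small classifier. This is only a sketch: the source names and the cutoff of three converging signals mirror the paragraph, and the `classify` function and its labels are hypothetical.

```python
def classify(signals: dict[str, bool], min_converging: int = 3) -> str:
    """Label an anomaly by how many independent sources confirm it."""
    confirmed = sum(1 for flagged in signals.values() if flagged)
    if confirmed >= min_converging:
        return "pattern"
    if confirmed == 0:
        return "no anomaly"
    return "possible noise"


# Hypothetical readings from three monitoring sources.
print(classify({"search_console": True, "analytics": False, "third_party": False}))
# possible noise
print(classify({"search_console": True, "analytics": True, "third_party": True}))
# pattern
```

Only a "pattern" result is worth documenting and reporting; "possible noise" is a cue to keep watching.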

- Systematically document every incident with screenshots, logs, and quantified data before reporting.

- Verify the problem isn't local (server, configuration, penalty) before blaming Google.

- Use official channels (Search Central, feedback forms) rather than relying solely on Twitter.

- Set up automatic alerts on critical KPIs: traffic, indexation, server errors.

- Cross-reference monitoring sources (Search Console, Analytics, third-party tools) to validate anomalies.

- Don't stay passive: if Google doesn't react, continue investigating on your side to identify potential internal issues.

❓ Frequently Asked Questions

Does Google fix every incident reported by users?

Why doesn't Google communicate more about ongoing incidents?

How can you tell whether a traffic drop is caused by a Google bug or by an internal problem?

Do Google's SRE teams work on all services (Search, Ads, Analytics)?

Should you report an incident even if you're not sure it's a Google bug?

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 03/10/2024
