Official statement

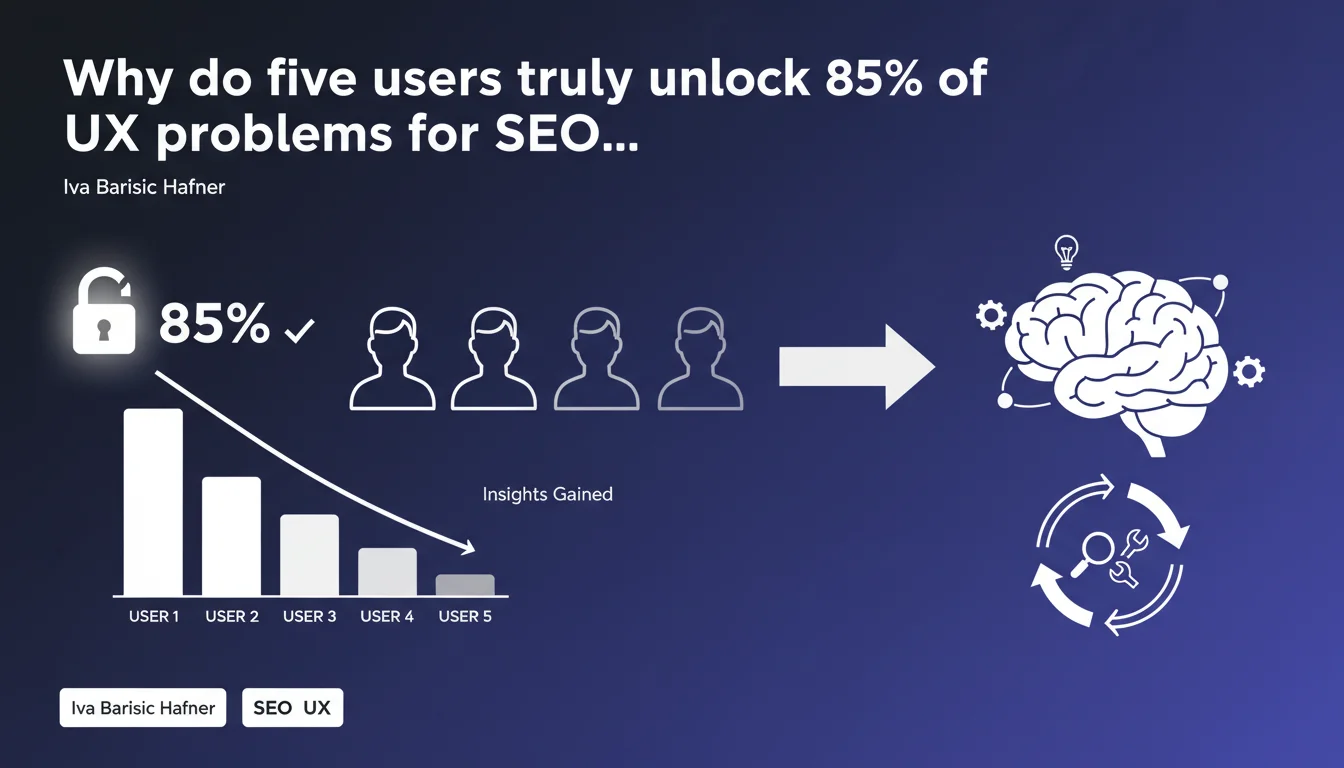

Google reminds us that in qualitative research, five participants are sufficient to identify the majority of user experience issues. Beyond that point, new insights drop off dramatically. For SEOs working on UX and Core Web Vitals, this means you can get actionable feedback without recruiting dozens of testers.

What you need to understand

Where does this five-user rule come from?

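Google's statement is based on a principle established in UX research since the 1990s (Nielsen and Landauer's problem-discovery model): the discovery of usability problems follows a curve of diminishing returns, because each new tester mostly re-encounters problems already seen. With the commonly cited average of 31% of problems found per tester, the first tester reveals roughly 31% of issues, the second adds about 21%, the third about 15%, and so on, until five testers have found about 85%.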

Beyond five participants, you enter a zone of diminishing returns: new insights become marginal, repetitive, or concern very specific edge cases. The rule lets you balance time and budget against the value each additional session brings.
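To make the diminishing-returns curve concrete, here is a minimal Python sketch of the Nielsen-Landauer model, in which the share of problems found by n testers is 1 − (1 − L)^n. The value L = 0.31 (the average probability that a single tester hits a given problem) is the figure commonly cited from that research, not something Google states; your own site may have a higher or lower L.

```python
# Sketch of the Nielsen-Landauer problem-discovery model:
# share of problems found by n testers = 1 - (1 - L) ** n,
# where L is the average probability that one tester hits a given problem.
# L = 0.31 is the commonly cited average (an assumption for your own site).


def share_found(n_users: int, hit_rate: float = 0.31) -> float:
    """Expected fraction of usability problems found with n_users testers."""
    return 1 - (1 - hit_rate) ** n_users


def discovery_table(max_users: int, hit_rate: float = 0.31) -> list[tuple[int, float, float]]:
    """Return (n, cumulative share found, marginal gain of the n-th tester) rows."""
    rows = []
    previous = 0.0
    for n in range(1, max_users + 1):
        cumulative = share_found(n, hit_rate)
        rows.append((n, cumulative, cumulative - previous))
        previous = cumulative
    return rows


if __name__ == "__main__":
    for n, cumulative, marginal in discovery_table(8):
        print(f"{n} users: {cumulative:5.1%} found (+{marginal:.1%})")
```

With L = 0.31, five testers find roughly 84% of problems and the sixth adds fewer than 5 percentage points. A lower L (problems that only some users hit) shifts the curve to the right, so more sessions are needed for the same coverage.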

Why is Google communicating about this topic now?

Iva Barisic Hafner, who works at Google, is restating a fundamental principle of user research that is often misunderstood. Many people think you need large samples to obtain reliable data; that is true for quantitative research, not for qualitative research.

In SEO, this distinction is crucial. If you test the usability of a conversion page or the accessibility of a navigation menu, five observation sessions are typically enough to spot around 85% of friction points. To statistically validate a click-through rate, however, you need a much larger volume.
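For the quantitative side, a rough sense of "much larger volume" comes from the standard sample-size formula for comparing two proportions. This sketch assumes a two-sided two-proportion z-test (normal approximation) at 5% significance and 80% power; the 5% to 6% CTR figures are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(p_control: float, p_variant: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Users needed in EACH variant to detect p_control -> p_variant with a
    two-sided two-proportion z-test (normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    variance = p_control * (1 - p_control) + p_variant * (1 - p_variant)
    effect = p_variant - p_control
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical example: detect a CTR lift from 5% to 6%.
print(sample_size_per_variant(0.05, 0.06))
```

For those inputs the formula asks for roughly 8,000 users per variant, well over a thousand times the size of a five-person usability study.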

What is the direct implication for an SEO practitioner?

An SEO optimizing user experience (navigation, readability, conversion path) can run lightweight qualitative tests — five users observed in real conditions — to quickly identify what's blocking progress.

No need to wait for a panel of 50 people. You gain agility and responsiveness, which is essential when problems must be fixed before an audit or a migration.

- Qualitative research: 5 users are enough to identify roughly 85% of usability and UX issues

- Diminishing returns: beyond 5, new insights are marginal and repetitive

- Key distinction: this principle does not apply to quantitative validation (A/B testing, conversion rates, etc.)

- SEO agility: allows rapid testing without mobilizing dozens of participants or heavy budgets

- Direct observation: effective for detecting navigation friction, accessibility issues, confusion zones

SEO Expert opinion

Is this rule universally applicable in SEO?

Yes and no. It works perfectly for identifying qualitative problems: a poorly designed menu, an unreadable page on mobile, a confusing conversion funnel. Five observations are more than enough to spot these friction points.

However, if you're testing the impact of a modification on a conversion rate or measurable behavior (time spent, scroll depth, bounce rate), five users will give you no statistical significance. You then need to switch to quantitative methods — A/B testing, analytics, large-scale heatmaps.
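Why five users give no statistical significance can be shown with a confidence interval. The sketch below computes a 95% Wilson score interval for a task-completion rate; the scenario (3 of 5 participants complete a task) is hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(successes: int, n: int, confidence: float = 0.95) -> tuple[float, float]:
    """Wilson score interval for a proportion observed on n participants."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p = successes / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    margin = z * sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - margin, center + margin


# Hypothetical: 3 of 5 test participants completed the task.
low, high = wilson_interval(3, 5)
print(f"{low:.0%} to {high:.0%}")
```

For 3 out of 5 the interval runs from about 23% to 88%, compatible with almost any conclusion. The same 60% observed on 500 users narrows to about four points either side.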

The problem is that many SEOs conflate the two approaches. They run qualitative tests and draw quantified conclusions from them, or conversely wait for massive volumes to detect an obvious ergonomic issue. [To verify]: Google does not specify how to combine the two methods in a complete SEO workflow.

What are the limitations of this approach?

Five users are sufficient if your target audience is homogeneous. If you're optimizing a mainstream B2C e-commerce site with users of varying profiles (age, technical skill, devices), five people will cover only a fraction of segments.

In this case, you either need to segment your tests (5 users per persona) or accept that certain issues won't be detected. This is where the rule shows its limits: it assumes a relatively uniform audience.
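The coverage problem can be quantified. Assuming participants are recruited at random from a large audience, the probability that a segment with share s appears at least once among n testers is 1 − (1 − s)^n. The segment names and shares below are hypothetical; replace them with your own analytics.

```python
def prob_segment_represented(segment_share: float, n_participants: int) -> float:
    """Chance that n randomly recruited testers include at least one member of a
    segment making up `segment_share` of the audience (independent draws assumed)."""
    return 1 - (1 - segment_share) ** n_participants


# Hypothetical audience split; replace with shares from your own analytics.
segments = {"mobile, 60+": 0.15, "desktop, professional": 0.25, "mobile, under 35": 0.60}

for name, share in segments.items():
    p = prob_segment_represented(share, 5)
    print(f"{name}: {p:.0%} chance of appearing among 5 random testers")

# Segmenting instead guarantees coverage, at 5 sessions per persona.
print("sessions needed with 5 per persona:", 5 * len(segments))
```

A segment making up 15% of the audience has roughly a 44% chance of being entirely absent from five random testers, which is the argument for recruiting five users per persona instead.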

Another point — and it's rarely stated — this method relies on active and structured observation. If you simply let five people navigate without clear instructions or an analysis framework, you'll get noise, not insights. Study quality depends as much on protocol as on participant count.

Is this statement consistent with observed practices?

Completely. UX agencies have applied this rule for decades. SEOs, however, often ignore it. Many still think a user test must be expensive and mobilize dozens of people — when a lightweight protocol with five participants can unlock immediate optimizations.

The real challenge is interpretation. Five users reveal problems, but provide no indication of their actual frequency or business impact. This is where you need to cross-reference with quantitative data: analytics, exit rates, large-scale recorded sessions.
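One way to cross-reference is to attach analytics figures to each qualitative finding and rank by a rough impact proxy. This sketch is hypothetical throughout: the pages, numbers, and scoring formula (share of testers affected × monthly sessions × exit rate) are illustrative assumptions, not a standard metric.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    issue: str             # problem observed during qualitative sessions
    page: str              # page where it occurred
    participants_hit: int  # how many of the tested users ran into it


# Hypothetical analytics export: monthly sessions and exit rate per page.
analytics = {
    "/checkout": {"sessions": 12_000, "exit_rate": 0.42},
    "/category/shoes": {"sessions": 40_000, "exit_rate": 0.18},
}

findings = [
    Finding("promo code field hides the pay button", "/checkout", 4),
    Finding("filter labels unclear on mobile", "/category/shoes", 3),
]


def priority(f: Finding, n_participants: int = 5) -> float:
    """Rough impact proxy (an assumption, not a standard metric):
    share of testers affected x page sessions x page exit rate."""
    page = analytics[f.page]
    return f.participants_hit / n_participants * page["sessions"] * page["exit_rate"]


for f in sorted(findings, key=priority, reverse=True):
    print(f"{priority(f):>8.0f}  {f.page:<18} {f.issue}")
```

Here the category-page issue outranks the checkout issue despite fewer testers hitting it, because far more sessions pass through that page.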

Practical impact and recommendations

What exactly should you do to apply this method?

First, define what you're testing. Product page usability? Menu clarity? Form accessibility? Each test must have a precise objective, or you'll waste time.

Next, recruit five participants representative of your target. You don't need a perfect panel, but avoid testing only colleagues or technical profiles if your audience is mainstream. Prepare an observation protocol: clear instructions, tasks to complete, analysis framework.
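The protocol can be kept as a small structured record and checked before any session is booked. This Python sketch encodes the advice above (a precise objective, concrete tasks with observable success criteria, five representative participants); the class names, fields, and the set of "internal" profile labels are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    instruction: str        # what the participant is asked to do
    success_criterion: str  # observable end state that counts as success


@dataclass
class UsabilityProtocol:
    objective: str
    tasks: list[Task] = field(default_factory=list)
    participants: list[str] = field(default_factory=list)  # profile labels


# Hypothetical labels for profiles that do not represent a mainstream audience.
INTERNAL_PROFILES = {"colleague", "developer"}


def protocol_problems(p: UsabilityProtocol, min_participants: int = 5) -> list[str]:
    """Return the gaps to fix before running sessions (empty list = ready)."""
    problems = []
    if not p.objective.strip():
        problems.append("no precise objective")
    if not p.tasks:
        problems.append("no concrete tasks")
    for t in p.tasks:
        if not t.success_criterion.strip():
            problems.append(f"task without success criterion: {t.instruction}")
    if len(p.participants) < min_participants:
        problems.append(f"only {len(p.participants)} participants recruited")
    internal = [x for x in p.participants if x in INTERNAL_PROFILES]
    if internal:
        problems.append(f"{len(internal)} internal/technical profile(s) in the panel")
    return problems


protocol = UsabilityProtocol(
    objective="Can mobile users find and apply a size filter on a category page?",
    tasks=[
        Task("Find running shoes in size 42", "listing shows only size 42"),
        Task("Compare two products", ""),
    ],
    participants=["customer", "customer", "colleague", "customer"],
)
for issue in protocol_problems(protocol):
    print("-", issue)
```

The example protocol is flagged three times: one task has no success criterion, only four people are recruited, and one of them is a colleague.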

Conduct sessions in real conditions (device, connection, usage context). Record the screen and reactions, and take notes. After five sessions, compile the recurring problems: those observed with at least three participants deserve immediate correction.
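Compiling the sessions is a simple tally. The sketch below counts, for each problem, how many participants encountered it and keeps those seen with at least three of the five, following the threshold above. The session notes are hypothetical.

```python
from collections import Counter

# Hypothetical session notes: the set of problems observed for each participant.
sessions = {
    "P1": {"size filter hidden", "shipping cost shown late"},
    "P2": {"size filter hidden", "CTA below the fold on mobile"},
    "P3": {"size filter hidden", "shipping cost shown late"},
    "P4": {"shipping cost shown late", "CTA below the fold on mobile"},
    "P5": {"size filter hidden", "shipping cost shown late", "search ignores typos"},
}


def recurring_problems(notes: dict[str, set[str]], threshold: int = 3) -> list[tuple[str, int]]:
    """Problems observed with at least `threshold` participants, most frequent first."""
    counts = Counter(problem for observed in notes.values() for problem in observed)
    return [(problem, n) for problem, n in counts.most_common() if n >= threshold]


for problem, n in recurring_problems(sessions):
    print(f"{n}/{len(sessions)} participants: {problem}")
```

Storing each participant's problems as a set means a participant who hits the same problem twice is counted only once.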

What mistakes should you avoid?

Don't conflate qualitative and quantitative. Five users don't validate a strategic choice, they reveal friction. If you want to measure a modification's impact on a KPI, move to A/B testing with significant volume.

Another common mistake: testing without a clear hypothesis. If you observe five people navigating randomly, you'll get noise. Define what you're trying to optimize before launching tests.

Finally, don't overestimate this method's scope. It works for usability issues, not for validating content choices, positioning, or editorial strategy. For that, you need to cross-reference with real data.

How do you integrate this approach into an existing SEO workflow?

Integrate lightweight qualitative tests before each major optimization: navigation redesign, template change, conversion funnel addition. Five sessions are enough to spot major friction points.

Then cross-reference with quantitative data: analytics, heatmaps, session recordings. User tests reveal the "why," data reveals the "how much." Both are complementary.

If you're working on a complex or multi-segment site, consider segmenting tests: five users per persona or usage type. The number of sessions grows quickly with each segment, but remains manageable.

- Define a precise objective for each test (usability, accessibility, path clarity)

- Recruit five participants representative of your primary target

- Prepare a structured observation protocol with concrete tasks

- Test in real conditions (device, connection, usage context)

- Compile recurring problems and prioritize corrections

- Cross-reference qualitative insights with quantitative data (analytics, heatmaps)

- Don't use this method to validate KPIs — switch to A/B testing in that case

- Segment tests if your audience is heterogeneous (5 users per persona)

❓ Frequently Asked Questions

Does the five-user rule also apply to A/B tests?

Should you recruit five users per persona or five in total?

Can heatmap analysis replace these tests?

Does this method work for testing editorial content?

Are five users enough for an e-commerce site with thousands of visitors per day?

Source: Google Search Central video, published on 31/10/2024.
