Official statement

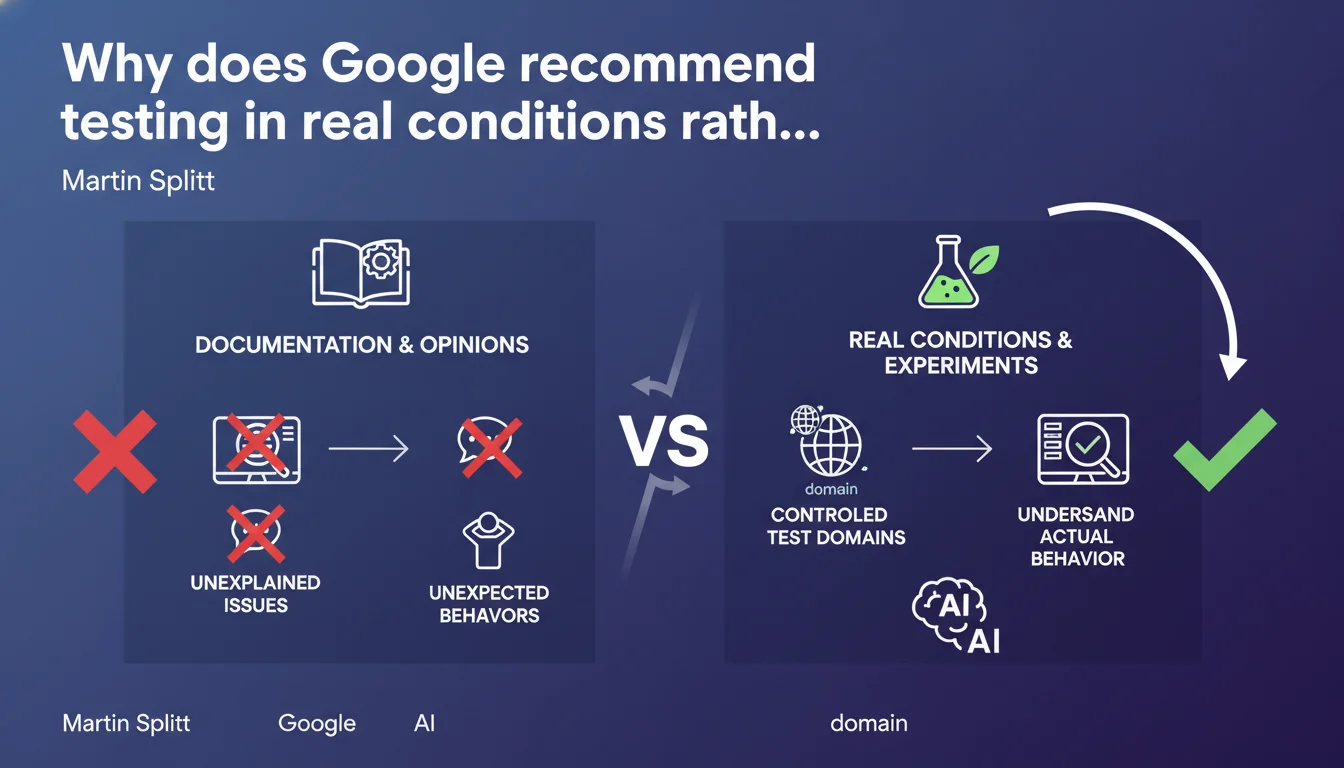

Martin Splitt argues that when facing unexplained Google behaviors, creating controlled experiments on test domains remains the only reliable method to understand what's actually happening. Documentation and opinions are no longer enough — you need to test, measure, and compare.

What you need to understand

Why does Google encourage experimentation rather than simply reading its documentation?

Splitt's statement starts from a simple observation: official documentation doesn't cover every possible scenario. How Googlebot actually crawls, renders, and indexes a page varies with context.

When a site experiences an unexplained drop or a feature doesn't behave as expected, relying solely on official guides or forums risks leading to wrong conclusions. Controlled experimentation — testing on a dedicated domain, isolating variables, measuring differences — becomes the only scientific approach to diagnose a problem.

What is a controlled experiment in technical SEO?

Concretely, this means creating an isolated testing environment: a domain or subdomain where you can manipulate a single variable at a time (URL structure, tags, JS rendering, etc.) and observe Google's reaction.

The idea: compare two versions that are identical except for one specific point. For example, test whether Googlebot crawls a client-side rendered (CSR) page differently from a server-side rendered (SSR) one. Or verify whether a specific robots.txt directive actually blocks what you think it blocks.
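The robots.txt check can be rehearsed offline before any test page goes live. The sketch below uses Python's standard `urllib.robotparser`; the rules, the `test.example.com` host, and the `is_allowed` helper are hypothetical. Python's parser does not necessarily match Google's own interpretation (wildcards and rule precedence in particular), so treat it as a first sanity check, not as proof of what Googlebot will do.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a test domain (illustrative, not from the video).
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if this robots.txt text permits user_agent to fetch url.

    Uses Python's standard-library parser, which may differ from Google's
    matching rules; only simple prefix rules are used here.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for path in ("/private/page.html", "/public/page.html"):
        url = "https://test.example.com" + path
        print(path, "allowed" if is_allowed(ROBOTS_TXT, "Googlebot", url) else "blocked")
```

The real test is still what Google does on the live test domain: compare this prediction with the URLs that actually show up in server logs and Search Console.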

Why does this approach become essential when facing "unexpected behaviors"?

Because Google doesn't document everything. Some internal mechanisms are never detailed publicly. Others evolve without official announcement.

Faced with this, you have two options: speculate based on opinions (even expert ones), or test methodically. Splitt clearly pushes toward the latter. The underlying message? Stop guessing, measure instead.

- Official documentation doesn't cover all real-world cases encountered in the field

- Controlled experiments allow you to isolate variables and measure the precise impact of a change

- Unexplained behaviors require a scientific approach rather than hypotheses or opinions

- Testing on dedicated domains avoids taking risks on production sites

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes and no. Many senior SEO professionals already practice experimentation — particularly technical teams at large e-commerce or media sites. But the majority of practitioners don't have the time or resources to set up dedicated test environments.

What Splitt is saying here is that without experimentation, you're flying blind. That's true, but it's also an implicit admission that Google's documentation is too vague to rely on in every context. An open question remains: how far should this logic go? Should every micro-optimization be tested before it's deployed to production?

What limits should be placed on this approach?

First limitation: the cost in time and infrastructure. Setting up a test domain, waiting for Google to crawl it regularly, isolating variables — all of this takes weeks, even months. For a freelancer or small business, it's unrealistic.

Second limitation: not all behaviors are reproducible. Google treats a newly created domain differently from an established site with authority. The results of a test on a fresh domain don't necessarily apply to a mature site. And that's something Splitt doesn't clarify — which somewhat weakens his argument.

In which cases is this recommendation truly relevant?

Controlled experimentation becomes essential in three specific situations: a complex technical migration (CMS change, architecture overhaul), a recurring unexplained problem (crawl drops, sudden deindexation), or validating a hypothesis before a massive deployment (impact of a URL structure change on 100,000 pages).

For everything else — standard optimizations, schema markup implementation, internal linking improvements — documentation and proven experience are sufficient. No need to reinvent the wheel every time.

Practical impact and recommendations

What concretely should you do to set up controlled experiments?

First prerequisite: have an indexable testing environment. This can be a subdomain (test.yoursite.com), a separate domain, or even specific pages on an existing site — as long as you can isolate the metrics.

Second step: define a clear and measurable hypothesis. For example: "Does Google index a page with content structured in JSON-LD faster than a page without it?" The hypothesis must have a binary answer: yes/no, more/less, better/worse.

Third step: limit variables. If you're testing the impact of JavaScript rendering, everything else (content, tags, links) must remain strictly identical between the two versions. Otherwise, it's impossible to attribute the observed effect to the correct cause.
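One way to enforce the single-variable rule is to describe each test page as data and check mechanically that the two versions differ only where intended. This is a minimal sketch: the `PageVariant` fields and both helper functions are hypothetical and would need to mirror whatever your test pages actually contain.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class PageVariant:
    """Hypothetical description of one test page; field names are illustrative."""
    rendering: str          # e.g. "CSR" or "SSR"
    title: str
    body_text: str
    internal_links: tuple   # URLs linked from the page


def differing_fields(a: PageVariant, b: PageVariant) -> list:
    """List the names of the fields whose values differ between two variants."""
    fields_a, fields_b = asdict(a), asdict(b)
    return [name for name in fields_a if fields_a[name] != fields_b[name]]


def is_controlled(a: PageVariant, b: PageVariant, tested_field: str) -> bool:
    """True only if the variants differ in exactly the field under test."""
    return differing_fields(a, b) == [tested_field]


if __name__ == "__main__":
    ssr = PageVariant("SSR", "Test page", "Same body text.", ("/a", "/b"))
    csr = PageVariant("CSR", "Test page", "Same body text.", ("/a", "/b"))
    print(differing_fields(ssr, csr), is_controlled(ssr, csr, "rendering"))
```

Running a check like this before publishing catches accidental second variables, such as a title that was edited in only one version.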

What mistakes should you avoid when experimenting?

Mistake #1: testing on a site with no history. A freshly created domain has no authority and isn't crawled regularly, so results will be skewed by how little attention Google gives it. Ideally, prepare your test domain several months in advance.

Mistake #2: concluding too quickly. A behavior change observed over 48 hours proves nothing. You need to wait for several crawl cycles, cross-reference Search Console data with server logs, and verify that the result is reproducible.

Mistake #3: generalizing from a single test. What works on your test domain doesn't necessarily apply to your main site if contexts differ (authority, content volume, history). Always validate in real conditions before a massive rollout.

How should you measure the results of an SEO experiment?

Three data sources are essential: Search Console (indexation, coverage, performance), server logs (crawl frequency, depth, user-agent), and rank-tracking tools if the goal is to measure ranking impact.
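For the server-log side, crawl frequency can be counted with a few lines of Python. This sketch assumes the common "combined" access log format and uses made-up sample lines with documentation-range IP addresses; the `googlebot_hits` helper is hypothetical. It matches on the user-agent string only, which can be spoofed, so a stricter version would also verify the requesting IP (Google documents a reverse DNS check for this).

```python
import re
from collections import Counter

# Assumes the "combined" access log format; adjust the pattern to your server.
LOG_PATTERN = re.compile(
    r'\S+ \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] '
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Made-up sample lines for illustration only.
SAMPLE_LOG = [
    f'192.0.2.10 - - [10/Jan/2023:08:15:02 +0000] "GET /ssr/page-1 HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [10/Jan/2023:19:40:11 +0000] "GET /ssr/page-1 HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.10 - - [11/Jan/2023:07:02:45 +0000] "GET /csr/page-1 HTTP/1.1" 200 1024 "-" "{GOOGLEBOT_UA}"',
    '192.0.2.55 - - [11/Jan/2023:09:30:00 +0000] "GET /csr/page-1 HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]


def googlebot_hits(lines):
    """Count requests per (day, path) whose user-agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[(match.group("day"), match.group("path"))] += 1
    return hits


if __name__ == "__main__":
    for (day, path), count in sorted(googlebot_hits(SAMPLE_LOG).items()):
        print(day, path, count)
```

Grouping by day and path makes it easy to line up crawl activity for each test variant against the indexation dates reported in Search Console.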

Compare metrics before/after over a sufficiently long period (minimum 4 weeks). Isolate external events (algorithm updates, seasonality). A statistically significant result requires volume and time.
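To make "statistically significant" concrete, here is one possible check: a two-proportion z-test on how many pages of each variant ended up indexed. The function name and all page counts are hypothetical, and the test relies on a normal approximation and assumes pages are indexed independently of each other, which real sites only roughly satisfy.

```python
import math


def two_proportion_z_test(indexed_a: int, total_a: int, indexed_b: int, total_b: int):
    """Return (z, two-sided p-value) for the difference in indexed share.

    Normal approximation with a pooled proportion; a sketch, not a full
    statistical treatment.
    """
    share_a = indexed_a / total_a
    share_b = indexed_b / total_b
    pooled = (indexed_a + indexed_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        return 0.0, 1.0  # both variants fully indexed or fully unindexed
    z = (share_a - share_b) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: same 90% vs 60% indexed share at two sample sizes.
    for total in (10, 100):
        z, p = two_proportion_z_test(9 * total // 10, total, 6 * total // 10, total)
        print(f"{total} pages per variant: z={z:.2f}, p={p:.4f}")
```

With these made-up numbers, the 90% versus 60% gap falls below the conventional 0.05 threshold at 100 pages per variant but not at 10, which is the volume requirement in practice.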

- Create an indexable testing environment (subdomain or dedicated domain)

- Define a clear, measurable hypothesis and limit the variables being tested

- Prepare the test domain in advance so it's regularly crawled

- Measure over multiple crawl cycles (minimum 4 weeks) before concluding

- Cross-reference Search Console, server logs, and ranking tools

- Never generalize from a single isolated test

- Validate in real conditions before massive rollout to the main site

❓ Frequently Asked Questions

Should you systematically test every SEO optimization before deploying it?

How long does it take for an SEO test to produce usable results?

Can you test directly on the main site rather than on a separate domain?

Are the results of a test on a domain with no authority reliable?

Which metrics should you monitor to validate an SEO experiment?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 01/02/2023
