Official statement

Other statements from this video 1 ▾

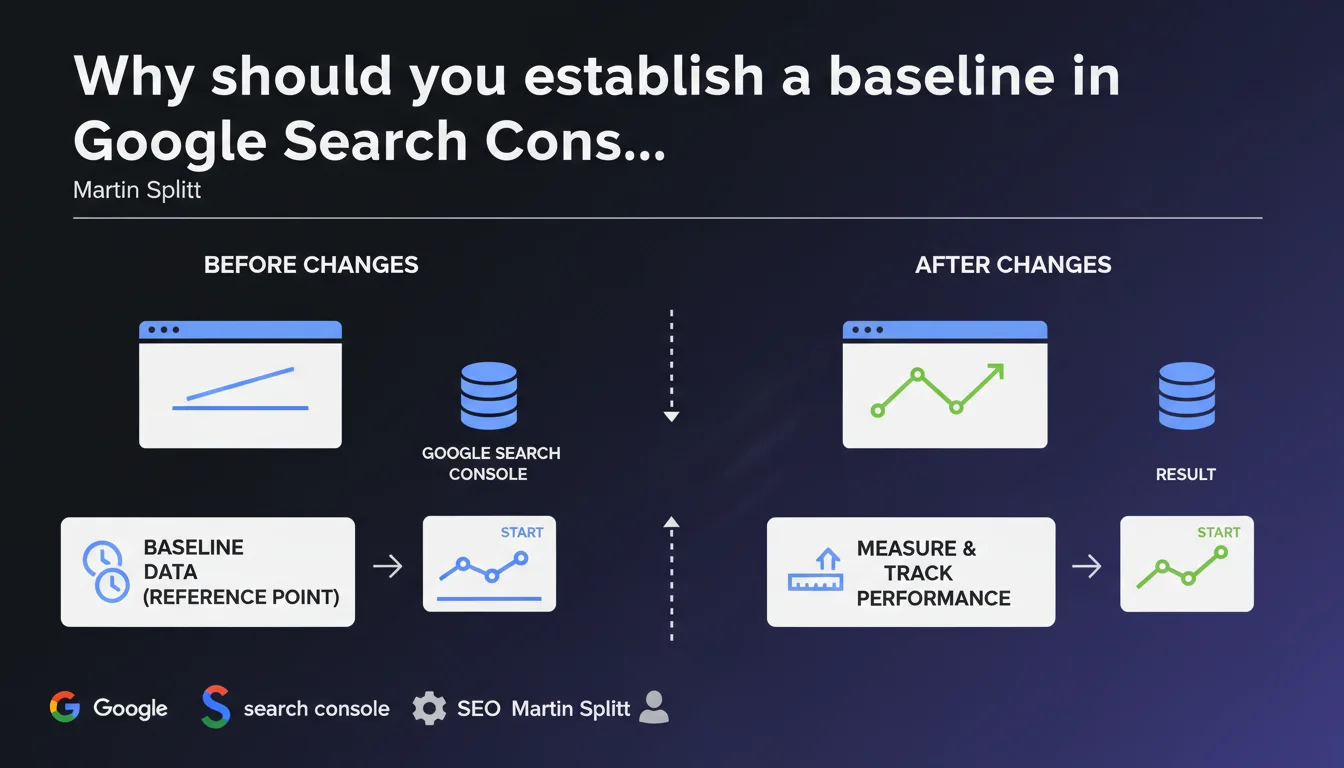

Martin Splitt emphasizes the critical importance of establishing a precise reference point in Google Search Console before implementing any SEO modifications. Without a baseline, it's impossible to objectively measure the real impact of your actions. It's the difference between flying blind and navigating with reliable instruments.

What you need to understand

What is a baseline and why does Google insist on it?

A baseline is your initial state of play — a detailed snapshot of your performance before any intervention. Concretely: impressions, clicks, average positions, click-through rate by query, and performance by page type.

Google Search Console provides this data, but you need to capture it before changing anything. Otherwise, how would you know if your redesign boosted traffic… or if it was just seasonality?
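One rough way to separate the two is to subtract last year's change over the same calendar weeks from this year's change. This is a minimal sketch that assumes you have click totals for matching windows in both years. The function names are hypothetical, and the result is a heuristic, not proof of causation.

```python
def pct_change(before: float, after: float) -> float:
    """Relative change from `before` to `after` (0.10 means +10%)."""
    if before == 0:
        raise ValueError("baseline value must be non-zero")
    return (after - before) / before


def seasonally_adjusted_change(
    baseline_this_year: float,
    after_this_year: float,
    baseline_last_year: float,
    after_last_year: float,
) -> float:
    """This year's change minus the change over the same weeks last year.

    Whatever remains after removing last year's seasonal movement is the
    part that might be attributable to your own modification.
    """
    return pct_change(baseline_this_year, after_this_year) - pct_change(
        baseline_last_year, after_last_year
    )
```

Suppose clicks went from 1,000 to 1,300 after a redesign, but they also went from 900 to 1,125 over the same weeks last year, when nothing changed. In that case, `seasonally_adjusted_change(1000, 1300, 900, 1125)` returns roughly 0.05. Only about five points of the 30% increase remain once the seasonal pattern is removed.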

What specific metrics should you track in this baseline?

Organic impressions and clicks per page, per query, and per device. Average position for your strategic keywords. Click-through rate by position to identify underperforming snippets.

Also consider segmenting: desktop vs mobile, Google Images vs Web, by country if you're international. A global baseline often masks crucial variations in segments.
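To spot underperforming snippets, you can compare each query's CTR with the pooled CTR of all queries at a similar average position. This approach is an assumption, not a method documented by Google. The sketch expects rows with `query`, `clicks`, `impressions` and `position` fields, which is a hypothetical layout, and the thresholds are arbitrary placeholders.

```python
from collections import defaultdict


def position_bucket(position: float) -> str:
    """Group average positions into buckets '1'..'10', then '11+'."""
    rounded = max(1, round(position))
    return str(rounded) if rounded <= 10 else "11+"


def underperforming_snippets(rows, min_impressions=100, ratio=0.5):
    """Queries whose CTR is below `ratio` times their position bucket's CTR."""
    # Pool clicks and impressions per position bucket.
    clicks = defaultdict(int)
    impressions = defaultdict(int)
    for row in rows:
        bucket = position_bucket(row["position"])
        clicks[bucket] += row["clicks"]
        impressions[bucket] += row["impressions"]

    flagged = []
    for row in rows:
        if row["impressions"] < max(1, min_impressions):
            continue  # too little data to judge this query
        bucket = position_bucket(row["position"])
        bucket_ctr = clicks[bucket] / impressions[bucket]
        query_ctr = row["clicks"] / row["impressions"]
        if query_ctr < ratio * bucket_ctr:
            flagged.append(row["query"])
    return flagged
```

Flagged queries are candidates for title and meta description work. Run the check separately per device or country so that segments with very different CTR curves are not pooled together.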

How long should you observe before having a reliable baseline?

At least 28 days to smooth out weekly variations. Ideally 3 months to capture seasonal fluctuations and potential algorithm updates.

If you launch an SEO action after only one week of observation, you'll never be able to isolate the effect of your action from natural background noise. Google knows this, which is why Splitt hammers it home.
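The following sketch shows why 28 days works. It computes a trailing average over a list of daily clicks, such as the Dates table of the Performance report. The input format is an assumption; any daily metric works the same way.

```python
from collections import deque


def trailing_average(daily_values, window=28):
    """Trailing mean over `window` days; None until the window is full.

    A 28-day window spans exactly four weeks, so each weekday is counted
    four times and the weekday/weekend cycle cancels out.
    """
    buffer = deque(maxlen=window)
    total = 0.0
    averages = []
    for value in daily_values:
        if len(buffer) == window:
            total -= buffer[0]  # this value is evicted by the append below
        buffer.append(value)
        total += value
        averages.append(total / window if len(buffer) == window else None)
    return averages
```

With a perfectly weekly pattern (weekdays at 100 clicks, weekends at 40), every 28-day average is identical. Any movement left in the smoothed series therefore comes from something other than the day of the week.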

- A baseline allows you to isolate the effect of your actions from algorithmic and seasonal background noise

- 28 days minimum, 3 months ideally, to capture natural variations

- Segment your data: desktop/mobile, by page type, by search intent

- Export and archive your data — GSC only retains 16 months of history
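Archiving can be as simple as writing each export to a dated CSV file. This is a minimal sketch that assumes the rows are already loaded as a list of dicts sharing the same keys. The `label_YYYY-MM-DD.csv` naming scheme is a hypothetical convention.

```python
import csv
from datetime import date
from pathlib import Path


def archive_snapshot(rows, directory, label, snapshot_date=None):
    """Write rows to a dated CSV so the baseline outlives GSC's retention window.

    `rows` must be a non-empty list of dicts with identical keys.
    Returns the path of the written file.
    """
    snapshot_date = snapshot_date or date.today()
    path = Path(directory) / f"{label}_{snapshot_date.isoformat()}.csv"
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=list(rows[0].keys()))
        writer.writeheader()
        writer.writerows(rows)
    return path
```

Keeping the snapshot date in the file name makes it easy to tell, months later, which export served as the baseline for which action.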

SEO Expert opinion

Is this recommendation actually applied in the field?

Let's be honest: the majority of SEO professionals I meet don't take the time to establish a solid baseline. Why? Client pressure, commercial urgency, or simply impatience to see results.

The problem — and I've verified this dozens of times — is that we then attribute gains to SEO actions when it was just natural seasonal recovery. Or worse, we panic at a drop that had nothing to do with our modifications.

What are the practical limitations of this approach?

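Google Search Console retains performance data for a maximum of 16 months. If you don't export it regularly, you lose your history. Row limits make this worse: the Performance report displays and exports at most 1,000 rows per table, and the Search Analytics API returns at most 25,000 rows per request. Large sites therefore have to paginate through the API or use the bulk data export to BigQuery.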
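The Search Analytics `query` method accepts `rowLimit` and `startRow` in its request body, so you can keep requesting pages until one comes back short. This is a minimal pagination sketch that assumes you already have an authenticated client. `fetch_all_rows` and `query_fn` are hypothetical names. The commented lines show one possible wiring with google-api-python-client; check them against the current API reference before relying on them.

```python
def fetch_all_rows(query_fn, start_date, end_date, dimensions, page_size=25000):
    """Collect every row of a Search Analytics query, one page at a time.

    `query_fn(body)` is a hypothetical callable that sends `body` to the API
    and returns the decoded JSON response. Rows are in its 'rows' list,
    which is absent when there is nothing left to return.
    """
    rows = []
    start_row = 0
    while True:
        body = {
            "startDate": start_date,   # "YYYY-MM-DD"
            "endDate": end_date,
            "dimensions": dimensions,  # e.g. ["query"] or ["page", "device"]
            "rowLimit": page_size,     # 25,000 is the documented maximum
            "startRow": start_row,     # zero-based offset of the first row
        }
        page = query_fn(body).get("rows", [])
        rows.extend(page)
        if len(page) < page_size:  # a short page means we reached the end
            return rows
        start_row += page_size


# One possible wiring (not executed here; `credentials` and the property
# URL are placeholders):
# from googleapiclient.discovery import build
# service = build("searchconsole", "v1", credentials=credentials)
# rows = fetch_all_rows(
#     lambda body: service.searchanalytics()
#     .query(siteUrl="https://www.example.com/", body=body)
#     .execute(),
#     "2024-01-01", "2024-03-31", ["query"],
# )
```

Passing the API call in as a function keeps the pagination logic testable without network access.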

Second point: GSC does not show every query. Queries made by very few users are anonymized and left out of query-level data for privacy reasons, which mainly affects the long tail. If 40% of your traffic comes from anonymized queries, your query-level baseline is mechanically incomplete. [To verify]: Google doesn't publish the exact threshold for anonymizing queries, but we empirically observe that it varies depending on the site's volume.
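You can estimate the size of this gap empirically. Compare the total clicks reported for the period with the sum of clicks across all rows of a query-level export that uses the same filters. This is an approximation, not a metric Google documents. The function name and input shapes are hypothetical.

```python
def hidden_query_share(total_clicks, query_rows):
    """Share of clicks not attributed to any visible query.

    `total_clicks` is the period total (no 'query' dimension); `query_rows`
    is the query-level export for the same dates and filters. The gap covers
    anonymized queries plus any rows cut off by row limits.
    """
    if total_clicks <= 0:
        return 0.0
    visible = sum(row["clicks"] for row in query_rows)
    return max(0.0, (total_clicks - visible) / total_clicks)
```

If the query export is truncated by a row limit, the share is overstated. Fetch all rows first, or treat the result as an upper bound.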

In what cases can you skip this step?

Honestly? Never, if you want to be able to justify your actions and your ROI. But I understand that in crisis situations — penalized site, sharp drop — you don't have the luxury of waiting 3 months.

In that case, capture at least a partial baseline over 7 days, and explicitly document its limitations. A flawed baseline is better than no baseline. But don't fool yourself: your conclusions will be fragile.

Practical impact and recommendations

How do you build an actionable baseline in Google Search Console?

Log into GSC and open the Performance report (Search results). Set the date range to at least 28 days (or 90 days for more robustness), then use the Export button to save the data to Google Sheets, Excel, or CSV.
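Once exported, the Queries table can be loaded into plain records for further processing. This sketch assumes English column headers (`Top queries`, `Clicks`, `Impressions`, `CTR`, `Position`) and a CTR written as a percentage such as `4.2%`. These are assumptions, so check them against your own export, which may differ by interface language.

```python
import csv
import io


def parse_queries_export(csv_text):
    """Parse the Queries table of a Performance report CSV export.

    Assumed headers: 'Top queries', 'Clicks', 'Impressions', 'CTR',
    'Position'; CTR is converted from '4.2%' to 0.042.
    """
    rows = []
    for record in csv.DictReader(io.StringIO(csv_text)):
        rows.append(
            {
                "query": record["Top queries"],
                "clicks": int(record["Clicks"]),
                "impressions": int(record["Impressions"]),
                "ctr": float(record["CTR"].rstrip("%")) / 100,
                "position": float(record["Position"]),
            }
        )
    return rows
```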

Then segment by query, page, country, device. Identify your 20 main queries, your 10 best-performing pages, and note their average position, CTR, and impressions. These figures are your reference point.
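Picking the reference set can be scripted. This minimal sketch ranks rows by clicks, which is one reasonable reading of "best-performing" but an assumption all the same, and keeps the metrics you want to track. It assumes rows with `clicks`, `impressions` and `position` fields; the function name is hypothetical.

```python
def top_n(rows, key, n):
    """The n rows with the most clicks, reduced to the reference metrics."""
    ranked = sorted(rows, key=lambda row: row["clicks"], reverse=True)[:n]
    return [
        {
            key: row[key],
            "clicks": row["clicks"],
            "impressions": row["impressions"],
            # Recompute CTR from the raw counts; 0.0 when there are no impressions.
            "ctr": row["clicks"] / row["impressions"] if row["impressions"] else 0.0,
            "position": row["position"],
        }
        for row in ranked
    ]
```

For the figures mentioned above, that would be `top_n(query_rows, "query", 20)` and `top_n(page_rows, "page", 10)`.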

What mistakes should you avoid when establishing a baseline?

First common mistake: comparing non-comparable periods. November vs July, for example — seasonality skews everything. Always compare to the same period the previous year or smooth over several weeks.
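When comparing with the previous year, it helps to shift back 52 weeks rather than exactly one calendar year, so that Mondays line up with Mondays. This is a minimal sketch; the function name and the default 28-day window are illustrative choices.

```python
from datetime import date, timedelta


def comparison_windows(start, days=28, weeks_back=52):
    """Return (current, previous) date windows aligned on the weekday.

    Shifting back 52 weeks (364 days) instead of one calendar year keeps
    each weekday aligned, so weekly patterns stay comparable.
    """
    end = start + timedelta(days=days - 1)
    shift = timedelta(weeks=weeks_back)
    return (start, end), (start - shift, end - shift)
```

For example, a window starting Monday 4 March 2024 is compared with one starting Monday 6 March 2023, not Saturday 4 March 2023.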

Second mistake: only looking at global averages. A site might lose 20% traffic on mobile but gain 10% on desktop — the average masks the diagnosis. Segment, always.
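The masking effect is easy to reproduce. This sketch computes the relative change per segment alongside the overall change. It assumes click totals keyed by segment name; the `"MOBILE"` and `"DESKTOP"` labels follow the device values used in Search Console, and the function name is hypothetical.

```python
def changes_by_segment(before, after):
    """Relative change per segment, plus the overall change under 'ALL'.

    `before` and `after` map a segment name to clicks. Segments with zero
    baseline clicks are skipped; segments only present in `after` still
    count toward the overall total.
    """
    changes = {
        segment: (after.get(segment, 0) - clicks) / clicks
        for segment, clicks in before.items()
        if clicks
    }
    total_before = sum(before.values())
    total_after = sum(after.values())
    changes["ALL"] = (total_after - total_before) / total_before if total_before else 0.0
    return changes
```

With 5,000 clicks on each device before and 4,000 (mobile) and 5,500 (desktop) after, the overall figure is only -5%. It hides a 20% mobile loss.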

What should you do once the baseline is established?

Keep it outside GSC (Google Sheets, Looker Studio, formerly Data Studio, or Excel). Schedule regular checkpoints at 1 month, 3 months, and 6 months after your SEO actions. Compare changes against your baseline, not against short-term fluctuations.
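The checkpoint dates can be generated from the action date. This minimal sketch adds calendar months and clamps to the last day of shorter months; the function names are hypothetical.

```python
import calendar
from datetime import date


def add_months(day, months):
    """Same day of month `months` later, clamped to that month's last day."""
    month_index = day.month - 1 + months
    year = day.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(day.day, last_day))


def checkpoint_dates(action_date, months=(1, 3, 6)):
    """Review dates at the given month offsets after an SEO action."""
    return {m: add_months(action_date, m) for m in months}
```

An action on 31 January 2024 gives checkpoints on 29 February, 30 April, and 31 July 2024.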

And above all: document your actions with precise timestamps. If you publish 50 pieces of content on March 15th, note it. Otherwise, it's impossible to correlate cause and effect 3 months later.
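A change log does not need special tooling. This minimal sketch stores dated actions and lists those that fall inside a reporting window, which are the candidates for explaining a movement in that window. Day granularity is assumed to be enough because GSC reports daily data. `SeoAction` and `actions_between` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SeoAction:
    day: date
    description: str


def actions_between(log, start, end):
    """Actions logged within [start, end], oldest first."""
    return sorted(
        (action for action in log if start <= action.day <= end),
        key=lambda action: action.day,
    )
```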

- Export GSC data over a minimum of 28 days (90 ideally) before any action

- Segment by query, page, device, country to avoid misleading averages

- Archive the baseline outside GSC (CSV, Sheets, BigQuery) to preserve history beyond 16 months

- Schedule checkpoints at 1, 3, and 6 months to measure evolution

- Cross-reference GSC with Analytics and third-party tools (Ahrefs, SEMrush) for a complete view

- Never compare non-comparable periods (seasonality, algorithm updates)

❓ Frequently Asked Questions

What is the minimum period needed to establish a reliable baseline in GSC?

Does Google Search Console keep the full history of performance data?

Can you rely on GSC alone to establish a complete baseline?

How do you compare before/after performance if the site has gone through a migration or redesign?

Should you establish a separate baseline for each page type?

🎥 From the same video

Other SEO insights extracted from this same Google Search Central video · published on 06/11/2023
