Official statement


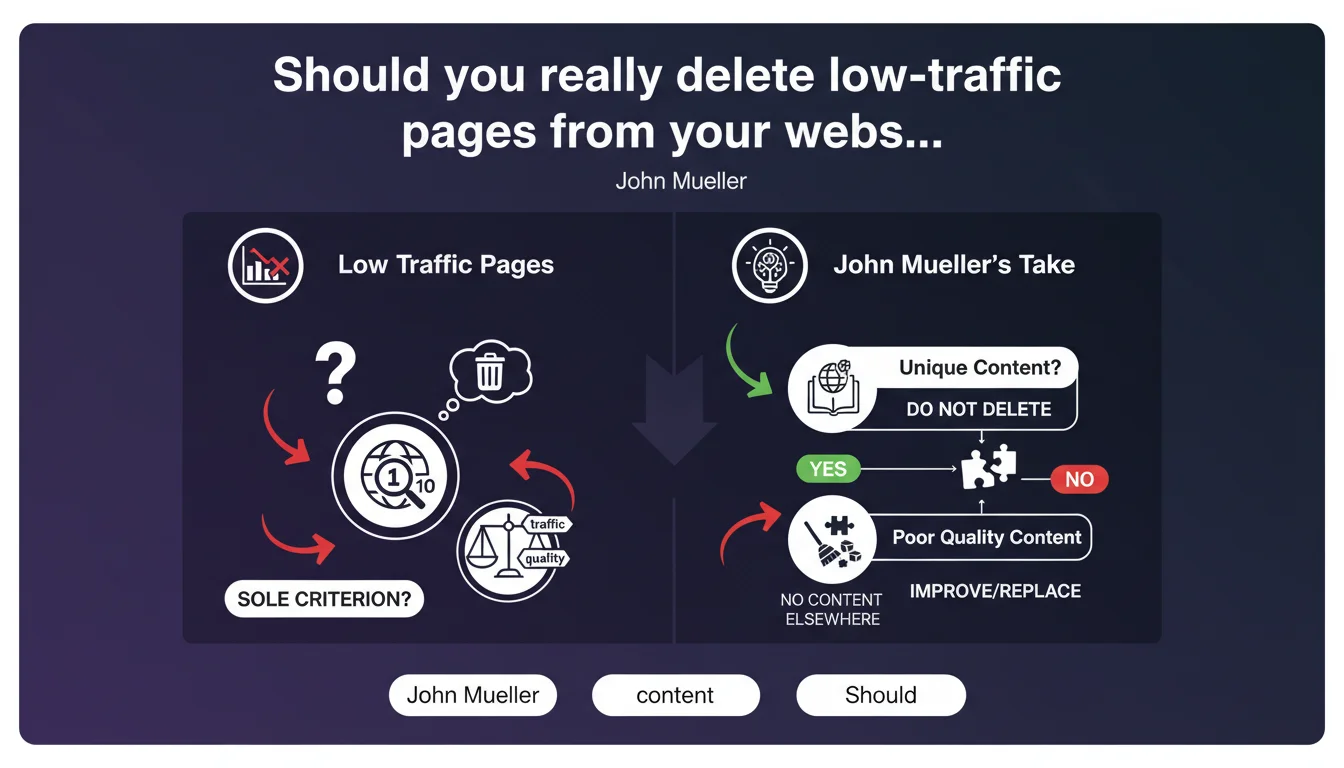

Google advises against deleting content based only on traffic metrics. A page with few visits may be the only resource on a given topic — removing it harms the web ecosystem. The real deletion criterion is the intrinsic quality of the content, not Analytics metrics.

What you need to understand

Why does Google take this position against systematic deletion?

For several years, the trend in the SEO industry has been to aggressively prune low-performing content. The idea: improve the quality-to-volume ratio to send positive signals to Google. Some audit tools even recommend automatic deletions based on traffic thresholds.

John Mueller reminds us of a reality that is often forgotten: the web is not just a popularity contest. A page with 5 visits per month can answer an ultra-specific question that no one else covers. Deleting it creates an information gap, and that is exactly what Google wants to avoid.

What does "poor quality content" concretely mean according to Google?

Google doesn't give a precise definition here, but you can cross-reference this statement with other official ones. Poor quality content generally combines several factors: outdated or false information, internal or external duplication, no added value compared to what already exists, and mass-generated content published without editorial oversight.

What matters is the actual usefulness to the user. An ultra-specialized technical product sheet with 10 visitors per month is not poor content. An auto-generated page that repeats what 50 others already say better is poor content, even if it gets 1,000 visits.

How do you identify pages worth keeping despite low traffic?

The most reliable method remains manual contextual analysis. Ask yourself these questions: does this page answer a real search intent? Is it the only available resource on this specific topic? Does it provide information you won't find elsewhere, even with marginal traffic?

Then cross-reference with Search Console data. Look at long-tail queries: some pages rank for ultra-specific terms with little search volume, but high conversion rates. Deleting these pages because they get 20 visits per month would be a major strategic error.

- Traffic alone is a misleading indicator — a page can have strategic value without volume

- Google prioritizes the intrinsic quality of content over popularity metrics

- Deleting unique content creates information gaps that Google seeks to avoid

- The real deletion criterion should be actual usefulness to the end user

- Long-tail pages with low traffic can have high ROI if they convert well

SEO Expert opinion

Is this statement consistent with field observations?

Yes and no. On one hand, we do see that sites that massively deleted low-traffic content haven't always seen an improvement, and some have even seen an overall decline. Google seems less sensitive to the signal-to-noise ratio than we thought.

On the other hand, documented cases show visibility increases after pruning. The problem? Those sites had deleted genuine spam: duplicate pages, auto-generated content, empty product sheets. They had not deleted unique content with a small audience. The distinction is critical, and Mueller doesn't detail it enough. [To verify]

What nuances should be added to this recommendation?

Mueller talks about "the only page on the Internet on this topic." Let's be honest: how do you verify this at scale? For a site with 10,000 pages, manual auditing is unrealistic. Duplicate content analysis tools help, but don't detect thematic uniqueness.

Second point: business context matters. Should an e-commerce site with 50,000 product references, 30% of which have generated no sales in 12 months, keep everything on principle? Google says "look at quality," but provides no operational threshold. The practitioner has to arbitrate alone between web philosophy and business constraints. [To verify]

In what cases does this rule clearly not apply?

The first case is mass auto-generated content without added value, typically thousands of empty category pages or auto-generated product sheets. Even with zero traffic, no one will mourn their disappearance, Google included.

The second case is pages with factually false or dangerous information. It doesn't matter whether they're "unique": their existence causes more harm than good. The same logic applies to obsolete content you can't or won't update: a clean 410 response is better than a page from 2015 that misleads the user.
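To make the "clean 410" concrete, here is a minimal sketch using only Python's standard library. The `RETIRED_PATHS` set, the example URL, and the `status_for` and `AuditHandler` names are assumptions made up for this illustration; on a real site the same rule would usually live in the web server or CMS configuration.

```python
from http import HTTPStatus
from http.server import BaseHTTPRequestHandler

# Hypothetical list of URLs retired after the content audit.
RETIRED_PATHS = {"/guides/old-2015-guide"}


def status_for(path: str) -> HTTPStatus:
    """Return 410 Gone for retired pages, 200 OK for everything else."""
    return HTTPStatus.GONE if path in RETIRED_PATHS else HTTPStatus.OK


class AuditHandler(BaseHTTPRequestHandler):
    """Toy handler: answers 410 for retired paths instead of serving stale content."""

    def do_GET(self):
        status = status_for(self.path)
        self.send_response(status)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.end_headers()
        body = "Gone" if status is HTTPStatus.GONE else "OK"
        self.wfile.write(body.encode("utf-8"))
```

The handler can be served locally with `http.server.HTTPServer` to check the status codes before applying the same list in production.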

Practical impact and recommendations

What should you concretely do with low-traffic pages?

First step: segment your inventory. Separate pages into three groups: unique content with low volume, duplicate or low quality content, and a gray area that needs further analysis. Don't rely only on Analytics sessions: look at Search Console impressions, average positions, and the queries each page is served for.
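As a rough illustration of this three-way segmentation, here is a minimal Python sketch. The `Page` fields, the `segment` function, and the group labels are hypothetical names chosen for this example, and the `unique` and `low_quality` flags are assumed to come from a manual review rather than from any tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Page:
    url: str
    sessions: int                        # Analytics sessions over the audit window
    impressions: int                     # Search Console impressions, same window
    unique: Optional[bool] = None        # reviewer verdict, None = not reviewed yet
    low_quality: Optional[bool] = None   # reviewer verdict, None = not reviewed yet


def segment(page: Page) -> str:
    """Assign a low-traffic page to one of the three audit groups."""
    if page.low_quality is True:
        return "duplicate_or_low_quality"
    if page.unique is True and page.low_quality is False:
        return "unique_low_volume"
    # Anything not fully reviewed, or reviewed without a clear verdict, stays open.
    return "gray_area"


pages = [
    Page("/specs/rare-part-x", sessions=10, impressions=120, unique=True, low_quality=False),
    Page("/tags/page-4812", sessions=0, impressions=3, unique=False, low_quality=True),
    Page("/blog/old-post", sessions=4, impressions=60),
]

groups = {}
for p in pages:
    groups.setdefault(segment(p), []).append(p.url)
```

Note that traffic figures are carried along but never decide the group on their own: only the review verdicts do, which is the point of the recommendation.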

For identified unique content, two options. Either you keep it as is if the quality is there. Or you enrich and consolidate it with other thematically close pages to create a more comprehensive resource — without losing the specificity that makes it valuable.

What mistakes should you avoid when auditing content?

Never automate deletion based on a single KPI. Scripts that "delete everything under X visits" are dangerous because they capture neither uniqueness nor strategic potential. It's the kind of approach that destroys years of long-tail work without you even realizing it.

Second mistake: ignoring Search Console data. A page can have zero Analytics traffic but 500 impressions per month in positions 15-20. That's a clear signal: there's demand, the content exists, it just needs optimization to capture traffic. Deleting it means abandoning an opportunity already in place.
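A simple filter can flag such pages before anyone considers deleting them. This is only a sketch: the function name, the thresholds (`max_sessions`, `min_impressions`), and the position window are assumptions to tune for your own site, not values given by Google.

```python
def has_hidden_potential(
    sessions: int,
    impressions: int,
    avg_position: float,
    max_sessions: int = 10,          # assumed cutoff for "low traffic"
    min_impressions: int = 100,      # assumed cutoff for "real demand"
    position_window: tuple = (11.0, 20.0),  # assumed "just off page one" range
) -> bool:
    """Flag pages with little traffic but visible demand in Search Console."""
    low, high = position_window
    return (
        sessions <= max_sessions
        and impressions >= min_impressions
        and low <= avg_position <= high
    )


# The case described above: zero sessions, 500 impressions, positions 15-20.
flagged = has_hidden_potential(sessions=0, impressions=500, avg_position=17.5)
```

Pages flagged this way are candidates for optimization, and the manual uniqueness check still applies to everything the filter leaves out.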

How do you structure a content audit aligned with this recommendation?

Cross-reference three sources: Analytics (actual traffic), Search Console (visibility and queries), and manual qualitative analysis (on a representative sample). For each low-traffic segment, ask yourself: does this content provide information you can't find elsewhere?

If yes, keep and optimize. If no, check if it can be merged with stronger existing content. If it's spam or genuinely poor content, delete — but document your decision. Traceability matters, especially when managing thousands of pages.
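The decision flow and its paper trail can be sketched as follows. The function names, the decision labels, and the log fields are hypothetical, and the three boolean inputs are assumed to be the result of the manual analysis described above.

```python
from datetime import date


def decide(unique_info: bool, mergeable: bool, spam_or_poor: bool) -> str:
    """Apply the checks in the order described: keep, then merge, then delete."""
    if unique_info:
        return "keep_and_optimize"
    if mergeable:
        return "merge"
    if spam_or_poor:
        return "delete"
    # No clear verdict: send the page back for another manual look.
    return "manual_review"


def record(log: list, url: str, decision: str, reason: str, reviewer: str) -> None:
    """Append one traceable entry per decision."""
    log.append({
        "date": date.today().isoformat(),
        "url": url,
        "decision": decision,
        "reason": reason,
        "reviewer": reviewer,
    })


audit_log = []
record(
    audit_log,
    "/tags/page-4812",
    decide(unique_info=False, mergeable=False, spam_or_poor=True),
    "empty auto-generated tag page",
    "editor-a",
)
```

Writing the log to a shared spreadsheet or CSV file is usually enough; what matters is that every deletion can be traced back to a reason and a reviewer.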

- Segment inventory into three categories: unique/low traffic, spam/low quality, gray area

- Cross-reference Analytics and Search Console to identify pages with hidden potential

- Never automate deletion based on a single traffic KPI

- Manually verify the actual uniqueness of content before any decision

- Favor intelligent consolidation over outright deletion when relevant

- Document each deletion decision for traceability

- Analyze the long-tail queries served by low-volume pages

❓ Frequently Asked Questions

Should I keep all my low-traffic pages without exception?

How do I know whether my content is truly unique on the Internet?

Should a page with zero traffic but Search Console impressions be kept?

Can several low-traffic pages be merged into a single, more complete one?

Does Google penalize sites that keep a lot of low-traffic content?

🎥 From the same video 13

Other SEO insights extracted from this same Google Search Central video · published on 21/11/2023
