Official statement

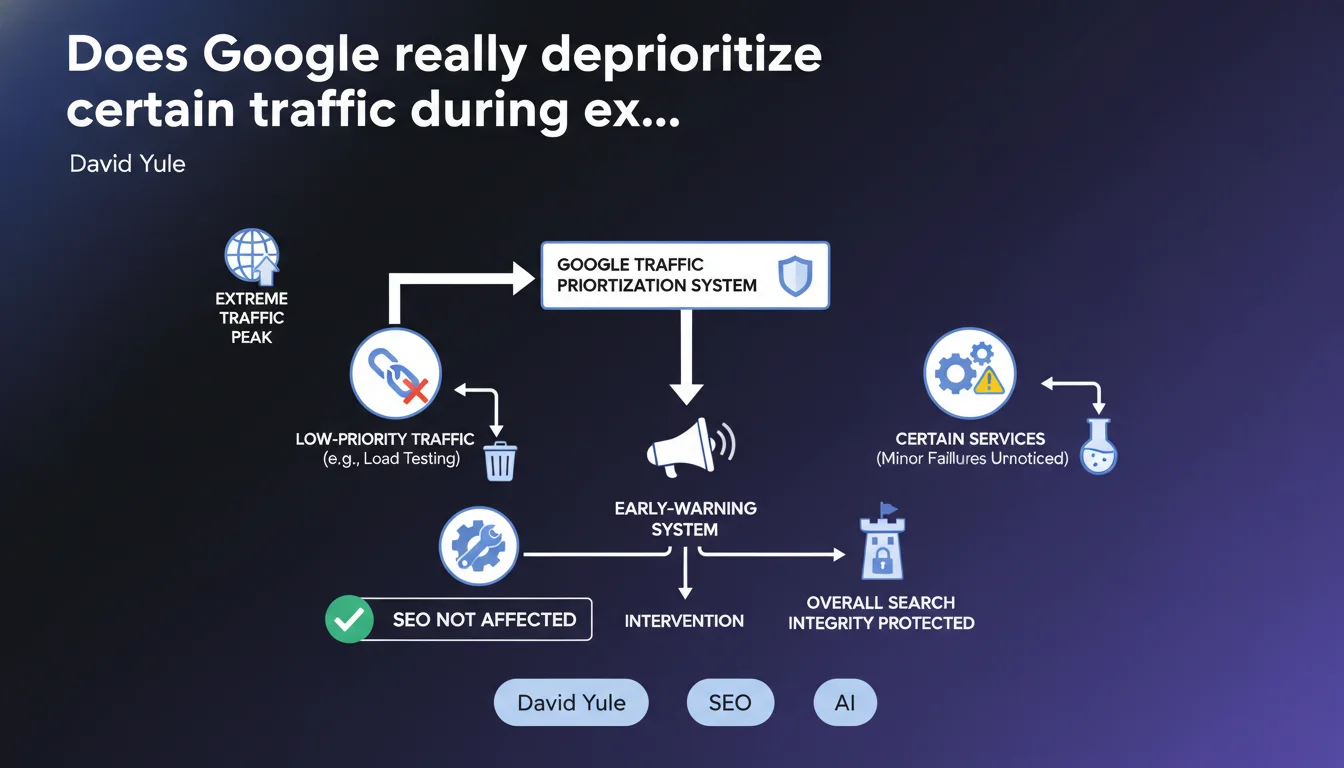

Google uses a traffic prioritization system during extreme peaks: it abandons low-priority traffic first (internal testing, non-critical services) to preserve Search integrity. This early-warning mechanism allows intervention before users experience service degradation.

What you need to understand

Why does Google need a traffic prioritization system?

During events generating massive query spikes — like the World Cup, presidential elections, or natural disasters — Google's infrastructure faces extreme loads. Without a regulation mechanism, the entire system could collapse.

Google therefore applies a priority hierarchy: user-facing production traffic remains at the top, while internal load testing, non-urgent batch jobs, or certain secondary services are sacrificed first. This approach ensures that actual user queries continue to receive reliable responses.
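The hierarchy described above can be pictured as a small load-shedding routine. This is only an illustrative sketch: the tier names, the `Request` type, and the `admit` function are hypothetical and are not taken from Google's systems, whose actual implementation is not described in the statement.

```python
from dataclasses import dataclass
from enum import IntEnum


class Priority(IntEnum):
    """Lower value = more important. These tiers are illustrative assumptions."""
    USER_SEARCH = 0
    SECONDARY_SERVICE = 1
    BATCH_JOB = 2
    INTERNAL_LOAD_TEST = 3


@dataclass
class Request:
    name: str
    priority: Priority


def admit(requests: list[Request], capacity: int) -> tuple[list[Request], list[Request]]:
    """Admit up to `capacity` requests, shedding the lowest priority first."""
    # sorted() is stable, so requests of equal priority keep their arrival order.
    ranked = sorted(requests, key=lambda r: r.priority)
    return ranked[:capacity], ranked[capacity:]


incoming = [
    Request("load-test-1", Priority.INTERNAL_LOAD_TEST),
    Request("query-a", Priority.USER_SEARCH),
    Request("nightly-stats", Priority.BATCH_JOB),
    Request("query-b", Priority.USER_SEARCH),
]
served, shed = admit(incoming, capacity=2)
print([r.name for r in served])  # ['query-a', 'query-b']
print([r.name for r in shed])    # ['nightly-stats', 'load-test-1']
```

With capacity for only two requests, both user queries are served and the batch job and load test are dropped, which is the behavior the statement describes at a much larger scale.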

What services does Google consider "low priority"?

David Yule explicitly mentions internal load tests and "certain services where a few failures go unnoticed." In practice, that most likely covers non-critical processes such as deferred statistical analysis, non-real-time data consolidation, and internal experimentation.

Here's what matters: crawling and indexing of your pages likely aren't in the first wave of sacrifices. Google prioritizes the search engine's own availability, but background processes may experience temporary slowdowns.

Does this system directly impact your site's SEO?

No, not directly — at least not under normal conditions. This mechanism aims to protect overall infrastructure, not to penalize a specific site. Your rankings won't suddenly degrade because Google abandons internal test traffic.

However, if your server can't handle traffic spikes generated by a major event, that's another story. Google might crawl your site at the wrong time, encounter 503 errors, and temporarily slow down its crawling. But that's your infrastructure problem, not Google's.

- Internal prioritization: Google sacrifices non-critical tasks first to preserve Search

- No direct SEO impact: it's not a ranking signal, just infrastructure load management

- Early warning: the system allows Google to intervene before total collapse

- Your responsibility: ensure your own infrastructure handles traffic spikes from organic search

SEO Expert opinion

Does this statement change how we understand Google's operations?

Not really. We already knew Google operates at a scale where load management is critical. What's interesting is the explicit confirmation of a priority hierarchy. Let's be honest: that's sound infrastructure practice, not a revelation about how Search ranks pages.

What's missing, and this is typical of Google's communication, is granularity. Where exactly does crawling sit in this hierarchy? Is Googlebot throttled during extreme peaks? This needs verification, because Yule stays vague on SEO-specific processes.

Can sites experience side effects during these peaks?

Yes, but indirectly. If Google slows down certain deferred indexing processes to preserve resources, your new page published during the World Cup might take longer to appear in the index. Not a disaster, just a delay.

The other risk: your own server. If you're generating real-time content related to the event (live blog, live scores), you'll attract massive organic traffic. If your infrastructure fails, Googlebot could encounter errors at the worst moment. And there, you're creating the problem — not Google.

Should you adjust your SEO strategy during major traffic peaks?

No, no need to panic. This prioritization system is invisible to 99.9% of sites. It concerns exceptional events, not your product launch or seasonal campaign.

However, if you operate a news or events site, ensure your infrastructure can handle it. Properly configured CDN, adequate server capacity, well-tuned caching. Otherwise, you risk losing organic traffic when you need it most — and that's your fault, not Google's.

Practical impact and recommendations

What should you monitor during events causing traffic spikes?

First, your server performance. If you publish content related to a major event, your infrastructure must handle both user traffic AND Google's crawls. A struggling server generates 503 errors, and Google naturally slows its crawling.

Next, your response times. Googlebot doesn't wait indefinitely. If your pages take 5 seconds to load during a spike, you risk incomplete or abandoned crawls. Monitor your server logs and response times in real time. Core Web Vitals field data is aggregated over a rolling 28-day window, so it won't show a spike as it happens.
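One way to check what Googlebot actually received during a spike is to scan your access logs. The sketch below assumes the common "combined" log format and uses made-up sample lines. The function names are hypothetical, and the pattern will need adapting to your server's configuration. Matching on the user-agent string alone can be fooled by spoofed bots, so treat the result as a first indication.

```python
import re
from collections import Counter

# Assumes the "combined" access log format; adjust the pattern for your server.
LINE_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_status_counts(lines):
    """Count HTTP status codes for requests whose user agent mentions Googlebot."""
    counts = Counter()
    for line in lines:
        match = LINE_RE.match(line)
        if match and "Googlebot" in match.group("agent"):
            counts[int(match.group("status"))] += 1
    return counts


def error_share(counts):
    """Fraction of Googlebot requests that received a 5xx response."""
    total = sum(counts.values())
    errors = sum(n for status, n in counts.items() if 500 <= status <= 599)
    return errors / total if total else 0.0


# Made-up sample lines for illustration only.
sample = [
    '66.249.66.1 - - [14/Jul/2024:20:15:01 +0000] "GET /live-scores HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [14/Jul/2024:20:15:04 +0000] "GET /live-blog HTTP/1.1" 503 312 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [14/Jul/2024:20:15:05 +0000] "GET /live-blog HTTP/1.1" 503 312 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]
counts = googlebot_status_counts(sample)
print(dict(counts))         # {200: 1, 503: 1}
print(error_share(counts))  # 0.5
```

The third sample line is ignored because it comes from a regular browser. Only the two Googlebot requests are counted, one of which hit a 503.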

What mistakes should you avoid when targeting event-driven traffic?

Don't publish real-time content without testing your load capacity first. Too many news sites crash during major events precisely because they never simulated a 10x traffic spike.

Another common mistake: neglecting caching and CDN. If every request hits your database, you're done for. Static pages, object caches, CDN layers — all of this must be in place before the spike, not improvised in an emergency.
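To illustrate why an object cache matters, here is a minimal in-process sketch. `TTLCache` and `load_article_from_db` are hypothetical names invented for this example. A production setup would more likely rely on a shared cache or a CDN, but the principle is the same: repeated requests for the same page should not each reach the database.

```python
import time


class TTLCache:
    """Tiny in-process cache with per-entry expiry (illustrative only)."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (expires_at, value)

    def get_or_compute(self, key, compute):
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: skip the expensive call
        value = compute()
        self._store[key] = (now + self.ttl, value)
        return value


db_calls = 0


def load_article_from_db():
    """Stand-in for a slow database query (hypothetical)."""
    global db_calls
    db_calls += 1
    return "<h1>Match report</h1>"


cache = TTLCache(ttl_seconds=30)
for _ in range(1000):
    cache.get_or_compute("article:42", load_article_from_db)
print(db_calls)  # 1
```

A thousand requests for the same article trigger a single database call. The 30-second TTL is an arbitrary choice here. For live content, pick a value short enough that readers do not see stale scores.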

How do you verify your site is ready for an organic traffic spike?

Load testing. Simulate 5x or 10x traffic on your critical pages and observe behavior. Does your server hold up? Do your response times stay acceptable? Do your 5xx errors skyrocket?
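For a quick first check before reaching for a dedicated tool, a load test can be sketched with the Python standard library alone. The helper names below are hypothetical, the approach is far cruder than a real load-testing tool, and it should only ever be pointed at infrastructure you own, ideally a staging environment.

```python
import time
import urllib.error
import urllib.request
from concurrent.futures import ThreadPoolExecutor


def http_status(url, timeout=10):
    """Return the HTTP status code for a GET request (0 on network failure)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # 4xx/5xx responses are raised as exceptions
    except (urllib.error.URLError, OSError):
        return 0


def run_load_test(fetch, total_requests, concurrency):
    """Call `fetch` total_requests times (must be > 0) across `concurrency` threads."""
    def timed_call(_):
        start = time.perf_counter()
        status = fetch()
        return status, time.perf_counter() - start

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(timed_call, range(total_requests)))

    latencies = sorted(elapsed for _, elapsed in results)
    failures = sum(1 for status, _ in results if status >= 500 or status == 0)
    return {
        "requests": total_requests,
        "error_rate": failures / total_requests,
        "p95_seconds": latencies[int(0.95 * (len(latencies) - 1))],
    }


# Example call (placeholder URL; replace with a page on your own staging site):
# stats = run_load_test(
#     lambda: http_status("https://staging.example.com/live-blog"),
#     total_requests=500,
#     concurrency=50,
# )
# print(stats)
```

The returned dictionary answers the three questions above: whether the server held up (`error_rate`), whether response times stayed acceptable (`p95_seconds`), and how many requests were sent. Passing `fetch` as a callable keeps the measuring logic separate from the HTTP client, so the same function can be exercised with a stub.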

Set up real-time alerts on your server metrics (CPU, memory, response time) and error rates. If something goes wrong during a spike, you need to know immediately — not 48 hours later reviewing logs.
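An error-rate alert boils down to a sliding window over recent responses. The sketch below is an assumption-laden illustration: the class name, the 60-second window, the 5% threshold, and the 20-request minimum are all arbitrary choices. A monitoring service would normally do this for you.

```python
from collections import deque


class ErrorRateAlert:
    """Flags when the 5xx share of recent requests crosses a threshold."""

    def __init__(self, window_seconds=60, threshold=0.05, min_requests=20):
        self.window = window_seconds
        self.threshold = threshold
        self.min_requests = min_requests  # avoids alerting on a handful of hits
        self._events = deque()            # (timestamp, is_error)
        self._errors = 0

    def record(self, timestamp, status):
        """Record one response; return True if the alert should fire."""
        is_error = 500 <= status <= 599
        self._events.append((timestamp, is_error))
        self._errors += is_error
        # Drop events that have fallen out of the window.
        while self._events and self._events[0][0] <= timestamp - self.window:
            _, was_error = self._events.popleft()
            self._errors -= was_error
        total = len(self._events)
        return total >= self.min_requests and self._errors / total > self.threshold


alert = ErrorRateAlert(window_seconds=60, threshold=0.05, min_requests=20)
fired = [alert.record(t, 200) for t in range(30)]        # healthy traffic
fired += [alert.record(30 + t, 503) for t in range(5)]   # errors begin
print(fired.index(True))  # 31
```

In this simulated stream the alert fires on the second 503, once errors exceed 5% of the requests seen in the last minute. In practice `record` would be fed from your log pipeline and wired to a notification channel.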

- Test server load capacity with dedicated tools (JMeter, Gatling, k6, formerly LoadImpact)

- Configure a high-performance CDN and verify geographic cache distribution

- Monitor response times in real time during traffic spikes

- Set up alerts on 5xx errors and abnormal response times

- Optimize database queries and implement object caching

- Verify that real-time pages are properly excluded from cache if needed

❓ Frequently Asked Questions

Is crawling of my site slowed down during Google traffic peaks?

Can my rankings be penalized during major events like the World Cup?

Should I avoid publishing important content during Google traffic peaks?

Which Google services are sacrificed first during extreme peaks?

How can I tell whether my site had crawl problems during a traffic peak?

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 03/10/2024

🎥 Watch the full video on YouTube →
