Official statement


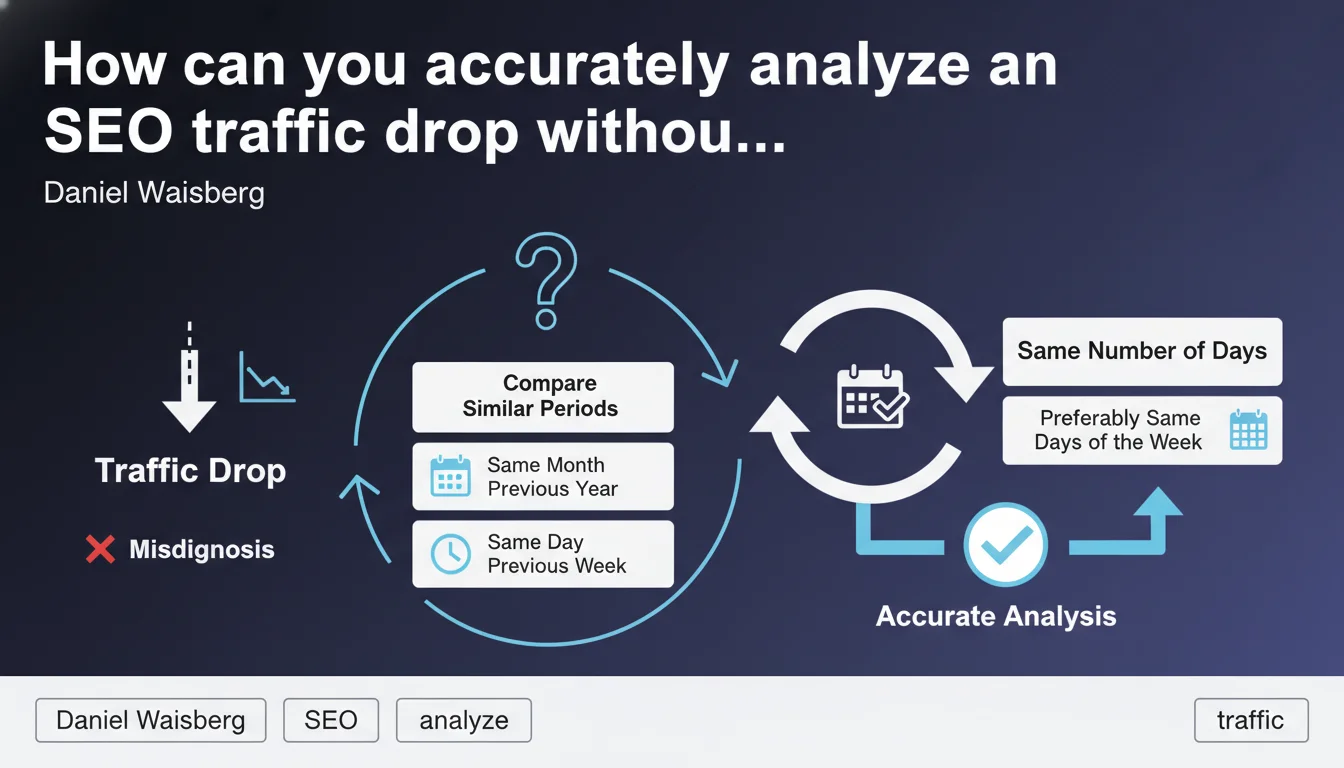

Google recommends a rigorous comparison method to analyze traffic drops: compare with the same period the previous year or the previous week, ensuring you have the same number of days and ideally the same days of the week. Without this methodological rigor, you risk attributing the drop to an algorithmic penalty when it's simply a seasonal or calendar effect.

What you need to understand

Why should you compare with the same period from the previous year?

Seasonality represents one of the most underestimated factors in traffic analysis. An e-commerce site selling swimming pools will naturally see its traffic plummet in October — this isn't a Google penalty, it's winter arriving.

By comparing October N with October N-1, you neutralize this seasonal effect. You can then identify whether the drop is structural (loss of rankings) or cyclical (simple calendar variation).
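As a rough illustration of the difference between the two readings, the sketch below computes a month-over-month and a year-over-year change on made-up monthly click totals for a seasonal site. The numbers and the `pct_change` helper are assumptions chosen for the example, not data from the video.

```python
def pct_change(current: float, baseline: float) -> float:
    """Relative change of `current` versus `baseline`, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100.0


# Hypothetical monthly click totals for a seasonal site (illustrative only).
clicks = {
    ("N-1", "Sep"): 12000,
    ("N-1", "Oct"): 6000,
    ("N", "Sep"): 11800,
    ("N", "Oct"): 5900,
}

# October N against September N: dominated by the seasonal decline.
month_over_month = pct_change(clicks[("N", "Oct")], clicks[("N", "Sep")])

# October N against October N-1: the seasonal effect is present on both sides.
year_over_year = pct_change(clicks[("N", "Oct")], clicks[("N-1", "Oct")])

print(f"month over month: {month_over_month:.1f}%")
print(f"year over year:   {year_over_year:.1f}%")
```

With these invented figures the month-over-month view shows a 50% fall while the year-over-year view is close to flat, which is the pattern you would expect from a cyclical drop rather than a structural one.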

What does it mean to compare the same number of days and the same days of the week?

February has 28 or 29 days depending on the year. Comparing February N (28 days) with March N (31 days) mechanically skews the totals by about 11%, simply because March has three more days. This is basic statistics, but errors of this type are frequent in SEO audits.

The same goes for days of the week: a B2B site sees its traffic collapse on weekends. If one of the two ranges contains more weekend days than the other (a sampling error), you'll diagnose a drop that doesn't exist.
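Both checks (same number of days, same days of the week) can be automated before any numbers are compared. The following is a minimal sketch using only the Python standard library; the function names `weekday_profile` and `comparable` are hypothetical.

```python
from collections import Counter
from datetime import date, timedelta


def weekday_profile(start: date, end: date) -> Counter:
    """Count occurrences of each weekday (0=Monday ... 6=Sunday) in [start, end]."""
    days = (end - start).days + 1
    return Counter((start + timedelta(days=i)).weekday() for i in range(days))


def comparable(a_start: date, a_end: date, b_start: date, b_end: date) -> bool:
    """True when both ranges contain exactly the same mix of weekdays.

    An identical weekday mix also implies an identical number of days.
    """
    return weekday_profile(a_start, a_end) == weekday_profile(b_start, b_end)


# February 2023 (28 days) against March 2023 (31 days): not comparable.
feb_vs_mar = comparable(date(2023, 2, 1), date(2023, 2, 28),
                        date(2023, 3, 1), date(2023, 3, 31))

# Two full Monday-to-Sunday weeks: comparable.
week_vs_week = comparable(date(2023, 3, 6), date(2023, 3, 12),
                          date(2023, 3, 13), date(2023, 3, 19))

# Same length (5 days) but Monday-Friday against Wednesday-Sunday: not comparable.
same_length_only = comparable(date(2023, 3, 6), date(2023, 3, 10),
                              date(2023, 3, 8), date(2023, 3, 12))
```

The third case is the one that is easiest to miss: the day counts match, but one range contains a weekend and the other does not.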

When should you use week-over-week comparison instead of year-over-year?

Week-over-week comparison (same day, previous week) proves relevant for detecting immediate effects from a technical change or deployment. It allows you to react quickly.

But beware: it doesn't capture seasonality. A ski rental site will see its traffic spike in January — comparing with December would show artificial growth. You must therefore combine both approaches.

- Year-over-year comparison: neutralizes seasonality, ideal for strategic diagnosis

- Week-over-week comparison: detects rapid variations, useful for technical monitoring

- Always verify the same number of days in each compared period

- Prioritize the same days of the week to avoid calendar bias

- Cross-check several comparison angles before concluding that there is an algorithmic penalty or a bug
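For the week-over-week angle in the list above, a same-weekday comparison can be sketched as follows. The daily click series is invented and the `week_over_week` helper is an assumption for illustration; in practice the data would come from an export of your analytics tool.

```python
from datetime import date, timedelta


def week_over_week(daily_clicks: dict[date, int]) -> dict[date, float]:
    """Percent change of each day versus the same weekday one week earlier."""
    changes = {}
    for day, clicks in daily_clicks.items():
        previous = daily_clicks.get(day - timedelta(days=7))
        if previous:  # skip days with no baseline or a zero baseline
            changes[day] = (clicks - previous) / previous * 100.0
    return changes


# Hypothetical B2B series: two weeks starting Monday 2023-03-06,
# with a deployment on the second Wednesday that hurts traffic.
week_1 = [100, 100, 100, 100, 100, 20, 20]
week_2 = [100, 100, 60, 60, 60, 12, 12]
start = date(2023, 3, 6)
series = {start + timedelta(days=i): c for i, c in enumerate(week_1 + week_2)}

changes = week_over_week(series)
for day, change in sorted(changes.items()):
    print(day.strftime("%a %Y-%m-%d"), f"{change:+.1f}%")
```

Because each day is compared with the same weekday, the normal weekend dip does not show up as a drop; only the change that starts on the deployment day does.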

SEO Expert opinion

Is this method enough to diagnose a real algorithmic penalty?

No, and that's where it gets tricky. Rigorous temporal comparison is a methodological prerequisite, not a final answer. It allows you to eliminate false positives (seasonality, calendar), but it says nothing about the root cause of the drop.

I've seen dozens of sites lose 40% of traffic after a Core Update, even with an impeccable year-over-year comparison in hand. The diagnosis "drop confirmed" adds nothing. The real question: which pages dropped, on which search terms, and why did Google devalue this content? Whether this method can be sufficient without analyzing competitor SERPs and search intent remains to be verified.

What interpretation errors does this approach fail to correct?

Comparing periods correctly doesn't solve the causal attribution problem. A drop detected via year-over-year comparison may coincide with a Google update, with the launch of a better-positioned competitor, or with a shift in search intent.

Let's be honest: in 60% of audits I receive, the client has already done the temporal comparison, but remains stuck because they haven't segmented by page type, by topic cluster, or by search intent. Temporal methodology is necessary but not sufficient.

In which cases does this rule become counterproductive?

On news sites or ultra-seasonal sites (events, weather, elections), year-over-year comparison can mask structural breaks. A site covering presidential elections will have a spike every 5 years — comparing with the previous year shows a collapse that has nothing to do with SEO.

Similarly, for sites that underwent a complete redesign or migration between the two compared periods, the comparison becomes meaningless. You must then segment finely (migrated URLs vs new URLs, old categories vs new ones) and accept that part of the historical analysis becomes unusable.

Practical impact and recommendations

How do you implement this method in Google Search Console or Analytics?

In Google Search Console, the interface natively allows comparing two periods, but it doesn't force the same number of days. You must manually define the ranges: if you're analyzing April 1-30 N, compare with April 1-30 N-1, not "the last 30 days of the previous year".
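The ranges to type into the date picker can be computed rather than guessed. The sketch below derives the same calendar dates one year earlier, as described above. It also shows a 52-week shift (364 days), which is a common convention for keeping weekdays aligned; that second variant is an assumption added here, not something stated in the source, and both function names are hypothetical.

```python
from datetime import date, timedelta


def same_dates_last_year(start: date, end: date) -> tuple[date, date]:
    """Same calendar dates one year earlier (weekdays will usually differ)."""
    def back_one_year(d: date) -> date:
        try:
            return d.replace(year=d.year - 1)
        except ValueError:
            # 29 February has no counterpart in a non-leap year; fall back to
            # 28 February. The two ranges then differ in length by one day.
            return d.replace(year=d.year - 1, day=28)
    return back_one_year(start), back_one_year(end)


def weekday_aligned_last_year(start: date, end: date) -> tuple[date, date]:
    """Shift back exactly 52 weeks so every day keeps its weekday."""
    shift = timedelta(weeks=52)
    return start - shift, end - shift


start, end = date(2023, 4, 1), date(2023, 4, 30)
calendar_range = same_dates_last_year(start, end)
aligned_range = weekday_aligned_last_year(start, end)

print("calendar:", calendar_range[0], "to", calendar_range[1])
print("aligned: ", aligned_range[0], "to", aligned_range[1])
```

The calendar variant matches the month exactly, while the aligned variant trades a one-day drift in dates for an identical weekday mix. Which one fits better depends on how strongly the site's traffic varies by day of the week.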

In GA4, use custom date ranges for the comparison and verify in the calendar that both ranges start on the same day of the week (Monday against Monday, not Monday against Wednesday). A one-day offset in the comparison can skew results on an e-commerce site with strong day-to-day variation.

What technical errors must you absolutely avoid?

First classic error: comparing a period including a public holiday with a period without one. A B2B site's traffic collapses on May 1st — if this date falls on a Tuesday one year and a Sunday the next, the comparison will be biased.
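A quick way to catch this bias is to list the public holidays that fall on a working day in each range. The sketch below assumes you maintain your own list of holiday dates (the `HOLIDAYS` list here is a hypothetical two-entry example) and that Monday to Friday are the working days.

```python
from datetime import date

# Hypothetical holiday list; in practice, maintain it for your own market.
HOLIDAYS = [date(2022, 5, 1), date(2023, 5, 1)]


def working_day_holidays(start: date, end: date, holidays: list[date]) -> list[date]:
    """Holidays inside [start, end] that fall on Monday to Friday."""
    return [h for h in holidays if start <= h <= end and h.weekday() < 5]


# 1 May 2022 was a Sunday, 1 May 2023 was a Monday.
may_2022 = working_day_holidays(date(2022, 5, 1), date(2022, 5, 31), HOLIDAYS)
may_2023 = working_day_holidays(date(2023, 5, 1), date(2023, 5, 31), HOLIDAYS)

if len(may_2022) != len(may_2023):
    print("Warning: the two ranges lose a different number of working days to holidays")
```

In this example May 2023 loses one working day to the holiday and May 2022 loses none, so a B2B site would show a small year-over-year drop that has nothing to do with rankings.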

Second pitfall: not excluding technical downtime periods or temporary bugs. If your site was down 3 days in March N-1, comparing March N with March N-1 will show artificial growth. You must clean the data or adjust the ranges.
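One simple way to clean the data is to drop the known outage days and compare daily averages instead of totals. The sketch below uses invented figures (a flat 100 clicks per day and a three-day outage in the earlier March); the `daily_average` helper is hypothetical, and dropping days changes the weekday mix slightly, so treat the result as an approximation.

```python
from collections.abc import Iterable
from datetime import date, timedelta


def daily_average(daily_clicks: dict[date, int], excluded: Iterable[date] = ()) -> float:
    """Average clicks per day, ignoring the dates listed in `excluded`."""
    skipped = set(excluded)
    kept = [clicks for day, clicks in daily_clicks.items() if day not in skipped]
    if not kept:
        raise ValueError("no days left after exclusion")
    return sum(kept) / len(kept)


# Hypothetical data: 100 clicks per day, with the site down on 8-10 March 2022.
outage = [date(2022, 3, 8), date(2022, 3, 9), date(2022, 3, 10)]
march_prev = {date(2022, 3, 1) + timedelta(days=i): 100 for i in range(31)}
for day in outage:
    march_prev[day] = 0
march_now = {date(2023, 3, 1) + timedelta(days=i): 100 for i in range(31)}

# Raw totals suggest growth that is only an artifact of the outage.
raw_prev, raw_now = sum(march_prev.values()), sum(march_now.values())
raw_change = (raw_now - raw_prev) / raw_prev * 100.0

# Daily averages with the outage days removed show no real change.
clean_prev = daily_average(march_prev, excluded=outage)
clean_now = daily_average(march_now)
cleaned_change = (clean_now - clean_prev) / clean_prev * 100.0

print(f"raw change:     {raw_change:+.1f}%")
print(f"cleaned change: {cleaned_change:+.1f}%")
```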

- Define date ranges with strictly identical numbers of days

- Verify that days of the week match (Monday to Sunday vs Monday to Sunday)

- Exclude or annotate known technical downtime periods

- Segment by page type and by search query to go beyond simple drop detection

- Cross-check the temporal comparison against competitor SERP analysis

- Never conclude that there is an algorithmic penalty without identifying the pages and queries impacted
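The segmentation step in the checklist above can be sketched with a simple grouping by URL prefix. Everything in this example is an assumption for illustration: the prefix rules, the paths, and the click counts. Real rules would follow your own site structure, and the rows would come from a per-page export covering the two periods.

```python
from collections import defaultdict

# Hypothetical mapping from URL prefix to page type; adapt to your own site.
SEGMENT_RULES = [("/blog/", "blog"), ("/products/", "product")]


def segment_of(path: str) -> str:
    """Return the page type for a URL path, or 'other' when no rule matches."""
    for prefix, name in SEGMENT_RULES:
        if path.startswith(prefix):
            return name
    return "other"


def change_by_segment(rows):
    """rows: iterable of (path, clicks_previous, clicks_current) tuples.

    Returns the percent change per segment, or None when the previous
    period has no clicks for that segment.
    """
    totals = defaultdict(lambda: [0, 0])
    for path, previous, current in rows:
        bucket = totals[segment_of(path)]
        bucket[0] += previous
        bucket[1] += current
    return {
        name: ((cur - prev) / prev * 100.0 if prev else None)
        for name, (prev, cur) in totals.items()
    }


rows = [
    ("/blog/pool-maintenance", 400, 200),
    ("/blog/winter-cover", 600, 300),
    ("/products/pool-pump", 500, 500),
    ("/about", 100, 100),
]
changes = change_by_segment(rows)
overall = (sum(r[2] for r in rows) - sum(r[1] for r in rows)) / sum(r[1] for r in rows) * 100.0
```

With these invented rows the site as a whole is down about 31%, but the breakdown shows that the entire loss sits in the blog segment while product pages are stable. The same grouping can be applied to queries instead of paths.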

Should you analyze alone or get support?

Temporal comparison is an essential first filter, but in-depth diagnosis of a traffic drop requires cross-referencing dozens of metrics: position changes by semantic cluster, search intent analysis, technical audit, and content quality review against the competition.

These cross-cutting analyses can quickly become complex to conduct alone, especially when you need to segment data finely and identify priority levers. Calling on a specialized SEO agency gives you an experienced external perspective, advanced analysis tools, and personalized support to recover traffic quickly.

In summary: The rigorous comparison method is a technical prerequisite to avoid false diagnoses. But it doesn't replace qualitative analysis of content, search intent, and competition. A solid diagnosis always combines quantitative data with fine-grained semantic analysis.

❓ Frequently Asked Questions

Should you always compare with the previous year, or can you compare month by month?

How do you handle sites without a year of history (new sites)?

What should you do if the drop is confirmed after a rigorous comparison?

Do third-party SEO tools apply this method correctly?

Does this method also apply when analyzing a traffic increase?

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 29/03/2023
