Official statement

Other statements from this video 12 ▾

- □ Faut-il vraiment doubler les données produits entre le site et Merchant Center ?

- □ Pourquoi Google préfère-t-il les flux Merchant Center au crawl classique pour vos données produits ?

- □ Merchant Center peut-il vraiment booster le crawl de vos fiches produits ?

- □ Googlebot crawle-t-il vraiment les moteurs de recherche internes de votre site ?

- □ Comment vérifier l'indexation d'une page : l'outil d'inspection ou l'opérateur site: ?

- □ Pourquoi Google exige-t-il à la fois des données structurées ET Merchant Center pour afficher les prix correctement ?

- □ Les incohérences de prix entre votre site et Merchant Center peuvent-elles vraiment plomber votre visibilité produit ?

- □ Faut-il augmenter la fréquence de traitement des flux Google Merchant Center pour améliorer son référencement ?

- □ Faut-il vraiment cumuler données structurées ET flux Merchant Center pour les résultats enrichis produits ?

- □ Les résultats enrichis sont-ils vraiment à la discrétion totale de Google ?

- □ Pourquoi les erreurs Search Console et Merchant Center sabotent-elles vos résultats shopping ?

- □ Pourquoi les données structurées produit ne suffisent-elles pas pour apparaître dans l'onglet Shopping ?

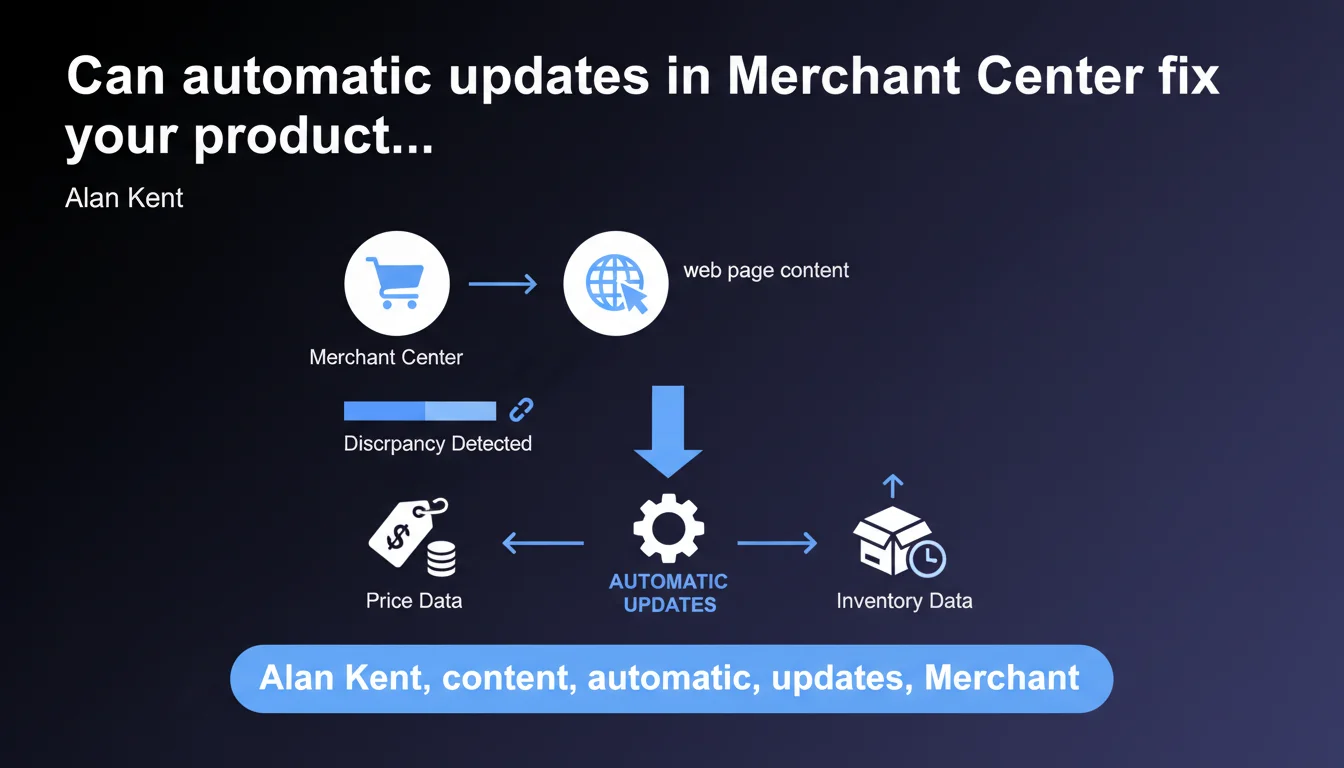

Google Merchant Center can now automatically synchronize prices and inventory by scraping your web pages when it detects discrepancies with your feed. This feature helps prevent account suspensions due to outdated data, but raises questions about the reliability of scraping and the actual control you retain over your data.

What you need to understand

How does this automatic synchronization actually work in practice?

Merchant Center now analyzes the HTML content of your product pages to extract prices and availability. When it detects a discrepancy between your data feed and what is published on your site, it updates the product information automatically, without waiting for your intervention.

This approach relies on structured scraping — Google reads your Schema.org tags, your microformats or directly parses the DOM to identify relevant data. The frequency of these checks is not specified, but we can assume it varies depending on your catalog volume and the reliability history of your feed.
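To make the idea of structured extraction concrete, here is a minimal sketch of how a price and availability could be read from a page's JSON-LD markup. It is not Google's actual extractor, whose behavior is not documented in detail. The names `JsonLdCollector` and `extract_offer` are hypothetical, and the sketch assumes the page exposes a single top-level Schema.org `Product` object in a `<script type="application/ld+json">` block.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def extract_offer(html):
    """Return (price, currency, availability) for the first Product found, else None."""
    collector = JsonLdCollector()
    collector.feed(html)
    for raw in collector.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed markup: nothing reliable to extract
        items = data if isinstance(data, list) else [data]
        for item in items:
            if not isinstance(item, dict) or item.get("@type") != "Product":
                continue
            offers = item.get("offers")
            if isinstance(offers, list):
                offers = offers[0] if offers else None
            if not isinstance(offers, dict):
                continue
            price = offers.get("price")
            return (
                None if price is None else str(price),
                offers.get("priceCurrency"),
                offers.get("availability"),
            )
    return None
```

Running `extract_offer` on your own product pages gives a rough idea of what a markup-based reader can recover. If it returns `None`, a structured extractor has little to work with and would have to fall back on less reliable signals.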

Why is Google introducing this feature now?

Account suspensions for incorrect data remain one of the main sources of friction between e-commerce merchants and Google Shopping. A product advertised at a price that no longer matches the landing page is a policy violation that can block your entire catalog.

By automating this synchronization, Google reduces the risk of human error and lightens the burden of feed maintenance. For sites with thousands of SKUs and fluctuating prices (sales, flash promotions, dynamic adjustments), this is theoretically a significant time saver.

What are the key points to remember for an SEO professional?

- This feature does not replace your structured product feed — it acts as a safety net.

- Google scans your web pages to extract prices and inventory: the quality of your HTML markup becomes critical.

- Detected discrepancies are corrected automatically, meaning Merchant Center can override your feed if page content differs.

- No explicit guarantee on update frequency or the criteria triggering a verification.

- This approach assumes your product pages are always up-to-date and accessible — any crawl issue or client-side rendering problem can skew the results.

SEO Expert opinion

Is this automation really an advancement or a hidden risk?

Let's be honest: letting Google automatically extract data from your pages raises as many questions as it creates opportunities. If your Schema.org markup is flawless and your prices are displayed in a standard way, everything can run smoothly. But if you rely on heavy JavaScript to inject prices or availability, or if your HTML structure varies from one category to another, the scraping can become unreliable.

The other issue is the lack of transparency about triggering criteria. Google talks about "detected discrepancies," but how often does it check? With what tolerance? If a price changes for an hour during a flash sale, will Merchant Center capture and synchronize it before you return to the standard rate? [To verify]: no public documentation specifies these thresholds.

In what cases can this feature malfunction?

Imagine a site with prices differentiated by user geographic location, or promotions accessible only after login. Google scans from its own IPs, without user context — it will therefore see a generic version of the page, not necessarily the one your customers see.

Another problematic scenario: sites that load prices from external APIs through asynchronous JavaScript. If server-side rendering is absent or partial, Google may scrape a page that is "empty" or that shows an incorrect default price. The result: synchronization based on incorrect data.

Is this statement consistent with practices observed in the field?

Google is progressively strengthening its use of scraping to validate and enrich structured data — we already see this with rich snippets and product reviews. This evolution is part of a broader logic: reduce dependence on manual feeds and align the data displayed in Shopping with the reality of the merchant site.

However, several e-commerce agencies report cases where Merchant Center misinterpreted strikethrough prices or "starting from" mentions, generating synchronization errors. Google's HTML parsing is not infallible, and that's where the problem lies.

Practical impact and recommendations

What should you do concretely to take advantage of this feature?

First step: enable automatic item updates in your Merchant Center account settings. This option is not necessarily enabled by default and requires manual validation.

Then audit the quality of the Schema.org markup on your product pages. Google relies primarily on structured Product data and its offers, price, and availability properties. If these tags are missing, inconsistent, or poorly formatted, the scraping becomes less reliable.
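As an illustration of the markup being audited, the following sketch generates a JSON-LD `Product` block with an `Offer` carrying `price`, `priceCurrency`, and `availability`. The helper name `build_product_jsonld` and its parameters are hypothetical, and the field set is a minimal assumption rather than the full list of properties Google may require.

```python
import json


def build_product_jsonld(name, sku, price, currency, in_stock):
    """Return a <script> tag holding minimal Product/Offer markup (illustrative only)."""
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            # Two decimals with a dot separator and no currency symbol in the value.
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }
    # Escape "</" so a product name can never close the script tag early.
    payload = json.dumps(data).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"
```

Generating this block from the same source of truth as the feed (the same price and stock values) is one way to keep the page and the feed from drifting apart.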

Also verify that your pages render correctly server-side or that your JavaScript executes within Google's crawl timeframe. Use the URL inspection tool in Search Console to see exactly what Googlebot retrieves — if the price doesn't appear in the rendered HTML, Merchant Center won't be able to synchronize it.
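A quick local pre-check can complement the URL inspection tool: look for the expected price in the HTML as served, before any JavaScript runs. The sketch below is only an approximation under stated assumptions. `StaticTextCollector` and `price_in_static_html` are hypothetical names, the comma-to-dot normalization is naive (it mishandles thousands separators), and a positive result says nothing about how Google actually renders the page.

```python
import re
from html.parser import HTMLParser


class StaticTextCollector(HTMLParser):
    """Collects text that is available without executing JavaScript."""

    def __init__(self):
        super().__init__()
        self._skip = False
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            # JSON-LD is static data, so it is kept; executable scripts are skipped.
            self._skip = dict(attrs).get("type") != "application/ld+json"

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._skip = False

    def handle_data(self, data):
        if not self._skip:
            self.chunks.append(data)


def price_in_static_html(html, expected_price):
    """True if expected_price appears in the unrendered HTML as a standalone number."""
    collector = StaticTextCollector()
    collector.feed(html)
    text = " ".join(collector.chunks).replace(",", ".")
    wanted = re.escape(expected_price.replace(",", "."))
    # The lookarounds prevent "19.99" from matching inside "119.99".
    return re.search(r"(?<![\d.])" + wanted + r"(?!\d)", text) is not None
```

If this returns `False` for a page whose price is visible in the browser, the price is most likely injected client-side, which is exactly the situation that deserves a closer look in Search Console.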

What mistakes should you avoid to prevent involuntary desynchronization?

Never leave outdated prices or "price on request" mentions in your HTML if your feed indicates a specific amount. Google will prioritize what it sees on the page, which can create inconsistencies.

Avoid overly complex or ambiguous HTML structures — multiple price blocks on the same page (comparators, product variants, member vs non-member prices) can mislead the scraper. Simplify the presentation of the main price and availability as much as possible.

Monitor your Merchant Center diagnostic logs after activation. Google reports detected discrepancies and applied corrections — this is your only way to quickly spot a misinterpretation.

How can you ensure this automation doesn't generate new problems?

- Enable the feature and test on a sample of products before full deployment

- Audit Schema.org markup (Product, Offer, price, availability) across all your product pages

- Verify server-side HTML rendering or rapid JS execution via Search Console

- Monitor Merchant Center alerts for at least 2 weeks after activation

- Regularly compare XML feed and scraped data to detect discrepancies

- Set up automatic alerts if the divergence rate exceeds a critical threshold

- Document special cases (quotation-based prices, custom products) to avoid false positives
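The comparison and alerting steps in the checklist above can be sketched as follows. This is a minimal example under stated assumptions: `divergence_report` is a hypothetical helper, both inputs are plain dictionaries you would build yourself (from your feed export and from your own page checks), and the 5% threshold is an arbitrary placeholder, not a value published by Google.

```python
from decimal import Decimal


def divergence_report(feed, scraped, threshold=0.05):
    """Compare feed data with page data.

    feed and scraped map a product id to a (price, availability) tuple of strings.
    The default threshold of 5% is an assumed value, to be tuned per catalog.
    """
    mismatched = []
    for product_id, (feed_price, feed_availability) in feed.items():
        page = scraped.get(product_id)
        if page is None:
            # No page data at all is counted as a divergence.
            mismatched.append(product_id)
            continue
        page_price, page_availability = page
        # Decimal comparison treats "7.50" and "7.5" as the same price.
        if (
            Decimal(feed_price) != Decimal(page_price)
            or feed_availability != page_availability
        ):
            mismatched.append(product_id)
    rate = len(mismatched) / len(feed) if feed else 0.0
    return {"rate": rate, "mismatched": mismatched, "alert": rate > threshold}
```

Run on a schedule, a report like this gives you an independent view of feed and page consistency instead of relying only on what Merchant Center reports after the fact.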

This feature can significantly reduce manual errors and secure your Merchant Center account, provided your technical architecture is solid. But it requires heightened vigilance on markup quality and consistency between feed and web pages.

For complex catalogs or sites with specific technical constraints (heavy client-side rendering, dynamic prices, multi-zone), optimal implementation may require an in-depth audit and structural adjustments. In these situations, support from an e-commerce-specialized SEO agency helps secure the rollout and avoid pitfalls related to automatic scraping.

❓ Frequently Asked Questions

Does this feature completely replace my XML product feed?

How often does Google check my pages to detect discrepancies?

What happens if Google scrapes an incorrect price because of a temporary error on my site?

Can I disable this feature if it generates too many errors?

How does Google handle sites with multiple currencies or geolocated prices?

🎥 From the same video 12

Other SEO insights extracted from this same Google Search Central video · published on 29/08/2022
