Official statement

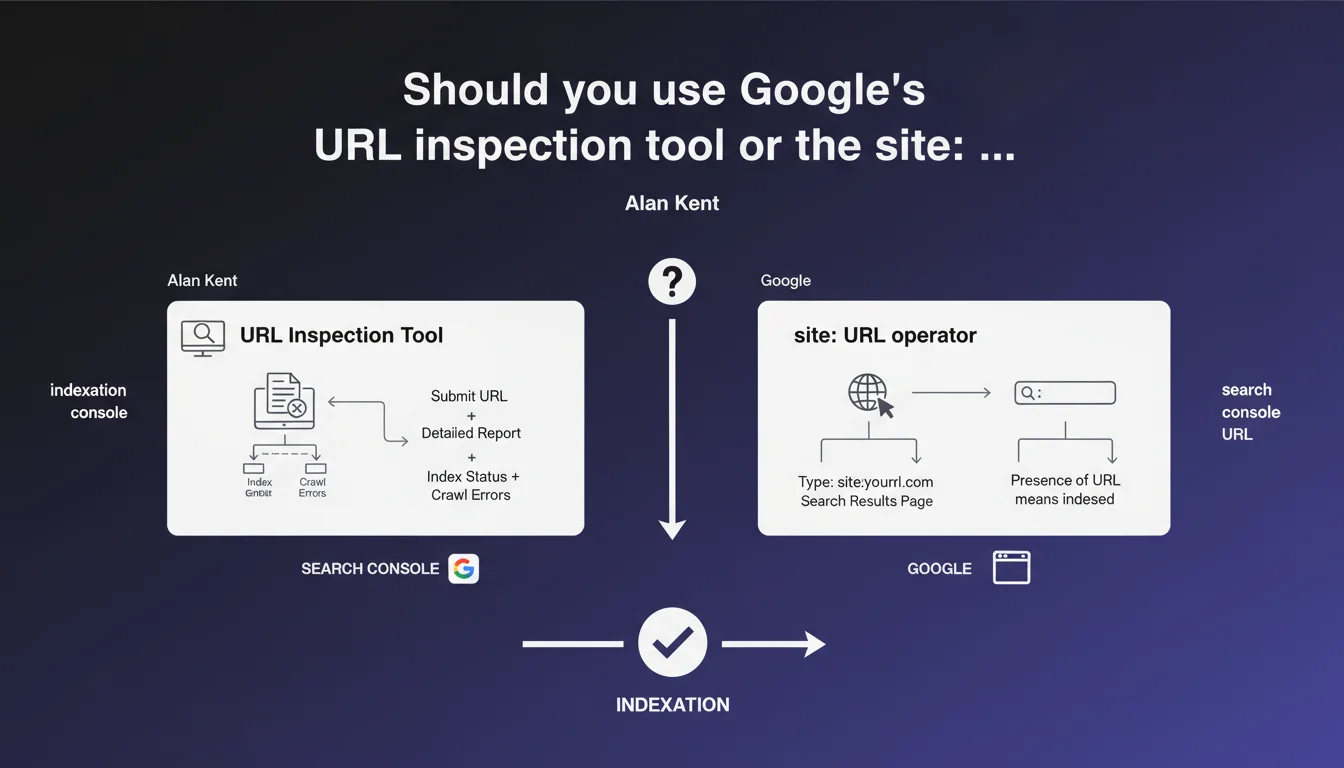

Google recommends two methods to verify whether a product page is indexed: the URL Inspection tool in Search Console, or a search using the site:URL operator. The statement seems straightforward, but it glosses over technical limitations that many practitioners overlook, notably update delays and false positives.

What you need to understand

Google offers two approaches to verify if a page is indexed: the URL inspection tool (via Search Console) or manual search using the site:URL operator in the search engine.

The underlying idea? Give webmasters a quick way to confirm that their content is in the index. But this fairly basic statement says nothing about the discrepancies between the data the two tools return, and that is where it gets interesting.

Is the site: operator reliable for diagnosing indexation?

No, not entirely. The site: operator can return results from an outdated version of the index. It may show a URL as indexed after it has been deindexed, or fail to show one that was just indexed.

Google has repeated this several times: this operator is not a precise diagnostic tool. It gives an indication, nothing more. For serious auditing, you need to cross-reference with the inspection tool.

What's the difference between the inspection tool and the site: operator?

The inspection tool queries the index in near real time and reports the page's current state: indexed or not indexed, plus the technical reason blocking indexation, if any. It is the more reliable of the two tools.

The site: operator, meanwhile, reflects a cached version of the index that can lag by several days. It is convenient for a quick check, but not for precise diagnosis.

- The inspection tool provides current and detailed data on the indexation of a specific URL

- The site: operator allows a global overview but has variable update delays

- Both methods can display divergent results for the same URL

- For reliable auditing, prioritize the inspection tool — the site: operator only serves as an initial filter

Why does Google still mention the site: operator when it's imprecise?

Probably because it remains massively used by beginner webmasters. It's quick, accessible without Search Console, and gives immediate visual results.

But on sites with thousands of pages, this operator will never tell you how many URLs are actually indexed. It lacks granularity, does not reveal canonicalization issues, and does not reliably reflect robots.txt blocks or a recently added noindex.

SEO Expert opinion

Is this statement complete considering real-world practices?

No. Alan Kent stays deliberately generic. He says nothing about edge cases: redirected URLs, URLs with parameters, or URLs for which Google selected a different canonical than the one requested. Yet these situations are common on e-commerce sites.

In practice, the inspection tool sometimes displays "URL is not on Google", while the page appears via site: — and vice versa. [To verify]: in some observed cases, a URL can be indexed via a URL variant (with/without trailing slash, HTTP vs HTTPS) without the tool clearly signaling it.
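One way to rule out the variant problem is to generate the sibling forms of a URL and inspect each one. The sketch below is a minimal Python illustration, assuming only the two variant families named above (with/without trailing slash, HTTP vs HTTPS); the function name `url_variants` is hypothetical, and other forms such as www vs non-www are deliberately left out.

```python
from urllib.parse import urlsplit, urlunsplit


def url_variants(url: str) -> list[str]:
    """Return the HTTP/HTTPS and trailing-slash variants of a URL.

    Only scheme and trailing slash are varied; host, query string and
    fragment are kept exactly as given.
    """
    parts = urlsplit(url)
    path = parts.path or "/"
    # Toggle the trailing slash, except on the root path "/".
    if path == "/":
        paths = ["/"]
    elif path.endswith("/"):
        paths = [path, path.rstrip("/")]
    else:
        paths = [path, path + "/"]

    variants: list[str] = []
    for scheme in ("https", "http"):
        for p in paths:
            candidate = urlunsplit((scheme, parts.netloc, p, parts.query, parts.fragment))
            if candidate not in variants:  # avoid duplicates, keep order
                variants.append(candidate)
    return variants


print(url_variants("https://example.com/product/shoe"))
```

Each returned URL can then be checked individually in the inspection tool.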

Does the site: operator still have strategic value?

Yes, but not for what Google suggests here. Its real use? Detecting indexation leaks: internal search pages, facets, duplicates generated by unfiltered parameters.

Concretely, a search site:example.com inurl:? often reveals hundreds of parasitic indexed URLs. The inspection tool, on the other hand, only allows you to test one URL at a time — which means it's useless for this type of macro diagnosis.
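For a recurring leak audit, the queries can be generated from a list of URL patterns and pasted into Google Search by hand. A minimal sketch: `leak_queries` is a hypothetical helper, `?` and `search` come from this article, and `filter` stands in for whatever facet pattern your own site uses (an assumption, not a known convention).

```python
def leak_queries(domain: str, patterns: list[str]) -> list[str]:
    """Build one site: + inurl: query per URL pattern, to run manually."""
    return [f"site:{domain} inurl:{pattern}" for pattern in patterns]


# "?" and "search" are taken from this article; "filter" is a hypothetical facet pattern.
for query in leak_queries("example.com", ["?", "search", "filter"]):
    print(query)
```

The code only builds strings; how Google interprets each query, and how many results it shows, remains subject to the limitations described in this section.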

What are the limitations Google never mentions?

The inspection tool only tests one URL at a time, which is unworkable for a site with 10,000 product pages. The Search Console URL Inspection API allows automation, but with a tight quota of 2,000 requests per day per property. A single pass over 10,000 URLs therefore takes at least five days, and a pass over 100,000 URLs about seven weeks.
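The arithmetic behind those durations is a ceiling division of the URL count by the daily quota. A minimal sketch, assuming the 2,000-per-day figure above is the only limit that matters (per-minute limits and retries are ignored); `days_for_full_pass` is a hypothetical name.

```python
import math


def days_for_full_pass(url_count: int, daily_quota: int = 2_000) -> int:
    """Days needed to inspect every URL once at a fixed daily quota.

    Assumes the full quota is used every day and no request is retried.
    """
    return math.ceil(url_count / daily_quota)


for n in (10_000, 50_000, 100_000):
    print(n, days_for_full_pass(n))
# 10000 5
# 50000 25
# 100000 50
```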

The site: operator, for its part, never returns the full index. Google caps results at around 1,000 visible URLs. If your site has 50,000, you will only see a sample, and you won't know which URLs it contains.

Practical impact and recommendations

Which method to use depending on context?

To verify a critical page (flagship product page, strategic SEO landing page): inspection tool, without hesitation. You'll get the real state, blocking errors, the canonical version chosen by Google.

For quick mass auditing: the site: operator remains useful for detecting leaks (e.g., site:example.com inurl:search). But cross-reference with a crawl via Screaming Frog or Oncrawl to identify submitted URLs that aren't indexed.
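Once you have both lists, the cross-reference itself is a pair of set differences. A minimal sketch, assuming the submitted URLs (sitemap or crawl export) and the indexed URLs (for example a Search Console export) are already loaded and normalized to the same form; `indexation_gaps` is a hypothetical helper.

```python
def indexation_gaps(submitted: set[str], indexed: set[str]) -> dict[str, set[str]]:
    """Compare submitted URLs with URLs known to be indexed.

    Both sets must use the same URL form (scheme, host, trailing slash),
    otherwise identical pages show up as gaps.
    """
    return {
        # Submitted but missing from the index: candidates for the inspection tool.
        "submitted_not_indexed": submitted - indexed,
        # Indexed but never submitted: possible leaks (facets, parameters, internal search).
        "indexed_not_submitted": indexed - submitted,
    }


gaps = indexation_gaps(
    submitted={"https://example.com/p/1", "https://example.com/p/2"},
    indexed={"https://example.com/p/2", "https://example.com/search?q=shoe"},
)
print(gaps)
```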

How to avoid false diagnoses?

Never rely on a single signal. If the inspection tool reports that the URL is not indexed, check the technical reason: noindex, a canonical pointing to another URL, robots.txt blocking, or a redirect.
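When inspection results are pulled through the API, this checklist can be applied automatically. The sketch below assumes a dict shaped like the URL Inspection API's `indexStatusResult` object (fields `indexingState`, `robotsTxtState`, `googleCanonical`, `coverageState`); verify these names and values against the current API reference. The redirect check is a plain substring match on the free-text `coverageState`, which is an assumption, and `likely_reasons` is a hypothetical helper.

```python
def likely_reasons(index_status: dict, inspected_url: str) -> list[str]:
    """List likely technical reasons why a URL is not indexed.

    `index_status` is assumed to follow the URL Inspection API's
    indexStatusResult shape; missing fields are simply skipped.
    """
    reasons: list[str] = []
    # noindex via <meta name="robots"> or an X-Robots-Tag HTTP header.
    if index_status.get("indexingState") in ("BLOCKED_BY_META_TAG", "BLOCKED_BY_HTTP_HEADER"):
        reasons.append("noindex")
    if index_status.get("robotsTxtState") == "DISALLOWED":
        reasons.append("robots.txt blocking")
    # Google picked a canonical other than the inspected URL.
    google_canonical = index_status.get("googleCanonical")
    if google_canonical and google_canonical != inspected_url:
        reasons.append(f"canonical to another URL: {google_canonical}")
    # Assumption: redirected pages mention "redirect" in coverageState.
    if "redirect" in (index_status.get("coverageState") or "").lower():
        reasons.append("redirect")
    return reasons


print(likely_reasons({"coverageState": "Page with redirect"}, "https://example.com/a"))
```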

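If the site: operator shows a URL but the inspection tool says otherwise, the cause is probably an update delay. Wait 48 hours and test again, or use "Request indexing" in the inspection tool to ask Google to recrawl the page; this can speed up the refresh but does not guarantee indexing.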
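The four possible combinations of the two signals can be summarized as a small decision table. A minimal sketch, assuming each signal has already been reduced to a yes/no answer; `reconcile` and its message strings are hypothetical, and the suggested actions simply restate this article's advice.

```python
def reconcile(seen_via_site: bool, inspection_says_indexed: bool) -> str:
    """Suggest a next step from the site: result and the inspection tool result."""
    if seen_via_site and inspection_says_indexed:
        return "indexed: no action"
    if seen_via_site:
        # site: shows the URL, inspection does not: usually an update delay.
        return "probable update delay: re-test in 48 hours or request indexing"
    if inspection_says_indexed:
        # site: results are sampled and can lag, so the inspection tool wins.
        return "trust the inspection tool: site: results are sampled and can lag"
    return "not indexed: check noindex, canonical, robots.txt and redirects"


print(reconcile(seen_via_site=True, inspection_says_indexed=False))
```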

What should you monitor regularly on an e-commerce site?

- Check indexation of strategic product pages via the inspection tool once a month

- Use the site: operator to detect parasitic URLs (facets, filters, internal searches)

- Automate indexation checks via the Search Console API for sites with more than 1,000 pages

- Cross-reference Search Console data with a crawl to identify gaps between submitted URLs and indexed URLs

- Never rely solely on the site: operator to measure overall indexation coverage
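For the API automation mentioned in the list above, the first practical step is splitting the URL list into daily batches that fit the quota. A minimal sketch, assuming request bodies with the `inspectionUrl` and `siteUrl` fields of the URL Inspection API's `index.inspect` method (check the current API reference); authentication and the actual HTTP calls are omitted, and `daily_batches` is a hypothetical helper.

```python
from itertools import islice
from typing import Iterable, Iterator


def daily_batches(urls: Iterable[str], site_url: str, daily_quota: int = 2_000) -> Iterator[list[dict]]:
    """Yield one list of request bodies per day, each within the daily quota.

    Each body is meant to be POSTed, with an OAuth token, to
    https://searchconsole.googleapis.com/v1/urlInspection/index:inspect
    (sending is not implemented here).
    """
    it = iter(urls)
    while batch := list(islice(it, daily_quota)):
        yield [{"inspectionUrl": url, "siteUrl": site_url} for url in batch]


product_urls = [f"https://example.com/p/{i}" for i in range(4_500)]
for day, batch in enumerate(daily_batches(product_urls, "https://example.com/"), start=1):
    print(day, len(batch))
```

Batching lazily with `islice` avoids holding every request body in memory at once, which matters little at 10,000 URLs but helps on very large catalogs.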

❓ Frequently Asked Questions

The site: operator shows 500 results, but my site has 2,000 pages. Is that normal?

Why does the inspection tool say "URL is not indexed" when the page appears via site:?

Can indexation checks be automated for 10,000 product pages?

Can the site: operator detect pages indexed by mistake?

Should you prioritize the inspection tool or the coverage report in Search Console?

Source: Google Search Central video, published on 29/08/2022.
