Official statement

Other statements from this video (6)

- How does Google actually discover your pages before ranking them?

- Can a page really be indexed without being crawled?

- Why doesn't an indexed page necessarily appear in Google's results?

- Why can an indexed page remain invisible in search results?

- Why is your indexed content still not ranking?

- Does Google really remove your pages from the index if nobody clicks on them?

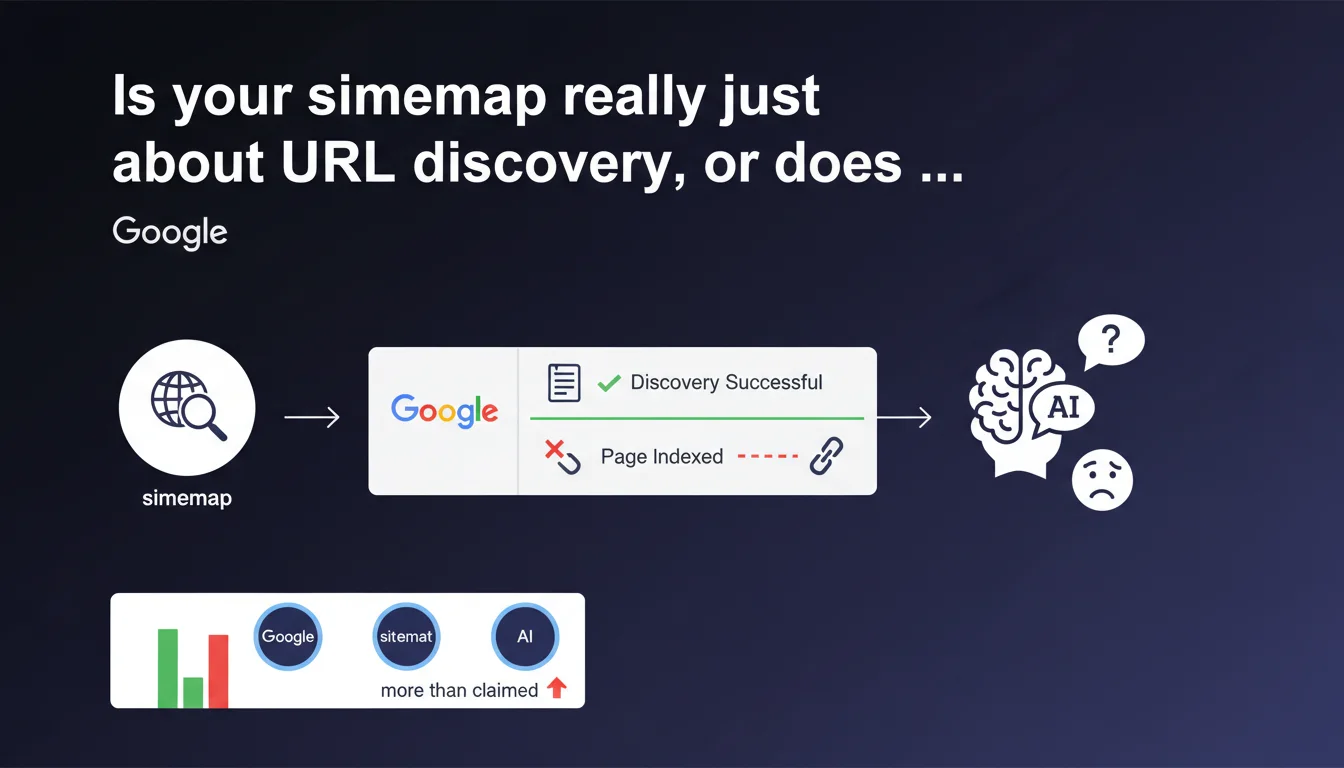

Google states that the sitemap plays a role only in the first phase of the indexing process: URL discovery. If a page is indexed, it means the sitemap fulfilled its mission. Everything that follows — crawling, quality assessment, final indexing — no longer depends on the sitemap.

What you need to understand

What is the exact function of a sitemap according to Google?

Google breaks down its process into several stages: discovery, crawling, evaluation, then indexing. The sitemap intervenes only at the very first phase.

In concrete terms? It signals to Googlebot that a URL exists somewhere on your domain. That's it. It doesn't guarantee that the page will be crawled quickly, nor that it will be judged to have sufficient quality to appear in the index.

Why is this distinction important for an SEO professional?

Because submission is often confused with indexing. Adding a URL to the sitemap tells Google "this page exists". But if the page still isn't indexed after several weeks, the problem doesn't come from the sitemap: it did its job.

The blockage lies elsewhere: insufficient crawl budget, questionable content quality, duplication, problematic canonicalization, conflicting robots.txt or meta robots directives.

What does it concretely mean when "the sitemap worked"?

If your URL appears in Google's index, it means the sitemap fulfilled its role as a discovery signal. Nothing more, nothing less.

Google doesn't say that the sitemap influences rankings, or even indexing speed. It just says: "we found the URL thanks to your XML file, mission accomplished".

- The sitemap is a discovery tool, not a forced indexing lever

- A URL present in the sitemap can very well never be indexed if it doesn't meet quality criteria

- Conversely, a URL absent from the sitemap can be indexed if it's discovered via internal or external links

- The sitemap is particularly useful for new content, sites with few backlinks, or deep site architectures

- A poorly constructed sitemap (blocked URLs, redirects, 404 errors) sends contradictory signals and wastes crawl budget

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes, broadly speaking. Across thousands of audits, we observe that a clean sitemap speeds up discovery, especially on recent sites or sites with few links. But it never forces a mediocre page into the index.

Google is transparent on one point: the sitemap bypasses no quality filters. If your content is thin, duplicated, or technically problematic, it will stay out of the index even with a perfect sitemap. [To verify]: the correlation between sitemap presence and indexing speed remains unclear. Google provides no figures and no indicative timeframes.

What nuances should be added to this statement?

The sitemap also has an often overlooked secondary function: it transmits metadata, namely the last modification date (lastmod), the update frequency (changefreq), and the relative priority of URLs (priority).

Let's be honest: Google has said for years that it largely ignores changefreq and priority. But lastmod? It can influence how often already-indexed pages are re-crawled. That matters for a news site or an e-commerce site whose stock changes frequently.
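As a minimal sketch of the metadata point, the Python below builds a sitemap that emits only `<loc>` and `<lastmod>` and leaves out `changefreq` and `priority`. It assumes you already know the date of each page's last real content change; the helper name `build_sitemap` and the example URL are illustrative, not part of any Google tooling.

```python
import xml.etree.ElementTree as ET
from datetime import date

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XML_DECLARATION = '<?xml version="1.0" encoding="UTF-8"?>\n'


def build_sitemap(entries: list[tuple[str, date]]) -> str:
    """Build a sitemap with <loc> and <lastmod> only (hypothetical helper).

    changefreq and priority are intentionally omitted, since Google says it
    largely ignores them.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" automatically.
        ET.SubElement(url, "loc").text = loc
        # A plain YYYY-MM-DD date is a valid W3C Datetime value.
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()
    return XML_DECLARATION + ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_sitemap([("https://example.com/", date(2025, 3, 19))]))
```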

Another nuance: image, video, and news sitemaps. They don't just serve discovery; they also enrich Google's understanding of the content. A properly structured video sitemap can improve how your videos are displayed in rich results.
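To illustrate the specialized variants, here is a sketch of an image sitemap in Python. It combines the sitemaps.org namespace with Google's image extension namespace (`http://www.google.com/schemas/sitemap-image/1.1`); the page and image URLs and the helper name `build_image_sitemap` are placeholders. Video and news sitemaps follow the same pattern with their own extension namespaces and required tags, which are not shown here.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"

# Serialize the sitemap namespace as the default one and the image
# extension under the "image" prefix (note: this registry is global).
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("image", IMAGE_NS)


def build_image_sitemap(pages: dict[str, list[str]]) -> str:
    """Return an image sitemap: one <url> per page, one <image:image> per image."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
        for image_url in image_urls:
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = image_url
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(
        urlset, encoding="unicode"
    )
```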

In what cases does this logic not fully apply?

On very large sites (millions of pages), the sitemap becomes an implicit prioritization tool. By including only your strategic URLs, you guide Googlebot toward what really matters.

But be careful: this is a weak signal. If your internal linking massively pushes toward pages excluded from the sitemap, Google will crawl them anyway. The sitemap never replaces a coherent link architecture.
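One way to spot this conflict is to count internal inlinks per URL and list the heavily linked URLs that are missing from the sitemap. The sketch assumes a crawl export of `(source, target)` internal link pairs; the default threshold of 10 inlinks and the function name are arbitrary illustrations.

```python
from collections import Counter


def linked_but_not_in_sitemap(
    internal_links: list[tuple[str, str]],
    sitemap_urls: set[str],
    min_inlinks: int = 10,
) -> list[tuple[str, int]]:
    """Return (url, inlink_count) for well-linked URLs absent from the sitemap.

    Results are sorted by descending inlink count, then alphabetically.
    """
    inlinks = Counter(target for _source, target in internal_links)
    flagged = [
        (url, count)
        for url, count in inlinks.items()
        if count >= min_inlinks and url not in sitemap_urls
    ]
    return sorted(flagged, key=lambda item: (-item[1], item[0]))
```

Each flagged URL is either a strategic page that belongs in the sitemap, or a page your internal linking should stop pushing.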

Practical impact and recommendations

What should you concretely do with your sitemap?

Start by cleaning. Remove every URL that is blocked by robots.txt, carries a noindex, returns a 301/302 redirect, or returns a 404 error. Each useless URL in your sitemap wastes crawl budget and muddies the signals.
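The cleaning step can be sketched as a filter over crawl data. This assumes your own crawler has already recorded each URL's final HTTP status and whether a noindex directive was found (the `CrawlRecord` type is hypothetical). robots.txt rules are checked with Python's standard `urllib.robotparser`, which uses simple prefix matching and does not implement Google's wildcard and longest-match rules, so treat that part as an approximation.

```python
from dataclasses import dataclass
from urllib.robotparser import RobotFileParser


@dataclass
class CrawlRecord:
    url: str
    status: int    # final HTTP status seen by your crawler (hypothetical input)
    noindex: bool  # True if a meta robots or X-Robots-Tag noindex was found


def clean_sitemap_urls(
    records: list[CrawlRecord], robots_lines: list[str], user_agent: str = "Googlebot"
) -> list[str]:
    """Keep only URLs that return 200, are indexable, and are crawlable."""
    robots = RobotFileParser()
    robots.parse(robots_lines)
    kept = []
    for record in records:
        if record.status != 200:
            continue  # drops 301/302 redirects, 404s and other errors
        if record.noindex:
            continue  # a noindex page contradicts its presence in the sitemap
        if not robots.can_fetch(user_agent, record.url):
            continue  # blocked by robots.txt
        kept.append(record.url)
    return kept
```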

Next, segment. On a large site, create multiple thematic sitemaps (products, categories, blog, static pages) and reference them all from a sitemap index file. This makes maintenance easier and lets you monitor each segment separately in Search Console.
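A minimal sketch of segmentation, assuming URLs are already grouped by section: each section is split into files of at most `max_urls` entries, and a sitemap index lists every file. The file-naming scheme (`sitemap-<section>-<n>.xml`) and the base URL are assumptions; only the `<sitemapindex>`/`<sitemap>`/`<loc>` structure comes from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def plan_sitemaps(
    sections: dict[str, list[str]], base_url: str, max_urls: int = 10_000
) -> dict[str, list[str]]:
    """Split each section into numbered files of at most max_urls URLs."""
    plan: dict[str, list[str]] = {}
    for section, urls in sections.items():
        for start in range(0, len(urls), max_urls):
            part = start // max_urls + 1
            plan[f"{base_url}/sitemap-{section}-{part}.xml"] = urls[start:start + max_urls]
    return plan


def build_sitemap_index(sitemap_urls: list[str]) -> str:
    """Return a sitemap index file that references each child sitemap."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for loc in sitemap_urls:
        sitemap = ET.SubElement(index, "sitemap")
        ET.SubElement(sitemap, "loc").text = loc
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(index, encoding="unicode")
```

You would then write each planned file with your sitemap generator and submit only the index in Search Console.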

Update lastmod only when the content actually changes. Misusing this tag (changing lastmod when nothing has moved) discredits your sitemap in Google's eyes.
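A simple way to keep lastmod honest is to fingerprint the content that matters and move the date only when the fingerprint changes. In this sketch, the in-memory `state` dict stands in for whatever storage you use, and deciding what counts as "content" (main text, price, stock) is left to you; hashing the full HTML would also react to trivial changes such as rotating ads or timestamps.

```python
import hashlib
from datetime import date


def update_lastmod(
    state: dict[str, tuple[str, date]], url: str, content: str, today: date
) -> date:
    """Return the lastmod to publish for url, bumping it only on real changes.

    state maps url -> (sha256 of the last seen content, date it last changed).
    """
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    previous = state.get(url)
    if previous is None or previous[0] != digest:
        # New URL or changed content: record the new fingerprint and date.
        state[url] = (digest, today)
    return state[url][1]
```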

What mistakes should you absolutely avoid?

Don't overload your sitemaps. The protocol limit is 50,000 URLs or 50 MB uncompressed per file, but in practice, stay under 10,000-20,000 URLs for average sites. The more compact, the better.
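These thresholds can be checked before publishing. The hard limits below (50,000 URLs and 50 MB, i.e. 52,428,800 bytes, uncompressed) come from the sitemaps.org protocol; the 20,000-URL soft target reflects the practical advice above and is an editorial choice, not a protocol rule.

```python
MAX_URLS = 50_000          # protocol limit per sitemap file
MAX_BYTES = 52_428_800     # 50 MB uncompressed, protocol limit per file
SOFT_TARGET_URLS = 20_000  # practical ceiling suggested above (not a rule)


def check_sitemap_size(url_count: int, uncompressed_bytes: int) -> list[str]:
    """Return issue codes for one sitemap file; an empty list means it's fine."""
    issues = []
    if url_count > MAX_URLS:
        issues.append("url_limit")
    if uncompressed_bytes > MAX_BYTES:
        issues.append("size_limit")
    if url_count > SOFT_TARGET_URLS:
        issues.append("above_soft_target")
    return issues
```

For a generated XML string, the uncompressed size is `len(xml_text.encode("utf-8"))`.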

Never include URLs canonicalized to another page. If page A has a canonical tag pointing to page B, only B should appear in the sitemap. Otherwise, you send contradictory signals.
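A sketch of that check, assuming your crawl export maps each URL to the target of its `rel="canonical"` tag (the `canonicals` dict and the function name are hypothetical). URLs whose canonical points elsewhere are dropped, and their targets are returned so you can make sure those are listed instead. URLs are compared as exact strings; any normalization (trailing slashes, case, tracking parameters) is up to you.

```python
def split_by_canonical(canonicals: dict[str, str]) -> tuple[list[str], set[str]]:
    """Return (self-canonical URLs to keep, canonical targets of dropped URLs)."""
    keep: list[str] = []
    targets: set[str] = set()
    for url, canonical in canonicals.items():
        if canonical == url:
            keep.append(url)
        else:
            # Page A canonicalized to page B: drop A, make sure B is listed.
            targets.add(canonical)
    return keep, targets
```

`targets - set(keep)` then gives the canonical pages that are not yet in your sitemap candidates.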

Avoid dynamic sitemaps that take 10 seconds to generate. Googlebot may time out and treat the file as inaccessible, and your work then serves no purpose. Opt for static generation or aggressive caching.
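The "aggressive caching" option can be as simple as regenerating the XML at most once per TTL and serving the stored copy in between. This is a minimal in-process sketch; the one-hour default TTL, the `generate` callback, and the injectable clock (there to make it testable) are assumptions, and a real deployment would more likely write the file to disk or a CDN.

```python
import time
from typing import Callable, Optional


class CachedSitemap:
    """Serve a sitemap body, regenerating it only after ttl_seconds."""

    def __init__(
        self,
        generate: Callable[[], str],
        ttl_seconds: float = 3600.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._generate = generate
        self._ttl = ttl_seconds
        self._clock = clock
        self._body: Optional[str] = None
        self._built_at = 0.0

    def get(self) -> str:
        now = self._clock()
        if self._body is None or now - self._built_at >= self._ttl:
            # Slow path: only hit when the cache is empty or stale.
            self._body = self._generate()
            self._built_at = now
        return self._body
```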

How do you verify that your sitemap is fulfilling its function?

Go to the Sitemaps report in Search Console. Compare the number of URLs submitted with the number indexed (the indexing breakdown for each sitemap is available in the Page indexing report). A massive gap (80% not indexed) signals a problem, but not necessarily one related to the sitemap itself.
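The arithmetic is trivial but worth automating when you track several sitemaps. Here the submitted and indexed counts are assumed to be copied from Search Console (or an export of it), and the 80% alert threshold simply mirrors the example above.

```python
def not_indexed_share(submitted: int, indexed: int) -> float:
    """Share of submitted URLs that are not indexed (0.0 to 1.0)."""
    if submitted <= 0:
        raise ValueError("submitted must be positive")
    return (submitted - indexed) / submitted


def needs_investigation(submitted: int, indexed: int, threshold: float = 0.8) -> bool:
    """True when the not-indexed share reaches the alert threshold."""
    return not_indexed_share(submitted, indexed) >= threshold
```

A positive result only says something is blocking indexing; the next step is finding out where.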

Dig into the Page indexing report (formerly Coverage). Statuses such as "Discovered - currently not indexed" or "Excluded by 'noindex' tag" give you concrete leads. If Google says a URL was discovered but not indexed, your sitemap worked. The blockage is elsewhere.
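To turn that report into leads, you can group exported URLs by status. The sketch assumes a list of `(url, status)` rows from a Page indexing export whose labels match the wording used below; the lead attached to each status is this article's interpretation, not Google's, and any unlisted status falls back to manual review.

```python
from collections import defaultdict

# Any URL listed in the report was discovered, so the sitemap did its part;
# these leads point at where the blockage more likely sits.
LEADS = {
    "Discovered - currently not indexed": "crawl priority / crawl budget",
    "Crawled - currently not indexed": "content quality or duplication",
    "Excluded by 'noindex' tag": "remove from sitemap or drop the noindex",
    "Blocked by robots.txt": "remove from sitemap or fix robots.txt",
    "Alternate page with proper canonical tag": "list the canonical URL instead",
    "Page with redirect": "list the redirect target instead",
    "Not found (404)": "remove from sitemap",
}


def triage(rows: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Group URLs by the lead associated with their indexing status."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for url, status in rows:
        grouped[LEADS.get(status, "review manually")].append(url)
    return dict(grouped)
```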

- Remove from sitemap all URLs that are blocked, redirected, or in error

- Segment large sitemaps (> 10,000 URLs) into multiple thematic files

- Update lastmod only when content actually changes

- Verify that your sitemap contains no URLs canonicalized to another page

- Test generation time: a sitemap taking > 3 seconds to load is problematic

- Monitor the submitted/indexed gap in Search Console to detect anomalies

- Include only your strategic URLs — quality over quantity

- Use specialized sitemaps (images, videos, news) if your content justifies it

❓ Frequently Asked Questions

Can a sitemap force the indexing of a low-quality page?

Should every page of the site be included in the sitemap?

What is the difference between a sitemap and a robots.txt file?

Are the changefreq and priority tags still useful?

How long after submission is a URL discovered?

🎥 From the same video (6)

Other SEO insights extracted from this same Google Search Central video · published on 19/03/2025

