Official statement

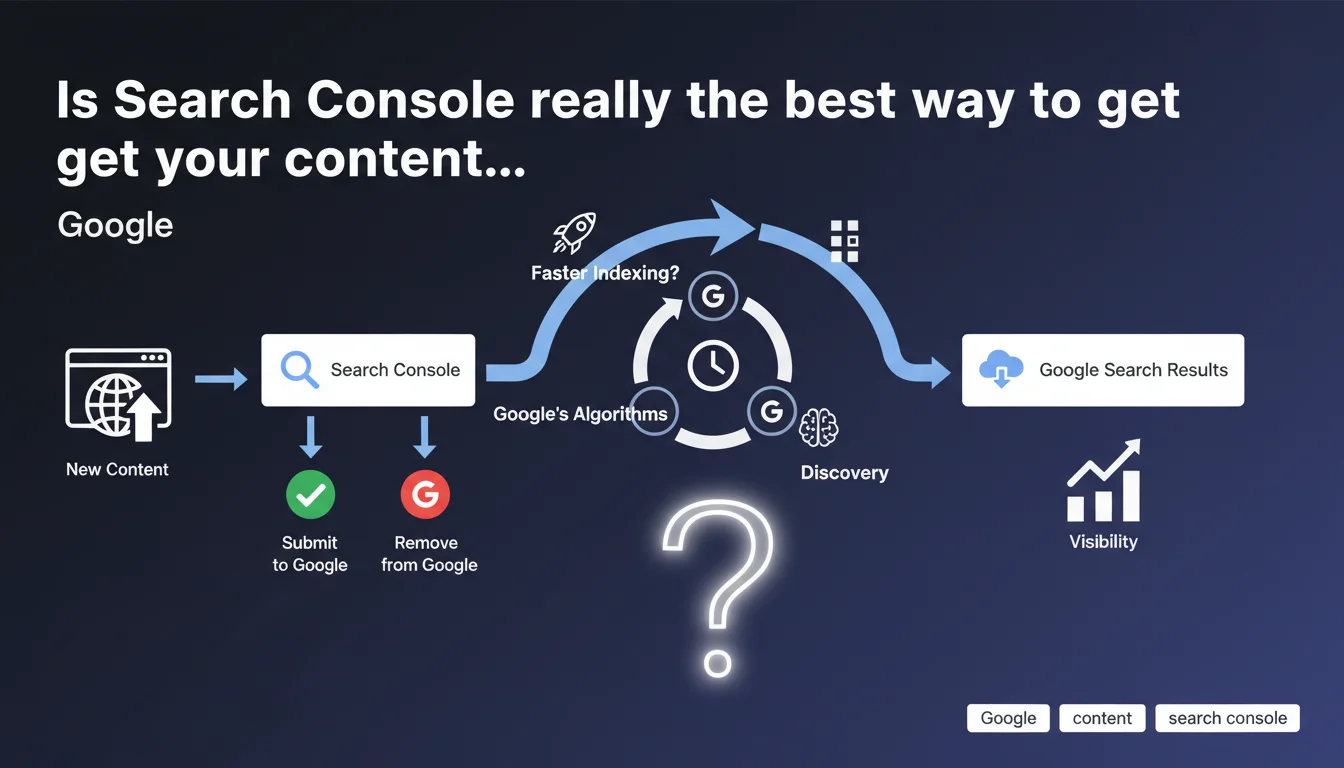

Google confirms that Search Console allows you to submit new content and remove pages from search results. Two distinct tools, two radically different purposes — one to accelerate indexation, the other to clean up what shouldn't appear in the SERPs.

What you need to understand

What are the two submission tools available in Search Console?

Google provides URL inspection to request indexation of a specific page. This function allows you to flag new or modified content to the search engine. It's a request, not a guarantee — Google ultimately decides whether the page deserves to be indexed.

The second tool handles the removal of content already present in the index. Less well known, it takes URLs out of search results, on a temporary basis unless you also apply a permanent technical directive. The two mechanisms don't operate in the same space: one accelerates arrival, the other organizes departure.

Is manual submission essential for getting indexed?

No. Natural crawling remains the primary indexation channel for the vast majority of web pages. If your site has a clean XML sitemap and coherent internal linking, Googlebot will find your new pages without manual intervention.
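To make the sitemap point concrete, here is a minimal sketch of generating a sitemap file with Python's standard library. The URLs and the `build_sitemap` helper are hypothetical, chosen for illustration; only the `urlset`, `url`, `loc` and `lastmod` elements and the namespace come from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Return sitemap XML (as a string) for (url, lastmod) pairs.

    lastmod is an ISO 8601 date string such as "2022-02-24", or None.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" in the URL for us.
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical URLs, for illustration only.
sitemap_xml = build_sitemap([
    ("https://www.example.com/", "2022-02-24"),
    ("https://www.example.com/press/launch", None),
])
print(sitemap_xml)
```

List only URLs you actually want indexed: the sitemap is a discovery aid, and, as with manual submission, inclusion does not guarantee indexation.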

Submission via Search Console mainly serves to save time on urgent content — a press release, critical correction, or breaking news article. For a properly structured site that publishes regularly, it provides only marginal gains on standard content.

Why does Google also offer a removal tool?

Because not every webmaster is comfortable with technical directives (noindex, 410, programmatic deindexation). The removal tool offers a quick solution to take down an embarrassing page, accidental duplicate, or obsolete content polluting the SERPs.

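Caution: removals via Search Console are temporary (about 6 months). For permanent removal, you must combine the tool with a server-side solution: a noindex directive or a 404/410 status code, depending on the case. Blocking the URL in robots.txt is not a removal method, since it only stops crawling and prevents Googlebot from seeing a noindex. The tool doesn't replace rigorous technical management.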

- URL inspection: accelerates indexation of a specific page, useful for urgent content

- XML Sitemap: primary channel to communicate all your indexable URLs

- Removal tool: temporarily removes content from results, must be combined with technical directives

- Natural crawl: remains the primary indexation mechanism, manual submission is just a nudge

- No guarantee: submitting a URL doesn't force Google to index it if it doesn't meet quality standards

SEO Expert opinion

Does this statement really reflect what we observe in the field?

Yes, but with important nuances. URL inspection does work to accelerate indexation: we observe processing within hours on sites with adequate crawl budget. However, on massive or poorly crawled sites, even a manual submission can stall for days.

The real problem is that Google doesn't specify the eligibility criteria. A submitted page may remain pending if it lacks quality, backlinks, or if the overall site has trust issues. [To verify]: no official data on the actual acceptance rate of manual submissions nor on average timelines by site type.

Is the removal tool really effective for cleaning the index?

Effective, yes. Reliable long-term, that's another story. Removals via Search Console expire after 6 months — if the page remains accessible and crawlable, it will return to the index. Many SEOs don't realize this and are surprised to see URLs they thought removed reappear.

For permanent cleanup, you must combine the tool with technical deindexation: noindex in a meta tag or an X-Robots-Tag header, or an HTTP 404 or 410 status code. Don't rely on robots.txt blocking here: a blocked URL can't be crawled, so Googlebot never sees the noindex or the 410, and the URL can stay in the index. The Search Console tool serves as a quick fix, not a structural solution.
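As a rough illustration of the server-side half of that advice, the sketch below decides which status code and headers to return for a given path. The path sets and the `indexing_response` function are hypothetical; in practice this logic would live in your web server or framework configuration.

```python
from http import HTTPStatus

# Hypothetical path lists, for illustration only.
GONE_PATHS = {"/old-campaign"}        # content deleted for good
NOINDEX_PATHS = {"/internal-search"}  # stays online, kept out of the index


def indexing_response(path):
    """Return (status, extra_headers) for a request path."""
    if path in GONE_PATHS:
        # 410 tells crawlers the page was removed on purpose.
        return HTTPStatus.GONE, {}
    if path in NOINDEX_PATHS:
        # Header equivalent of <meta name="robots" content="noindex">.
        return HTTPStatus.OK, {"X-Robots-Tag": "noindex"}
    return HTTPStatus.OK, {}


print(indexing_response("/old-campaign"))
print(indexing_response("/internal-search"))
```

Either signal only takes effect once the URL is recrawled, which is where a removal request can bridge the gap in the meantime.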

Does Google deliberately omit critical information?

Clearly. This statement says nothing about submission quotas: you can't push 500 pages per day through URL inspection. Google imposes limits whose exact values are undisclosed and appear to vary with site history. Exceeding these thresholds may even trigger algorithmic distrust, although nothing official confirms it.

Another omission: the cases where submission is counterproductive. Submitting massive amounts of weak or duplicate content can draw attention to pages Google would have naturally ignored. Sometimes doing nothing beats rushing into a submission. [To verify]: the actual impact of massive submission of mediocre pages on domain-wide trust.

Practical impact and recommendations

When should you really submit a URL via Search Console?

Reserve manual submission for urgent situations: correcting an error visible in the SERPs, publishing breaking news content, or making a critical update to a strategic page. For everything else, let natural crawling and your sitemap do the work.

Avoid systematically submitting every new page: it's a waste of time and can exhaust your quota. If your publishing rhythm requires recurring fast indexation, the problem lies elsewhere: internal linking failures, a poorly configured sitemap, or insufficient crawl budget.

How do you correctly use the removal tool without creating new problems?

Before requesting removal, ask yourself: should this page really disappear permanently or just be temporarily deindexed? If permanent, apply the technical solution first (404, 410, noindex) then use the tool to accelerate removal.

If temporary — page under maintenance, content to revise — favor noindex over the removal tool. Once the 6 months expire, you don't want the page reappearing unexpectedly with obsolete content still live.
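To avoid being caught out by an expiring removal, you can track request dates yourself. The sketch below assumes "6 months" can be approximated as 180 days, which is an assumption rather than an official figure; the function names and the 14-day warning window are hypothetical.

```python
from datetime import date, timedelta

# Assumption: "6 months" approximated as 180 days. Adjust as needed.
REMOVAL_LIFETIME = timedelta(days=180)


def removal_expiry(requested_on):
    """Estimated date on which a temporary removal stops applying."""
    return requested_on + REMOVAL_LIFETIME


def needs_review(requested_on, today, warn_days=14):
    """True when the removal expires within warn_days (or already has)."""
    return removal_expiry(requested_on) - today <= timedelta(days=warn_days)


print(removal_expiry(date(2022, 2, 24)))
print(needs_review(date(2022, 2, 24), today=date(2022, 8, 10)))
```

A URL flagged by `needs_review` is one to check for a permanent directive before the removal lapses.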

What mistakes should you absolutely avoid with these tools?

Never submit a URL that returns an error status (404, 500, 503). Google will attempt to crawl it, fail, and possibly note this inconsistency. Always verify the page is accessible and compliant before requesting indexation.
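The pre-submission check described above can be partly automated. This is a minimal sketch, assuming you have already fetched the page and hold its status code, response headers (as a plain dict with the exact key `X-Robots-Tag`) and HTML; the `submission_blockers` function is hypothetical, and the regular expression is a simplification that only matches a meta tag whose `name` attribute comes before `content`.

```python
import re

# Simplified pattern: expects name="robots" to appear before content="...".
META_ROBOTS = re.compile(
    r'<meta[^>]+name=["\']robots["\'][^>]*content=["\']([^"\']*)["\']',
    re.IGNORECASE,
)


def submission_blockers(status, headers, html):
    """List the reasons a page should not be submitted for indexation."""
    problems = []
    if status != 200:
        problems.append(f"HTTP status {status}")
    if "noindex" in headers.get("X-Robots-Tag", "").lower():
        problems.append("X-Robots-Tag: noindex")
    match = META_ROBOTS.search(html)
    if match and "noindex" in match.group(1).lower():
        problems.append("meta robots noindex")
    return problems


print(submission_blockers(503, {}, ""))
print(submission_blockers(200, {}, '<meta name="robots" content="noindex">'))
```

An empty list means none of these particular blockers were found; it says nothing about content quality, which remains Google's call.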

Also avoid removing URLs still receiving qualified organic traffic. Analyze Search Console and Analytics data before triggering removal — some pages seemingly low-value in SEO actually generate conversions through long-tail queries.
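One way to run that traffic check before a removal is to cross-reference your removal candidates with a performance export. The sketch below assumes a CSV with `page` and `clicks` columns; those column names, the sample data and the `risky_removals` function are hypothetical and should be adapted to your actual export.

```python
import csv
import io


def risky_removals(export_csv, candidates, min_clicks=1):
    """Return candidate URLs that still receive clicks, with click totals."""
    clicks = {}
    for row in csv.DictReader(io.StringIO(export_csv)):
        # A page can appear on several rows (one per query), so sum them.
        clicks[row["page"]] = clicks.get(row["page"], 0) + int(row["clicks"])
    return {
        url: clicks[url]
        for url in candidates
        if clicks.get(url, 0) >= min_clicks
    }


# Hypothetical export, for illustration only.
sample = """page,clicks
https://www.example.com/old-guide,42
https://www.example.com/old-guide,3
https://www.example.com/duplicate,0
"""

print(risky_removals(sample, [
    "https://www.example.com/old-guide",
    "https://www.example.com/duplicate",
]))
```

Clicks are only part of the picture: the conversion data mentioned above would need a similar check against your analytics export.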

- Reserve URL inspection for urgent or strategic content only

- Verify the page is accessible and error-free before any submission

- Always pair the removal tool with a permanent technical directive (noindex, 404, 410)

- Analyze traffic and conversions for a URL before removing it from the SERPs

- Don't submit massive amounts of weak content — prioritize quality over quantity

- Set up a clean XML sitemap to automate discovery of new pages

- Monitor temporary removals that expire after 6 months

- Respect undocumented submission quotas to avoid spam signals

❓ Frequently Asked Questions

How long does it take for a submitted URL to be indexed?

Can you submit several URLs at once in Search Console?

Is a removal via Search Console permanent?

Should you submit every new page published on your site?

Can you submit a URL blocked by robots.txt?

🎥 From the same video 7

Other SEO insights extracted from this same Google Search Central video · published on 24/02/2022
