Official statement

Other statements from this video 9 ▾

- □ Comment Google crawle-t-il vraiment vos pages web ?

- □ Comment Google découvre-t-il vraiment vos nouvelles pages ?

- □ Pourquoi Google ne découvre-t-il pas toutes les URLs de votre site ?

- □ Comment Googlebot décide-t-il quelles pages crawler sur votre site ?

- □ Googlebot ralentit-il volontairement sur votre site pour ne pas le surcharger ?

- □ Pourquoi Googlebot ignore-t-il une partie des URLs qu'il découvre ?

- □ Googlebot peut-il vraiment crawler le contenu derrière une page de connexion ?

- □ Pourquoi Google ne voit-il pas votre contenu JavaScript sans rendering ?

- □ Faut-il vraiment automatiser la génération de vos sitemaps ?

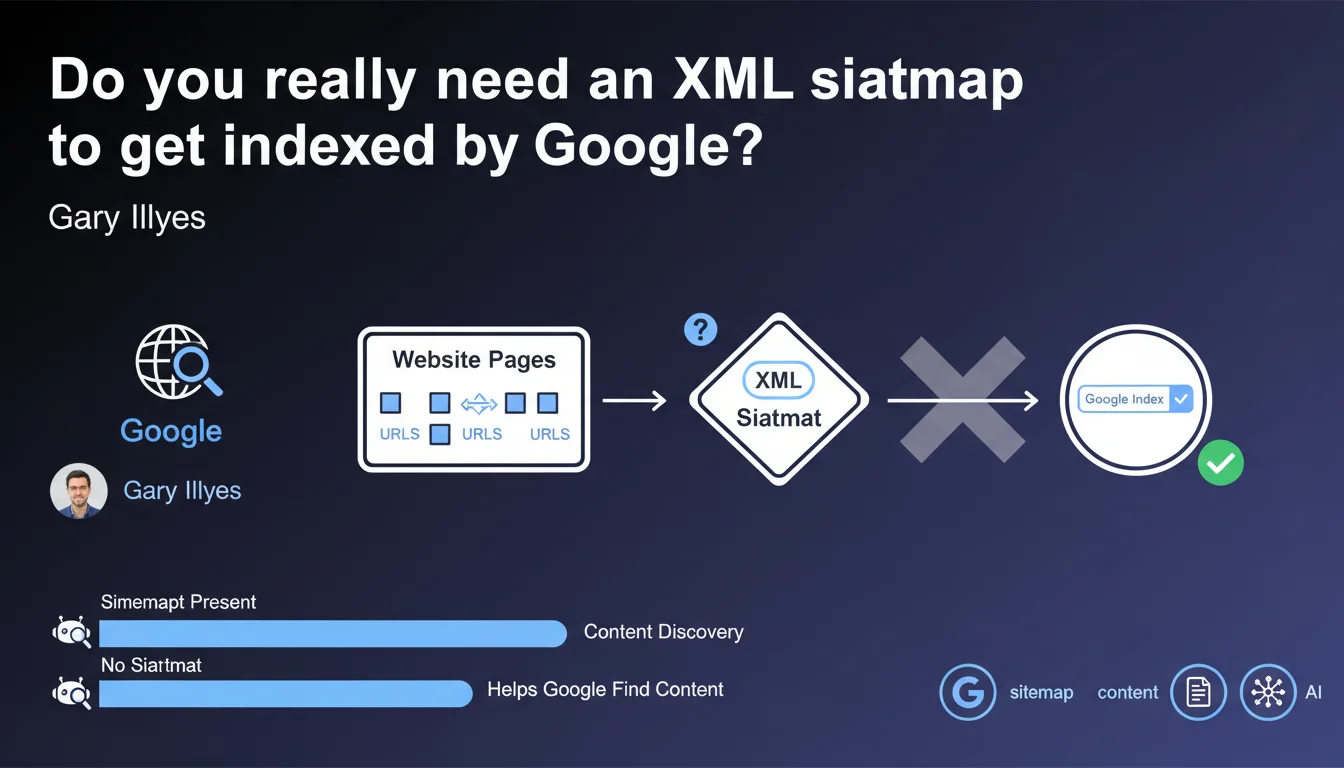

Google confirms that XML sitemaps are not required for indexation, but they do facilitate content discovery. In practice: a well-structured site with proper internal linking can do without one, but a sitemap remains a valuable safety net, especially for large volumes or poorly connected content.

What you need to understand

Why does Google insist that sitemaps are not mandatory?

Google wants to clear up a persistent misconception: a sitemap is not a technical prerequisite for Googlebot to crawl and index your pages. If your internal architecture is clean, with coherent internal linking and links from your homepage to your strategic content, the bot will naturally discover your site.

This clarification also aims to remind us that submitting a sitemap in no way guarantees indexation. It's an aid to discovery, not an indexation command. Google remains the sole authority over what it crawls, indexes, and ranks.

When does a sitemap actually become useful?

Sitemaps shine on large or rapidly growing sites: e-commerce with thousands of product pages, media outlets publishing content daily, user-generated content platforms. They allow Google to quickly detect new URLs without waiting for an internal link to point to them.

Another classic case: orphaned or poorly linked pages. If a section of your site suffers from structural isolation, the sitemap partially compensates for this weakness. Be careful though — it's a band-aid, not a solution.

Is XML the only valid format?

Gary mentions that it's the most popular, and that's true: XML remains the reference standard for search engines. But Google also accepts TXT format (one URL per line) and RSS/Atom for recent content feeds.

For most websites, XML offers the most flexibility: it supports the lastmod, priority, and changefreq tags (although Google ignores the values of the last two). Modern CMS platforms generate it automatically, so there's little reason to skip it.
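To make the XML format concrete, here is a minimal sketch that builds a sitemap with `loc` and `lastmod` using only Python's standard library. The function name `build_sitemap` and the example URL are illustrative assumptions, not something taken from the video.

```python
from datetime import date
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Return sitemap XML as a string for (url, last_modified) pairs.

    Hypothetical helper: priority and changefreq are left out on purpose,
    since Google ignores their values.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, last_modified in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # lastmod uses the W3C Datetime format; a plain YYYY-MM-DD date is valid.
        ET.SubElement(url, "lastmod").text = last_modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_sitemap([("https://example.com/", date(2024, 2, 22))]))
```

A CMS plugin normally does this for you; the sketch only shows how little the format actually requires.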

- A sitemap is not required if your internal linking is solid and Google naturally discovers your pages

- It becomes valuable for large sites, those with high publication frequency, or containing isolated pages

- XML remains the standard format, although TXT and RSS are supported for specific use cases

- Submitting a sitemap guarantees neither crawl nor indexation — it's a signal, not an instruction

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes, absolutely. We regularly observe that sites without sitemaps (small well-linked blogs, well-structured brochure sites) get indexed without issue. Conversely, a poorly built sitemap (one listing noindex URLs, redirects, or duplicate content) does nothing to rescue a site.

The problem is that many practitioners confuse "aid to discovery" with "guarantee of indexation". The sitemap remains a passive submission channel. If your content is mediocre or your crawl budget is saturated by useless pages, the sitemap won't change anything.

What nuances should be added to this claim?

Saying a sitemap "is not required" is technically true, but the risk of going without depends heavily on context. On a 50-page site with clear architecture, fine. On a 100,000-URL site with varying click depths, you're playing with fire.

Gary doesn't mention that sitemaps also serve to signal updates via the lastmod tag. When it is used properly and populated honestly, lastmod can speed up re-crawling of modified pages. This is an often-underestimated lever for news sites and e-commerce.

When should you really worry about a missing sitemap?

Let's be honest: if Google takes weeks to discover your new pages while you're publishing daily, the absence of a sitemap is probably not the only culprit. First check your crawl budget, your robots.txt, your server response times.

On the other hand, for multilingual or multi-regional sites, the sitemap becomes a valuable signaling tool for hreflang tags. This is a use case where its utility goes beyond simple discovery.
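As an illustration of the hreflang use case, the sketch below generates sitemap entries in which every language version lists all of its alternates, itself included, through `xhtml:link` elements. The function name and the example URLs are assumptions made for the example.

```python
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"


def build_hreflang_sitemap(alternates):
    """Build a sitemap for one page available in several languages.

    alternates: dict mapping an hreflang code to that version's URL
    (hypothetical input shape chosen for this sketch).
    """
    urlset = ET.Element("urlset", {"xmlns": SITEMAP_NS, "xmlns:xhtml": XHTML_NS})
    for loc in alternates.values():
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # Each version repeats the full set of alternates, including itself.
        for lang, href in alternates.items():
            ET.SubElement(
                url, "xhtml:link", {"rel": "alternate", "hreflang": lang, "href": href}
            )
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_hreflang_sitemap({
        "en": "https://example.com/en/",
        "fr": "https://example.com/fr/",
    }))
```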

Practical impact and recommendations

What should you actually do with this information?

First step: audit your current sitemap. Look in Search Console at the coverage rate (URLs submitted vs indexed). If you have 10,000 URLs submitted and 3,000 indexed, dig into the reasons — quality issues, poorly managed canonicals, forgotten noindex tags.
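The ratio in question is simple arithmetic. A small sketch, using the figures from the example above (the function name is an assumption):

```python
def coverage_rate(submitted, indexed):
    """Share of submitted sitemap URLs that are indexed, between 0 and 1."""
    if submitted <= 0:
        raise ValueError("submitted must be a positive number of URLs")
    return indexed / submitted


if __name__ == "__main__":
    # 3,000 indexed out of 10,000 submitted
    print(f"{coverage_rate(10_000, 3_000):.0%}")  # prints 30%
```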

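Second step: segment your sitemaps if your site exceeds 5,000 pages. Create a sitemap index with separate files by type (products, blog, static pages). This makes diagnosis easier, since indexing problems can be traced to a specific file, and may help Google prioritize crawling. Note that the protocol itself caps a single sitemap file at 50,000 URLs.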
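One way to sketch this segmentation: group URLs by content type, split each group at the protocol limit of 50,000 URLs per file, and reference the resulting files from a sitemap index. The file naming scheme, function names, and input shape below are assumptions for illustration.

```python
from collections import defaultdict
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # sitemap protocol limit per file


def segment_urls(pages, max_urls=MAX_URLS_PER_FILE):
    """Group (url, content_type) pairs into named sitemap files."""
    by_type = defaultdict(list)
    for url, content_type in pages:
        by_type[content_type].append(url)
    files = {}
    for content_type, urls in by_type.items():
        # Split any group that exceeds the per-file limit into numbered parts.
        for start in range(0, len(urls), max_urls):
            part = start // max_urls + 1
            name = f"sitemap-{content_type}-{part}.xml"  # hypothetical naming scheme
            files[name] = urls[start:start + max_urls]
    return files


def build_sitemap_index(base_url, filenames):
    """Return a sitemap index that references each sitemap file."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for name in filenames:
        sitemap = ET.SubElement(index, "sitemap")
        ET.SubElement(sitemap, "loc").text = f"{base_url}/{name}"
    body = ET.tostring(index, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

Only the index file then needs to be submitted; per-type files make it easier to see which section of the site has indexing problems.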

What errors should you absolutely avoid?

Never list in a sitemap URLs that are noindex, 404, 301, or canonicalized to another page. This is noise that pollutes the signal and wastes Googlebot's time — and therefore erodes your crawl budget.
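This rule can be expressed as a filter over crawl data. The sketch below assumes a hypothetical `PageRecord` shape (for instance, rows exported from a site crawler); the field names are not from any specific tool.

```python
from dataclasses import dataclass


@dataclass
class PageRecord:
    """One crawled page (hypothetical structure for this sketch)."""
    url: str
    status: int      # HTTP status code returned for the URL
    noindex: bool    # True if a noindex directive was found
    canonical: str   # canonical URL declared by the page


def sitemap_eligible(page):
    """Keep only pages that return 200, are indexable, and are self-canonical."""
    return (
        page.status == 200
        and not page.noindex
        and page.canonical == page.url
    )


def filter_for_sitemap(pages):
    """Return the URLs that belong in the sitemap."""
    return [p.url for p in pages if sitemap_eligible(p)]
```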

Another classic pitfall: the priority tag. Google ignores its value, so don't waste time optimizing it. However, lastmod must be accurate: don't put today's date on all URLs if nothing has changed.
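One possible way to keep lastmod honest is to store a hash of each page's content and move the date only when the hash changes. This is a sketch under that assumption; the record layout and function name are illustrative, and a real site would likely hash only the main content rather than the full HTML.

```python
import hashlib
from datetime import date


def update_lastmod(previous, content, today):
    """Return the stored record for a page, bumping lastmod only on real changes.

    previous: dict with "hash" and "lastmod" keys, or None for a new page
    (hypothetical storage format for this sketch).
    """
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    if previous is not None and previous["hash"] == digest:
        # Content is unchanged: keep the old lastmod date.
        return previous
    return {"hash": digest, "lastmod": today.isoformat()}
```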

How can you verify that your sitemap strategy is effective?

Use Search Console to track the discovery rate: how long between submitting a new URL and its first crawl? If it's quick (a few hours to 2 days), your sitemap is doing its job.
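If you record when each URL was published and when it was first crawled (for example from server logs), the discovery delay can be summarized with a median. The sketch below assumes you already have those timestamp pairs; the 48-hour threshold mirrors the "2 days" rule of thumb above and is not a Google guarantee.

```python
from datetime import datetime
from statistics import median


def median_discovery_hours(pairs):
    """Median delay, in hours, between publication and first crawl.

    pairs: list of (published_at, first_crawled_at) datetime tuples
    (hypothetical input, e.g. assembled from CMS data and server logs).
    """
    delays = [
        (crawled - published).total_seconds() / 3600
        for published, crawled in pairs
    ]
    return median(delays)


def is_quick(hours, threshold_hours=48.0):
    """True if the delay falls within the rule-of-thumb threshold."""
    return hours <= threshold_hours
```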

Also compare the behavior of pages listed in the sitemap with that of pages left out of it. If well-linked pages outside the sitemap get indexed faster, this is a sign that your internal linking carries more weight than the sitemap, which is actually a good structural indicator.

- Verify that the sitemap contains only 200 status, indexable, and canonical URLs

- Segment sitemaps by content type on sites with more than 5,000 pages

- Populate lastmod only when content is actually modified

- Monitor the coverage rate in Search Console (URLs submitted / indexed)

- Compare discovery speed for pages with and without sitemap to evaluate real effectiveness

- Regularly clean up obsolete URLs (redirects, deletions)

❓ Frequently Asked Questions

Can a site be indexed without an XML sitemap?

Are the priority and changefreq tags in an XML sitemap useful?

What is the maximum number of URLs in an XML sitemap?

Should you submit the sitemap in Search Console, or is declaring it in robots.txt enough?

Can you include noindex URLs in a sitemap?

🎥 From the same video 9

Other SEO insights extracted from this same Google Search Central video · published on 22/02/2024
