Official statement

Other statements from this video 6 ▾

- □ Le Client-Side Rendering met-il vraiment votre indexation en danger ?

- □ Pourquoi la visibilité du contenu conditionne-t-elle réellement l'indexation par Google ?

- □ L'hydration est-elle vraiment la solution miracle aux problèmes SEO du JavaScript ?

- □ Le Server-Side Rendering garantit-il vraiment l'indexation de votre contenu JavaScript ?

- □ L'hydration est-elle vraiment un compromis technique acceptable pour le SEO ?

- □ Comment choisir la bonne stratégie de rendu pour optimiser son référencement naturel ?

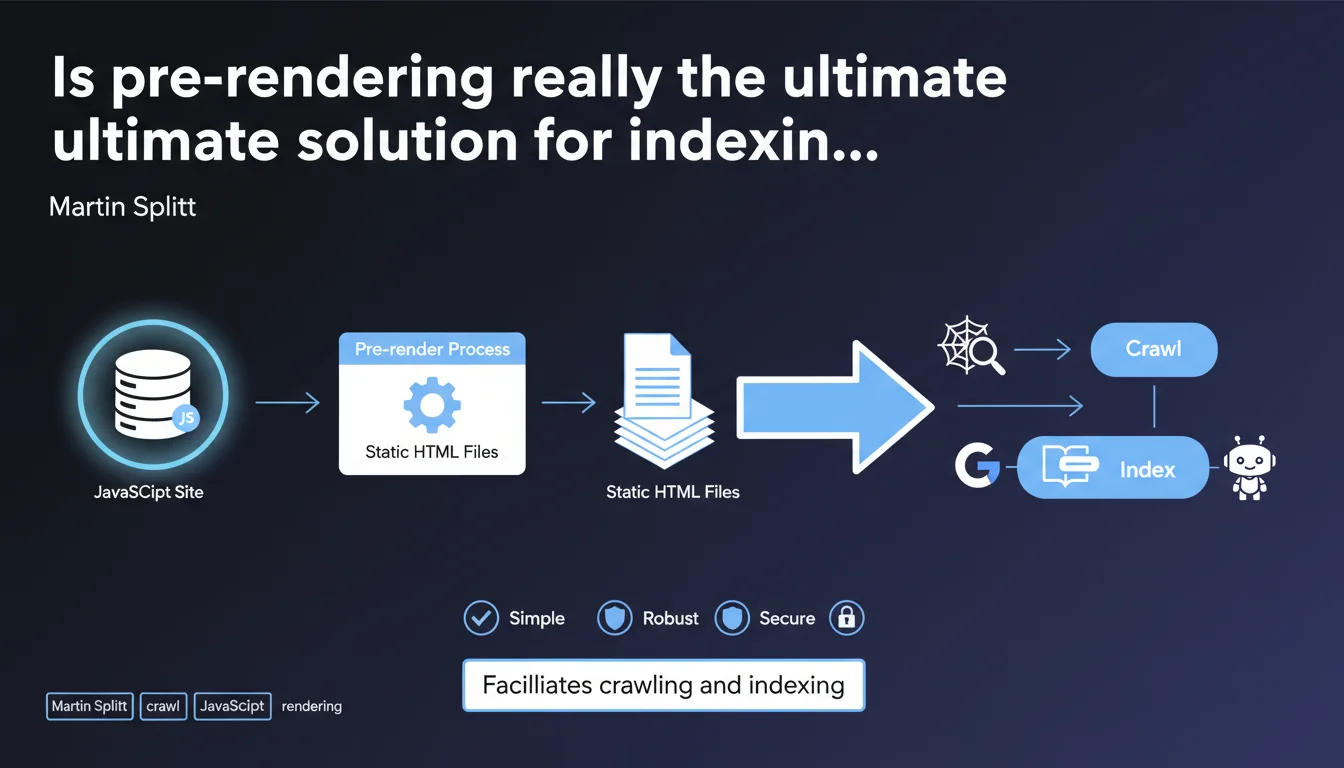

Google confirms that pre-rendering facilitates crawling and indexing by generating static HTML files. This approach eliminates client-side rendering issues, but does it remain relevant given the evolution of JavaScript SEO? Technical simplicity comes at a cost: it's not always suited to complex dynamic sites.

What you need to understand

Why is Google emphasizing pre-rendering now?

Pre-rendering generates static HTML files at build time, before any user or bot requests them. Unlike server-side rendering (SSR), which generates HTML on the fly, pre-rendering creates a frozen version of each page.
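The difference between the two models can be sketched in a few lines. This is only an illustration under simple assumptions: the `Page` shape, the `renderHtml` template, and the `loadPage` callback are hypothetical and do not come from any framework.

```typescript
// Hypothetical page model; real projects would load this from a CMS or files.
interface Page {
  path: string;
  title: string;
  body: string;
}

// Shared template (HTML escaping omitted for brevity).
function renderHtml(page: Page): string {
  return (
    `<!doctype html><html><head><title>${page.title}</title></head>` +
    `<body><h1>${page.title}</h1><p>${page.body}</p></body></html>`
  );
}

// Pre-rendering: every page is rendered once, at build time.
// The result is a fixed set of files that the server only has to serve.
function prerenderAll(pages: Page[]): Map<string, string> {
  const files = new Map<string, string>();
  for (const page of pages) {
    files.set(page.path, renderHtml(page));
  }
  return files;
}

// SSR: the same template runs again on every request,
// so the HTML reflects the data available at request time.
function handleRequestSsr(
  path: string,
  loadPage: (path: string) => Page | undefined,
): string | undefined {
  const page = loadPage(path);
  return page ? renderHtml(page) : undefined;
}
```

If the source data changes after `prerenderAll` has run, the pre-rendered files keep the old content until the next build, whereas `handleRequestSsr` picks up the change on the next request.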

Google pushes this approach because it removes variables: the content is readable without executing JavaScript, without depending on a headless browser, and without rendering latency. The crawler gets the complete HTML directly. For Google, this is the ideal, zero-friction scenario.

What are the concrete advantages for indexing?

First point: crawl speed. Bots don't have to wait for JavaScript to execute. They fetch the HTML and move to the next page. Your crawl budget is used more efficiently.

Second point: reliability. With pre-rendering, what you see in development is exactly what Googlebot sees. No surprises from JavaScript timeouts, slow external APIs, or client-side rendering errors.

What limitations does this approach impose?

Pre-rendering works for sites whose content changes little or predictably. A blog, a brochure website, documentation — perfect. But as soon as you have personalized content, real-time feeds, or thousands of dynamic pages, it becomes problematic.

Generating thousands of static HTML files with each update becomes a bottleneck. Build time explodes, and you end up with frozen content that no longer reflects reality.
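A back-of-the-envelope estimate shows how quickly a full rebuild grows. The figures used here (50,000 pages, 200 ms per page, 4 parallel workers) are illustrative assumptions, not measurements; replace them with numbers from your own build.

```typescript
// Rough estimate of a full rebuild, in milliseconds.
// Assumes every page costs about the same and work splits evenly across workers.
function estimateFullBuildMs(
  pageCount: number,
  msPerPage: number,
  parallelWorkers: number,
): number {
  if (parallelWorkers < 1) {
    throw new RangeError("parallelWorkers must be at least 1");
  }
  return (pageCount * msPerPage) / parallelWorkers;
}

// Illustrative figures only.
const buildMs = estimateFullBuildMs(50_000, 200, 4);
console.log(`${(buildMs / 60_000).toFixed(1)} minutes per full build`);
// prints "41.7 minutes per full build"
```

At that rate, a site that publishes several times a day spends a large part of each day rebuilding pages that did not change.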

- Static HTML = crawling and indexing without technical friction

- No JavaScript to execute = crawl budget savings and reduced rendering errors

- Suited to low-update-frequency sites, problematic for dynamic or personalized content

- Enhanced security: smaller server-side attack surface, everything is pre-generated

SEO Expert opinion

Is this statement consistent with field observations?

Yes and no. Google intentionally simplifies the message. On static or semi-static sites, pre-rendering works reliably: we observe indexing rates close to 100% and reduced crawl times.

But Martin Splitt sidesteps a critical point: most modern sites are not static. E-commerce, SaaS platforms, marketplaces — these environments require fresh, personalized content, often generated based on the user. Pre-rendering becomes a hindrance, not a solution. [To verify]: Google doesn't specify when pre-rendering becomes counterproductive in terms of maintenance and scalability.

In what cases is this approach not recommended?

Let's be honest: pre-rendering isn't a magic wand. If your site generates pages based on user parameters (filters, geolocation, personalized content), you can't pre-render all possible combinations.

Another case: sites with frequent updates. Imagine a news site publishing 50 articles daily. Relaunch a full build with each publication? Unmanageable. SSR or Incremental Static Regeneration (ISR) becomes more relevant.
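The idea behind ISR can be sketched as a cache that serves the stored HTML and regenerates a page once it is older than a revalidation window. This is a simplified model, not the implementation used by any framework: the class name, the injected `render` function, and the injected clock are assumptions, and regeneration happens synchronously here whereas real implementations do it in the background.

```typescript
interface CacheEntry {
  html: string;
  generatedAt: number; // timestamp in milliseconds
}

class RevalidatingCache {
  private readonly entries = new Map<string, CacheEntry>();
  private readonly render: (path: string) => string;
  private readonly revalidateMs: number;
  private readonly now: () => number;

  constructor(
    render: (path: string) => string,
    revalidateMs: number,
    now: () => number = () => Date.now(),
  ) {
    this.render = render;
    this.revalidateMs = revalidateMs;
    this.now = now;
  }

  get(path: string): string {
    const entry = this.entries.get(path);
    if (!entry) {
      // First request for this path: render and store it.
      return this.regenerate(path);
    }
    if (this.now() - entry.generatedAt >= this.revalidateMs) {
      // Stale: serve the old copy to this visitor, refresh it for the next one.
      const staleHtml = entry.html;
      this.regenerate(path);
      return staleHtml;
    }
    return entry.html;
  }

  private regenerate(path: string): string {
    const html = this.render(path);
    this.entries.set(path, { html, generatedAt: this.now() });
    return html;
  }
}
```

Only the pages that are actually requested get regenerated, which is why this model scales better than a full rebuild for a site publishing dozens of articles a day.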

What are the real implications for modern frameworks?

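Next.js, Nuxt, and SvelteKit all support pre-rendering, though not always as the default: Next.js pre-renders pages that have no request-time data dependencies, while Nuxt and SvelteKit render on the server by default and pre-render when you opt in. They also offer SSR and, depending on the framework and hosting platform, ISR. The choice depends on your use case, not a generic Google recommendation.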
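The criteria discussed in this section can be written down as a small decision helper. It is a sketch of the reasoning, not a rule from Google or from any framework: the `RouteProfile` fields and the default thresholds are arbitrary assumptions to adjust per project.

```typescript
type RenderingStrategy = "SSG" | "ISR" | "SSR";

interface RouteProfile {
  personalized: boolean; // output depends on the user, session, filters, or geolocation
  updatesPerDay: number; // how often the content changes
  pageCount: number;     // number of pages generated for this route
}

// Hypothetical thresholds; tune them to your build times and publishing rhythm.
const defaultLimits = { maxStaticPages: 10_000, maxDailyUpdates: 5 };

function chooseStrategy(
  route: RouteProfile,
  limits: { maxStaticPages: number; maxDailyUpdates: number } = defaultLimits,
): RenderingStrategy {
  // Personalized output cannot be pre-rendered for every possible combination.
  if (route.personalized) return "SSR";
  // Too many pages or too many updates make full rebuilds impractical.
  if (
    route.pageCount > limits.maxStaticPages ||
    route.updatesPerDay > limits.maxDailyUpdates
  ) {
    return "ISR";
  }
  return "SSG";
}
```

For example, a documentation section with a few hundred rarely edited pages comes out as "SSG", while a news section publishing 50 articles a day comes out as "ISR".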

What Google doesn't say: even with pure client-side rendering, if your JavaScript is well optimized (code splitting, lazy loading, no blocking dependencies), indexing works. It's just less predictable. Pre-rendering eliminates uncertainty — but at the cost of flexibility.

Practical impact and recommendations

What should you concretely do if you already use a modern framework?

If you're on Next.js, prioritize Static Site Generation (SSG) for pages whose content rarely changes: category pages, landing pages, editorial content. Reserve SSR for product pages, user profiles, or anything requiring fresh data.
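As a rough illustration for the Next.js Pages Router, a statically generated category page exports `getStaticPaths` and `getStaticProps`. Only the data-fetching half of the page file is shown, the React component is omitted, and `fetchCategories` is a hypothetical stand-in for a CMS or database call. In the App Router, the equivalent of `getStaticPaths` is `generateStaticParams`.

```typescript
// pages/categories/[slug].tsx (data-fetching half only)

interface Category {
  slug: string;
  name: string;
}

// Hypothetical data source; replace with your CMS or database query.
async function fetchCategories(): Promise<Category[]> {
  return [
    { slug: "shoes", name: "Shoes" },
    { slug: "bags", name: "Bags" },
  ];
}

// Tells Next.js which dynamic routes to pre-render at build time.
export async function getStaticPaths() {
  const categories = await fetchCategories();
  return {
    paths: categories.map((c) => ({ params: { slug: c.slug } })),
    fallback: false, // slugs not listed here return a 404
  };
}

// Runs at build time for each path and provides the props for the page.
export async function getStaticProps(context: { params?: { slug?: string } }) {
  const categories = await fetchCategories();
  const category = categories.find((c) => c.slug === context.params?.slug);
  if (!category) {
    return { notFound: true as const };
  }
  return { props: { category } };
}
```

A page that needs fresh data on every request would export `getServerSideProps` instead, which is the SSR counterpart in the Pages Router.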

For Nuxt 2, set target: 'static' in nuxt.config.js and run nuxt generate to pre-render static routes. Nuxt 3 removed the target option: nuxt generate pre-renders on its own, and route rules cover content that needs periodic revalidation.
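In Nuxt 3, where the target option no longer exists, a hybrid setup can be expressed with per-route rules. The fragment below is a configuration sketch: the route patterns are hypothetical, and the exact caching behavior of `swr` depends on the hosting platform, so check it against your deployment target.

```typescript
// nuxt.config.ts (Nuxt 3); route patterns are illustrative
export default defineNuxtConfig({
  routeRules: {
    // Rendered once at build time
    "/": { prerender: true },
    "/docs/**": { prerender: true },
    // Cached on the server and revalidated in the background,
    // at most once per hour (value in seconds)
    "/blog/**": { swr: 3600 },
  },
});
```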

What errors should you avoid when implementing pre-rendering?

Don't pre-render pages that depend on dynamic parameters or user sessions. You'll generate inconsistent versions or, worse, expose sensitive data in static files.

Another classic mistake: forgetting to update pre-rendered files. A site frozen in time loses relevance. Automate builds via CI/CD so each content modification triggers regeneration.
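One way to keep automated builds cheap is to let the CI job regenerate only the pages whose source content changed since the previous build. The sketch below compares content hashes against a manifest saved by the last run. The manifest format and function names are assumptions for illustration; many frameworks and static site generators ship their own incremental build mechanisms.

```typescript
import { createHash } from "node:crypto";

// Maps each page path to the hash of its source content at the last build.
type Manifest = Record<string, string>;

function hashContent(content: string): string {
  return createHash("sha256").update(content).digest("hex");
}

// Compares current sources with the previous manifest and returns
// which pages to regenerate, which output files to delete,
// and the manifest to save for the next run.
function planRebuild(
  sources: Record<string, string>,
  previous: Manifest,
): { toRebuild: string[]; toDelete: string[]; manifest: Manifest } {
  const manifest: Manifest = {};
  const toRebuild: string[] = [];
  for (const [path, content] of Object.entries(sources)) {
    const hash = hashContent(content);
    manifest[path] = hash;
    if (previous[path] !== hash) {
      toRebuild.push(path); // new page or changed content
    }
  }
  // Pages that existed before but have no source anymore.
  const toDelete = Object.keys(previous).filter((path) => !(path in sources));
  return { toRebuild, toDelete, manifest };
}
```

Deleting removed pages matters as much as rebuilding changed ones: an orphaned static file keeps being served and crawled long after the content is gone.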

How do you verify that your pre-rendering works correctly?

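Use the Crawl Stats report in Search Console, together with your server logs, to see how Googlebot fetches your pages. Googlebot generally still renders the pages it crawls, so the goal is not to avoid rendering but to make sure the content is already in the initial HTML. Verify this with the URL Inspection tool: the critical content in the rendered HTML should match what is in the page source.

Test with curl or wget: fetch the page with a Googlebot user-agent string and verify that critical content (titles, text, internal links) is present in the raw response, since neither tool executes JavaScript.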
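The same check can be scripted so it runs after every deployment. The sketch below fetches the raw HTML without executing JavaScript and reports which required snippets are missing. Function names and the list of required snippets are assumptions. Sending a Googlebot user-agent string only imitates the header; it does not reproduce how Google verifies or renders pages.

```typescript
// Removes <script> blocks so text that exists only inside JavaScript
// or embedded JSON payloads does not count as present.
function stripScripts(html: string): string {
  return html.replace(/<script\b[^>]*>[\s\S]*?<\/script>/gi, "");
}

// Returns the required snippets that are absent from the static HTML.
function findMissingContent(html: string, required: string[]): string[] {
  const staticHtml = stripScripts(html);
  return required.filter((snippet) => !staticHtml.includes(snippet));
}

// Fetches a URL the way curl would (no JavaScript execution) and checks it.
// Requires a runtime with a global fetch, such as Node.js 18 or later.
async function checkUrl(url: string, required: string[]): Promise<string[]> {
  const response = await fetch(url, {
    headers: {
      "User-Agent":
        "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
    },
  });
  if (!response.ok) {
    throw new Error(`Unexpected status ${response.status} for ${url}`);
  }
  return findMissingContent(await response.text(), required);
}
```

A call such as `checkUrl("https://example.com/", ["<h1>", "/contact"])` resolves to an empty array when every snippet is found in the raw HTML; the URL and snippets here are placeholders.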

- Identify pages suitable for pre-rendering: static content, low update frequency

- Configure your framework (Next.js SSG, Nuxt generate, etc.) to pre-render these routes

- Automate builds via CI/CD to regenerate files with each modification

- Test generated HTML with curl or Google's inspection tool

- Monitor Search Console (Crawl Stats report, URL Inspection) and your server logs to validate the absence of crawl and rendering errors

- Plan a hybrid strategy (SSG + SSR or ISR) for dynamic content

❓ Frequently Asked Questions

Does pre-rendering completely replace SSR?

Does Google index client-side-rendered sites less well?

Can you combine pre-rendering and SSR on the same site?

Which frameworks make pre-rendering easier?

Does build time become a problem with thousands of pages?


Other SEO insights extracted from this same Google Search Central video · published on 08/01/2025
