Official statement

Other statements from this video 2 ▾

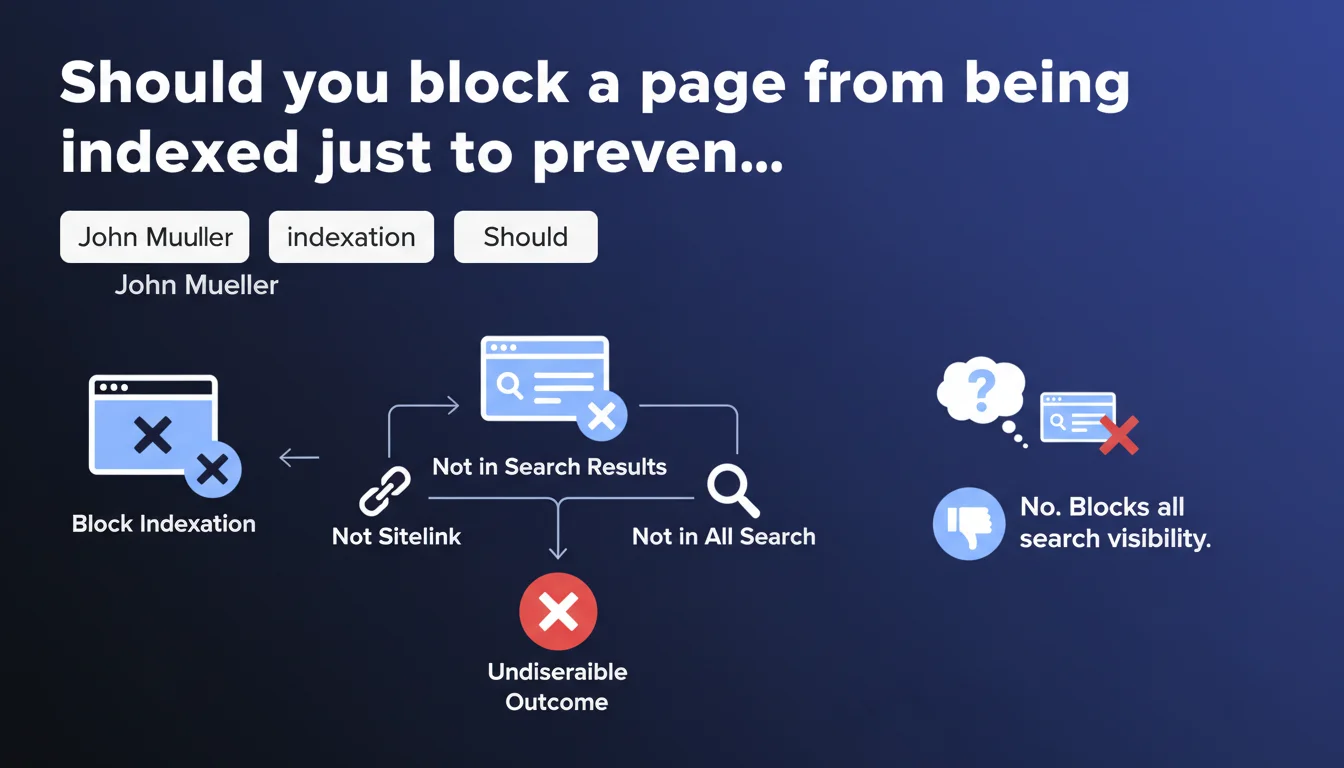

Google confirms that the only way to prevent a page from appearing as a sitelink is to completely block its indexation. This radical solution removes the page from all search results, not just sitelinks. There is no method to specifically target sitelinks without impacting overall visibility.

What you need to understand

What is a sitelink and why would you want to block it?

Sitelinks are those subpages that Google displays under your site's main result in the SERPs. They take up visual space, direct clicks, and — here's the problem — they completely escape your direct editorial control.

Some SEO professionals want to hide specific pages: login pages, legal notices, technical URLs, or internal content that is of no interest to users coming from Google. The problem? Google alone decides which links to display, without consulting anyone.

What is the official solution proposed by Google?

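Mueller is clear: block the page from the index entirely, via robots.txt or a noindex tag. The two are not equivalent, though. A noindex directive removes the page from the index, whereas robots.txt only blocks crawling: a disallowed URL can still be indexed from links pointing to it, and Google cannot see a noindex tag on a page it is not allowed to crawl. No half measures, no hidden parameter in Search Console to say "OK for indexation, but not for sitelink".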

Concretely, if you deindex the page, it disappears from all results — including standard organic ones. You lose all potential traffic to that URL. It's a brutal trade-off: total visibility or total invisibility.
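To see whether a page already carries a noindex directive, a minimal Python sketch can inspect both the robots meta tag and the `X-Robots-Tag` HTTP header. The function name `is_noindexed` and the sample inputs are illustrative assumptions, and the parsing is deliberately simple: it ignores user-agent-scoped header values such as `googlebot: noindex`.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def is_noindexed(html, headers=None):
    """Return True if the markup or the X-Robots-Tag header asks for noindex.

    `headers` is a plain dict of response headers (hypothetical input shape).
    The value "none" is treated as noindex because it is shorthand for
    "noindex, nofollow".
    """
    parser = RobotsMetaParser()
    parser.feed(html)
    directives = list(parser.directives)
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            directives += [d.strip().lower() for d in value.split(",")]
    return "noindex" in directives or "none" in directives


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_noindexed(page))
```

Running this against the pages you are considering gives you a baseline before touching anything: you know which URLs are currently eligible for the index and which are not.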

Why doesn't Google offer granular control?

The official answer? Sitelinks are generated algorithmically to improve user experience. Google believes it knows better than you which pages to serve to the user.

In practice, this means you have no leverage to say "this page can rank, but should never be a sitelink". The algorithm decides, period. And when it gets it wrong — because it sometimes does — you only have one nuclear option.

- No parameter in Search Console to exclude a URL from sitelinks specifically

- No dedicated meta tag to control display as a sitelink

- Only solution: noindex or robots.txt blocking — with total loss of visibility

- Google prioritizes its algorithm over your editorial strategy on this specific point
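One practical caveat when combining the two blocking methods: a noindex tag only works if Google can crawl the page, so a URL that is both disallowed in robots.txt and tagged noindex may stay in the index. The sketch below uses Python's standard `urllib.robotparser` to check whether a URL is crawlable. The robots.txt content, the URLs, and the helper name `is_crawlable` are hypothetical, and the standard-library matcher is simpler than Google's own (it does not implement wildcard patterns the same way), so treat the result as an approximation.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Return True if `url` is allowed for `user_agent` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt for illustration.
robots_txt = """\
User-agent: *
Disallow: /account/
"""

for url in ("https://example.com/account/login", "https://example.com/products"):
    status = "crawlable" if is_crawlable(robots_txt, url) else "blocked: a noindex tag here would not be seen"
    print(url, "->", status)
```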

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Yes, and that's precisely what frustrates practitioners. We've observed for years that Google systematically ignores attempts to manipulate sitelinks via HTML structure, internal linking, or other workarounds. Search Console data shows that sitelinks change at the algorithm's discretion, with no obvious correlation to our actions.

Let's be honest: this statement merely confirms a reality that every SEO professional already knows. But it definitively closes the door on hope for fine-grained control. No gray areas, no elegant hacks — just a binary choice.

In what cases does this rule cause problems?

Imagine an e-commerce site with a "My Account" page that regularly appears as a sitelink. It's useful for logged-in customers, but completely useless for a new visitor arriving from Google. You want it indexed for internal linking and crawling, but not on the SERP storefront.

Another classic case: filter or sort pages that rank well but should never serve as a main entry point. Block their indexation? You lose SEO juice. Keep them? You suffer from counterproductive sitelinks. Whether Google will ever refine this mechanism remains unverified; nothing currently indicates it will.

What nuances should be noted?

Mueller's statement is factual, but it obscures one data point: the frequency of sitelink changes. Google adjusts them regularly based on user behavior. A problematic page today might disappear from sitelinks tomorrow without any intervention on your part.

Before deindexing outright, monitor how the sitelinks evolve over 2-3 months. Sometimes the problem resolves itself. If the sitelink persists and genuinely harms CTR or user experience, then, and only then, consider noindex.

Practical impact and recommendations

What should you concretely do if a sitelink is problematic?

First, quantify the real impact. An ugly or inappropriate sitelink doesn't automatically justify radical action. Analyze the CTR on this link in Search Console, compare it with your other sitelinks, and evaluate the bounce rate of visitors landing on this page.
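The comparison itself is simple arithmetic. As a rough sketch, assuming you have exported clicks and impressions per URL (the figures, URLs, and function names below are made up for illustration), you can compare the suspect page's CTR with the average of the other sitelink pages:

```python
def ctr(clicks, impressions):
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def compare_with_peers(stats, target):
    """Return (target CTR, average CTR of the other URLs).

    `stats` maps a URL to a (clicks, impressions) tuple.
    """
    target_ctr = ctr(*stats[target])
    peers = [ctr(*figures) for url, figures in stats.items() if url != target]
    peer_average = sum(peers) / len(peers) if peers else 0.0
    return target_ctr, peer_average


# Hypothetical per-URL figures for the pages currently shown as sitelinks.
stats = {
    "/account": (10, 1000),
    "/products": (50, 1000),
    "/blog": (30, 1000),
}

target_ctr, peer_average = compare_with_peers(stats, "/account")
print(f"target: {target_ctr:.2%}, peers: {peer_average:.2%}")
```

A gap between the two numbers is a signal, not a verdict: low CTR on a login page may simply reflect that few searchers need it.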

If the problem is proven — drop in CTR, user confusion, technical page appearing by mistake — then two options: accept it or deindex. No gray area. Google offers no parameter to say "index, but not as a sitelink".

What mistakes should you absolutely avoid?

Don't deindex lightly. Too many SEO professionals panic when they see an "unexpected" page as a sitelink and switch it to noindex without thinking. Result? Loss of organic traffic on that URL, loss of SEO juice in internal linking, and sometimes no real gain in overall CTR.

Another mistake: believing that optimizing internal linking or renaming the page will be sufficient. It can have marginal influence, but Google remains the master of the final decision. Don't waste weeks fine-tuning architecture if the sitelink doesn't change — move to noindex or accept it.

How can you verify that your decision is correct?

Before noindexing, simulate the impact. Identify all pages linking to the URL in question, estimate the organic traffic it currently captures (even if minimal), and project the loss. If this page serves as an internal hub, its removal can weaken the entire section.
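This simulation can be sketched in a few lines of Python. The link graph and click figures below are hypothetical stand-ins for a crawl export and a traffic report, and `inbound_pages` is an illustrative helper, not part of any tool:

```python
def inbound_pages(link_graph, target):
    """Return the pages that link to `target`, excluding self-links."""
    return sorted(
        source
        for source, links in link_graph.items()
        if target in links and source != target
    )


# Hypothetical crawl output: page -> internal URLs it links to.
link_graph = {
    "/": ["/products", "/account"],
    "/products": ["/account", "/products/shoes"],
    "/account": ["/"],
}

# Hypothetical monthly organic clicks per page.
monthly_clicks = {"/": 4000, "/products": 1200, "/account": 35}

target = "/account"
sources = inbound_pages(link_graph, target)
projected_loss = monthly_clicks.get(target, 0)
share = projected_loss / sum(monthly_clicks.values())

print(f"{len(sources)} pages link to {target}: {sources}")
print(f"Projected loss: {projected_loss} clicks/month ({share:.2%} of organic traffic)")
```

A high inbound count combined with a negligible traffic share suggests a utility page; a high count with meaningful traffic suggests a hub you should think twice about removing.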

After noindex, monitor the evolution of other sitelinks. Sometimes Google replaces the blocked link with another equally irrelevant one. In that case, you've made the situation worse. Test, measure, adjust — and document each decision to understand patterns over the long term.

- Analyze the CTR and real traffic of the problematic sitelink before any action

- Verify that the page doesn't play a key role in internal linking or PageRank flow

- Test deindexation over a limited period (3-6 months) and measure overall impact

- Document current sitelinks to detect changes post-noindex

- Never deindex a strategic page just to "clean up" your SERPs
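Documenting sitelinks before and after a change can be as simple as keeping dated lists and diffing them. A minimal sketch, assuming you record the sitelink URLs by hand or with your own tooling (the snapshots and the function name `diff_sitelinks` are hypothetical):

```python
def diff_sitelinks(before, after):
    """Compare two sitelink snapshots and report what changed."""
    before_set, after_set = set(before), set(after)
    return {
        "removed": sorted(before_set - after_set),
        "added": sorted(after_set - before_set),
        "kept": sorted(before_set & after_set),
    }


# Hypothetical snapshots taken before and after noindexing /account.
before = ["/products", "/account", "/blog", "/contact"]
after = ["/products", "/blog", "/contact", "/terms"]

changes = diff_sitelinks(before, after)
for kind, urls in changes.items():
    print(f"{kind}: {urls}")
```

In this made-up example the blocked link was replaced by `/terms`, which is exactly the kind of equally irrelevant substitution worth catching early.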

❓ Frequently Asked Questions

Is there a meta tag to prevent a page from appearing as a sitelink?

Can I influence sitelinks through internal linking or anchor text?

If I noindex a page, do the internal links pointing to it lose their SEO value?

Can Google display a sitelink to a page blocked by robots.txt?

Do sitelinks change often without any intervention on my part?

Source: Google Search Central video, published on 17/07/2025
