Official statement

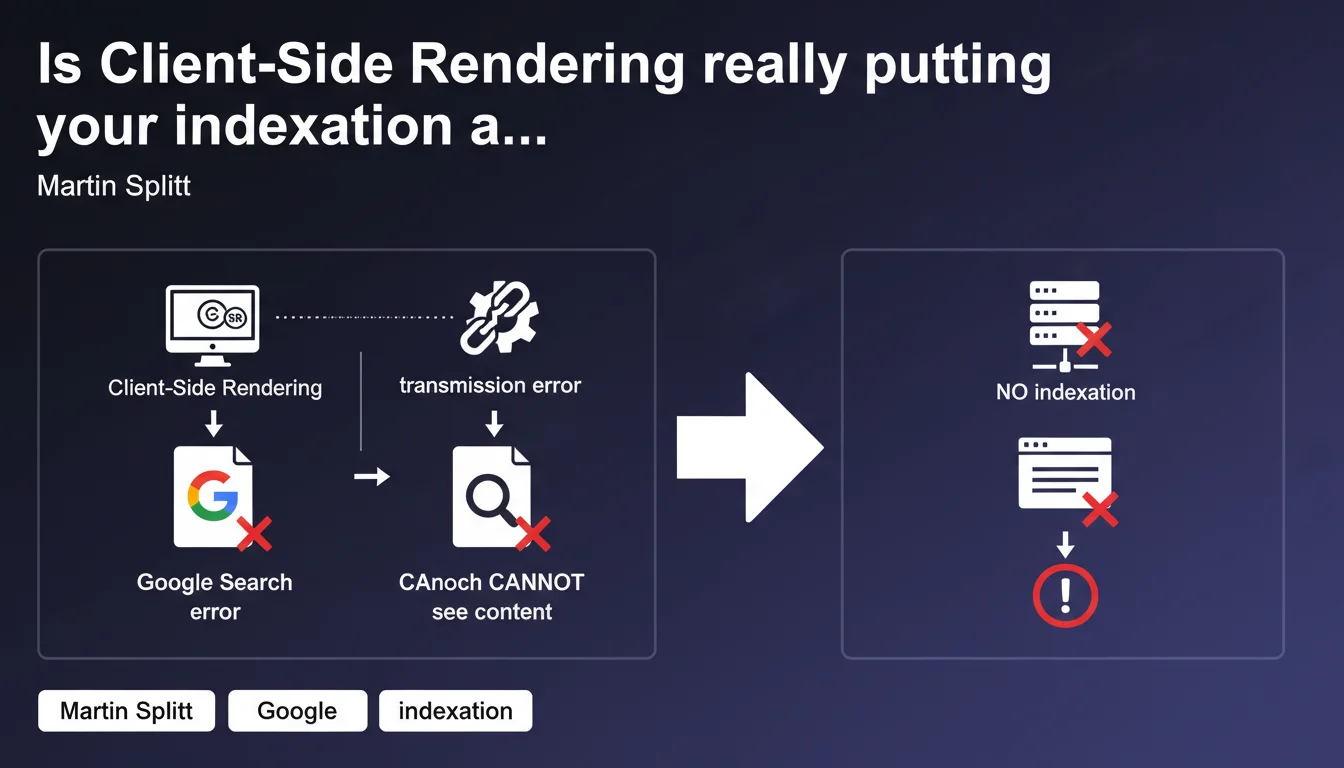

Google confirms that CSR represents a critical indexing risk: any failure in JavaScript execution on the client side prevents search engines from seeing and indexing your content. Unlike Server-Side Rendering (SSR), which delivers the content in the initial HTML response, Client-Side Rendering (CSR) introduces a single point of failure that can render your pages invisible to Google.

What you need to understand

Why does Google consider CSR risky?

Client-Side Rendering delegates to the browser the responsibility of generating the final HTML via JavaScript. This approach works perfectly for the human user, but becomes problematic for search engines.

In practice, if JavaScript fails during the crawl (timeout, script error, blocked resource), Google receives an empty or incomplete page and has no content to index. This is exactly what Martin Splitt points out here.

How does CSR differ from Server-Side Rendering?

With SSR, the server directly sends the complete HTML. Googlebot receives the content immediately, without depending on JavaScript execution. The content is guaranteed — even if JS crashes, the essentials remain indexable.

CSR inverts this logic: the server delivers an almost-empty HTML skeleton, and it's the browser (or Googlebot) that must execute JavaScript to display the real content. This extra step introduces a structural fragility.
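The contrast can be made concrete with a minimal sketch. The function names, the `product` record, and the `/app.js` path are hypothetical and stand in for whatever a real framework would generate; the point is only what the first HTML response contains before any JavaScript runs.

```python
from html import escape


def render_ssr(product):
    """Server-side rendering: the content is already in the HTML response."""
    return (
        f'<div id="root"><h1>{escape(product["name"])}</h1>'
        f'<p>{escape(product["description"])}</p></div>'
        '<script src="/app.js"></script>'
    )


def render_csr_shell():
    """Client-side rendering: an empty shell that /app.js must fill in later."""
    return '<div id="root"></div><script src="/app.js"></script>'


# Hypothetical product record used only for illustration.
product = {"name": "Blue running shoes", "description": "Lightweight trainers."}

# Is the product name present before any JavaScript has executed?
print(product["name"] in render_ssr(product))   # True
print(product["name"] in render_csr_shell())    # False
```

With the CSR shell, everything a crawler eventually indexes depends on `/app.js` downloading and executing successfully.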

What are the concrete failure points of CSR?

- Execution timeout: Google allocates limited time for JavaScript rendering. If your app is heavy, the content may never appear.

- JavaScript errors: A bug, a broken dependency, an unavailable CDN — and your content disappears for Googlebot.

- Blocked resources: a robots.txt rule blocking a critical JS file, misconfigured CORS, or a simple network issue during the crawl.

- Wasted crawl budget: Google must render each page, which consumes more resources and slows down overall indexation.

- Indexing delay: CSR content goes through a rendering queue, which can delay its appearance in the index by several hours to several days.
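Of these failure points, blocked resources are the easiest to test for automatically. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt content and the example.com URLs are made up for illustration. Note that this parser implements plain prefix matching and may not reproduce every rule Google's own parser supports (wildcards, for instance), so treat the result as a first pass rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that accidentally blocks the JavaScript bundle.
ROBOTS_TXT = """\
User-agent: *
Disallow: /static/js/
Disallow: /admin/
"""

# Hypothetical resources the page needs in order to render.
CRITICAL_RESOURCES = [
    "https://example.com/static/js/app.js",
    "https://example.com/static/css/main.css",
]


def blocked_resources(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given user agent is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


print(blocked_resources(ROBOTS_TXT, CRITICAL_RESOURCES))
# ['https://example.com/static/js/app.js']
```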

SEO Expert opinion

Does this statement match real-world reality?

Absolutely. Audits regularly show pure CSR sites losing 30 to 70% of their indexable content due to undetected JavaScript errors. SEO monitoring tools consistently report discrepancies between raw HTML and final rendering.

A classic case: an e-commerce site migrates to React without SSR. Result after 3 months? Product pages loaded in JavaScript no longer appear in Google Shopping. The crawl confirms that Googlebot receives an empty <div id="root"></div> and abandons it.

Is Google telling the whole truth about its ability to render JavaScript?

Let's be honest: Google can render JavaScript, but that doesn't mean it will always do so correctly or quickly. Treat the following as unverified: Google claims to process CSR "like a modern browser," but tests reportedly show notable differences.

Concrete examples: Googlebot's renderer is an evergreen Chromium, so modern syntax is rarely the issue, but it does not click, scroll, or type. Event listeners that wait for user input never fire, and content loaded only after user interaction (infinite scroll, aggressive lazy loading) may be ignored. The statement "we render JavaScript" is technically true, but hides a problematic variability of results.

Are there cases where CSR remains acceptable?

Yes, but they are rare. If your site has no SEO stakes (intranet, SaaS application behind login, business tool), CSR poses no indexation problem — since there's nothing to index.

For everything else — blog, e-commerce, media site, corporate site — pure CSR is a ticking SEO time bomb. Even sites that "work" today in CSR live under the permanent threat of invisible regression.

Practical impact and recommendations

What concrete steps should you take?

Priority number one: abandon pure CSR. Migrate to SSR (Next.js, Nuxt.js, SvelteKit) or Static Site Generation (SSG) to guarantee that critical HTML reaches Googlebot directly.

If a complete overhaul isn't feasible immediately, implement at minimum Pre-Rendering (Prerender.io, or Rendertron, whose repository is now archived) to serve HTML snapshots to crawlers. It's not an ideal solution, but it's better than leaving Google playing Russian roulette with your JavaScript.
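As a rough illustration of how snapshot serving works, the sketch below picks a response body based on the User-Agent header. It is not how Prerender.io or Rendertron are implemented; the crawler pattern, the `snapshots` mapping, and the function names are all hypothetical, and the pattern is deliberately incomplete.

```python
import re

# Hypothetical, non-exhaustive pattern; real deployments maintain a longer list.
CRAWLER_PATTERN = re.compile(r"googlebot|bingbot|duckduckbot", re.IGNORECASE)


def is_crawler(user_agent):
    """Rough User-Agent check; the header can be spoofed, so this is a hint only."""
    return bool(CRAWLER_PATTERN.search(user_agent or ""))


def select_body(user_agent, path, snapshots, csr_shell):
    """Serve a prerendered snapshot to crawlers when one exists, else the CSR shell."""
    if is_crawler(user_agent) and path in snapshots:
        return snapshots[path]
    return csr_shell


# Hypothetical snapshot store, keyed by URL path.
snapshots = {"/product/42": '<div id="root"><h1>Blue running shoes</h1></div>'}
shell = '<div id="root"></div><script src="/app.js"></script>'
googlebot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

print(select_body(googlebot, "/product/42", snapshots, shell))
print(select_body("Mozilla/5.0 (X11; Linux x86_64)", "/product/42", snapshots, shell))
```

Falling back to the shell when no snapshot exists keeps the site functional, but it also means any page missing from the snapshot store is exposed to the same CSR risk as before.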

How can you verify that your CSR isn't sabotaging your indexation?

Test the actual rendering of Googlebot with the URL Inspection tool in Search Console. Compare the raw HTML (View Source in your browser) with the rendered HTML (the HTML tab under View crawled page, or View tested page after a live test). If entire content blocks are missing from the rendered version, you have a problem.
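The same comparison can be scripted once both versions are saved as text. The sketch below is one possible approach using only the standard library: it lists text that exists in the rendered HTML but not in the raw response, which is exactly the content that depends on JavaScript. The two HTML strings are invented samples, and the helper names are hypothetical.

```python
from html.parser import HTMLParser


class TextBlocks(HTMLParser):
    """Collects non-empty text nodes, skipping <script> and <style> bodies."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())  # normalize whitespace
        if text and not self._skip_depth:
            self.blocks.append(text)


def text_blocks(html):
    parser = TextBlocks()
    parser.feed(html)
    parser.close()
    return parser.blocks


def missing_from_raw(raw_html, rendered_html):
    """Text present after rendering but absent from the raw server response."""
    raw = set(text_blocks(raw_html))
    return [block for block in text_blocks(rendered_html) if block not in raw]


# Invented samples: what the server sent vs. what exists after rendering.
raw = '<body><h1>Shop</h1><div id="root"></div><script>boot()</script></body>'
rendered = ('<body><h1>Shop</h1><div id="root">'
            '<h2>Blue running shoes</h2><p>In stock</p></div></body>')

print(missing_from_raw(raw, rendered))
# ['Blue running shoes', 'In stock']
```

A long list here is not a bug in itself, but it shows how much of the page would vanish if rendering failed.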

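Another critical check: analyze server logs to see which JavaScript, CSS, and API requests Googlebot actually makes and which of them fail. Server logs do not record client-side exceptions, so read them alongside the JavaScript console messages reported by the URL Inspection tool. Many sites serve code that works for users but fails for Google because of missing dependencies or timeouts.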
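A minimal sketch of such a log check follows, assuming the common "combined" access log format (an assumption; adapt the pattern to your server). The sample lines are invented. Because the User-Agent header can be spoofed, hits that matter should be confirmed with a reverse DNS lookup, which this sketch does not do.

```python
import re
from collections import Counter

# Assumes the "combined" log format; adjust for your server's configuration.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def failed_asset_requests(lines):
    """Count Googlebot requests for .js/.css files that returned 4xx or 5xx."""
    failures = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if not match or "googlebot" not in match["agent"].lower():
            continue
        path = match["path"].split("?")[0]  # ignore cache-busting query strings
        if path.endswith((".js", ".css")) and int(match["status"]) >= 400:
            failures[(path, int(match["status"]))] += 1
    return failures


# Invented sample lines for illustration.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [08/Jan/2025:10:00:00 +0000] "GET /static/js/app.js?v=3 HTTP/1.1" 404 153 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [08/Jan/2025:10:00:01 +0000] "GET /static/css/main.css HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    '203.0.113.9 - - [08/Jan/2025:10:00:02 +0000] "GET /static/js/app.js HTTP/1.1" 500 0 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

print(failed_asset_requests(sample))
# Counter({('/static/js/app.js', 404): 1})
```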

What errors should you absolutely avoid, and what safeguards should you put in place?

- Never block JavaScript or CSS files in robots.txt — Google needs them to render the page.

- Avoid pure CSR frameworks (Create React App without SSR) for sites with SEO stakes.

- Don't rely on JavaScript lazy loading for critical content — Googlebot may miss it.

- Systematically test Googlebot rendering after each major front-end deployment.

- Monitor indexing variations with a tool like OnCrawl, Botify, or Screaming Frog Log File Analyser.

- Implement an SSR fallback for strategic pages (product pages, articles, landing pages).

- Document critical JavaScript dependencies and monitor their availability (CDN, third-party APIs).
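The last item in the list, monitoring dependency availability, can start as a very small script. The sketch below is one possible shape, not a replacement for a real monitoring service: the function names and URLs are hypothetical, and the status lookup is injectable so that the logic can be exercised without network access.

```python
import urllib.error
import urllib.request


def http_status(url, timeout=5.0):
    """Return the HTTP status of a HEAD request, or None if the host is unreachable."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses arrive as exceptions
    except OSError:
        return None  # DNS failure, refused connection, timeout


def unavailable(urls, fetch_status=http_status):
    """Map each failing URL to its status (None means no response at all)."""
    report = {}
    for url in urls:
        status = fetch_status(url)
        if status is None or status >= 400:
            report[url] = status
    return report


# Offline demonstration with a stubbed status lookup (hypothetical URLs).
fake_statuses = {
    "https://cdn.example.com/framework.min.js": 200,
    "https://api.example.com/products": 503,
    "https://cdn.example.net/widget.js": None,
}
print(unavailable(fake_statuses, fetch_status=fake_statuses.get))
```

In production the default `http_status` would be used and the report sent to whatever alerting channel the team already relies on. Some servers reject HEAD requests, in which case a GET may be needed instead.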

❓ Frequently Asked Questions

Does Google really index CSR content, or is SSR mandatory?

Which frameworks support SSR and help avoid CSR problems?

Is Pre-Rendering an acceptable solution for SEO?

How can you tell whether Googlebot rendered your JavaScript page correctly?

Can a CSR site still rank well despite everything?

🎥 From the same video 6

Other SEO insights extracted from this same Google Search Central video · published on 08/01/2025
