Official statement

Other statements from this video (6)

- How can you use Google Search Console to detect pages with high untapped potential?

- How can you identify pages that are wasting their traffic potential in Search Console?

- Should you really request reindexing after every content update?

- How can you effectively measure the real impact of your SEO optimizations in Search Console?

- How can you spot high-potential content opportunities through growing demand?

- Can Search Console really guide your SEO strategy?

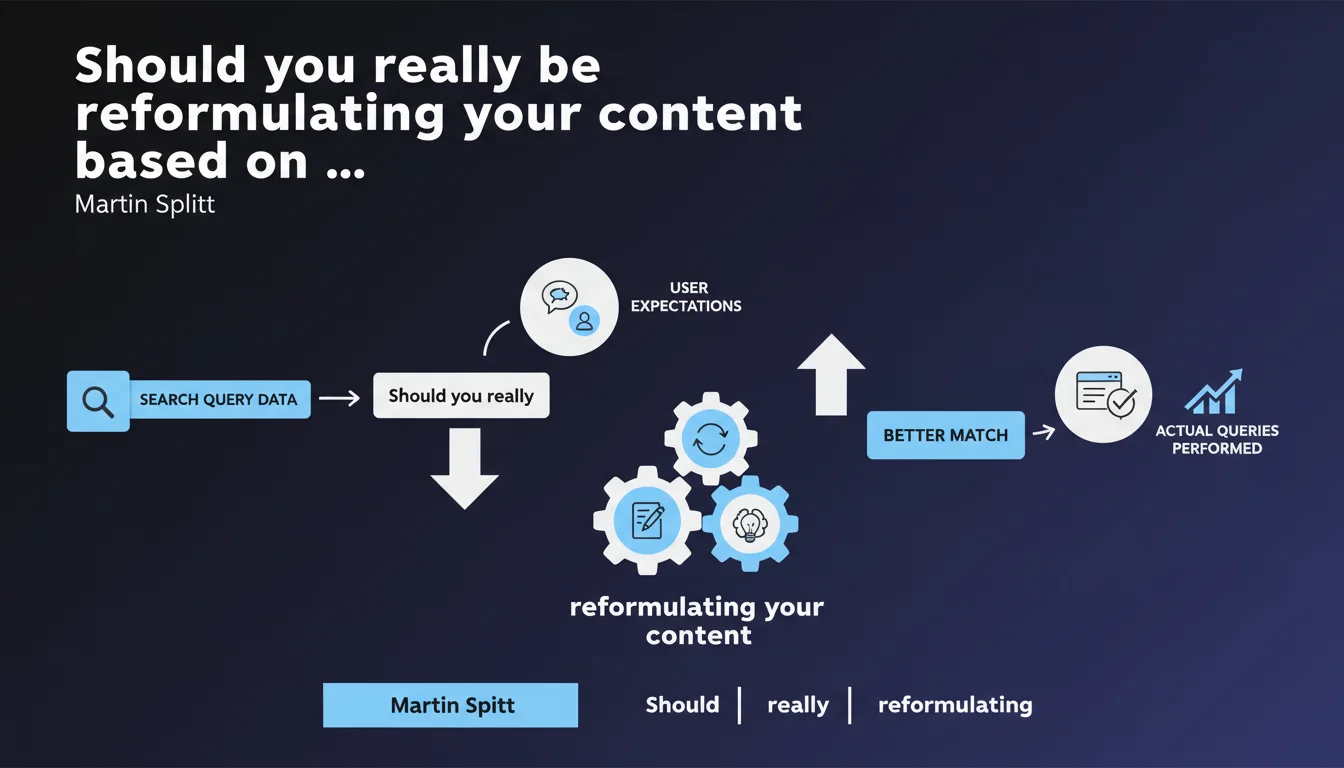

Google recommends using real data from the queries people actually type to adjust the wording and structure of your content. The objective is to align your editorial architecture with actual search intent, not with what you imagine that intent to be. This data-driven approach may require rewriting entire sections of existing content.

What you need to understand

Why does Google insist on content reformulation?

Martin Splitt's statement targets a recurring problem: the gap between the vocabulary content creators use and the words users actually search with. Writers often rely on industry jargon, technical terms, or phrasing they consider "elegant." Users, meanwhile, search with simple words, direct questions, and sometimes awkward phrasing.

Google sees billions of real queries. According to this statement, the match between intent and content frequently fails because of this lexical gap. Reformulating doesn't mean gaming the system or over-optimizing. It means adjusting the surface wording of the text so the algorithm can more easily recognize the document's relevance.

What does "restructuring" your content actually mean in practice?

Restructuring goes beyond simple word replacement. It involves reorganizing your editorial architecture: changing the order of sections, splitting an overly broad article into multiple targeted pieces, creating FAQs that answer the questions users actually ask, adjusting titles and subheadings to reflect real user phrasing.

If your GSC data shows users searching for "how to do X without Y" while your title says "Alternative methods for X," you have an alignment problem — and therefore an immediate improvement opportunity.
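You can spot this kind of mismatch at scale by measuring how many of a query's meaningful words actually appear in the page title. The sketch below is a deliberately crude check built on several assumptions. The stopword list is hypothetical. Matching uses exact tokens with no stemming, so "clean" and "cleaning" do not match. The example query and title are also made up.

```python
import re

# Hypothetical stopword list; "without" is deliberately kept because it changes intent.
STOPWORDS = {"a", "an", "the", "how", "to", "do", "for", "of", "and", "or", "in", "is"}


def terms(text: str) -> set:
    """Lowercase alphanumeric tokens, minus stopwords (no stemming)."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}


def title_coverage(query: str, title: str) -> float:
    """Share of the query's meaningful terms that also appear in the title (0.0 to 1.0)."""
    q = terms(query)
    if not q:
        return 1.0  # nothing meaningful to match
    return len(q & terms(title)) / len(q)


# Hypothetical GSC query vs. current page title
print(title_coverage("how to clean an oven without chemicals",
                     "Alternative methods for oven cleaning"))  # 0.25
```

A low score flags a candidate for rewriting, not a verdict. A good title can paraphrase a query, so review flagged pairs by hand.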

Where does this query data come from?

The primary sources are Google Search Console (queries generating impressions), Google Analytics (internal search terms if you have a site search engine), and third-party tools like SEMrush or Ahrefs that aggregate search volume and associated questions.

The classic mistake is relying solely on estimated search volumes. Real queries observed in GSC, even with low impressions, often reveal very specific search intentions that generic estimates don't capture.
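To surface those specific intents, you can filter an exported query list for question-style, long-tail phrasings, regardless of impressions. This minimal sketch assumes rows with `query` and `impressions` keys. The list of question openers and the four-word threshold are arbitrary choices. GSC also omits some very rare queries for privacy reasons, so not every long-tail query will appear in the export.

```python
import re

# Assumed question openers; extend them to match your audience's phrasing.
QUESTION_START = re.compile(
    r"^(how|what|why|which|when|where|who|can|is|are|do|does|should)\b", re.IGNORECASE
)


def question_queries(rows, min_words=4, min_impressions=1):
    """Return unique question-style, long-tail queries, even at very low impressions.

    rows: dicts with 'query' and 'impressions' keys (e.g. a GSC query export).
    """
    found = set()
    for r in rows:
        q = r["query"].strip()
        if (r["impressions"] >= min_impressions
                and len(q.split()) >= min_words
                and QUESTION_START.match(q)):
            found.add(q)
    return sorted(found)


rows = [
    {"query": "how to do keyword research without paid tools", "impressions": 3},
    {"query": "keyword research", "impressions": 5000},
    {"query": "what is search intent", "impressions": 40},
    {"query": "why ctr low", "impressions": 2},
]
print(question_queries(rows))
# ['how to do keyword research without paid tools', 'what is search intent']
```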

- Use GSC to identify queries generating impressions but few clicks: an indicator of poor title/meta alignment.

- Analyze questions asked in forums, Reddit, Quora to understand your audience's actual vocabulary.

- Create distinct content for query variations that seem similar but express different intentions.

- Don't rely solely on intuition or marketing personas — real data often surprises.

SEO Expert opinion

Is this recommendation really new?

No, and that's precisely what's worth questioning. Semantic optimization and query analysis have been documented SEO practices for over a decade. The fact that Google is restating this advice now suggests either that most sites still ignore this approach, or that the algorithm has recently increased the weight assigned to this criterion.

My field observation: the best-performing sites in 2023-2025 are those that have systematized GSC analysis in their editorial process. Not one-off optimization, but a continuous cycle of refinement. Sites treating content creation as a fixed process are losing ground.

What are the limitations of this approach?

Blindly reformulating can create problems. If you adapt every paragraph to query variations, you risk diluting editorial coherence and producing disjointed content that tries to answer everything and satisfies nothing.

Another pitfall: covering every possible wording of the same question with separate pages leads to internal cannibalization. Three nearly identical articles targeting "best SEO tool," "which SEO tool to choose," and "SEO tools comparison" will compete with each other. It is better to create one solid article that naturally covers these variations. [To verify]: Google says it handles such variations, but in practice, semantically duplicated pages can still hold each other back.
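One way to check for this overlap in your own data is to look for queries where several of your URLs each capture a meaningful share of impressions. The sketch below assumes rows exported with both the `query` and `page` dimensions. The 20% share threshold and the example URLs are hypothetical.

```python
from collections import defaultdict


def cannibalization_candidates(rows, min_pages=2, min_share=0.2):
    """Return {query: [pages]} for queries where at least `min_pages` pages
    each receive at least `min_share` of that query's impressions."""
    by_query = defaultdict(dict)
    for r in rows:
        pages = by_query[r["query"]]
        pages[r["page"]] = pages.get(r["page"], 0) + r["impressions"]
    out = {}
    for query, pages in by_query.items():
        total = sum(pages.values())
        if total == 0:
            continue
        strong = sorted(p for p, imp in pages.items() if imp / total >= min_share)
        if len(strong) >= min_pages:
            out[query] = strong
    return out


rows = [  # hypothetical export rows
    {"query": "best seo tool", "page": "/best-seo-tool", "impressions": 600},
    {"query": "best seo tool", "page": "/seo-tools-comparison", "impressions": 400},
    {"query": "which seo tool to choose", "page": "/which-seo-tool", "impressions": 900},
    {"query": "which seo tool to choose", "page": "/best-seo-tool", "impressions": 50},
]
print(cannibalization_candidates(rows))
# {'best seo tool': ['/best-seo-tool', '/seo-tools-comparison']}
```

A flagged query is a prompt to consider merging or differentiating pages, not proof that rankings are suffering.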

In what cases does this rule not apply?

On thought leadership or innovation topics, the queries don't exist yet — you're creating demand. Reformulating based on non-existent data would be counterproductive.

Similarly, for brand or institutional content, the goal isn't always maximum organic traffic. Adapting vocabulary to match queries could weaken brand positioning if that positioning relies on specific terminology.

Practical impact and recommendations

How do you identify which content to reformulate first?

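Start with the Performance report in GSC, on the Pages tab. Filter for pages with a CTR below 2% and more than 1,000 impressions over a roughly monthly window (for example, the last 28 days). These pages appear in the SERPs but don't generate clicks. That is a sign either that the title and meta description don't match the query, or that the page ranks too low to earn clicks.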
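You can apply the same filter to an exported page-level report. This minimal sketch assumes one row per page covering a single, roughly monthly window, with `page`, `clicks`, and `impressions` keys. CTR is recomputed from clicks and impressions, so the function also works on rows you have summed yourself. The example pages are hypothetical.

```python
def low_ctr_pages(rows, max_ctr=0.02, min_impressions=1000):
    """Return (page, impressions, ctr) for pages seen often but rarely clicked.

    rows: dicts with 'page', 'clicks', 'impressions' for one ~monthly window.
    """
    flagged = []
    for r in rows:
        if r["impressions"] > min_impressions:
            ctr = r["clicks"] / r["impressions"]
            if ctr < max_ctr:
                flagged.append((r["page"], r["impressions"], round(ctr, 4)))
    # Largest audiences first: the most upside from a better title/meta description
    return sorted(flagged, key=lambda t: t[1], reverse=True)


rows = [
    {"page": "/a", "clicks": 10, "impressions": 5000},   # CTR 0.2%  -> flagged
    {"page": "/b", "clicks": 150, "impressions": 3000},  # CTR 5%    -> fine
    {"page": "/c", "clicks": 5, "impressions": 800},     # too few impressions
    {"page": "/d", "clicks": 30, "impressions": 2000},   # CTR 1.5%  -> flagged
]
print([page for page, _, _ in low_ctr_pages(rows)])  # ['/a', '/d']
```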

Next, analyze queries with an average position between 8 and 20. You're visible at the bottom of page one or on page two, but not enough to capture meaningful traffic. In many cases, targeted reformulation can be enough to move these pages onto the first page.
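GSC reports an average position, so when you combine several rows for the same query (for example, one per day), each position should be weighted by its impressions. This sketch makes that simplifying assumption. It approximates, rather than reproduces, how Search Console computes its own averages. The example queries are hypothetical.

```python
from collections import defaultdict


def striking_distance(rows, low=8.0, high=20.0):
    """Queries whose impression-weighted average position falls in [low, high].

    rows: dicts with 'query', 'position', 'impressions' (e.g. one row per query per day).
    Returns (query, avg_position, impressions), most impressions first.
    """
    imps = defaultdict(int)
    weighted = defaultdict(float)
    for r in rows:
        imps[r["query"]] += r["impressions"]
        weighted[r["query"]] += r["position"] * r["impressions"]
    result = []
    for q, n in imps.items():
        if n == 0:
            continue
        pos = weighted[q] / n
        if low <= pos <= high:
            result.append((q, round(pos, 1), n))
    return sorted(result, key=lambda t: t[2], reverse=True)


rows = [
    {"query": "seo audit checklist", "position": 9.0, "impressions": 100},
    {"query": "seo audit checklist", "position": 13.0, "impressions": 300},
    {"query": "seo audit", "position": 3.0, "impressions": 500},
    {"query": "technical seo audit template", "position": 25.0, "impressions": 50},
    {"query": "free seo audit tool", "position": 18.0, "impressions": 600},
]
print(striking_distance(rows))
# [('free seo audit tool', 18.0, 600), ('seo audit checklist', 12.0, 400)]
```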

What methodology should you apply to restructure?

Create a mapping spreadsheet: for each underperforming page, list the 10-15 main queries generating impressions. Group them by intent: informational, transactional, navigational.
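You can rough out the grouping step in code before reviewing it manually. The sketch below is a naive, hypothetical keyword-cue classifier. The cue lists, the `brand_terms` parameter, and the example queries are illustrative assumptions. Real intent labeling usually needs a human pass.

```python
import re
from collections import defaultdict

# Hypothetical cue words; tune them to your niche and review the results by hand.
TRANSACTIONAL = ("buy", "price", "pricing", "cheap", "discount", "order", "subscribe")
NAVIGATIONAL = ("login", "sign in", "official site", "contact")


def _has_any(text, cues):
    """True if any cue appears as a whole word or phrase in text."""
    return any(re.search(r"\b" + re.escape(c) + r"\b", text) for c in cues)


def classify_intent(query, brand_terms=()):
    q = query.lower()
    if _has_any(q, TRANSACTIONAL):
        return "transactional"
    if _has_any(q, NAVIGATIONAL) or _has_any(q, [b.lower() for b in brand_terms]):
        return "navigational"
    return "informational"  # default when no cue matches


def intent_map(rows, top_n=15, brand_terms=()):
    """For each page, keep its top_n queries by impressions, grouped by intent."""
    by_page = defaultdict(list)
    for r in rows:
        by_page[r["page"]].append(r)
    mapping = {}
    for page, page_rows in by_page.items():
        top = sorted(page_rows, key=lambda row: row["impressions"], reverse=True)[:top_n]
        groups = defaultdict(list)
        for r in top:
            groups[classify_intent(r["query"], brand_terms)].append(r["query"])
        mapping[page] = dict(groups)
    return mapping


rows = [
    {"page": "/running-shoes", "query": "how to choose running shoes", "impressions": 500},
    {"page": "/running-shoes", "query": "buy running shoes online", "impressions": 300},
    {"page": "/running-shoes", "query": "acme login", "impressions": 50},
]
print(intent_map(rows, brand_terms=("acme",)))
```

The output of `intent_map` maps directly onto the spreadsheet described above: one row per page, one column per intent.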

If multiple intents coexist on the same page, consider splitting the content. If the intent is homogeneous but phrasing varies, adjust H2/H3 to naturally integrate these variations. Then verify that your introduction immediately answers the most frequent question — this is often where bounce rate is determined.
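Once queries carry an intent label (assigned by hand or with a heuristic), the decision to split can start from impression shares. This minimal sketch assumes rows with `page`, `intent`, and `impressions` keys. The 30% threshold for the secondary intent is an arbitrary starting point, not a Google guideline, and the example pages are hypothetical.

```python
from collections import defaultdict


def mixed_intent_pages(rows, min_secondary_share=0.3):
    """Pages whose second-largest intent still draws >= min_secondary_share of impressions.

    rows: dicts with 'page', 'intent', 'impressions' (intent labelled beforehand).
    Returns {page: {intent: share}} for pages worth considering for a split.
    """
    totals = defaultdict(lambda: defaultdict(int))
    for r in rows:
        totals[r["page"]][r["intent"]] += r["impressions"]
    flagged = {}
    for page, by_intent in totals.items():
        total = sum(by_intent.values())
        if total == 0 or len(by_intent) < 2:
            continue  # a single intent: adjust headings rather than split
        ranked = sorted(by_intent.values(), reverse=True)
        if ranked[1] / total >= min_secondary_share:
            flagged[page] = {i: round(n / total, 2) for i, n in by_intent.items()}
    return flagged


rows = [
    {"page": "/guide", "intent": "informational", "impressions": 600},
    {"page": "/guide", "intent": "transactional", "impressions": 400},
    {"page": "/howto", "intent": "informational", "impressions": 950},
    {"page": "/howto", "intent": "transactional", "impressions": 50},
]
print(mixed_intent_pages(rows))
# {'/guide': {'informational': 0.6, 'transactional': 0.4}}
```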

What tools can you use to automate this analysis?

GSC API export combined with Python or Google Sheets lets you cross-reference queries, rankings, and CTR. Tools like Screaming Frog or OnCrawl can audit alignment between titles/headings and target queries.
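The Search Console API's Search Analytics `query` method returns at most 25,000 rows per request, so larger sites need to page through results using `startRow`. The sketch below assumes you already have a `service` object built with `googleapiclient.discovery.build("searchconsole", "v1", credentials=...)`. Authentication setup is out of scope here, and the function name `fetch_search_analytics` is hypothetical.

```python
def fetch_search_analytics(service, site_url, start_date, end_date,
                           dimensions=("page", "query"), page_size=25000):
    """Page through Search Analytics results and return one flat dict per row.

    Dates are 'YYYY-MM-DD' strings; page_size must not exceed the API's 25,000-row limit.
    """
    rows, start_row = [], 0
    while True:
        body = {
            "startDate": start_date,
            "endDate": end_date,
            "dimensions": list(dimensions),
            "rowLimit": page_size,
            "startRow": start_row,
        }
        resp = service.searchanalytics().query(siteUrl=site_url, body=body).execute()
        batch = resp.get("rows", [])  # the key is absent when there is no data
        for r in batch:
            row = dict(zip(dimensions, r["keys"]))  # keys follow the dimensions order
            row.update(clicks=r["clicks"], impressions=r["impressions"],
                       ctr=r["ctr"], position=r["position"])
            rows.append(row)
        if len(batch) < page_size:  # last (partial or empty) page reached
            break
        start_row += page_size
    return rows
```

Setting `start_date` and `end_date` six months apart matches the export window the checklist below suggests. The flat dicts can then go straight into pandas or a Google Sheets upload.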

For semantic analysis, Answer The Public, AlsoAsked, and Google's People Also Ask boxes reveal associated questions. But be careful: these sources aggregate suggestions, not necessarily real queries with measurable volume. Always prioritize GSC data.

- Export your GSC data over at least 6 months to smooth seasonal variations.

- Identify 10 pages with strong potential (high impressions, low CTR).

- Reformulate titles, meta descriptions, and H1 by incorporating actual user phrasing.

- Restructure H2/H3 to explicitly answer questions observed in GSC.

- Create FAQs at the end of articles capturing relevant long-tail queries.

- Monitor CTR and ranking changes over 4-6 weeks post-modification.

- Repeat the process: semantic optimization is iterative, not one-time.
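To monitor results, compare the same page over two equal-length windows, one before and one after the change. This minimal sketch assumes lists of daily rows with `clicks`, `impressions`, and `position` keys. It computes CTR as total clicks over total impressions and uses an impressions-weighted average position, which is an approximation. Seasonality and algorithm updates can also move these numbers, so treat a single comparison as a signal rather than proof.

```python
def compare_periods(before, after):
    """Compare CTR and impressions-weighted average position across two windows.

    before/after: lists of dicts with 'clicks', 'impressions', 'position'.
    A negative position_delta means the page moved up.
    """
    def summarize(rows):
        imps = sum(r["impressions"] for r in rows)
        clicks = sum(r["clicks"] for r in rows)
        if imps == 0:
            return {"ctr": 0.0, "position": None}
        pos = sum(r["position"] * r["impressions"] for r in rows) / imps
        return {"ctr": clicks / imps, "position": pos}

    b, a = summarize(before), summarize(after)
    pos_delta = None
    if b["position"] is not None and a["position"] is not None:
        pos_delta = a["position"] - b["position"]
    return {
        "ctr_before": b["ctr"],
        "ctr_after": a["ctr"],
        "ctr_delta": a["ctr"] - b["ctr"],
        "position_delta": pos_delta,
    }


before = [{"clicks": 10, "impressions": 1000, "position": 12.0},
          {"clicks": 10, "impressions": 1000, "position": 10.0}]
after = [{"clicks": 30, "impressions": 1000, "position": 8.0},
         {"clicks": 30, "impressions": 1000, "position": 6.0}]
print(compare_periods(before, after)["position_delta"])  # -4.0
```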

❓ Frequently Asked Questions

Should I rewrite all my older content to match new queries?

How do I avoid over-optimization when reformulating my content?

Is GSC data enough, or do I need other sources?

Does reformulation really improve rankings, or only CTR?

How often should I revise my content using this method?

🎥 From the same video 6

Other SEO insights extracted from this same Google Search Central video · published on 02/11/2023

